Google’s full AI arc, plus world models, LeCun’s semantic tube prediction, entropy in LLMs, and Apple’s attention-to-Mamba bridge.

Good morning, AI enthusiasts!

This week, we trace how Google moved from a research lead to a product stumble to a distribution-powered comeback. We also cover why letting your agent call tools without validating the arguments first is one of the fastest ways to break things in production.

Also in the issue:

- World models and how they differ from transformers: predicting the next environmental state instead of the next token.

- LeCun’s new paper based on the idea that coherent sentences trace geodesics on a semantic manifold, not random token walks.

- An AI SRE agent that monitors production logs without predefined thresholds by filtering out 99% of noise before the model ever sees it.

- Apple’s solution for converting trained transformers into Mamba SSMs without rebuilding from scratch.

- Entropy explained from Shannon’s wartime research all the way to why temperature works the way it does in LLMs.

Let’s get into it!

What’s AI Weekly

This week, in What’s AI, I trace Google’s entire trajectory in the AI race. Google researchers introduced the Transformer, the architecture behind most frontier LLMs, yet it was OpenAI that turned that research lineage into the consumer product moment Google missed. Google rushed to respond: two months after ChatGPT’s release, it launched its chatbot Bard, which got a basic fact about the James Webb Space Telescope wrong in its very first public demo. Fast forward to today, April 2026: Gemini has 750 million monthly active users, Apple chose Gemini to power the next generation of Siri, and Salesforce’s CEO publicly said he switched from ChatGPT to Gemini and is “not going back.” In this article, I go through the entire journey, from the ChatGPT shock to the first Gemini model to the acceleration. Read the full article here, or if you want to watch the video version of this article, find it here.

AI Tip of the Day

If an agent sends the wrong arguments to a tool, the stakes are higher than in an LLM system that only generates text, because the tool call has real side effects: it can refund the wrong order, email the wrong customer, update the wrong record, or run a database action without the necessary constraints.

To avoid this, treat tool arguments like normal backend inputs. Validate IDs, permissions, account state, allowed ranges, required fields, and irreversible actions before execution. The model can decide which tool to call, but your application should decide whether to allow that call. This keeps the agent useful without making it the authority layer for your product.
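To make the "application decides" idea concrete, here is a minimal sketch of a validation layer sitting between the model's proposed call and the real side effect. Everything in it is hypothetical: the `refund_order` tool, the in-memory `ORDERS` store, the `MAX_AUTO_REFUND` policy, and the helper names are illustrative assumptions, not any particular framework's API. The key design choice is that the caller's identity comes from your session layer, never from the model's arguments.

```python
from dataclasses import dataclass

# Hypothetical in-memory order store standing in for a real database.
ORDERS = {
    "ord_1001": {"customer_id": "cus_42", "total": 120.0, "refunded": 0.0, "status": "delivered"},
    "ord_1002": {"customer_id": "cus_7", "total": 35.0, "refunded": 35.0, "status": "refunded"},
}
MAX_AUTO_REFUND = 100.0  # assumed policy: larger refunds need a human


@dataclass
class Decision:
    allowed: bool
    reason: str


def validate_refund(args: dict, caller_customer_id: str) -> Decision:
    """Check a model-proposed refund_order call before anything executes."""
    order_id, amount = args.get("order_id"), args.get("amount")
    # Required fields and types (bool is excluded because it subclasses int).
    if not isinstance(order_id, str) or isinstance(amount, bool) or not isinstance(amount, (int, float)):
        return Decision(False, "missing or malformed order_id/amount")
    # The ID must refer to a real record.
    order = ORDERS.get(order_id)
    if order is None:
        return Decision(False, f"unknown order {order_id}")
    # Permissions: the caller may only touch their own orders.
    if order["customer_id"] != caller_customer_id:
        return Decision(False, "order belongs to a different customer")
    # Record state.
    if order["status"] == "refunded":
        return Decision(False, "order already fully refunded")
    # Allowed range.
    remaining = order["total"] - order["refunded"]
    if not 0 < amount <= remaining:
        return Decision(False, f"amount must be in (0, {remaining}]")
    # Irreversible action above the policy limit: escalate instead of executing.
    if amount > MAX_AUTO_REFUND:
        return Decision(False, "needs human approval")
    return Decision(True, "ok")


def execute_tool_call(name: str, args: dict, caller_customer_id: str) -> dict:
    """The model picks the tool; this function decides whether it actually runs."""
    if name != "refund_order":
        raise PermissionError(f"tool {name!r} is not allowed")
    decision = validate_refund(args, caller_customer_id)
    if not decision.allowed:
        return {"status": "rejected", "reason": decision.reason}
    # The side effect happens only after every check has passed.
    ORDERS[args["order_id"]]["refunded"] += args["amount"]
    return {"status": "refunded", "amount": args["amount"]}
```

A rejected call can be returned to the agent as a tool result, so it can correct itself or hand off to a human, without the model ever being the authority on what is allowed.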

If you’re building LLM applications and want to learn more about tool use, agents, guardrails, and production architecture, and how to make these decisions at any scale, we cover this in our 10-hour LLM Fundamental course.

— Louis-François Bouchard, Towards AI Co-founder & Head of Community

Learn AI Together Community Section!

Featured Community post from the Discord

Iampoppyxx has built KVBoost, which lets you reuse chunk-level KV caches for HuggingFace inference. The kernel implements tiled FlashAttention-2 with online softmax, reducing HBM memory traffic from O(N²) to O(N) during KV encoding. It is applied automatically to every attention module inside the loaded model, so no code changes are needed. Check it out on GitHub and support a fellow community member. If you have any thoughts on how to improve it, share them in the thread!
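For readers new to "online softmax", here is a minimal NumPy sketch of the idea for a single query vector. It is not KVBoost's kernel (a real FlashAttention-style kernel tiles queries too and keeps tiles in on-chip SRAM); it only shows the recurrence that lets attention be computed tile by tile without ever materializing the full row of scores. The function names and the `tile` parameter are illustrative.

```python
import numpy as np


def naive_attention(q, K, V):
    # Reference: compute every score at once, then normalize.
    s = K @ q / np.sqrt(q.shape[-1])
    p = np.exp(s - s.max())
    return (p / p.sum()) @ V


def tiled_online_softmax_attention(q, K, V, tile=4):
    # Walk over K/V in tiles, keeping only three running statistics.
    d = q.shape[-1]
    m = -np.inf                   # running max of the scores seen so far
    l = 0.0                       # running sum of exp(score - m)
    acc = np.zeros(V.shape[-1])   # running sum of exp(score - m) * v
    for start in range(0, K.shape[0], tile):
        s = K[start:start + tile] @ q / np.sqrt(d)
        m_new = max(m, s.max())
        scale = np.exp(m - m_new)  # rescale old statistics to the new max (0 on the first tile)
        p = np.exp(s - m_new)
        l = l * scale + p.sum()
        acc = acc * scale + p @ V[start:start + tile]
        m = m_new
    return acc / l
```

Both functions return the same vector up to floating-point error, for any tile size; the tiled version just never needs more than one tile of scores in memory at a time.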

Collaboration Opportunities

The Learn AI Together Discord has several fresh collaboration threads this week. If you are excited to dive into applied AI, want a study partner, or even want to find a partner for your passion project, join the collaboration channel! Keep an eye on this section, too — we share cool opportunities every week!

1. Harry03418 is building a production-level automation system using n8n and wants to connect with other builders who want to exchange ideas. If this sounds interesting, connect with him in the thread!

2. Aliteralmango_07776 is building an Autograd library and needs help with improving it. If you want to collaborate or have suggestions for the project, reach out to them in the thread!

3. Syhslvdr is currently learning Python for data analysis/data science/ML and is looking for study partners. If you want to discuss coding projects or exchange notes, connect with her in the thread!

4. Khturan has built a project for a hackathon that they want to scale as a startup. If you like building AI-based businesses, contact them in the thread!

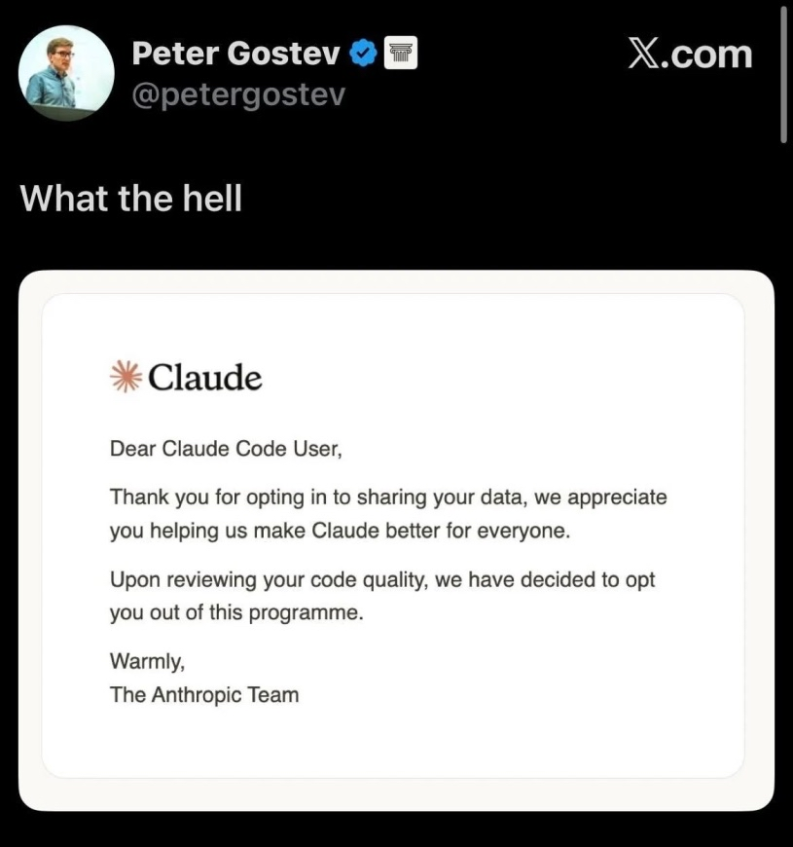

Meme of the week!

Meme shared by bin4ry_d3struct0r

TAI Curated Section

Article of the week

World Models Explained: The Architecture That Could Replace Transformers By Yuval Mehta

This article explains the architectural difference between world models and transformers: LLMs predict the next token, while world models predict the next environmental state through a perception module, a dynamics model, and a planning module. The article maps two competing schools: generative models like Genie 3 and Marble that predict at the pixel level, and LeCun’s JEPA that predicts abstract latent representations.
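The three-module split can be illustrated with a toy loop. This is a sketch under heavy assumptions, not Genie 3, Marble, or JEPA: the "perception" is a fixed random projection, the "dynamics" are an assumed linear map, and "planning" is simple random-shooting search over imagined rollouts. What it does show is the core difference the article describes: the model predicts the next state given an action, and the planner uses those predictions to choose what to do.

```python
import numpy as np

rng = np.random.default_rng(0)

# Perception: map a raw 16-dim observation to a 4-dim latent state.
# (Hypothetical fixed random projection; real systems learn this encoder.)
W_enc = rng.normal(size=(4, 16)) / 4.0


def perceive(obs):
    return W_enc @ obs


# Dynamics: predict the NEXT latent state from the current state and an action.
# (Assumed linear dynamics z' = A z + B a, purely for illustration.)
A = np.eye(4) * 0.9
B = rng.normal(size=(4, 2))


def predict_next(z, a):
    return A @ z + B @ a


# Planning: imagine rollouts with the dynamics model and keep the action
# sequence whose predicted final state lands closest to the goal state.
def plan(z0, z_goal, horizon=5, n_candidates=256):
    best_cost, best_first_action = np.inf, None
    for _ in range(n_candidates):
        actions = rng.uniform(-1, 1, size=(horizon, 2))
        z = z0
        for a in actions:
            z = predict_next(z, a)  # no environment call: the model imagines it
        cost = np.linalg.norm(z - z_goal)
        if cost < best_cost:
            best_cost, best_first_action = cost, actions[0]
    return best_first_action, best_cost
```

In a model-predictive-control style loop, only the first action would be executed, a new observation perceived, and the plan recomputed.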

Our must-read articles

1. Tokens Are Breadcrumbs. Geodesics Are the Path. LeCun Just Proved the Difference Matters By Dr. Swarnendu AI

Hai Huang, Yann LeCun, and Randall Balestriero posted a February 2026 paper called Semantic Tube Prediction, turning a long-standing philosophical argument about LLMs into formal mathematics. The Geodesic Hypothesis posits that coherent sentences trace geodesics on a smooth semantic manifold, and the STP loss enforces that each hidden state remains within a tube around the corresponding geodesic. This article walks through the paper and the mathematics behind it.
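To build intuition for a "tube" constraint, here is a toy penalty on a trajectory of hidden states. It is explicitly not the paper's STP loss: it assumes a flat (Euclidean) semantic space, where the geodesic between the first and last states is just the straight segment joining them, and it uses an assumed hinge-squared form with a hypothetical `radius` parameter. States inside the tube cost nothing; states that wander outside it are pulled back.

```python
import numpy as np


def tube_penalty(H, radius=0.5):
    """Toy tube loss for hidden states H with shape (T, d).

    Assumption: flat space, so the geodesic from H[0] to H[-1] is the
    straight segment between them. Not the STP paper's formulation.
    """
    start, end = H[0], H[-1]
    direction = end - start
    length_sq = direction @ direction
    penalty = 0.0
    for h in H:
        # Closest point on the segment start -> end (projection clipped to [0, 1]).
        s = 0.0 if length_sq == 0 else np.clip((h - start) @ direction / length_sq, 0.0, 1.0)
        closest = start + s * direction
        dist = np.linalg.norm(h - closest)
        # Hinge: zero inside the tube, quadratic in the excess distance outside it.
        penalty += max(0.0, dist - radius) ** 2
    return penalty / H.shape[0]
```

For example, the trajectory `[[0, 0], [1, 2], [2, 0]]` has its middle state 2 units off the segment, 1.5 beyond a 0.5 radius, giving a penalty of 1.5² / 3 = 0.75.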

2. How I’m Building an AI SRE Agent to Analyze Production By Quan Huynh

The article outlines the architecture of an AI Site Reliability Engineering (SRE) agent designed to monitor production logs without predefined thresholds. The system inverts the typical approach: regex matching, Drain3-style pattern clustering, and frequency analysis eliminate 99% of log noise before the AI sees anything, keeping costs and false positives low. Three deployment modes (training, shadow, and detect) let teams validate the agent’s judgment before it creates real incidents. The pattern catalog serves as the agent’s long-term memory, improving through operator feedback rather than model retraining.
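The pre-filtering stage can be sketched in a few lines. This is a simplified stand-in, not Drain3 (which builds a parse tree over tokens) and not the author's code: variable fields are masked with assumed regexes so that lines collapse into templates, and only templates that are missing from the catalog, or that spike past a hypothetical `spike_threshold`, are forwarded to the model.

```python
import re
from collections import Counter

# Assumed masks, applied in order (IPs before plain numbers so addresses stay whole).
MASKS = [
    (re.compile(r"\b\d{1,3}(?:\.\d{1,3}){3}\b"), "<IP>"),
    (re.compile(r"\b0x[0-9a-fA-F]+\b"), "<HEX>"),
    (re.compile(r"\b\d+(?:\.\d+)?\b"), "<NUM>"),
]


def to_template(line: str) -> str:
    """Replace variable fields so structurally identical lines share a template."""
    for pattern, token in MASKS:
        line = pattern.sub(token, line)
    return line


def triage(lines, catalog, spike_threshold=100):
    """Return (template, count, reason) only for patterns worth the model's attention."""
    counts = Counter(to_template(line) for line in lines)
    to_model = []
    for template, n in counts.items():
        if template not in catalog:
            to_model.append((template, n, "new pattern"))
        elif n > spike_threshold:
            to_model.append((template, n, "frequency spike"))
    return to_model
```

Known, steady patterns never leave this function, which is where the bulk of the noise reduction comes from; operator feedback would then grow `catalog` over time instead of retraining anything.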

3. Apple Just Built a Bridge Between Attention and SSMs. Here is the Step-by-Step Blueprint By Dr. Swarnendu AI

Apple researchers propose a practical route for converting trained attention models into Mamba-style State Space Models. Direct distillation fails because softmax attention and Mamba’s recurrence represent information in structurally incompatible ways. The fix uses two stages. First, a learnable Hedgehog feature map converts softmax attention to linear attention by directly matching attention patterns. Second, HedgeMamba inherits those parameters as its Mamba initialization, replacing linear attention’s identity decay with a learnable A matrix. The result brings inference down to linear time in sequence length, with a perplexity increase of only 0.25.
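Why linear attention is a natural bridge to a recurrent model can be shown directly: causal linear attention computed the quadratic way equals a recurrence over a fixed-size state. The sketch below assumes a Hedgehog-style feature map of the form softmax(xW) concatenated with softmax(−xW), and uses a single scalar `decay` as a stand-in for Mamba's learnable A matrix (in Mamba, A is per-channel and discretized with input-dependent step sizes). It is an illustration of the idea, not Apple's implementation.

```python
import numpy as np


def _softmax(a):
    e = np.exp(a - a.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)


def hedgehog_phi(x, W):
    # Assumed Hedgehog-style feature map: positive features whose dot
    # products can be trained to mimic softmax attention weights.
    z = x @ W
    return np.concatenate([_softmax(z), _softmax(-z)], axis=-1)


def linear_attention_parallel(Q, K, V, W):
    # Reference form: build the full (T, T) causal score matrix.
    fq, fk = hedgehog_phi(Q, W), hedgehog_phi(K, W)
    scores = np.tril(fq @ fk.T)
    return (scores @ V) / scores.sum(axis=1, keepdims=True)


def linear_attention_recurrent(Q, K, V, W, decay=1.0):
    # Same computation with a fixed-size state, so cost is linear in T.
    # decay=1.0 is the identity decay of plain linear attention; a value
    # below 1 is a scalar stand-in for a learnable state matrix A.
    fq, fk = hedgehog_phi(Q, W), hedgehog_phi(K, W)
    S = np.zeros((fk.shape[1], V.shape[1]))  # running sum of phi(k) v^T
    z = np.zeros(fk.shape[1])                # running sum of phi(k)
    out = []
    for t in range(Q.shape[0]):
        S = decay * S + np.outer(fk[t], V[t])
        z = decay * z + fk[t]
        out.append(fq[t] @ S / (fq[t] @ z))
    return np.array(out)
```

With `decay=1.0` the two functions agree exactly, which is the sense in which trained linear-attention parameters can initialize a recurrent model; changing the decay then changes how quickly old tokens fade from the state.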

4. I Finally Understood Entropy — Here’s the Simplest Way to Think About It (Even in LLMs) By Ashish Abraham

Entropy sits at the core of how language models learn and generate text, yet most explanations leave readers more confused. This article traces the concept from Shannon’s wartime communications research, through decision-tree splits, to LLM training objectives. Cross-entropy drives model optimization, perplexity (the exponential of the cross-entropy) converts it into an interpretable scale, and bits-per-byte frames model quality as a compression ratio. Temperature emerges as a direct dial on output probability distributions, connecting foundational information theory to a concrete generation parameter in LLMs.
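The quantities in that chain fit in a few small functions. This is a minimal sketch using standard textbook definitions; the function names and the toy logits are illustrative, not taken from the article.

```python
import numpy as np


def softmax(logits, temperature=1.0):
    # Dividing logits by the temperature sharpens (T < 1) or flattens (T > 1) the distribution.
    z = np.asarray(logits, dtype=float) / temperature
    e = np.exp(z - z.max())
    return e / e.sum()


def entropy_bits(p):
    # Shannon entropy H(p) = -sum p log2 p; zero-probability terms contribute nothing.
    p = p[p > 0]
    return float(-(p * np.log2(p)).sum())


def cross_entropy_nats(probs_of_targets):
    # Average negative log-likelihood the model assigns to the true next tokens.
    return float(-np.mean(np.log(probs_of_targets)))


def perplexity(ce_nats):
    # exp(cross-entropy): the "effective number of choices" per token.
    return float(np.exp(ce_nats))


def bits_per_byte(total_nll_nats, n_bytes):
    # Total code length in bits divided by the raw size of the text in bytes.
    return total_nll_nats / (np.log(2) * n_bytes)


logits = [2.0, 1.0, 0.1]
for T in (0.5, 1.0, 2.0):
    print(T, round(entropy_bits(softmax(logits, T)), 3))  # entropy grows with T
```

A model that always gives the correct token probability 0.25 has a perplexity of exactly 4, as if it were choosing uniformly among four options; and a bits-per-byte of 2 means the model could, in principle, compress the text to a quarter of its 8-bits-per-byte raw size.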

If you are interested in publishing with Towards AI, check our guidelines and sign up. We will publish your work to our network if it meets our editorial policies and standards.

LAI #126: From Bard’s Failed Demo to 650 Million Users was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.