Why the next software development revolution isn’t about code, it’s about context.

Two decades ago, software engineering had a bottleneck problem: getting working code from a developer’s head into production reliably. We solved it. Waterfall gave way to Agile. Agile gave way to DevOps. DevOps gave us pipelines, automation, and a shared language between development and operations. The bottleneck moved.

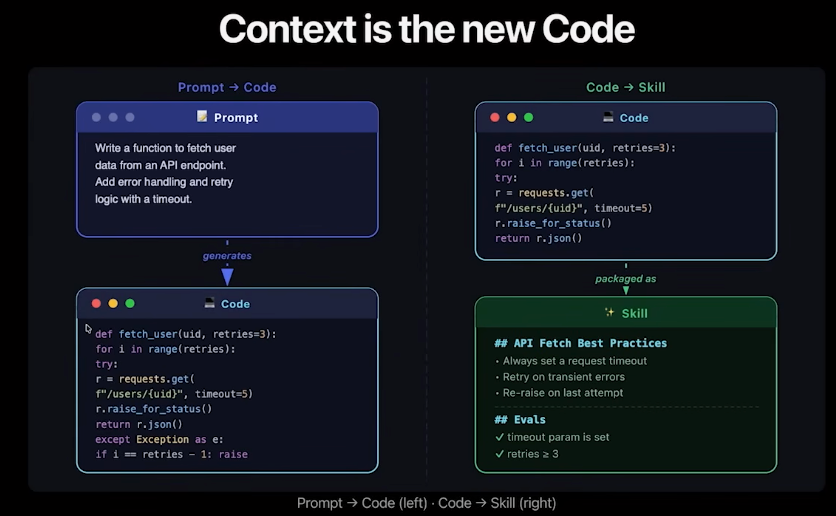

Coding agents are writing code now. Not just autocompleting lines, but writing features, fixing bugs, scaffolding entire services. The bottleneck has shifted. It’s no longer about how fast we can write and ship code. It’s about how well we can describe what the code should do, why it should do it, and how it should behave in the messy reality of our systems.

The bottleneck is context.

Experienced developers carry context around like muscle memory: the architectural decisions that shaped the system, the conventions the team agreed on, the business logic that only lives in someone’s head from a meeting three months ago. A new team member struggles not because they can’t code, but because they lack context. Now think about coding agents. Every session is a new hire. Every time an agent spins up, it starts from zero. No muscle memory, no hallway conversations, no institutional knowledge. It has only what you give it.

This means developers have inherited a new responsibility: translating implicit organisational knowledge into something structured enough for another entity to act on. Making the implicit explicit, at the right level of detail, for an audience that takes everything literally. And if context is the new bottleneck, then we need a development lifecycle built around it. I think what’s emerging is a Context Development Lifecycle (CDLC), and it will reshape how we think about software development as profoundly as DevOps reshaped how we think about delivery and operations.

The Coding Era of Context

Let’s be honest about where most teams are today. Context lives in .cursorrules files that someone wrote three months ago and nobody has touched since. It lives in CLAUDE.md files copy-pasted from a blog post. It lives in Slack threads where a senior engineer explains the authentication flow once and everyone is expected to have seen it. It lives in prompt snippets shared in a team wiki that may or may not reflect how the codebase actually works today.

There’s no versioning. No testing. No way to know if context is still accurate. No way to distribute it when it improves. No way to detect when it conflicts with other context. And no way to tell whether it’s actually helping or quietly making things worse. We treat context as simple documents to be copied around, not as artifacts to be developed over time.

This is where code was before version control. Before CI/CD. Before anyone thought to treat delivery as an engineering problem.

The Context Development Lifecycle

The CDLC gives engineering teams stages to apply to context as a managed artifact. It’s deliberately modeled on what already works for code.

The four stages of the CDLC

If we treat context as an engineering artifact (something we generate, evaluate, distribute, and observe) a lifecycle emerges. The object moving through the pipeline isn’t code. It’s knowledge and intent.

Generate: making implicit knowledge explicit

Context authoring is specification work capturing how a library should be used, what conventions a team follows, what constraints a system operates under. Whether you call them skills, rules, or specs, the work is the same.

Context isn’t just technical. There’s technical context (coding standards, architectural patterns), project context (priorities, scope), and business context (why the system exists, what compliance requires). Agents need all three to make good decisions. Most teams only provide the first if they provide any at all.

Context rots and conflicts. The best practice you encoded six months ago may be wrong today. The convention one team documented may contradict what another team wrote. When two pieces of context give opposing guidance, the agent doesn’t raise a flag. It picks one, and you won’t know which until the output surprises you. Managing context means managing its freshness and consistency, not just its existence.

Evaluate: TDD for context

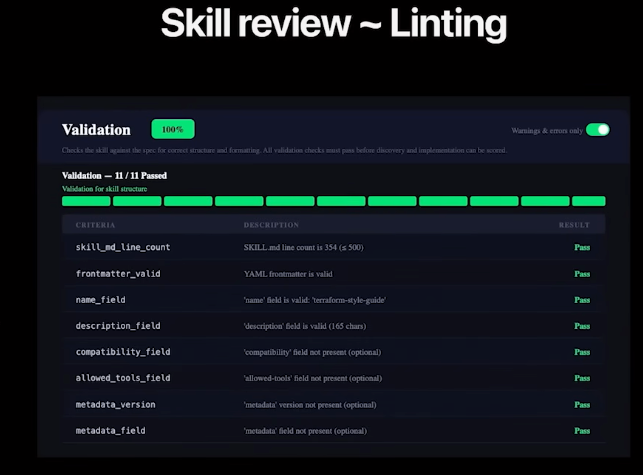

You wouldn’t ship code without tests. Why would you ship context without evaluations (evals, as the industry calls them)?

Testing context means defining scenarios and checking whether agent output matches intent not just “does it run,” but “does it reflect the decisions and constraints we specified.” If this sounds like test-driven development, it should: write expected behavior first, run it, watch it fail, improve the context until it passes.

Evals turn context from a best-effort document into a verified artifact. They also answer model selection questions with data instead of opinion: does the new expensive model actually improve output on your specific codebase? Run your evals. You’ll have an answer, not a hunch.

When evals fail, the instinct is to blame the agent. More often the failure is in the context an ambiguous instruction, a missing constraint, an assumption that was obvious to a human but invisible to the model. Every eval failure is a specification you didn’t write.

Distribute: context as a package

Context that lives in a single developer’s project is useful. Context that flows across an organisation is transformative.

This is what makes distribution different from every previous “let’s improve our documentation” initiative: developers actually want to do this. Writing context isn’t a chore disconnected from their daily work. It directly improves the agents they rely on. Better context means less time correcting agent output, less time re-explaining conventions, less time debugging code that ignored a pattern they thought was obvious.

But without a registry, without versioning, without a way to push updates, context rots silently. That skill someone wrote six months ago, before a breaking change? It’s actively teaching agents the wrong pattern, and nobody knows. Context also becomes an attack surface as it flows across teams, so it needs the same supply chain security posture you’d apply to any shared dependency. Treating context as a package (versioned, published, secured, and maintained) is the same insight that gave us npm and pip and cargo: knowledge scales when it has infrastructure.

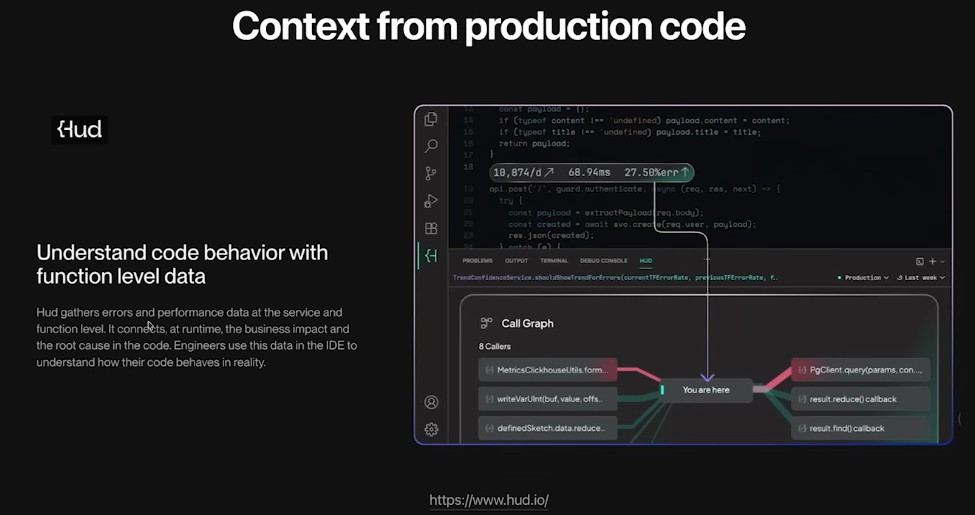

Observe: learn from use in the wild

This is where the lifecycle becomes a loop.

It helps to equate this with how we already think about tests and observability in software. Our synthetic tests are never perfect. We do what we can, deploy the changes, and then observe production to spot failures and optimization opportunities. The same applies here. Evals catch what they can, but agents in the wild generate the real signal. They ask clarifying questions, revealing gaps. They make unexpected choices, revealing ambiguities. They produce code that works but doesn’t match your intent, revealing unstated assumptions.

What did the agent know? What did it misunderstand? Where did it improvise because the context was silent?

We correct the context, add evals for what we missed, and redistribute. The loop continues. And agents themselves can help close it: the same agent that revealed the gap can draft an improved skill, suggest a clarification, or flag a conflict.

Infinite context windows won’t save you

If context windows keep growing (and they are) does any of this still matter? The capacity constraint relaxes and the cost of including everything drops. But the lifecycle doesn’t depend on scarcity. It depends on quality. An infinite window doesn’t write your context for you, and it actually makes conflicts worse: more context loaded means more contradictions to navigate. The challenge shifts from “what to include” to “how to keep it consistent, current, and trustworthy.” From curation to governance. That might be harder, not easier.

The question for your team

You’ve invested in getting agents to write code. What have you invested in giving them the context to write it well?

Do you test your context? And when you switch models, do your evaluations still pass?

The teams that win in the agent era won’t be the ones with the best models. They’ll be the ones with the best context: generated, evaluated, distributed, and continuously improved.

Thanks for reading! If you have any questions or feedback, please let me know on Medium or LinkedIn

Context Is The New Code was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.