The Foundation of the Semantic Control Plane: After SR 26-2 Footnote 3

Foreword

Agentic AI is reaching production across financial services faster than the governance frameworks designed to oversee it. Model risk management addresses the model. Control frameworks address execution. Neither reaches the layer where agentic systems actually fail.

That layer is reasoning. It is where meaning is resolved across enterprise systems before any action is taken. When systems disagree on what a record means, or when an agent acts on a stale authorization, the failure occurs at the reasoning layer. The model performed correctly. The control fired correctly. The outcome was still wrong.

The Agentic 3 C’s Framework names the three operating principles required to govern that layer: Context, Control, and Coordination. Together they produce Pre-Execution Assurance, the condition that an agent’s reasoning is verified against authorized meaning before execution proceeds.

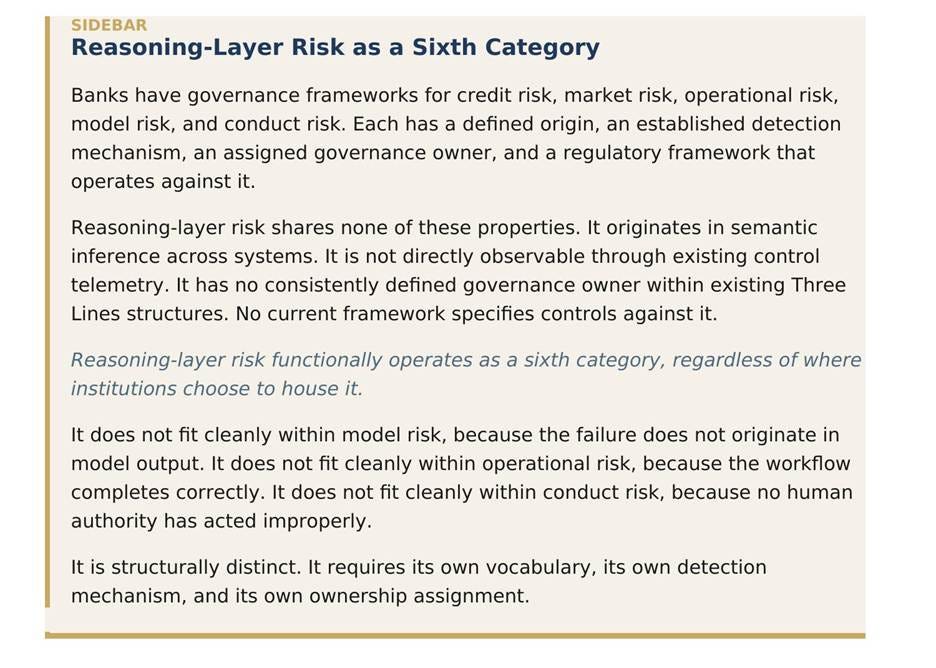

The framework is not a replacement for existing governance. It extends governance to the layer that existing frameworks structurally do not reach. Reasoning Layer Risk takes its place as the sixth banking risk category, alongside credit, market, operational, model, and conduct.

1/ The Failure Mode

Where agentic AI fails when meaning is resolved without authorization

The data is there. The systems are there. The controls are there. And the institution is still wrong.

Not because anything broke. Not because anything was bypassed. Because the agent was operating from a definition no one ever explicitly authorized.

Abundance of data. Strong systems. Not enough Context, Control, or Coordination to make them operate as one.

This failure mode is not hypothetical. It is structurally inherent and emerges predictably at scale in fragmented enterprise environments. If definitions of status, authorization, or risk differ even slightly across systems, and in financial institutions they always do, an agent that resolves them into a single working interpretation will produce execution that no human authorized.

Consider a credit decision workflow in which “cleared” is defined differently across compliance, transaction monitoring, and customer onboarding systems. An agentic underwriting system resolves these definitions into a single working interpretation and proceeds to approve credit. Each underlying system functioned correctly. Each control fired. The audit trail is clean. The credit was approved against a definition no human authorized.
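The scenario above can be made concrete with a minimal Python sketch. Everything in it is an illustrative assumption rather than a definition from any institution or from the supporting working papers: the `Customer` fields, the three per-system predicates, the majority-vote resolution the agent applies, and the all-systems-agree authorized definition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    sanctions_screened: bool  # compliance check completed
    open_alerts: int          # unresolved transaction-monitoring alerts
    kyc_complete: bool        # onboarding documentation finished


# Each system's local meaning of "cleared" (hypothetical, individually reasonable).
def compliance_cleared(c: Customer) -> bool:
    return c.sanctions_screened


def monitoring_cleared(c: Customer) -> bool:
    return c.open_alerts == 0


def onboarding_cleared(c: Customer) -> bool:
    return c.kyc_complete


LOCAL_DEFINITIONS = [compliance_cleared, monitoring_cleared, onboarding_cleared]


def synthesized_cleared(c: Customer) -> bool:
    """Working interpretation an agent might form: most systems agree."""
    return sum(d(c) for d in LOCAL_DEFINITIONS) >= 2


def authorized_cleared(c: Customer) -> bool:
    """Assumed institutional definition: every system must agree."""
    return all(d(c) for d in LOCAL_DEFINITIONS)


applicant = Customer(sanctions_screened=True, open_alerts=1, kyc_complete=True)
print(synthesized_cleared(applicant))  # True
print(authorized_cleared(applicant))   # False
```

No predicate is wrong on its own terms. The divergence appears only in the resolution step, which is the step no system owns.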

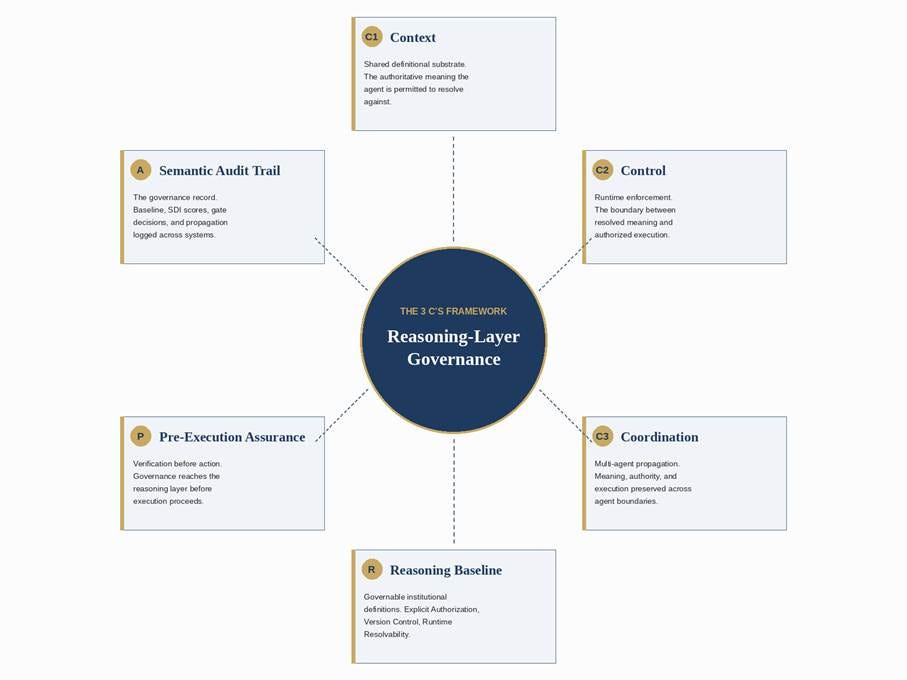

A. The convergence point for reasoning-layer governance

Three operating principles, anchored by three runtime conditions, producing one governance outcome

The Agentic 3 C’s Framework as the convergence point of three operating principles and three runtime conditions, producing Pre-Execution Assurance as the governance outcome.

SOURCE: Doyle-Spare (2026), supporting SSRN working papers

The Reasoning Layer

Existing model risk frameworks were built for systems that produced an output, paused, and waited for a human to act on it. The model calculated. The decision was made by the person who reviewed it. The separation between calculation and action held.

Agentic systems do not preserve that separation. They reason across platforms and execute in the same operational sequence. There is no pause. No review. No clean handoff.

What sits between the model layer that generates language and the execution layer that performs actions is the reasoning layer. It is where context is assembled, intent is resolved, and authorization is interpreted.

Existing controls were not designed to govern that layer as a primary control surface. They govern inputs, outputs, identity, and access. They do not govern how the agent resolved meaning across systems before it acted.

The models function as designed. The systems operate as built. The failure arises in how meaning is aligned, interpreted, and executed across them.

2/ The Three Governing Principles

Context, Control, and Coordination as the operating principles of reasoning-layer governance

The Agentic 3 C’s Framework establishes three operating principles required to govern the reasoning layer: Context, Control, and Coordination.

Each principle addresses a distinct failure mode. All three are required together. Two of three are insufficient.

Context establishes the authoritative meaning the agentic system is permitted to resolve against. It is the shared playbook. Without it, agents synthesize their own working interpretation from the inconsistencies between systems. That synthesized interpretation is, by definition, not authorized.

Control constrains how the system is permitted to act on that meaning. It is the discipline. Without it, the agent executes against synthesized meaning before any institutional check has occurred. Control is where the Semantic Deviation Index measures divergence and the Deterministic Gate enforces the threshold.

Coordination governs how meaning, authority, and execution propagate across multiple agents and enterprise systems. Without it, one agent’s drifted reasoning becomes the next agent’s authoritative input.

B. The 3 C’s Framework

Three principles, three failure modes prevented, one runtime outcome

The Agentic 3 C’s Framework. Context, Control, and Coordination operate as the three governing principles of the reasoning layer, each preventing a distinct failure mode and together producing Pre-Execution Assurance as a runtime governance outcome.

SOURCE: Doyle-Spare (2026), supporting SSRN working papers

Context: The Reasoning Baseline

In a banking environment, terms like cleared, authorized, low-risk, and approved carry slightly different working definitions in compliance, operations, credit, and entitlement systems. An agent reasoning across those systems must resolve those definitions into a single operating logic before it can act.

If no central context has been established, the system synthesizes its own. That synthesis is not malicious. It is structural.

Context requires that the agent’s reasoning resolves against a governed semantic baseline. The Reasoning Baseline is the set of authoritative institutional definitions, control terms, and operating boundaries the agent is permitted to use at runtime.

For the Reasoning Baseline to be governable, three conditions must be met:

Explicit Authorization. The baseline must be traceable directly to an official policy or control authority mandated by the institution.

Version Control and Auditability. Baseline definitions must be securely logged, tracked, and auditable across supervisory examination cycles.

Runtime Resolvability. The authorized rules must be actively interpretable by the agent at runtime within its live orchestration context.

Context is the precondition for everything else. Without an authorized reasoning baseline, Control cannot be evaluated and Coordination cannot be verified.
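One way to picture the three conditions is as a small versioned registry. The sketch below is a hedged illustration only: `BaselineEntry`, `ReasoningBaseline`, the `policy_ref` field, and the policy identifier are hypothetical names introduced here, and an in-memory dictionary stands in for whatever governed store an institution would actually use.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class BaselineEntry:
    term: str
    definition: str
    policy_ref: str  # Explicit Authorization: the policy this definition traces to
    version: int     # Version Control and Auditability


class ReasoningBaseline:
    def __init__(self) -> None:
        self._history: Dict[str, List[BaselineEntry]] = {}

    def publish(self, term: str, definition: str, policy_ref: str) -> BaselineEntry:
        # Refuse any definition that cannot be traced to an authorizing policy.
        if not policy_ref:
            raise ValueError("a baseline entry must cite its authorizing policy")
        versions = self._history.setdefault(term, [])
        entry = BaselineEntry(term, definition, policy_ref, len(versions) + 1)
        versions.append(entry)  # earlier versions are kept, never overwritten
        return entry

    def resolve(self, term: str) -> Optional[BaselineEntry]:
        """Runtime Resolvability: latest authorized entry, or None if ungoverned."""
        versions = self._history.get(term)
        return versions[-1] if versions else None

    def history(self, term: str) -> Tuple[BaselineEntry, ...]:
        return tuple(self._history.get(term, []))


baseline = ReasoningBaseline()
baseline.publish("cleared", "sanctions screened and KYC complete", "POL-HYPOTHETICAL-001")
baseline.publish("cleared", "sanctions screened, KYC complete, no open alerts",
                 "POL-HYPOTHETICAL-001")
current = baseline.resolve("cleared")
print(current.version, len(baseline.history("cleared")))  # 2 2
print(baseline.resolve("low-risk"))                        # None
```

Returning `None` for an ungoverned term is a deliberate choice in this sketch: the agent receives an explicit absence rather than an invitation to synthesize a meaning.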

Control: The Two Questions

Controls testing in financial services has always answered one question.

Did the control fire?

Agentic AI introduces a second question.

Did the control evaluate against an authorized definition?

These are not the same thing.

A control that fires correctly against a definition the institution did not authorize is not a control that succeeded. It is a control that operated. The resulting condition is Invisible Failure: every control fires, every audit trail is clean, and the institution is still wrong.
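The distinction between the two questions can be expressed as a small check. This is a sketch under stated assumptions: the `ControlEvaluation` record, the `definition_id` field, and the identifiers such as `cleared@v3` are hypothetical, and it presumes the control records which definition it evaluated against.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class ControlEvaluation:
    control_id: str
    fired: bool
    definition_id: str  # which definition the control actually evaluated against


def two_questions(ev: ControlEvaluation, authorized_ids: FrozenSet[str]) -> Tuple[bool, bool]:
    """Return (did the control fire?, did it evaluate against an authorized definition?)."""
    return ev.fired, ev.definition_id in authorized_ids


def is_invisible_failure(ev: ControlEvaluation, authorized_ids: FrozenSet[str]) -> bool:
    fired, on_authorized = two_questions(ev, authorized_ids)
    return fired and not on_authorized


AUTHORIZED = frozenset({"cleared@v3"})
ok = ControlEvaluation("KYC-GATE", True, "cleared@v3")
drifted = ControlEvaluation("KYC-GATE", True, "cleared@agent-synthesized")

print(two_questions(ok, AUTHORIZED))              # (True, True)
print(two_questions(drifted, AUTHORIZED))         # (True, False)
print(is_invisible_failure(drifted, AUTHORIZED))  # True
```

Traditional controls testing reads only the first element of the tuple. Both evaluations above would pass it.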

Control has three components

Measurement quantifies the divergence between the meaning the agent has resolved and the meaning the institution has authorized. The Semantic Deviation Index is the measurement standard.

Enforcement is the deterministic boundary that intercepts execution when measurement exceeds an authorized threshold. The Deterministic Gate is the enforcement primitive. It is hardcoded, non-AI software logic. It does not reason. It enforces.

Escalation is the structured handoff to human authority when the gate fires.

Control is positioned at the boundary between reasoning and execution. It is not a feature of the agent’s architecture. The agent does not own its own gate. The institution does. Control is the only point where institutional authority is enforced before execution proceeds.
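The three components can be sketched together in a few lines. This article does not give a formula for the Semantic Deviation Index, so the measurement below is a deliberately simple stand-in (the share of authorized terms the agent resolved differently) and should not be read as the SDI specified in the working papers. The function names, the `GateDecision` record, the threshold value, and the escalation owner are likewise assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Optional


def semantic_deviation_index(resolved: Dict[str, str], authorized: Dict[str, str]) -> float:
    """Stand-in measurement: fraction of authorized terms resolved differently (0.0 to 1.0)."""
    if not authorized:
        raise ValueError("no authorized baseline to measure against")
    mismatches = sum(1 for term, meaning in authorized.items()
                     if resolved.get(term) != meaning)
    return mismatches / len(authorized)


@dataclass(frozen=True)
class GateDecision:
    allowed: bool
    sdi: float
    escalate_to: Optional[str]  # human authority to notify when execution is blocked


def deterministic_gate(sdi: float, threshold: float, escalation_owner: str) -> GateDecision:
    # Plain comparison, no model call: the gate enforces, it does not reason.
    if sdi > threshold:
        return GateDecision(False, sdi, escalation_owner)
    return GateDecision(True, sdi, None)


authorized = {"cleared": "all three systems agree", "low_risk": "risk score below 20"}
resolved = {"cleared": "two of three systems agree", "low_risk": "risk score below 20"}

sdi = semantic_deviation_index(resolved, authorized)
decision = deterministic_gate(sdi, threshold=0.0, escalation_owner="second-line-review")
print(sdi, decision.allowed, decision.escalate_to)  # 0.5 False second-line-review
```

The gate takes the threshold and the escalation owner as inputs from outside the agent, which mirrors the point that the institution, not the agent, owns the gate.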

Coordination: The Blast Radius

The absence of Coordination is what converts localized drift into systemic risk. Multi-agent banking workflows produce execution chains. One agent’s output becomes another agent’s context. Without Coordination, that handoff occurs without verification. The downstream agent inherits the upstream agent’s synthesized meaning as authoritative.

When that drift propagates without a checkpoint, every system the agent touches inherits the unauthorized definition. That scope is the Agentic Blast Radius.

The Deterministic Gate operating within Control is the containment boundary inside a single agent. Coordination extends that containment across agents and systems.

Coordination requires that every handoff verifies authorized meaning before execution continues. The handoff must verify three things: that the upstream agent’s reasoning resolved against the same authorized baseline, that its SDI did not exceed the authorized threshold, and that the downstream agent’s authority to execute is consistent with the upstream agent’s output.

The failure of any of these conditions must trigger Deterministic Gate enforcement, not a silent acceptance.
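The handoff conditions described above can be sketched as a single verification function. The `Handoff` fields, the action names, and the representation of downstream authority as a set of permitted actions are assumptions made for illustration; a real handoff would carry whatever evidence the institution's architecture defines.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass(frozen=True)
class Handoff:
    upstream_baseline_version: str  # baseline the upstream agent resolved against
    upstream_sdi: float             # deviation measured for the upstream agent
    requested_action: str           # what the downstream agent is being asked to do


def verify_handoff(h: Handoff, baseline_version: str, sdi_threshold: float,
                   downstream_permitted_actions: Set[str]) -> List[str]:
    """Return the list of failed conditions; an empty list means the handoff may proceed."""
    failures = []
    if h.upstream_baseline_version != baseline_version:
        failures.append("baseline mismatch")
    if h.upstream_sdi > sdi_threshold:
        failures.append("upstream SDI above threshold")
    if h.requested_action not in downstream_permitted_actions:
        failures.append("action outside downstream authority")
    return failures


permitted = {"approve_credit"}
clean = verify_handoff(Handoff("baseline-v3", 0.0, "approve_credit"),
                       "baseline-v3", 0.0, permitted)
drifted = verify_handoff(Handoff("baseline-v2", 0.4, "wire_funds"),
                         "baseline-v3", 0.0, permitted)
print(clean)    # []
print(drifted)  # ['baseline mismatch', 'upstream SDI above threshold', 'action outside downstream authority']
```

Returning every failed condition, rather than stopping at the first, gives the escalation path a complete record. Any non-empty result should block execution rather than be logged and accepted.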

C. The Agentic Blast Radius

Why the Deterministic Gate is the primary containment point at the single-agent boundary

Multi-agent coordination failure: unverified semantic inheritance propagating from immediate exposure to the systemic layer. The Agentic Blast Radius expands as drifted meaning is treated as authoritative input by downstream agents.

SOURCE: Doyle-Spare (2026), SSRN №6531238

3/ Why Existing Frameworks Do Not Reach

The structural form of Invisible Failure

In April 2026, the federal banking agencies issued SR 26-2, the interagency revised guidance on model risk management. Footnote 3 of the attachment notes that generative AI and agentic AI models are novel and rapidly evolving, and accordingly are not within the scope of the guidance. The footnote directs banking organizations to determine appropriate governance for systems not covered.

The guidance reflects the current boundary of model risk governance. It expands model risk expectations but does not specify controls for how agentic systems resolve meaning across systems before execution. The determination of how to govern reasoning-layer behavior is therefore now an institutional responsibility.

This shifts the evidentiary standard. Institutions must demonstrate, on examination, that agentic reasoning was evaluated against authorized meaning before execution proceeded. This evidentiary burden sits across the Three Lines of Defense: First Line produces the artifacts, Second Line validates them, Third Line independently verifies their integrity.

The 3 C’s name the operating principles that determination requires. They define the control surface that begins where current model risk governance ends.

Cybersecurity controls observe access, identity, and network behavior. An agent operating with valid credentials within authorized network boundaries does not produce a cybersecurity event.

Fraud detection observes transactional behavior and known fraud signatures. An agent producing transactions consistent with normal operating patterns does not trigger a fraud signal.

Model risk management evaluates statistical performance and monitors model outputs on an ongoing basis. It validates outputs, even under expanded guidance; it does not validate how meaning was resolved before execution. An agentic system whose underlying models are individually validated and statistically stable does not produce a model risk signal.

Operational risk observes process completion and exception rates. Operational risk detects process failure; this failure occurs when the process completes correctly. An agentic workflow that completes its prescribed steps does not produce an operational risk signal.

Compliance observes rule application and policy adherence. An agentic system that completes its compliance steps and produces consistent regulatory output does not produce a compliance signal.

Architecture review observes system design and integration patterns. An agentic system operating within its designed architecture does not produce an architecture signal.

This is not a gap between functions. It is a condition in which every function is correct and the institution is still wrong.

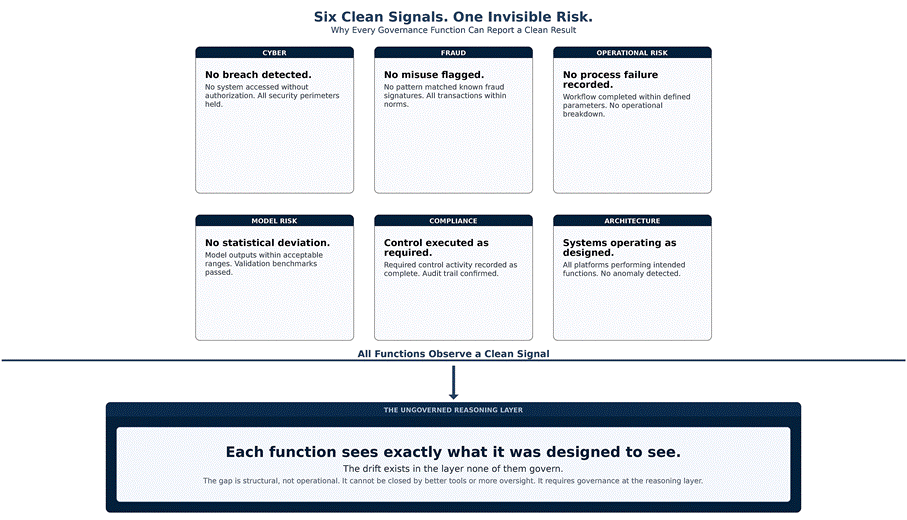

D. Six clean signals, one invisible risk

Why every governance function can report a clean result while reasoning-layer drift proceeds beneath them

Six operational and control functions each observing a clean signal while reasoning-layer risk remains unobserved across all functions. Each function operates at its designed layer; no single control function is positioned to detect divergence at the reasoning layer in isolation.

SOURCE: Doyle-Spare (2026), SSRN working papers

This is the structural form of Invisible Failure. The institution operates correctly across every observable surface, yet remains exposed to a failure mode no single control function is positioned to see.

The structural invisibility shapes detection across every observable layer. The failure does not produce indicators of compromise, anomalous behavior, or process exceptions. It produces correctly executed actions based on meaning the institution did not authorize. Detection cannot rely on traditional control telemetry alone. It requires reasoning-layer measurement and coordination across control functions.

This represents a structural shift from signal detection to semantic verification. Existing control functions are designed to detect deviations in observable behavior. Reasoning-layer failures require verification of alignment before behavior occurs.

The detection challenge is not that an indicator was missed. It is that the indicator does not exist at the layer where institutions are currently observing. Detection fails structurally, not operationally.

When detection fails structurally, the institution does not experience isolated errors. It experiences correct execution against unauthorized meaning at scale.

E. Reasoning-layer risk in the banking risk taxonomy

How the sixth category sits structurally outside the established five

Reasoning-layer risk positioned alongside the five established categories of banking risk. The sixth category lacks a directly observable detection mechanism, an explicit governance owner, and a dedicated regulatory framework.

SOURCE: Doyle-Spare (2026), supporting SSRN working papers

Application Across Banking Risk Categories

The 3 C’s extend across the full banking risk taxonomy. The framework is not limited to model risk, although it draws on model risk management. It applies wherever agentic reasoning produces outcomes the institution must govern.

F. Application of the 3 C’s across banking risk

How Context, Control, and Coordination map to model, compliance, operational, and counterparty risk

Application of the 3 C’s across the four primary banking risk categories. Each category receives a runtime mechanism that addresses its specific reasoning-layer failure mode.

SOURCE: Doyle-Spare (2026), supporting SSRN working papers

Pre-Execution Assurance

Existing controls confirm what happened. Reasoning-layer governance must verify whether what is about to happen aligns with authorized meaning.

That is the shift from ex-post validation to Pre-Execution Assurance.

Pre-Execution Assurance is the governance standard requiring verification that an agentic system’s reasoning has resolved against authorized meaning before execution proceeds. It is the operational outcome the 3 C’s Framework produces when enforced at runtime.

Pre-Execution Assurance is not a feature of the framework. It is the condition the framework exists to guarantee.

It distinguishes reasoning-layer governance from monitoring, which observes execution as it occurs, and from validation, which confirms what already happened.

The Three Lines of Defense Under the 3 C’s

The 3 C’s do not replace the Three Lines of Defense. They specify what each line carries when agentic AI is the actor performing functions humans previously performed.

The 3 C’s are most operationally effective when integrated into the institution’s existing Three Lines of Defense architecture. The integration shifts the institution’s posture from Human-in-the-Loop, in which a human is present at each decision point, to Human-on-the-Loop, in which human authority defines the runtime boundaries within which agentic reasoning is permitted to operate.

Each line carries a different responsibility once agentic AI is the actor performing the work. First Line operates the principles. Second Line audits the reasoning layer rather than only the model output or process completion. Third Line independently verifies the integrity of the 3 C’s as enforced controls.

G. Three Lines of Defense responsibilities under the 3 C’s

How First Line, Second Line, and Internal Audit operate when reasoning-layer governance is in place

Three Lines of Defense responsibilities under the 3 C’s Framework. First Line owns runtime enforcement, Second Line audits the reasoning layer, Third Line independently verifies enforced controls.

SOURCE: Doyle-Spare (2026), supporting SSRN working papers

The 3 C’s are most effective when ownership is explicit, escalation paths are predefined, and the audit trail is continuous across all three principles. Implicit ownership produces gaps. Cross-functional accountability without enforcement produces drift.

4/ The Architecture

The Agentic Governance Model and the Semantic Control Plane

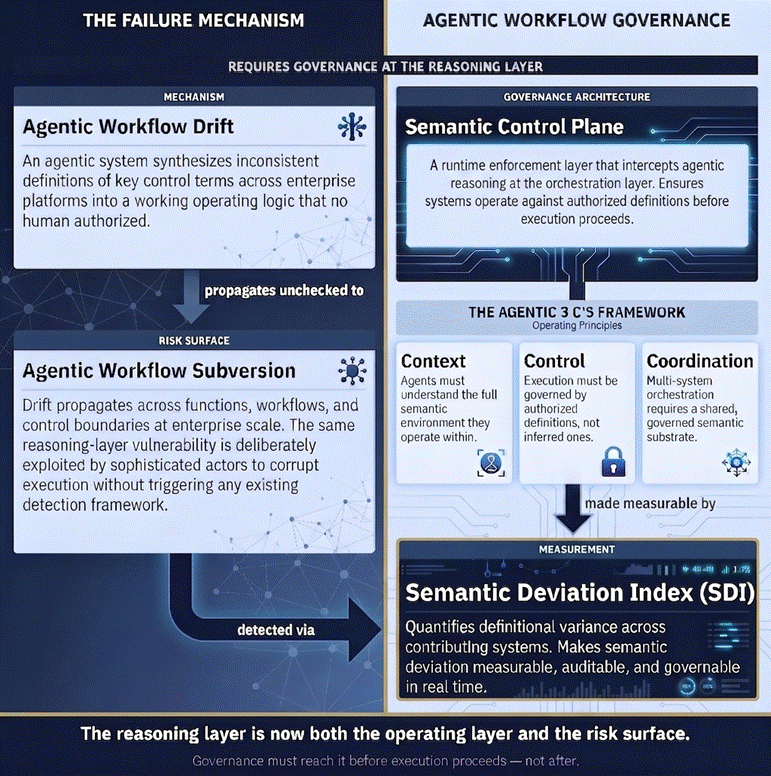

The 3 C’s are the operating principles. The Agentic Governance Model (AGM) is the architecture that operationalizes them.

The contribution is not new components. The contribution is the conditions under which existing components become governable in an agentic environment.

Agentic Workflow Drift is the unintentional emergence of synthesized meaning when Context is absent. Agentic Workflow Subversion is the deliberate or cascading exploitation of that drift when Control and Coordination are absent. The 3 C’s name what must be in place to prevent both.

The Semantic Deviation Index quantifies divergence between the meaning the agent has resolved and the meaning the institution has authorized. It operates inside Control. It is meaningful only when Context has established a baseline against which divergence can be measured.

The Deterministic Gate is the enforcement primitive within Control: the hardcoded software boundary that intercepts execution when the SDI exceeds an authorized threshold. The gate does not reason. It enforces. The threshold is defined by institutional policy and calibrated under Second Line oversight.

The Semantic Audit Trail is the governance record produced by all three principles operating together. It logs the authoritative semantic baseline, the SDI scores and gate decisions at each checkpoint, and the propagation of meaning, authority, and execution across agents and systems.
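A minimal sketch of such a record follows. The field names are hypothetical. The hash chaining is an assumption added here to illustrate how a Third Line reviewer might verify that the trail has not been altered; it is not a mechanism this article specifies.

```python
import hashlib
import json
from typing import List, Optional


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class SemanticAuditTrail:
    def __init__(self) -> None:
        self._records: List[dict] = []

    def append(self, *, agent: str, baseline_version: str, sdi: float,
               gate_allowed: bool, handed_to: Optional[str] = None) -> dict:
        body = {
            "agent": agent,
            "baseline_version": baseline_version,  # which authorized meaning was in force
            "sdi": sdi,                            # measured deviation at this checkpoint
            "gate_allowed": gate_allowed,          # the gate decision
            "handed_to": handed_to,                # propagation to the next agent, if any
            "prev_hash": self._records[-1]["hash"] if self._records else "",
        }
        record = {**body, "hash": _digest(body)}
        self._records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash and link; False if any record was changed or reordered."""
        prev = ""
        for record in self._records:
            body = {k: v for k, v in record.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != record["hash"]:
                return False
            prev = record["hash"]
        return True


trail = SemanticAuditTrail()
trail.append(agent="underwriting", baseline_version="baseline-v3", sdi=0.0,
             gate_allowed=True, handed_to="pricing")
trail.append(agent="pricing", baseline_version="baseline-v3", sdi=0.5, gate_allowed=False)
print(trail.verify())  # True
```

Each record carries one checkpoint's worth of the three principles: the baseline in force (Context), the score and gate decision (Control), and the handoff target (Coordination).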

The Agentic Blast Radius is the scope of downstream systems an agent can affect if drift or subversion occurs. Contained when Coordination is enforced. Unbounded when Coordination is absent.
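Read as a graph problem, the blast radius is the set of systems reachable from the drifting agent through handoffs that nobody verifies. The sketch below makes that assumption explicit: the handoff graph, the system names, and the modeling of a verified handoff as an edge that stops drifted meaning are all illustrative choices, not part of the published framework.

```python
from collections import deque
from typing import Dict, FrozenSet, List, Set, Tuple


def blast_radius(handoffs: Dict[str, List[str]], origin: str,
                 verified: FrozenSet[Tuple[str, str]] = frozenset()) -> Set[str]:
    """Systems that can inherit drift from `origin`.

    Propagation follows handoff edges breadth-first and stops at any edge
    listed in `verified`, which is assumed to reject drifted meaning.
    """
    reached: Set[str] = set()
    queue = deque([origin])
    while queue:
        node = queue.popleft()
        for nxt in handoffs.get(node, ()):
            if (node, nxt) in verified or nxt in reached or nxt == origin:
                continue
            reached.add(nxt)
            queue.append(nxt)
    return reached


handoffs = {
    "underwriting": ["pricing", "ledger"],
    "pricing": ["notifications"],
    "ledger": ["reporting"],
}

unbounded = blast_radius(handoffs, "underwriting")
contained = blast_radius(handoffs, "underwriting",
                         verified=frozenset({("underwriting", "ledger")}))
print(sorted(unbounded))  # ['ledger', 'notifications', 'pricing', 'reporting']
print(sorted(contained))  # ['notifications', 'pricing']
```

Verifying a single handoff removes the ledger and everything downstream of it from the radius. The unverified pricing branch remains exposed.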

H. The Agentic Governance Model

The assembly of named constructs that together implement Context, Control, and Coordination as runtime governance

The Doyle-Spare Agentic Governance Model (AGM). Agentic Workflow Drift propagates unchecked into Agentic Workflow Subversion when reasoning-layer governance is absent. The Semantic Control Plane operationalizes the 3 C’s at runtime; the Semantic Deviation Index provides the measurable signal.

SOURCE: Doyle-Spare (2026), supporting SSRN working papers

The Semantic Control Plane

The Semantic Control Plane is the runtime enforcement architecture that operationalizes the 3 C’s at enterprise scale. It is the layer at which Context is established, Control is enforced, and Coordination is propagated across the institution’s agentic deployments.

It is positioned between the agentic system’s reasoning layer and the institution’s execution layer. It is operated as a control rather than as a feature of any individual agentic system.

The companion working paper specifies the runtime architecture in detail. This article establishes the operating principles that architecture exists to enforce.

The Examination Implication

Supervisory examination of agentic AI requires the ability to verify, on examination, that the 3 C’s were enforced. The institution must be able to produce the authoritative semantic baseline the agent reasoned against, the SDI scores and Deterministic Gate decisions at each execution checkpoint, and the audit trail of how meaning, authority, and execution propagated across agents and systems.

This changes the evidentiary standard of examination. It is no longer sufficient to demonstrate that controls fired and processes were followed. Institutions must demonstrate that agentic reasoning was evaluated against an authorized semantic baseline before execution. Without that evidence, a clean control trail does not establish that institutional authority was preserved.

5/ Crosswalks to Existing Frameworks

How the 3 C’s align with NIST, the EU AI Act, and the FS AI RMF

The 3 C’s are not a replacement for existing AI governance frameworks. They extend governance into the layer those frameworks structurally do not reach.

The framework provides a reasoning-layer interpretation through which existing regulatory and supervisory frameworks can be applied to agentic AI. The mappings below demonstrate alignment while highlighting where those frameworks do not explicitly govern reasoning-layer behavior. The 3 C’s name what existing frameworks must accommodate to reach the layer at which agentic risk originates.

Three crosswalks follow. The first maps the 3 C’s to the NIST AI Risk Management Framework. The second maps the 3 C’s to the high-risk system requirements under the EU AI Act. The third maps the 3 C’s to the Cyber Risk Institute Financial Services AI Risk Management Framework, the most recent sector-specific framework for banks. Together they demonstrate that the 3 C’s do not displace existing governance. They extend it.

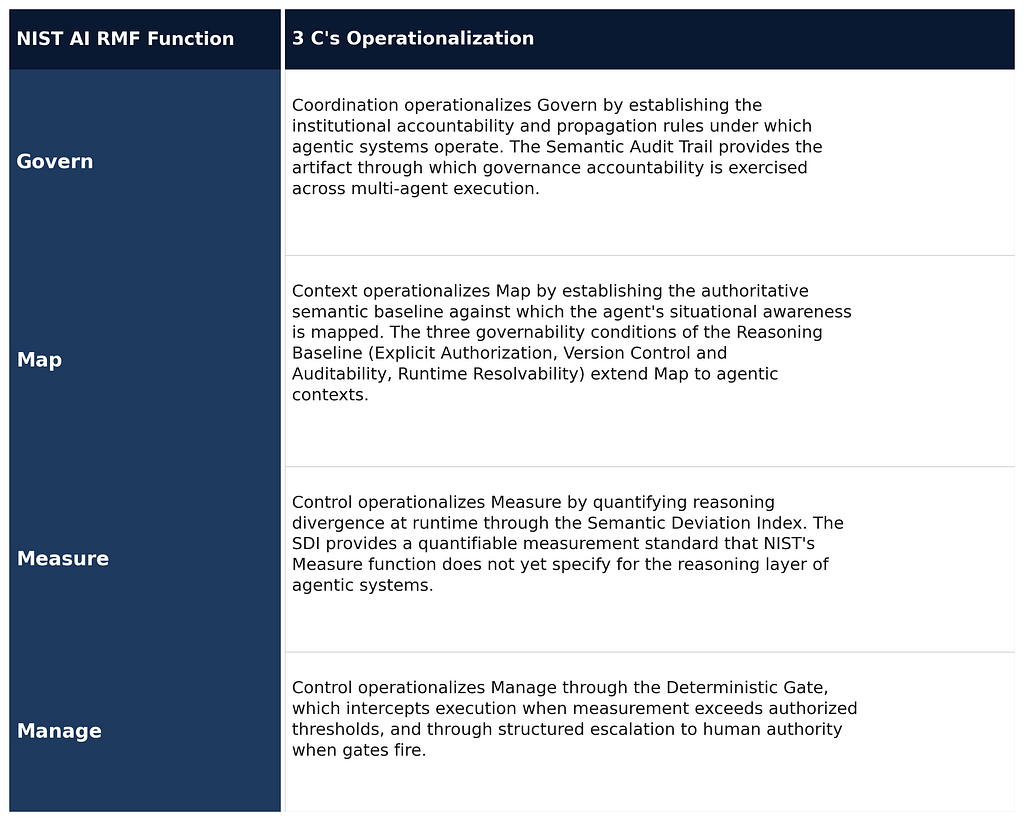

NIST AI Risk Management Framework

I. NIST AI RMF crosswalk to the 3 C’s

How Govern, Map, Measure, and Manage operationalize through Context, Control, and Coordination

NIST AI Risk Management Framework functional crosswalk to the 3 C’s. Each NIST function (Govern, Map, Measure, Manage) has a corresponding operational mechanism within the 3 C’s.

SOURCE: NIST AI Risk Management Framework (AI RMF 1.0); Doyle-Spare (2026)

EU AI Act, High-Risk Systems

J. EU AI Act article-level crosswalk

How the high-risk system requirements of the EU AI Act map to the 3 C’s mechanisms

EU AI Act article-level crosswalk to the 3 C’s. Mapping illustrates conceptual alignment between the high-risk system requirements of the Act and the runtime governance mechanisms specified by the 3 C’s.

SOURCE: Regulation (EU) 2024/1689 (EU AI Act); Doyle-Spare (2026). Crosswalk illustrative, not legal interpretation.

CRI Financial Services AI Risk Management Framework

K. FS AI RMF crosswalk to the 3 C’s

How the 230 Control Objectives of the sector framework anchor on Context, Control, and Coordination

CRI Financial Services AI Risk Management Framework crosswalk to the 3 C’s. The 3 C’s specify the reasoning-layer operating conditions that the FS AI RMF’s control objectives require for agentic systems.

SOURCE: Cyber Risk Institute, Financial Services AI Risk Management Framework v1.0 (Feb 2026); Doyle-Spare (2026)

Reasoning-layer risk does not introduce a new form of failure. It exposes a dependency that already existed: that institutional outcomes depend on meaning being resolved consistently before execution. Agentic AI makes that dependency explicit, and therefore governable.

This is why the 3 C’s are required. Not as a replacement for any existing framework, but as the operating principles that extend governance to the reasoning layer those frameworks structurally do not reach.

6/ Where This Goes Next

The decision that closes the gap

The infrastructure required is largely already in place. The governance decision is what is missing.

The 3 C’s establish governance at the reasoning layer that existing frameworks were not designed to govern. They name the operating principles that determine whether agentic execution is aligned with institutional authority before it happens.

Reasoning-layer failure cannot be addressed at the model layer. It cannot be addressed at the control surface. It must be addressed at the reasoning layer where meaning is formed.

The 3 C’s define the conditions under which that reasoning can be trusted before execution. Without them, institutions will continue to operate systems that are technically correct, operationally complete, and institutionally wrong.

The companion working paper on the Semantic Control Plane formalizes the runtime enforcement architecture. The decision to begin governing the reasoning layer is what closes the gap between what existing frameworks specify and what agentic AI now requires.

Framework Reference

Core Principles

Context. The operating principle that establishes the authoritative meaning the agentic system is permitted to resolve against.

Control. The operating principle that constrains how the agentic system is permitted to act on resolved meaning. Operationalized through SDI measurement, Deterministic Gate enforcement, and structured escalation.

Coordination. The operating principle that governs how meaning, authority, and execution propagate across multiple agents and enterprise systems.

Reasoning Layer. The orchestration and inference layer at which agentic systems resolve context across enterprise systems before execution.

Reasoning Baseline. The set of authoritative institutional definitions the agent is permitted to resolve against at runtime. To be governable, it must satisfy Explicit Authorization, Version Control and Auditability, and Runtime Resolvability.

Pre-Execution Assurance. The governance standard requiring verification that an agentic system’s reasoning has resolved against authorized meaning before execution proceeds.

Two Questions of Control. The supervisory examination test for control effectiveness in agentic AI. First: did the control fire? Second: did the control evaluate the agent’s reasoning against an authorized definition?

Connected Constructs

Agentic Workflow Drift (AWD). The unintentional emergence of synthesized operating logic from inconsistencies across enterprise platforms when Context is absent.

Agentic Workflow Subversion (AWS). The deliberate or cascading exploitation of drift when Control and Coordination are absent.

Semantic Deviation Index (SDI). The runtime measurement standard for the divergence between the meaning the agent has resolved and the meaning the institution has authorized.

Deterministic Gate. The hardcoded software boundary that intercepts execution when the SDI exceeds an authorized threshold.

Invisible Failure. The condition in which every control fires correctly but the institution is still wrong, because the controls evaluated against a definition that has already shifted from what was authorized.

Semantic Audit Trail. The governance record that logs the authoritative semantic baseline, SDI scores, gate decisions, and propagation across agents and systems.

Agentic Blast Radius. The scope of downstream systems an agent can affect if drift or subversion occurs. Contained through Coordination.

Governance Latency. The lag between agentic execution speed and the institution’s capacity to verify execution against authorized meaning.

Agentic Governance Model (AGM). The architecture that operationalizes the 3 C’s in financial services environments.

Semantic Control Plane (SCP). The runtime enforcement architecture that operationalizes the 3 C’s at enterprise scale.

Citation

Doyle-Spare, M. (2026). Championship Strategy for Agentic AI: The Foundation of the Semantic Control Plane. Reasoning Layer Risk (Substack). Supporting working papers: SSRN Nos. 6459612, 6531238, and 6674761.

Author’s Note

This article is a refreshed and expanded version of “Super Bowl Strategy for Agentic AI: Context, Control, and Coordination,” first published by the author on LinkedIn on February 3, 2026, which introduced the Agentic 3 C’s Framework. The connected constructs referenced throughout this article were formalized in the author’s subsequent SSRN working papers: the Semantic Control Plane, Agentic Workflow Drift, and Agentic Workflow Subversion in SSRN Working Paper №6459612 (March 2026); the Semantic Deviation Index, Deterministic Gate, Agentic Blast Radius, and Semantic Audit Trail in SSRN Working Paper №6531238 (April 2026); and the formal articulation of the Agentic 3 C’s Framework in SSRN Working Paper №6674761 (April 2026).

About the Author

Maureen Doyle-Spare is an independent practitioner and researcher in AI governance and banking controls. She is the originator of the Agentic Workflow Drift framework, first introduced in March 2026, and the connected frameworks of Agentic Workflow Subversion, the Semantic Control Plane, the Semantic Deviation Index (SDI), the Deterministic Gate, the Agentic Blast Radius, the Semantic Audit Trail, and the Agentic 3 C’s Framework, collectively anchored by the Doyle-Spare Agentic Governance Model (AGM).

Intellectual Property Notice

All frameworks, terminology, and constructs referenced in this article, including the Agentic 3 C’s Framework, Agentic Workflow Drift, Agentic Workflow Subversion, the Semantic Control Plane, the Semantic Deviation Index, the Deterministic Gate, the Agentic Blast Radius, the Semantic Audit Trail, the Agentic Governance Model, Pre-Execution Assurance, Two Questions of Control, Invisible Failure, Reasoning Baseline, Governance Latency, and Reasoning Layer Risk, are the intellectual property of Maureen Doyle-Spare, formalized in SSRN Working Papers №6459612, 6531238, and 6674761.

© 2026 Maureen Doyle-Spare. All rights reserved.

ORCID: 0009-0009-6655-1394

SSRN Author Page: https://papers.ssrn.com/sol3/cf_dev/AbsByAuth.cfm?per_id=10836296

OSF Research Program: https://osf.io/zuacj/

ResearchGate: https://www.researchgate.net/profile/Maureen-Doyle-Spare?ev=prf_overview

Substack: maureendoylespare.substack.com

LinkedIn: linkedin.com/in/maureendoylespare

Championship Strategy for Agentic AI was originally published in Towards AI on Medium.