By Sudheer Narra · Co-Founder and CTO, InclusiveAI | CognitiveBotics

May 2026 · 8 min read · myinclusive.ai | cognitivebotics.com

The Problem We Set Out to Solve

At CognitiveBotics, our mission is to make structured early intervention accessible to every child on the autism spectrum — regardless of geography, therapist availability, or economic circumstance. As we scaled across India and the UAE, one challenge became increasingly clear: content personalization at scale is fundamentally a hard engineering problem when your users are children with Autism Spectrum Disorder (ASD).

Unlike traditional e-learning platforms where content is largely static and user behavior is relatively predictable, children with ASD present with high intra-individual variability. Two children at the same developmental age may have completely divergent sensory profiles, attention spans, prompt dependency levels, and modality preferences. A child who responds well to animated visual prompts may show complete inattention to speech-driven cues — and this profile can shift week to week as the child progresses through therapy.

Architecture: The Modalities Engine

Our answer was the CognitiveBotics Modalities Engine — a multi-modal content delivery and personalization layer built on top of our core Learning Management System (LMS). Rather than treating content as static media assets, we modeled each learning objective as a parameterized instructional unit that can be rendered across three primary modalities: Speech, Vision, and Animation.

Each learning objective in our system is defined by:

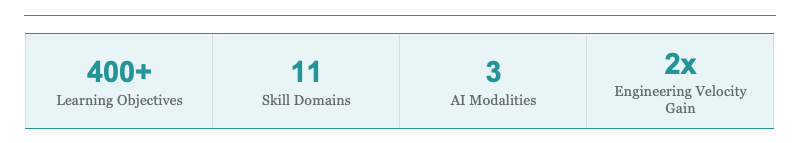

- A skill taxonomy node mapped to our curriculum graph (11 foundational skill domains, 400+ learning objectives)

- A prompt hierarchy aligned to Applied Behavior Analysis (ABA) principles — from full physical prompts to fully independent responses

- A modality weight vector that is updated in real time based on the child’s response data
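As a rough illustration of the three attributes above, a learning objective could be modeled as a small record. This is a minimal sketch, not the production schema: the class names, the intermediate prompt levels, the learning rate, and the update rule are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class PromptLevel(IntEnum):
    """Illustrative ABA prompt hierarchy, most to least intrusive.
    Only the two endpoints come from the article; the middle levels are assumed."""
    FULL_PHYSICAL = 0
    PARTIAL_PHYSICAL = 1
    MODEL = 2
    GESTURAL = 3
    VERBAL = 4
    INDEPENDENT = 5


MODALITIES = ("speech", "vision", "animation")


@dataclass
class LearningObjective:
    objective_id: str
    skill_domain: str  # node in the curriculum graph
    prompt_level: PromptLevel = PromptLevel.FULL_PHYSICAL
    modality_weights: dict = field(
        default_factory=lambda: {m: 1.0 / len(MODALITIES) for m in MODALITIES}
    )

    def update_weight(self, modality: str, engagement: float, lr: float = 0.2) -> None:
        """Move one weight toward an engagement score in [0, 1], then renormalize.
        The update rule is a hypothetical stand-in for the real-time update."""
        if modality not in self.modality_weights:
            raise KeyError(modality)
        weights = self.modality_weights
        weights[modality] += lr * (engagement - weights[modality])
        total = sum(weights.values())
        for name in weights:
            weights[name] /= total
```

Calling `update_weight("animation", 1.0)` on a fresh objective raises the animation weight above the other two while keeping the vector normalized.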

At runtime, our content orchestration layer selects the optimal modality — or combination of modalities — for each trial, using a multi-armed bandit reinforcement learning framework. This means the system continuously explores and exploits modality preferences, converging on the highest-engagement delivery format for each individual child without requiring manual therapist configuration.
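The explore/exploit loop can be sketched with Thompson sampling, one common multi-armed bandit strategy. The article does not say which bandit algorithm is used, so the choice of Thompson sampling, the binary "engaged" reward, and every name below are assumptions for illustration.

```python
import random


class ModalityBandit:
    """Thompson sampling over modalities with a Beta posterior per arm."""

    def __init__(self, modalities, seed=None):
        self._rng = random.Random(seed)
        # Beta(1, 1) prior per arm, stored as [alpha, beta].
        self.posterior = {m: [1, 1] for m in modalities}

    def select(self):
        # Draw one plausible engagement rate per arm and pick the best draw.
        samples = {
            m: self._rng.betavariate(a, b) for m, (a, b) in self.posterior.items()
        }
        return max(samples, key=samples.get)

    def update(self, modality, engaged):
        # An engaged trial increments alpha, a non-engaged trial increments beta.
        self.posterior[modality][0 if engaged else 1] += 1


def simulate(true_rates, trials=500, seed=0):
    """Run the bandit against made-up engagement rates and count selections."""
    rng = random.Random(seed)
    bandit = ModalityBandit(true_rates, seed=seed)
    pulls = {m: 0 for m in true_rates}
    for _ in range(trials):
        modality = bandit.select()
        pulls[modality] += 1
        bandit.update(modality, rng.random() < true_rates[modality])
    return pulls
```

With simulated rates such as `{"speech": 0.2, "vision": 0.3, "animation": 0.8}`, most selections drift toward the highest-engagement arm, while the weaker arms are still sampled occasionally.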

Modality 1: Custom Pediatric Speech Recognition

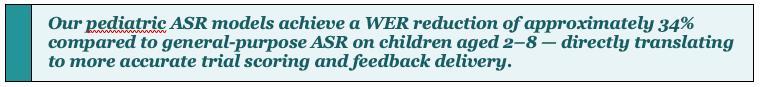

Speech is one of the most technically complex modalities in our stack, for a reason that is not immediately obvious: children’s vocal frequency profiles are fundamentally different from those of adults.

The fundamental frequency (F0) of adult speech typically ranges from 85–180 Hz for males and 165–255 Hz for females. Children aged 2–8 produce F0 values in the range of 250–400 Hz, with significantly higher formant frequencies (F1, F2) due to shorter vocal tract length. Most off-the-shelf ASR models — including Whisper, wav2vec 2.0, and many commercial APIs — are trained predominantly on adult speech corpora, resulting in significantly degraded Word Error Rates (WER) on young children’s speech.
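For readers unfamiliar with the metric, Word Error Rate is the word-level edit distance between a reference transcript and the recognizer output, divided by the number of reference words. The sketch below is a plain implementation of that definition; the lowercase, whitespace-split normalization is an assumption, and real evaluations usually normalize text more carefully.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # Levenshtein distance over words, keeping only the previous row.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)
```

For example, recognizing "the red ball" as "the bed ball" is one substitution over three reference words.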

To address this, we trained our own pediatric ASR models on a curated dataset of children’s speech in English, Hindi, Telugu, Tamil, and Arabic, using Vosk as our base ASR framework and SarvamAI for Indian language support. Key techniques applied:

- Spectral augmentation during training to improve robustness across children’s voice variability

- Transfer learning from child-speech corpora including CMU Kids and PF-STAR, fine-tuned on in-house recordings

- Noise-robust feature extraction using log-Mel filterbank features to handle real-world therapy center acoustics

- Arabic support via Voxtral, making us the only platform serving MENA early intervention with language-native speech evaluation
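The spectral augmentation step in the list above can be illustrated with SpecAugment-style masking, which blanks one random band of frequency bins and one random run of time frames in a log-Mel spectrogram. The article does not specify the exact augmentation policy, so the mask sizes, the zero fill value, and the function name are assumptions.

```python
import random


def spec_augment(spec, max_freq_mask=8, max_time_mask=10, seed=None):
    """Return a masked copy of `spec`, a list of frames of log-Mel bins.

    One frequency band and one time span are set to 0.0 (assumed fill value).
    """
    rng = random.Random(seed)
    n_frames, n_bins = len(spec), len(spec[0])
    out = [frame[:] for frame in spec]  # leave the input untouched

    # Pick the width and start of the frequency mask.
    f_width = rng.randint(0, min(max_freq_mask, n_bins))
    f_start = rng.randint(0, n_bins - f_width)
    # Pick the width and start of the time mask.
    t_width = rng.randint(0, min(max_time_mask, n_frames))
    t_start = rng.randint(0, n_frames - t_width)

    for frame in out:
        for j in range(f_start, f_start + f_width):
            frame[j] = 0.0
    for i in range(t_start, t_start + t_width):
        out[i] = [0.0] * n_bins
    return out
```

Applying a fresh random mask per training example exposes the model to partially missing spectral and temporal information, which is the robustness effect the bullet describes.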

Modality 2: On-Device Computer Vision with Heuristic Mapping

Our Vision modality powers gaze detection, pose estimation, and imitation-based learning objectives. We use MediaPipe as our core inference framework for real-time facial landmark detection, hand gesture recognition, and pose estimation, running entirely on-device via edge inference — a deliberate architecture decision with significant privacy and performance implications.

By running vision models at the edge, we eliminate the need to transmit raw video or image data to our servers. This is not merely a performance optimization; it is a privacy-by-design decision ensuring compliance with HIPAA, COPPA, and GDPR without requiring data processing agreements for biometric data. No facial imagery ever leaves the device.

For learning objectives requiring visual classification, we use TensorFlow Lite models quantized to INT8 for efficient on-device inference, achieving sub-100ms latency on mid-range Android devices.
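To show what INT8 quantization does to a tensor, the sketch below applies affine quantization by hand: floats are mapped to integers in [-128, 127] using a scale and a zero point. In practice the conversion tooling performs this step, so this is only an illustration of the arithmetic, with hypothetical function names.

```python
def quantize_int8(values):
    """Map floats to int8 codes using real = (q - zero_point) * scale."""
    # The representable range must include 0.0 so that zero maps exactly.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255.0 or 1.0  # fall back to 1.0 for all-zero input
    zero_point = round(-128 - lo / scale)
    codes = [max(-128, min(127, round(v / scale) + zero_point)) for v in values]
    return codes, scale, zero_point


def dequantize_int8(codes, scale, zero_point):
    """Recover approximate floats from int8 codes."""
    return [(q - zero_point) * scale for q in codes]
```

Round-tripping a small weight vector shows each value recovered to within about one quantization step (`scale`), which is the precision traded for a 4x smaller model and faster integer arithmetic.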

The Heuristic Mapping Layer

Raw pose and gaze outputs from MediaPipe are inherently noisy — a child’s attention gaze is not a clean binary signal. We built a rule-based heuristic engine that sits between raw model outputs and clinical scoring:

- Temporal smoothing using exponential moving averages over gaze vectors to reduce false-positive attention signals

- Configurable dwell time thresholds to distinguish intentional fixation from incidental gaze

- Gesture confidence score mapping to prompt dependency levels in the ABA prompt hierarchy

- Graceful occlusion handling — critical when children frequently look away or cover the camera
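The smoothing and dwell-time rules above can be sketched as a small stateful filter. This is a simplified illustration under stated assumptions: the gaze vector is reduced to a scalar on-target score in [0, 1], occluded frames are passed as `None`, and the class name and all threshold values are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class GazeFixationDetector:
    alpha: float = 0.3                 # EMA smoothing factor (assumed)
    on_target_threshold: float = 0.6   # smoothed score that counts as on target
    dwell_frames: int = 5              # consecutive frames required for fixation
    _ema: float = field(default=0.0, init=False)
    _run: int = field(default=0, init=False)

    def update(self, on_target_score):
        """Feed one frame; return True once an intentional fixation is confirmed."""
        if on_target_score is None:
            # Occluded frame: keep the current state and report no new evidence.
            return False
        # Exponential moving average damps single-frame spikes.
        self._ema = self.alpha * on_target_score + (1 - self.alpha) * self._ema
        if self._ema >= self.on_target_threshold:
            self._run += 1
        else:
            self._run = 0
        return self._run >= self.dwell_frames
```

With these example settings, a single-frame spike never registers as attention, while a sustained on-target gaze is confirmed after a short delay set by `alpha` and `dwell_frames`.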

Modality 3: Sensory-Safe Animation Games

Perhaps the most nuanced engineering challenge in our stack is the Animation modality. Children with ASD frequently present with sensory processing differences, such as hypersensitivity to high-contrast visuals, fast motion, sudden audio cues, or screen flicker. Standard gamification frameworks optimized for neurotypical children can actively increase anxiety and reduce engagement in children with ASD.

Our animation engine is built on Three.js and React Three Fiber, giving us fine-grained control over every visual parameter. Our internal UX research team — working alongside occupational therapists and behavioral analysts — defined a sensory design system governing:

- Maximum contrast ratios capped at levels proven not to trigger visual hypersensitivity

- Animation velocity curves using ease-in-out bezier curves rather than linear or snap transitions, reducing motion shock

- Reward animation timing: positive reinforcement animations are brief (< 1.5 seconds), non-looping, and triggered only on independent correct responses

- Desaturated, low-chroma color palettes for backgrounds; high-chroma accent colors reserved only for target stimuli based on ABA visual salience principles

- Spectrally flat audio reinforcement with volume normalization to prevent sudden loudness changes
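Two of the rules above lend themselves to a short sketch: an ease-in-out velocity curve and a contrast-ratio check. The easing function is a standard cubic ease-in-out used as a stand-in for the bezier curves mentioned above, and the contrast check uses the WCAG relative-luminance formula. The article does not state its cap values, so the cap is left as a caller-supplied parameter and the function names are hypothetical.

```python
def ease_in_out_cubic(t: float) -> float:
    """Progress in [0, 1] that starts and ends with zero velocity."""
    t = max(0.0, min(1.0, t))
    return 4 * t ** 3 if t < 0.5 else 1 - ((-2 * t + 2) ** 3) / 2


def _linearize(channel_8bit: int) -> float:
    """Convert an 8-bit sRGB channel to linear light."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb) -> float:
    r, g, b = (_linearize(c) for c in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg, bg) -> float:
    """WCAG contrast ratio, from 1.0 (identical) to 21.0 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(fg), relative_luminance(bg)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


def within_contrast_cap(fg, bg, cap: float) -> bool:
    """True when the pair stays at or below a caller-supplied contrast cap."""
    return contrast_ratio(fg, bg) <= cap
```

A check like `within_contrast_cap` could run when assets are authored, so that a color pair exceeding the configured cap is rejected before it reaches a child's screen.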

Every animation asset in our library is procedurally parameterized — we do not use pre-rendered video assets. This allows us to dynamically adjust visual parameters at render time based on the child’s individual sensory profile, without maintaining a combinatorially exploding library of asset variants.
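One way to picture render-time parameterization is a function that blends between a default preset and a gentler preset according to the child's sensory profile. This is a minimal sketch: the profile fields, the 0 to 1 sensitivity scale, and every preset number are illustrative assumptions, not values from our design system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SensoryProfile:
    # Sensitivities on an assumed scale from 0.0 (none) to 1.0 (high).
    motion: float
    contrast: float
    audio: float


@dataclass(frozen=True)
class RenderParams:
    transition_seconds: float
    max_contrast_ratio: float
    accent_saturation: float
    reward_volume: float


# Illustrative presets only; real limits would come from clinical review.
DEFAULT = RenderParams(0.4, 7.0, 0.9, 0.8)
GENTLE = RenderParams(1.2, 3.0, 0.5, 0.3)


def _lerp(a: float, b: float, t: float) -> float:
    """Linear interpolation with t clamped to [0, 1]."""
    t = max(0.0, min(1.0, t))
    return a + (b - a) * t


def resolve_render_params(profile: SensoryProfile) -> RenderParams:
    """Blend each parameter toward the gentle preset as sensitivity rises."""
    return RenderParams(
        transition_seconds=_lerp(
            DEFAULT.transition_seconds, GENTLE.transition_seconds, profile.motion),
        max_contrast_ratio=_lerp(
            DEFAULT.max_contrast_ratio, GENTLE.max_contrast_ratio, profile.contrast),
        accent_saturation=_lerp(
            DEFAULT.accent_saturation, GENTLE.accent_saturation, profile.contrast),
        reward_volume=_lerp(
            DEFAULT.reward_volume, GENTLE.reward_volume, profile.audio),
    )
```

Because the parameters are computed per child at render time, a single asset definition can serve every sensory profile instead of requiring one pre-rendered variant per combination.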

Engineering Velocity: AI-Assisted Content Development

The CognitiveBotics engineering team uses a suite of AI-assisted development tools embedded directly into our custom Content Management Platform (CMP). Over the past six months, we have seen a 2× increase in content engineering throughput, driven by several key integrations:

- LLM-assisted curriculum authoring: a fine-tuned content generation model generates first drafts of learning objective descriptions and prompt scripts, reducing average authoring time from ~45 minutes to ~18 minutes per objective

- Automated asset tagging: computer vision models automatically tag uploaded visual assets with sensory attributes (contrast level, motion intensity, color temperature), feeding directly into the modality selection engine

- Code generation for modality templates: GitHub Copilot integrated with our internal component library generates boilerplate modality scaffold code, reducing implementation time for new objective types

- Automated regression testing: a custom test harness runs 400+ visual and behavioral regression tests across our modality engine on every deployment

What’s Next

We are currently expanding our modalities engine in three directions:

- Adaptive Prompt Fading — using reinforcement learning to automate prompt hierarchy decisions in real time, reducing therapist manual adjustments while maintaining clinical precision.

- Multilingual NLP for Parent Reporting — using fine-tuned LLMs to generate parent-facing progress summaries in Hindi, Telugu, Tamil, and Arabic from raw session data, removing the language barrier that limits parent engagement in tier 2 and 3 Indian cities.

- Federated Learning for Model Improvement — exploring privacy-preserving federated learning approaches to improve our pediatric ASR and vision models across our growing user base, without centralizing any child data.
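For the federated learning direction, the core server-side step in the widely used FedAvg scheme is a sample-weighted average of model updates computed on each device. The sketch below shows only that aggregation step on flat weight lists. It is an assumption about how such a system might work, not a description of a design we have settled on.

```python
def federated_average(client_updates):
    """Average client weights, weighted by each client's sample count.

    client_updates: list of (weights, n_samples) pairs, where weights is a
    flat list of floats. Only the weights are shared, never the raw data.
    """
    if not client_updates:
        raise ValueError("need at least one client update")
    total = sum(n for _, n in client_updates)
    if total <= 0:
        raise ValueError("total sample count must be positive")

    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for weights, n in client_updates:
        if len(weights) != dim:
            raise ValueError("all clients must send weights of the same size")
        for i, w in enumerate(weights):
            averaged[i] += w * n / total
    return averaged
```

For instance, `federated_average([([1.0, 2.0], 1), ([3.0, 4.0], 3)])` weights the second client three times as heavily as the first.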

Closing Thoughts

Building technology for children with autism requires a different engineering philosophy than most consumer or enterprise software. Every technical decision, from ASR model architecture to animation velocity curves, carries a direct clinical consequence. The margin for error is low; the stakes for the children and families we serve are high.

What we have built at CognitiveBotics is not just a content delivery platform; it is a clinically informed, multi-modal, adaptive learning system designed to meet each child exactly where they are. We are still early in that journey, but the engineering foundation we have laid gives us confidence that we can scale this to every child who needs it.

Building a Personalized Content Engine for Children with Autism: A Technical Deep Dive was originally published in Towards AI on Medium.