Learning zero, and what SLT gets wrong about it

This is a first in a pair of posts I’m hoping to write about Singular Learning Theory (SLT) and singularities as a model of data degeneracy. If I get to it, the second post is going to be more general-audience; this one is more technical.

Introduction

To me, SLT is an important source of toy models which point at an interesting class of new statistical phenomena in learning. It is also a valuable correction to an older and (at this point) largely-defunct story of learning being fully controlled by Hessian eigenvalues and “nonsingular basins”. Practitioners of SLT have been instrumental for developing and refining the practice of Bayesian sampling (used by physicists in papers like this one) to empirical models. And the theory’s founder Sumio Watanabe is a once-in-a-generation genius who saw and mathematically justified crucial statistical and information-theoretic concepts in ML long before they appeared in “mainstream” theory.

However there is a frequently repeated statement in SLT papers – one that doesn’t affect empirical results – which I think is wrong in a load-bearing way. This is the statement that models that appear in machine learning are singular in the infinite-data limit, and that a measurement associated with this singularity, called the RLCT, controls generalization and free energy in cases of interest.

This isn’t a fixable detail, but rather an unavoidable structural issue I’ll spell out below. I think it’s unfortunate that an elegant and useful theory is linked with an incorrect statement, and it causes potential for future disappointment and for research stuck in a less-useful direction. Many theory and empirics results associated with SLT are important and, I think, interpretability-relevant, independently of the question of whether singularity “explains degeneracy” in practice. As I’ll explain below, I think that singular models correctly occupy the same role as symmetries in condensed-matter physics. Many key phenomena, in particular symmetry-breaking phase transitions, were originally discovered in more idealized models with symmetry, but the resulting physical phenomenology extends to a huge class of phase transitions of models with no symmetry involved.

I’ve explained to several people the key arguments for why the singularity story is flawed. Several (most recently Yevgeny Liokumovich, to whom I’m indebted for really good discussions) liked the key example and asked me to write this up – so I will do so in this post. In this post I’ll focus on the more subtle second part of the statement (the first part – whether ML models are singular at all in the infinite-data limit – is the subject of the future companion post). I’ll show that the true degeneracy, correctly measured by the lambda-hat parameter,[1] is in fact much stronger in realistic learning contexts, and hence generalization behaves much better than a purely singularity-based prediction would imply.

The key insight of this post is contained in the Hermite mode graph below. It shows that even upon biasing the odds in favor of the SLT theoretical model: looking at a clean, singular model where the SLT limit is understood, its regime of applicability only sets in at astronomically large (roughly exponential in model size) data. At any realistic data scale, the load-bearing structures that control degeneracy cannot be (at least exclusively) associated with singularities, and require a distinct thermodynamic notion of “effective theory” which is not purely geometric.

What doesn’t need fixing

First, let me say some things that I believe to be true quite generally, and do not need fixing in the SLT story:

- Model generalization in the Bayesian regime is controlled by measurements of free energy of a low-loss basin (i.e. the region of the loss landscape where the loss is between two values and where is the best possible loss in the basin).

- Understanding this low-loss basin for different , and understanding its geometric, physical and information-theoretic properties is valuable for interpretability. In particular there is much evidence that in practice, learning-relevant phenomena in Bayesian settings occur also in other kinds of learning (such as SGD).

- For models that generalize well, this basin will tend to be larger. (There is a version of this that is a theorem.)

- The size of this basin at a given loss sensitivity parameter can be measured via the “lambda-hat estimator”, which is often (informally) called the (estimated) “learning coefficient” in SLT papers[2] (and converges to the true lambda-hat value in a suitable limit).

What’s wrong

What is false here is that the measured value lambda-hat captures information that is primarily the geometry of a singularity in any cases of interest. In particular the nomenclature “learning coefficient” (which is a geometric invariant of a singularity) is incorrect in almost all settings.

There are two settings where identifying lambda-hat with geometric information makes sense:

- A version of it is true and useful for highly-symmetric tasks on linear models and for shallow quadratic models (for example in this paper).

- A version of this is true for models of very low dimension (order of magnitude of perhaps 20 parameters), as seen in e.g. this paper.

However outside of these two cases singularities are not the right measurement to understand generalization, at least when taken by themselves. The key issue (that I hope to expand a bit in the companion post) is that the phenomenon of a loss function being singular is unstable. This is similar to how a general continuous function on an interval is not a polynomial (though it can be arbitrarily well approximated by a polynomial spline). Thus asking “what is the singularity controlling generalization of a task” can be similar to asking “what is the polynomial degree of the function “. There may be invariants similar to polynomial degree that are interesting, but the notion of degree by itself as a nice algebraic invariant breaks down.

I think that to see how the singularity story breaks down it’s best to look at a setting where the theory actually works perfectly in the limit, but exceptions (1) and (2) above don’t hold. This means that we should look at a model:

- With non-polynomial activations and

- With (mildly) high parameter dimension.

We’ll furthermore take a setting with a nontrivial and well-understood singularity.

The best example here is to consider a 2-layer model learning the zero function

Here the story I’ll tell depends a lot on the activation function (though the general “generalization behavior” is broadly activation-independent at more permissive values of ). Let’s choose the activation function (this is associated to a cleaner energy function as we’ll see). To make life easier, let’s write down a model without biases[3]:

Here are h-dimensional vectors ( for “hidden dimension”).

In particular the parameter dimension in this case is To have “moderately high parameter dimension” let’s take h = 128, so .

The theory

So far we haven’t talked about data. When we have a learning problem, we should have a data distribution on inputs . In this case the inputs are in a 1-dimensional space and a natural distribution is the normal (Gaussian) distribution of variance one:

In particular when we are talking about loss singularities, we care about the infinite data loss. In other words loss averaged over all drawn from this distribution. It is nevertheless conventional to also include a parameter in SLT analysis, which is the number of training samples. Recall that I used a “basin height” value to define the basin volume (if we directly set , the volume is zero and the entropy is ). In physics, this is called the temperature[4].

Infinite data, and the parameters and .

In SLT work, the value is replaced by a multiple of .[5] Importantly, Watanabe proves that in order to get accurate information about the loss minima within accuracy, one needs on the order of datapoints (up to some logarithmic factors that we can ignore – for mathematicians, our asymptotic notation should be read as “tilde-notation” here). While this is proven at an asymptotic scale, it is borne out at realistic scale in toy models (more generally one direction of the associated inequality can be established in much more generality).

This means that typically for a deep network, measuring the infinite-data loss of a given neural net to accuracy requires on the order of data points. It turns out that for one-layer networks, one can often circumvent actually taking data averages and compute the infinite-data loss directly, or at least to exponential accuracy. Indeed, the infinite-data loss is an integral, and integrals can often be rewritten via (something like) a Taylor series, with exponential rate of convergence. In the 2-layer case, a nice formula to use is the Gauss-Hermite quadrature formula.

The SLT prediction

Before going on, let’s write down the SLT prediction, which is clean in this case. SLT theory predicts that in the true limit, the value of lambda-hat will stabilize to a known value associated to the singularity, called the RLCT. For a generic loss function, the expectation here is that the RLCT is (this is for example true for the problem of learning a single Hermite mode, such as .[6]

Here we are in a highly symmetric case, and the target has a true degeneracy. Moreover it is singular at the point . The singularity can be well understood here (this is an important SLT result), and we have:

(To see this: note that if we take , the output is always the zero function, no matter what we choose for . This leaves us a degeneracy of dimension , which implies a bound of . There are other 64-dimensional degenerate subspaces, for example ; but we can check by analyzing the singularity that the added degeneracy produced is not strong enough to reduce the RLCT).

This implies that in the limit , the asymptotic lambda-hat measurement must return 64. The key wrinkle shows up when we look at what “the limit” actually entails.

Hermite modes and excitations

For finite , we can heuristically estimate the lambda-hat value, and the number of degrees of freedom, in a different way. The resulting value for measures the effective degeneracy at a finite dataset, and is the relevant quantity for generalization at the corresponding dataset size.

Recall that we’re learning the zero function . This means that in the space of functions, the loss of a given function (maybe given by a weight parameter ) is where is the Gaussian pdf “data distribution” function. We can rewrite this integral as

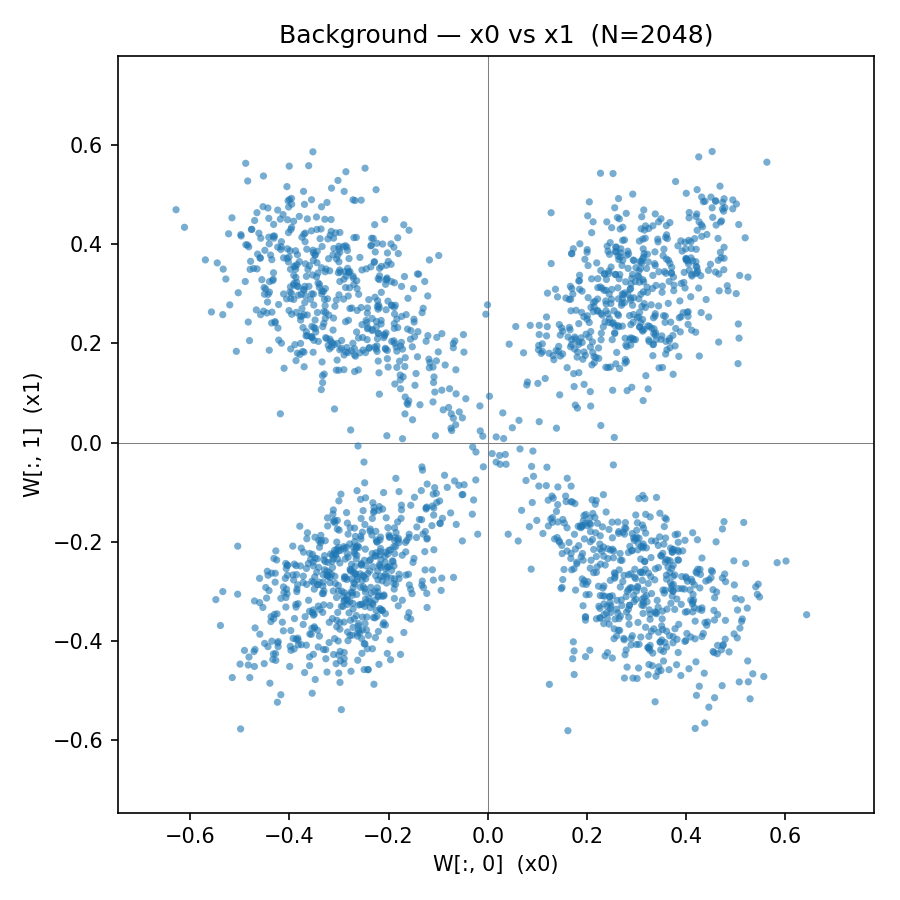

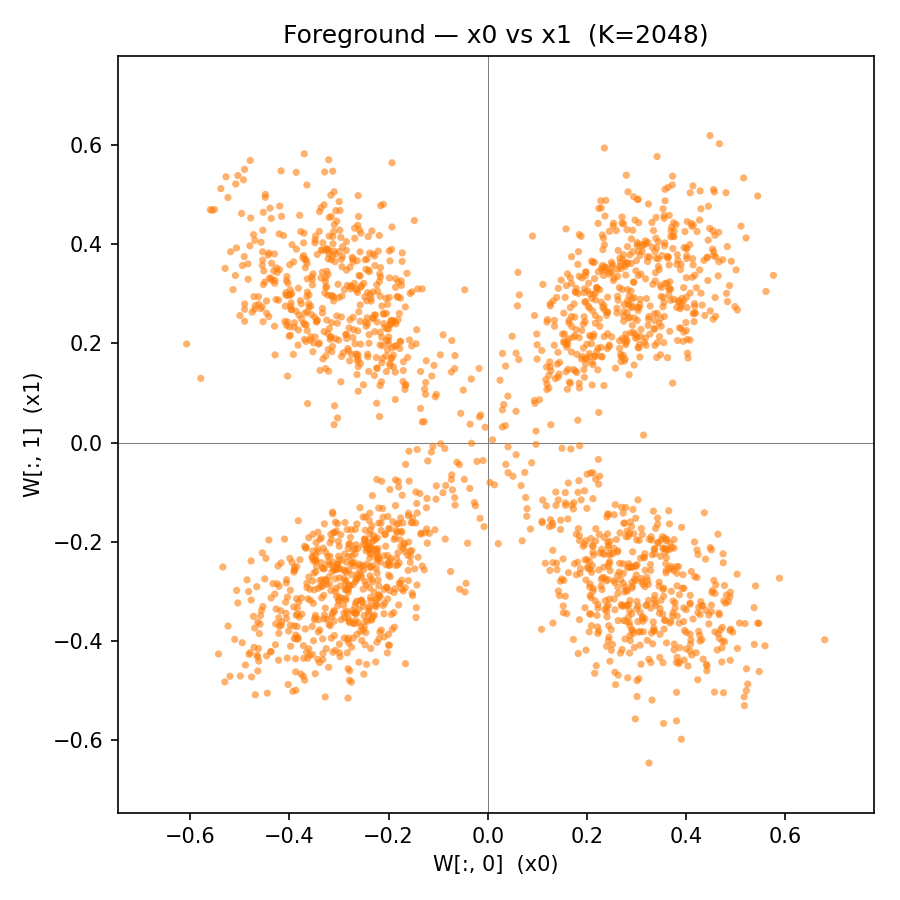

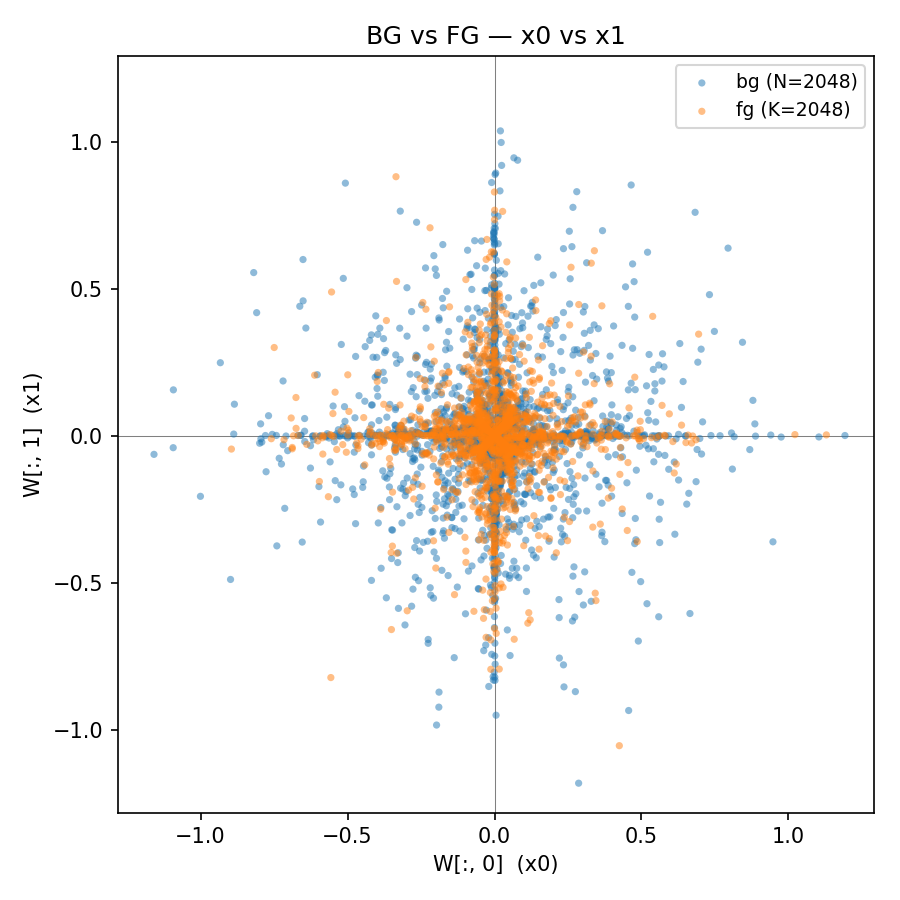

Here the numbers are the coefficients of in an elegant orthonormal basis called the basis of Hermite polynomials. Now for neural nets with an analytic activation function (such as tanh), we can show that this series not only converges but it converges very quickly. In particular, the following is a logarithmic plot of the coefficients for a random neural net of width , where the embedding weights are uniformly chosen between -1 and 1:

The red line is the average across several (blue) samples. Since tanh is odd, only odd Hermite polynomials are nonzero and recorded here. On the right we see that the squared coefficients follow a predicted law of for the kth coefficient.

Note that I’m using the Gauss-Hermite quadrature formula here to compute the infinite-data function to accuracies of this order. In order to get comparable accuracy with random sampling I’d have to use on the order of samples, more than the training corpora for the biggest existing models. The scaling law on the square Hermite coefficients behaves asymptotically like , and we can even theoretically predict the slope[7].

We can think of this situation as a physical system with many different excitation modes indexed by the integer k. Higher modes are “heavier”, or harder to excite. They become relevant only as we require more precision (or “degree of resolution”) of our system – in physics lingo they are “UV modes”.

An upshot for the lambda-hat calculation is that so long as our value n (corresponding to the number of samples or ) is significantly less than , the kth mode doesn’t matter: we can completely ignore loss coming from that mode. In particular, so long as our number of samples is less than or so, the space explored by the model has effectively less than 100 degrees of freedom. By a simple argument we can then deduce that any lambda-hat value measured at datapoints is less than (the measure roughly counts the number of “effective degrees of freedom”). This is important enough to write out explicitly:

Note that (a more careful version of) this inequality is easy to prove rigorously by making formal the Hermite mode decay arguments above.

This inequality shows that we can’t even begin to saturate the “true singularity” RLCT = 64 before exponentially high numbers of data.

This means that we have demonstrated the following observation:

In order to see the true RLCT in a simple 256-parameter singular model, we need a data sample of size

In practice, the free energy (and the associated lambda-hat measurement) is much lower at any “realistic” data scale. This is actually good, and what we expect: it’s saying that “return roughly to 0” is actually very easy to learn, and requires very little data in practice.

Addendum: the actual lambda-hat scaling (Ansatz and experiment)

To prove that the effective degeneracy value undershoots the RLCT, we used an inequality between and which I believe is straightforward to make rigorous. This leads to a natural Ansatz that this is an asymptotic equality up to a suitable factor, and is a quadratic function of . The Ansatz here comes from the Hermite mode scaling: any value of n sees at most Hermite modes as relevant degrees of freedom. If we were to assume these have no relations between them, the lambda-hat scaling would be exactly .

From this we get a prediction that the free energy in this setting is cubic in log-n:

This contrasts with the SLT limit where the free energy is linear in

I ran a sampling experiment to confirm this, and the result agrees with the Ansatz. In the graph the parameter (beta) is what I was calling n here.[8]

Measurements of the lambda-hat values for different values of , on the usual logarithmic scale for working with free energy. The heuristics in this section predict that this function should be quadratic. The image on the right shows lambda-hat as a function of ; the linearity here is the quadratic Ansatz.

It’s worth reiterating that this quadratic behavior is very much an effective phenomenon as we change the scale of interest. In the true singular limit (very large n), this roughly quadratic growth should plateau out at .

Measuring lambda-hat depends on sampling at low temperatures, a notoriously finicky process, so it’s plausible that the measurement in this experiment is flawed. Extrapolating this graph, we would expect the RLCT to plateau to the true value at roughly , or when roughly Hermite modes become relevant.

The effective theory

In the previous sections we have been contrasting a purely geometric SLT prediction with a physical effective theory which posits a sea of coupled excitation modes that gradually become relevant at larger and larger n. Note that the improved theory does not predict that the free energy is captured by the Hessian eigenvalues (thus reducing it to the defunct “classical learning theory” that assumes all basins are quadratic). Indeed, all Hessian directions in our example are exactly zero, and any meaningful theory must see the singularities. The reality here instead is that the singularities are not the whole story. In order to understand the way that a network of any realistic size learns, we need to understand a physical volume. This volume can have “hard” geometry at the limit (singularities). But it can also have “soft” geometry, where for finite values of the valley has real finite “thickness” parameters that scale differently in different regimes. In some cases these can be viewed as generalized and nonlinear “widths” of the basin’s free directions. Since the number of free directions is huge and thicknesses combine multiplicatively, these can (and in our example do) easily dominate the limiting singular structure (if it exists) at any “sub-astronomical” data size. These numerical widths can come from Hessian eigenvalues, or they can happen in a Hessian-flat region and combine with singularities as in the example above. More generally the free energy measurements can see complicated thermodynamic structure nonlinearly coupling numerical size parameters associated to many degrees of freedom.

Physics has a set of tools for understanding such high-dimensional structures that interacts richly with the energy scale, and these physics approaches have been applied in an ML context. The best tools we have tend to come from multi-particle thermodynamical systems, where numerical and geometric degrees of freedom of microscopic particles combine nontrivially to give an effective energy landscape on macroscopic parameters.

One setting where this is done cleanly is the body of knowledge known as “NN field theory”. This theory exists in a large-width limit and is, like SLT, incomplete. But it has the advantage of giving the correct effective theory for models with simple targets which have “reasonably large” size, giving a kind of complement to SLT.

This theory is undergoing active development in a manner that resembles the SLT update with respect to the old “Hessian basin” story. In this case an old story of Gaussian processes is being extended to a much more expressive theory, by expanding around a nontrivial/ strongly-coupled vacuum – aka a mean field theory. In cases where they apply, these theories tend to come pre-equipped with a hierarchy of effective theories that for example extend the Hermite excitation story above.

A theory that combines mean field and singularities is much needed. In fact the existing statistical field theory literature already can account for simple cubic and quartic singularities, called “Airy” and “Pearcey” corrections, respectively, but we currently lack a more unified low-temperature picture that can track general singularities. There’s an interesting potential precedent here: when physicists observed tensions between large symmetry groups and field theory, the resulting notion of gauge theory unified the two fields and generated a new family of insights that transformed modern physics. Building a corresponding theory for singularities – a theory that combines singular phenomena with high-dimensional field theoretic phenomena in neural nets – strikes me as an unusually promising direction for a version of SLT that is empirically faithful while retaining interesting geometric structures (though it should be noted that singularities without additional structure are pretty significantly different from symmetries in a physics setting, and the analogy is not guaranteed not hold up).

Is this example special?

The example is chosen to be clean, but the conclusion doesn’t depend on its cleanness; if anything, the gap between the singular prediction and reality widens in messier settings. Settings where a clean physics story is known are very rare, but similar (and indeed nicer, though slightly less “natural-seeming”) examples exist. The choice of activation function and data distribution generalizes completely: so long as the activation is analytic, it’s relevant in terms of the details of exponential growth rather than its presence. In particular a very nice context to use (that I considered building this post around) is a two-dimensional input distribution restricted to a circle , where the different excitation modes correspond to group representations[9] and the exponential growth of energies at different degrees is cleaner. A similar (though more complicated) story exists for the cube in d-dimensional space.

But moving away from this kind of clean setting cuts in a particular direction: it makes both sides of the picture worse, not just one. Higher-dimensional or less regular data or non-analytic activations give messier spectra[10] (or no clean spectrum at all), and the same features also weaken the singular structure that SLT needs. Genuine singularities for NNs at infinite data are a feature of analytic, low-dimensional models; making the model less clean tends to wash them out. Depth introduces a separate problem. Denoising effects (learned by realistic models) can turn high-loss solutions of a shallow network that are far from a potential singularity into very low-loss solutions that are indistinguishable from singular minima at any sub-astronomical n, even though they are not singular in the SLT sense.

Ultimately I’m sympathetic to SLT phenomena “contributing in a relevant way” to models (more in the next section); but my honest view is that as we move away from this clean example, the possibility of singularity fully explaining degeneracy at realistic scales becomes more and more remote.

The upshot

At the end of the day, my personal view is that loss landscape singularities do matter for realistic models. But the way they matter happens at specific, relatively coarse, values of the cutoff , and in low-dimensional reductions – like the low-dimensional Fourier modes of modular addition, or otherwise localized components of a larger and messier model. They should only be visible once the dominant – but likely less interesting – bulk effects (like the above spectra) have been taken into account. To me this picture is very similar to the story of symmetry in statistical physics. Here symmetry groups typically produce neat and mathematical corrections – factors of 2 and the like – to messier statistical effects special to large systems. Though sub-dominant, the structures associated with symmetry are often instrumental for crystallizing out new structures and phenomena. I also buy Watanabe’s reasoning that singularities, more than symmetry, cause interesting degeneracy and generalization behavior in the statistical physics of learning models. This makes it likely that singularities are a “fundamental idealization” in learning similar to how symmetries are a “fundamental idealization” in physics.

Nevertheless this is speculation. The solid corrective to take home is that degeneracy in realistic models should not be too readily identified with singularities. The SLT notions of “effective degeneracy” and free energy remain valuable whether or not the degeneracy is produced by singular structure, and the lambda-hat estimators that measure these values (always at a finite or effective scale!) remain well-grounded. The effective story is crucial for learning, but is not necessarily geometric. In the cases where these are best understood, and in fact where singularities most cleanly appear, the actual “hard” singular structure is only visible at data sizes larger than the size of the visible universe.

As usual, reality is more complicated than a simple story. And as usual, the simple stories point at real phenomena that are incredibly important for reality.

- ^

The terminology “lambda-hat” for an important and easy-to-measure physical quantity is unfortunate. It seems that physics lacks a more canonical name for it. I’ll stick with lambda-hat for this post.

- ^

Mathematically, the lambda-hat estimator converges to more or less the derivative of the free energy, i.e. roughly the “entropy”, or log volume, of the basin with respect to epsilon: this follows a standard pattern in physics where derivatives of the free energy tend to be easier to compute than the energy itself. The full free energy can be computed by taking a numerical integral of this value (appropriately scaled) over different epsilon. In practice, if we are interested in a ballpark measurement of the free energy, just taking lambda-hat at a single cutoff of interest is sufficient, and tends to be associated with generalization in the way you want.

- ^

This doesn’t matter for the asymptotics here.

- ^

In both physics and SLT, the basin “walls” are not hard step functions at loss = but rather soft “logistic” walls – this doesn’t change the phenomenology in practice.

- ^

The law often has a log factor in SLT papers, so or similar. As it’s a small multiplicative factor compared to , it’s often ignored. When working straightaway with infinite data as we will be doing, the important variable is the “temperature” and setting is a notational choice.

- ^

Note that in our model readout weights are regularized, which leads to the same asymptotic as having readout weights be bounded, so we can’t cheat by for example writing .

- ^

We can in fact read off the exact asymptotic multiple here from the Hermite coefficients of the function. The value here (doubled since we’re looking at the square coordinates) is the minimal distance of a pole of the analytic continuation of the hyperbolic tanh function from the real line: tanh is singular at , since .

- ^

Recall that in our loss valley discussion, the valley’s “height” is a temperature parameter, and is an inverse temperature parameter, often denoted beta.

- ^

I think the circle in particular is a mathematically very nice example. The spectrum there is, in particular, exponential without a square root.

- ^

In particular non-smooth activations like relus have less extreme spectra at infinite data.