I have no good gears-level model of AI, and expert views are all over the place (see AI Doc), so all that remains is my physical intuition and a black-box view. In this case, these rest on two principles (better called rules of thumb):

- Copernicanism, where one assumes that humanity is not unique or special among the stars

- The "Law" of Straight Lines: https://slatestarcodex.com/2019/03/13/does-reality-drive-straight-lines-on-graphs-or-do-straight-lines-on-graphs-drive-reality/, https://www.lesswrong.com/posts/xkRtegmqL2iyhtDB3/the-gods-of-straight-lines and https://www.astralcodexten.com/p/the-sigmoids-wont-save-you

Let's start with the latter. The main argument for x-risk, as I understand it, is that AI will become smarter than us in every way, become an agent with its own goals, and simply take the Earth from us for its own purposes. Given how things have gone so far, this seems like a reasonable possibility. Extrapolating a straight line that far implies visible cosmic consequences: a normal planet or star rather suddenly behaving very unlike what known physics predicts, growing very bright, very dim, or disappearing completely. Copernicanism says that if it can happen here, it probably happens in countless places elsewhere. And yet we do not see it. Why?

Hanson's Grabby Aliens is a proposed way out, where we do not see the aliens coming until they are upon us. This, too, does not survive even a modicum of Copernicanism. The Universe has been around for 14 billion years and our local group of galaxies for almost 10 billion years; the local group contains over a trillion stars, many of them as old as the Sun, and is only 20 million light years across. So if Grabby Aliens had developed anywhere in the local group at any point before the last 0.1% or so of the Universe's lifetime, we would have been eaten already. And maybe we have been, but then the argument is moot.
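A back-of-the-envelope check of that timing, as a minimal sketch: it uses the post's figures (14 billion years, 20 million light years) and assumes, which the post does not state, that a grabby expansion front moves at a fixed fraction of light speed. The helper names are hypothetical.

```python
# Rough check of the Grabby Aliens timing argument, using the post's figures.
# Assumption (not from the post): the expansion front moves at a constant
# fraction of light speed, given here as speed_c.

UNIVERSE_AGE_YR = 14e9       # "around for 14 billion years"
LOCAL_GROUP_SIZE_LY = 20e6   # "only 20 million light years across"


def crossing_time_yr(distance_ly: float, speed_c: float) -> float:
    """Years for a front moving at speed_c (fraction of c) to cover distance_ly."""
    return distance_ly / speed_c


def fraction_of_universe_age(years: float) -> float:
    """Express a time span as a fraction of the Universe's age."""
    return years / UNIVERSE_AGE_YR


for speed in (1.0, 0.5, 0.1):
    t = crossing_time_yr(LOCAL_GROUP_SIZE_LY, speed)
    print(f"v = {speed:.1f} c: {t / 1e6:.0f} Myr to cross the local group, "
          f"{100 * fraction_of_universe_age(t):.2f}% of the Universe's age")
```

Even at a tenth of light speed, crossing the whole local group takes about 1.4% of the Universe's age, a sliver of the roughly 10 billion years the local group has existed.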

So we have a contradiction between the law of straight lines and Copernicanism: we expect visible effects of an AIpocalypse elsewhere, and there are none. The Fermi paradox says that naive Copernicanism fails: we are not like anyone else, to the point where we cannot see anyone else. What gives? Either we are unique and the universe is not a good guide, or the straight lines stop being straight and peter out before Big Bad things happen.

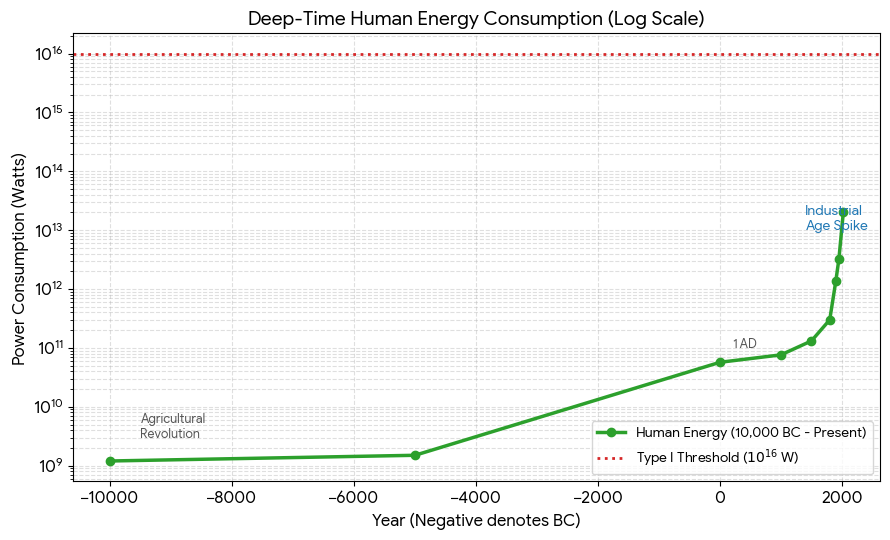

There are still a fair few orders of magnitude between now and an Astronomically Visible Big Bad: probably between 3 (Kardashev I) and 13 (Kardashev II). For comparison, humanity's energy consumption has grown by 7 orders of magnitude so far:

Credit: Gemini.

So, unless we are unique, some limiting factor must kick in somewhere between now and 5 to 7 orders of magnitude from now, a bit less than humanity has scaled so far.
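The "3 to 13 orders of magnitude" figure can be reproduced with a short sketch. The wattages below are assumptions, not from the post: roughly 2×10^13 W for present-day human energy use, 10^16 W for Kardashev I (Sagan's convention; others put it higher), and the Sun's output of about 3.8×10^26 W for Kardashev II. The `years_to_grow` helper is a hypothetical illustration of extrapolating a straight line on a log plot at a fixed annual growth rate.

```python
import math

# Assumed reference values in watts (not from the post).
HUMANITY_W = 2e13         # present-day human energy use, roughly 20 TW
KARDASHEV_I_W = 1e16      # Type I in Sagan's convention; other conventions differ
KARDASHEV_II_W = 3.8e26   # approximate total power output of the Sun


def orders_of_magnitude(target_w: float, current_w: float = HUMANITY_W) -> float:
    """Number of powers of ten between current_w and target_w."""
    return math.log10(target_w / current_w)


def years_to_grow(orders: float, annual_growth: float) -> float:
    """Years of steady exponential growth needed to add `orders` powers of ten.

    A straight line on a log plot means a constant growth rate, so the time is
    ln(10**orders) / ln(1 + annual_growth).
    """
    return orders * math.log(10) / math.log1p(annual_growth)


for name, target in [("Kardashev I", KARDASHEV_I_W), ("Kardashev II", KARDASHEV_II_W)]:
    n = orders_of_magnitude(target)
    # 2% per year is an illustrative growth rate, not a forecast.
    print(f"{name}: {n:.1f} orders of magnitude, "
          f"~{years_to_grow(n, 0.02):.0f} years at 2%/yr")
```

With these inputs the gaps come out at about 2.7 and 13.3 orders of magnitude, in line with the 3 and 13 above; the years column only shows how quickly a steady exponential eats through them.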

What might this limiting factor be? Who knows. It may well be self-annihilation, but that is materially different from the AI x-risk prediction of unbounded growth in resource consumption by indifferent, amoral bots. So there is a big unknown ahead, which means that the probability of x-risk, the proverbial p(doom), is not a useful number to wave around.

So there you have it: no good reason to worry about conventional x-risk, a lot of good reasons to worry about the Great Filter.
