tl;dr: with multiple agents, control attempts tend to create conflict, because they shut down communication channels, which leads to feedback loops in the form of an intensifying tug-of-war over variables. intentionally relaxing control to better understand the other agents can break the cycle, and this forms the basis of many therapeutic and mediation techniques.

[epistemic status: mostly quite confident this is real and a common source of large amounts of suffering, as well as a common blind spot, but i'm making a fair few claims here without giving the full justification and reasoning trace, so please check this against your experience and try it out rather than expecting me to prove it]

Control in multiplayer settings

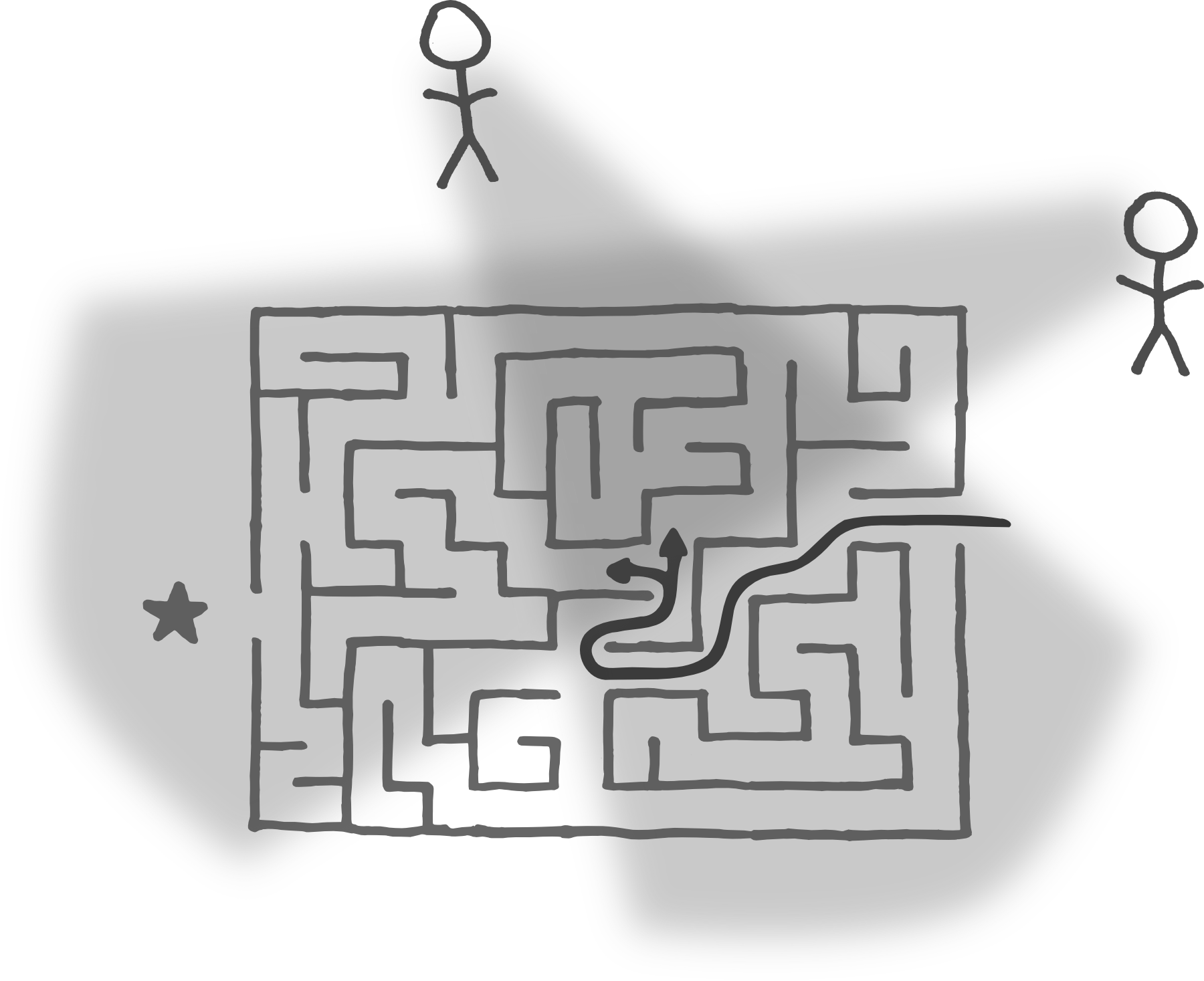

When multiple agents try to control[1] the same variable to different set points, they don't just waste resources in zero-sum competition; they also tend to close up their information-sharing surfaces in a way that blinds both of them to a wider space of possibilities.

Each agent's attempts to adjust reality toward its goal-models land as painful prediction error for the other agent, who has different preferences, making "weaken the other agent" an instrumentally convergent goal. When incoming information might be an attack vector, closing communication channels also becomes instrumentally convergent,[2] but those very channels are the ones needed to accurately model each other and notice better ways forward!

If neither agent steps outside the narrow frame of optimizing that variable, the conflict can consume arbitrary amounts of cognitive resources, and the cycle ends only when one agent is subjugated and becomes, at least in the relevant domain, effectively a managed subagent. This often happens even when there are solutions both agents would have been very happy with, simply because there wasn't enough shared context about each other's preference landscapes to locate them.
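
For readers who think in control-theory terms, here is a toy Python sketch of the tug-of-war. It assumes, purely for illustration, that each agent is an integral controller that keeps adjusting its push while the shared variable sits away from its own set point, and that the variable is simply the sum of both pushes; `simulate`, `gain`, and the set-point values are hypothetical, not from the original. Because the set points differ and neither controller ever revises its own, the variable settles between them while the two pushes diverge in opposite directions without bound.

```python
def simulate(set_a: float, set_b: float, gain: float = 0.1, steps: int = 200):
    """Two integral controllers push on one shared variable x = push_a + push_b.

    Hypothetical toy model. Returns (x, push_a, push_b) after `steps` updates.
    """
    push_a = push_b = 0.0
    x = 0.0
    for _ in range(steps):
        # Each agent adjusts its push in proportion to its own error;
        # neither one ever changes its set point.
        push_a += gain * (set_a - x)
        push_b += gain * (set_b - x)
        x = push_a + push_b
    return x, push_a, push_b


if __name__ == "__main__":
    for steps in (10, 100, 1000):
        x, a, b = simulate(set_a=3.0, set_b=1.0, steps=steps)
        effort = abs(a) + abs(b)  # total force both agents are exerting
        print(f"steps={steps:5d}  x={x:+.3f}  total effort={effort:.1f}")
```

In this toy model the variable stops moving while the total effort keeps growing roughly linearly with time, a crude picture of conflict consuming arbitrary resources. Real agents are not integral controllers; the point is only that two set points on one variable, with no channel for updating either, produce escalation rather than resolution.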

Opening - An escape from control cycles

Whenever you notice your cognitive slack being eaten by conflict and that damn variable won't stay where you put it, consider opening instead, provided you either have enough slack to handle some potentially disruptive incoming data or trust that the other agent won't defect on a resolution process.[3]

Any agent can step up to de-escalate a control cycle. Simply moving into curiosity, genuinely trying to understand why the other might want something different (aka passing their Ideological Turing Test), is often enough. With both your own and the other's perspective contained in one brain, it's often straightforward to see third ways that are strongly positive-sum.
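
A deliberately contrived sketch of that third-way search, with made-up utility functions: agent A mostly wants the contested variable `c` high, agent B mildly wants it low, and B cares a lot about a second dimension `o` that A barely notices. The names `utility_a`, `utility_b`, `control_frame`, and `opening_frame` are hypothetical, and the assumption that the control frame ends in a split down the middle is likewise just for illustration. The option space is identical in both frames; only the preference information available to the search changes.

```python
from itertools import product

# Hypothetical toy preferences over two dimensions:
#   c = the contested variable (0..4)
#   o = another dimension nobody was fighting over (0..4)
def utility_a(c: int, o: int) -> int:
    return 2 * c + o  # A mostly wants c high and mildly likes o


def utility_b(c: int, o: int) -> int:
    return -c + 3 * o  # B mildly dislikes high c but cares a lot about o


def control_frame() -> tuple[int, int]:
    """Each agent models only its own utility over c; o stays at its default 0.

    Assumed for illustration: the tug-of-war ends in a split of c's range.
    """
    favourite_a = max(range(5), key=lambda c: utility_a(c, 0))  # 4
    favourite_b = max(range(5), key=lambda c: utility_b(c, 0))  # 0
    return (favourite_a + favourite_b) // 2, 0


def opening_frame(fallback: tuple[int, int]) -> list[tuple[int, int]]:
    """With both utilities held in one place, list every option that both
    agents weakly prefer to the fallback, highest combined utility first."""
    ua0, ub0 = utility_a(*fallback), utility_b(*fallback)
    acceptable = [
        (c, o)
        for c, o in product(range(5), repeat=2)
        if utility_a(c, o) >= ua0 and utility_b(c, o) >= ub0
    ]
    return sorted(acceptable, key=lambda opt: utility_a(*opt) + utility_b(*opt), reverse=True)


if __name__ == "__main__":
    fallback = control_frame()
    print("control frame:", fallback, utility_a(*fallback), utility_b(*fallback))
    best = opening_frame(fallback)[0]
    print("opening frame:", best, utility_a(*best), utility_b(*best))
```

Here the control frame lands on `(2, 0)`, while the joint search ranks `(4, 4)` first, which both toy agents rate well above the split. Nothing guarantees that real preference landscapes contain such an option; the sketch only shows why it can't be found while each side models just its own utility over just the contested variable.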

Sometimes a more formal process helps, as in mediation, where each party has a place to speak, be heard, and hear that they have been heard. It can also help to hold in mind only the end state you actually care about, setting aside your current best plan for achieving it.

Examples at different scales

Interpersonal: Classic household tension—dirty dishes in the sink.

"I've told you a hundred times—the dishes are your job. I don't want to hear why they're not done, I just need them done." (control)

Resources go to forcing compliance, not to understanding what's actually happening for the other person.

"I feel overwhelmed when I see dishes piling up. Can we discuss how to manage this?" (opening)

Resources shift to understanding what's actually going on for both of you. This isn't merely "being nicer"—it's a different information-processing strategy that enables discovering solutions neither party saw initially.

Intrapersonal: When we notice an unwanted emotion, the default is often direct control:

"No, we can't be upset now. This is important. Just push through it."

One subsystem overrides another; the suppressed one amplifies its signals; resources deplete in the struggle. This is why control often intensifies the states it tries to suppress.

"I notice I'm upset. Part of me feels urgent about this work. What's going on?"

This creates space for information exchange across internal boundaries. Techniques like Focusing and Internal Family Systems work precisely by replacing control with curiosity toward internal resistance.

Societal: Consider US gun policy polarization. Urban populations directly experience community gun violence, quick police response, and limited legitimate use cases. Rural populations directly experience guns as practical tools, long police response times, and a responsible gun culture. Each side's model includes mechanisms the other hasn't experienced.

After shootings, urban groups try to control "gun availability"; rural groups perceive a threat to tools they see as essential and amplify their resistance; urban groups see this as confirming the danger. Each cycle increases each group's certainty in its own model while reducing its ability to hear the other's actual concerns. This is the same pattern as in the interpersonal and intrapersonal examples, at societal scale.

"But the variable is in the wrong place!"

If you try to optimize over an agent which cares about things you're not aware of or mindful of, you will by default set things they care about to states which are extreme and undesirable to them. Or, as Stuart Russell puts it:

A system that is optimizing a function of n variables, where the objective depends on a subset of size k<n, will often set the remaining unconstrained variables to extreme values; if one of those unconstrained variables is actually something we care about, the solution found may be highly undesirable.

The harder you optimize, the more extreme this will be. A better-informed version of you would likely not endorse those outcomes either, especially if you value that system being effective, healthy, and cognitively flexible, or if you share goals with it.
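
A minimal Python sketch of Russell's point, under assumed toy conditions: an optimizer allocates part of a fixed budget to itself, its objective depends only on that allocation, and whatever is left over (`y`) is something another agent cares about. `BUDGET`, `objective`, `grad`, and `optimize` are hypothetical names. The objective never mentions `y`, yet because the two are coupled through the budget, running more steps of projected gradient ascent pushes `y` steadily toward its extreme of zero.

```python
import math

BUDGET = 10.0  # hypothetical shared resource; whatever isn't allocated is left as y


def objective(alloc: float) -> float:
    """The optimizer's objective depends only on its own allocation (k=1 of n=2)."""
    return math.sqrt(alloc)


def grad(alloc: float) -> float:
    """Derivative of sqrt(alloc); always positive, so more is always 'better'."""
    return 0.5 / math.sqrt(alloc)


def optimize(steps: int, lr: float = 1.0, start: float = 1.0) -> tuple[float, float]:
    """Gradient ascent on `objective`, projected back under the budget cap.

    Returns (alloc, y), where y = BUDGET - alloc is the variable the
    objective never mentions.
    """
    alloc = start
    for _ in range(steps):
        alloc = min(BUDGET, alloc + lr * grad(alloc))
    return alloc, BUDGET - alloc


if __name__ == "__main__":
    # More optimization steps stand in for "optimizing harder".
    for steps in (0, 5, 20, 100):
        alloc, y = optimize(steps)
        print(f"steps={steps:3d}  objective={objective(alloc):.2f}  y={y:.2f}")
```

In this sketch `y` shrinks with every additional step and hits exactly zero once the allocation reaches the budget cap. Giving `y` enough weight in the objective would move the optimum away from that boundary, which is roughly what staying open to the other agent's information buys you.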

Agents which seem to be resisting you are protecting something they care about. Staying open to information about what they care about is necessary to avoid both conflict and the catastrophic failures of myopic optimization and control spirals.

This doesn't speak against trying to shift variables, only against blindly forcing your way through resistance from other agents you want to remain healthy. Variables are often in the wrong place! It's just that other agents often have relevant information you don't. Resistance is a signal that the agent you're meeting has something to offer your models, if you make space to receive it.

The Evolution of Integration

Local incentives seem to convergently drive control spirals across many scales of agency, from the intrapersonal dynamics that Internal Family Systems works with, through personal relationships, all the way up to superagents on the scale of social movements and political parties.

On the bright side, the cost imposed by these control spirals creates pressure for the emergence of meta-systems that bring the warring subsystems together under a framework encompassing both, helping to integrate the conflict.[4]

- ^

Slightly more precisely: different agentic processes select among possible futures they are modelling, but select for futures with different values of some property they both care about. In the agency-as-time-travel frame, this shows up as a tension between tugs towards different futures.

- ^

If two computer hackers are trying to break into each other's devices, they'll both use firewalls to restrict incoming information!

- ^

You do need to have enough trust that the other agent won't use the fact that you'll hear them out and model them in more detail to disrupt you in ways you're not willing to risk, but assuming good faith on the meta layer is often (though not always) safe.

- ^

Such as legal systems, principled frameworks for mediation or communication, therapeutic techniques, meditation practices, the SSC culture war comment threads, etc.
