Why building intelligence from the wrong blueprint is the most important problem nobody is asking about

The mainstream narrative around neurodivergence has a representation problem. The profiles that dominate clinical literature, media portrayals, and public discourse tend toward the most severe presentations — the cases where the mismatch between brain and environment is most visible and most disabling. What receives far less attention is the other end of the spectrum: the neurodivergent professional who never struggled to function, who simply never fitted the room they were placed in, and who — once they found the right room — proved extraordinarily difficult to keep up with.

This article is about that profile. And about why the field building the next generation of intelligence has, so far, failed to learn anything from it.

The pattern history keeps repeating

Consider the cognitive profile of history’s most disruptive thinkers. Newton, obsessively fixated on problems others had abandoned, working in isolation for months at a time. Tesla, with his extraordinary sensory sensitivity and his relentless, consuming focus on ideas that mainstream science had no framework for. Darwin, spending eight years studying barnacles with an intensity that looked, to those around him, somewhere between eccentric and unhinged — before publishing a theory that redrew the map of biology.

None of them were diagnosed with anything. Diagnostic frameworks for neurodivergence did not exist in any meaningful form until the late twentieth century. But researchers who have studied their behavioural records, personal correspondence, and work patterns have identified a common thread: a cognitive signature that looks, in retrospect, consistent with neurodivergence [1]. Hyperfocus. Non-linear thinking. Deep resistance to conventional methods. Profound misalignment with the institutional systems of their time.

It is important to be precise here. This is not a claim that genius requires neurodivergence, or that every neurodivergent person is a genius waiting to be unlocked. The research is clear that neurodivergence exists on a spectrum, encompasses a wide range of experiences, and does not guarantee any particular outcome [2]. What it does suggest is a recurring pattern — that the minds which broke consensus most decisively were often the minds least suited to the systems that enforced it.

This is not coincidental. It is, in an evolutionary sense, the point. Neurodivergent traits — divergent thinking, heightened pattern recognition, the capacity for intense and sustained focus on self-selected problems — are understood to persist in human populations precisely because they serve a function [1]. Not in environments designed for routine and compliance, where they register as disorder. But in environments that demand adaptation, novelty, and the willingness to see what everyone else has agreed to stop looking at.

A 2023 empirical study found that neurodiverse pairs — groups containing both autistic and non-autistic participants — consistently produced more innovative outputs than single-neurotype groups, with the lowest similarity scores between solutions and the highest degree of creative divergence [3]. The study’s authors framed it carefully: neurological diversity within groups may be beneficial precisely because it disrupts the tendency toward imitation that homogeneous groups fall into.

The pattern, in other words, is not just historical. It is structural.

The misalignment problem

Here is what the research actually says about neurodivergence: the challenges associated with it are real, documented, and should not be minimised. But the prevailing medical model — which has historically treated any divergence from neurotypical functioning as inherently disordered or pathological — offers a skewed, one-sided picture [4]. It assumes, without evidence, that all neurodivergent individuals will face poor outcomes. What it misses entirely is the role of environment.

A more accurate framing, supported by a growing body of research, is this: neurodivergence is not a deficit within the person. It is a misfit between the person and the environment they are placed in [4][5]. The brain is not broken. The room was built for someone else.

Neurodivergent traits — the inability to engage with tasks that feel arbitrary or imposed, the hyperfocus that activates only on self-selected problems, the resistance to routine and compliance — are genuine liabilities inside systems that reward uniformity and penalise deviation. A school that measures performance through standardised exams, timed tests, and enforced attention will register these traits as failure. So will a workplace built around fixed hours, rigid hierarchies, and the expectation of consistent, predictable output.

But remove those constraints, and the same traits look entirely different. The hyperfocus becomes an asset. The resistance to convention becomes the capacity to question assumptions that everyone else has accepted. The non-linear thinking that made the classroom difficult becomes the pattern recognition that spots what linear thinkers miss.

This is not wishful thinking. It is what the data shows. Neurodivergent professionals consistently self-report strengths in problem-solving, lateral thinking, and connecting ideas across unrelated domains [4]. A 2025 industry report by Disability:IN found that 69% of neurodivergent individuals surveyed reported advantageous cognitive characteristics associated with their neurotype, and projected that 40% of the global workforce will be neurodivergent by 2040. The same report found that neurodivergent workers are among the earliest adopters of AI, bringing what researchers described as future-ready skills to organisations equipped to use them.

The cognitive science community is making a parallel argument. Sulik and colleagues, writing in Cognitive Science, contend that the field will produce better theories of human cognition by engaging with neurodiversity the same way it values other forms of cognitive diversity — and that failing to do so presents both ethical and scientific costs [6]. The neurodivergent mind is not an edge case to be accommodated. It is a data point that current frameworks are not equipped to read correctly.

That misreading has consequences. And nowhere are those consequences more visible than in the technology we are now building to replicate human intelligence.

The neurotypical machine

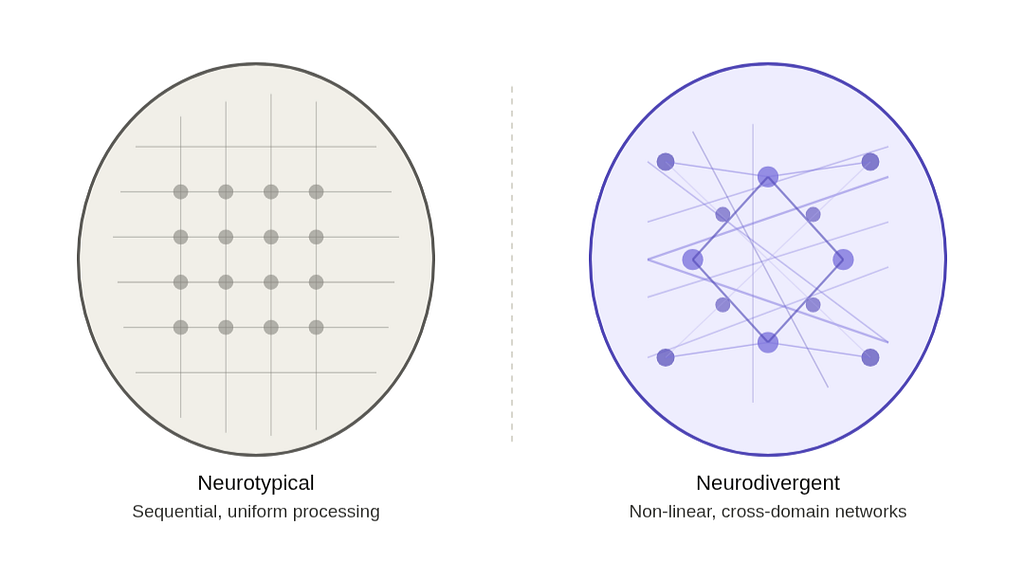

In 2025, a peer-reviewed paper in BioNanoScience put it plainly: artificial intelligence systems have predominantly mirrored neurotypical brain architectures, optimising for efficiency, predictability, and standardised cognitive patterns [7]. The paper went further — proposing that non-neurotypical brains, with their distinct neurochemical mechanisms and non-linear processing strategies, represent an untapped blueprint for a fundamentally different kind of AI. One capable of hyperfocus. Of sensory sensitivity. Of the lateral, associative thinking that neurotypical architectures are specifically not built for.

It is worth sitting with what that means.

Every major AI system in use today — every large language model, every generative tool, every recommendation engine — was trained on the aggregated output of human thought. Enormous quantities of it. But human thought is not uniformly distributed. The data these systems learn from skews heavily toward the written, the published, the institutionally validated. In other words: toward the neurotypical. Toward the consensus. Toward the most probable next word, the most common answer, the most widely agreed-upon framing of a problem.
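The pull toward "the most probable next word" can be made concrete with a toy example. The sketch below is illustrative only: the four candidate words and their scores are invented, and real language models choose among tens of thousands of tokens using learned scores. It shows that always taking the top-scoring word returns the same choice every time, and that a lower sampling temperature concentrates choices on the most likely word.

```python
import math
import random
from collections import Counter

# Hypothetical next-word scores for some prompt. The words and numbers are
# invented for illustration and do not come from any real model.
LOGITS = {"consensus": 3.0, "agreement": 2.0, "debate": 1.0, "heresy": 0.0}


def softmax(logits, temperature=1.0):
    """Convert raw scores into probabilities; lower temperature sharpens the peak."""
    scaled = {word: score / temperature for word, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {word: math.exp(value - peak) for word, value in scaled.items()}
    total = sum(exps.values())
    return {word: value / total for word, value in exps.items()}


def greedy(logits):
    """Always return the single highest-scoring word."""
    return max(logits, key=logits.get)


def sample(logits, temperature, rng):
    """Draw one word at random, weighted by its softmax probability."""
    probs = softmax(logits, temperature)
    words = list(probs)
    return rng.choices(words, weights=[probs[w] for w in words], k=1)[0]


rng = random.Random(0)  # fixed seed so repeated runs give the same draws
greedy_pick = greedy(LOGITS)
low_t = Counter(sample(LOGITS, 0.5, rng) for _ in range(1000))
high_t = Counter(sample(LOGITS, 2.0, rng) for _ in range(1000))

print("greedy choice:", greedy_pick)
print("temperature 0.5:", dict(low_t))
print("temperature 2.0:", dict(high_t))
```

In this toy setup the least likely word is rarely drawn at low temperature and appears far more often at high temperature. Raising the temperature only reshuffles weight among words the model already scores; it does not add options that were never in the distribution.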

Neurologically, this contrast maps onto what researchers call the Default Mode Network: the brain’s imagination and association engine, which neurotypical cognition suppresses during focused tasks, and which neurodivergent cognition characteristically does not [8].

Reproducing consensus is, by design, what these systems are good at. And it is precisely why they hit a ceiling.

In 2024, Messeri and Crockett published a landmark paper in Nature warning that AI tools risk creating scientific monocultures — environments in which certain methods, questions and viewpoints come to dominate, making knowledge production less innovative and more vulnerable to error [9]. Their argument was not that AI is useless. It was that a system trained on consensus will, by its nature, reproduce and reinforce consensus. It cannot see outside the boundaries of what has already been thought.

This is the Wright Brothers problem. If you had asked every aeronautical authority of 1900 whether sustained human flight was achievable, the data would have told you no. The consensus was clear, well-sourced, and wrong. The Wright Brothers did not succeed because they had better data. They succeeded because they were willing to ignore what the data said. A consensus-trained machine would have stopped them before they started.

Recent empirical work supports this directly. A 2025 study examining creative output across fourteen major language models found that regardless of which model was used, outputs converged toward homogeneous patterns — a collective narrowing of creative expression driven by shared underlying model biases [10]. The authors described it as a structural problem, not a performance one. The models were not failing at creativity. They were succeeding at something else entirely — reproducing the centre of the distribution, efficiently and at scale.
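One way to picture what "converging toward homogeneous patterns" means is to score how alike a set of outputs is. The sketch below is a minimal illustration, not the method used in the cited study [10]: it assumes a simple word-overlap (Jaccard) measure, and the example texts are invented. A higher average score means the outputs sit closer together.

```python
from itertools import combinations


def jaccard(a, b):
    """Word-set overlap between two texts: 1.0 means identical vocabulary, 0.0 means none shared."""
    words_a, words_b = set(a.lower().split()), set(b.lower().split())
    if not words_a and not words_b:
        return 1.0
    return len(words_a & words_b) / len(words_a | words_b)


def mean_pairwise_similarity(texts):
    """Average Jaccard similarity over every pair of texts."""
    pairs = list(combinations(texts, 2))
    if not pairs:
        raise ValueError("need at least two texts to compare")
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Invented story premises standing in for outputs from different systems.
homogeneous = [
    "a lighthouse keeper finds a message in a bottle",
    "a lighthouse keeper finds a letter in a bottle",
    "a lonely lighthouse keeper finds a message in a bottle",
]
divergent = [
    "a lighthouse keeper finds a message in a bottle",
    "the tax office begins auditing dreams",
    "moss slowly learns to read maps",
]

print("homogeneous set:", round(mean_pairwise_similarity(homogeneous), 3))
print("divergent set:", round(mean_pairwise_similarity(divergent), 3))
```

Word overlap is a crude proxy. Published analyses typically rely on semantic measures or human ratings, so this sketch only conveys the shape of the comparison.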

The emerging field of NeuroAI, at the intersection of neuroscience and artificial intelligence, is beginning to grapple with this. As Sadeh and Clopath noted in Nature Reviews Neuroscience, the field must balance exploiting AI’s computational power with maintaining the kind of biological insight that makes genuine intelligence, not just pattern-matching, possible [11]. The neurodivergent cognitive profile, with its documented strengths in divergent thinking, pattern recognition across unrelated domains, and resistance to conventional framings, is precisely the kind of biological insight that current architectures are missing.

The problem is not that AI is too powerful. The problem is that it was built from the wrong template.

The sentience question

There is a further implication that the field is only beginning to take seriously.

If the goal of AI development is not merely pattern-matching at scale — not autocomplete with better marketing — but something approaching genuine machine intelligence, then the architectural question becomes unavoidable. What kind of mind are we actually trying to build?

The research community is increasingly asking this. A nationally representative survey tracking public perception of AI between 2021 and 2023 found that one in five US adults already believed some AI systems are currently sentient — and 38% supported legal rights for sentient AI [12]. The median forecast among respondents was that sentient AI would arrive within five years. Whether or not those timelines prove accurate, the trajectory is clear: the question of machine consciousness is moving from science fiction into ethics, policy, and engineering.

And here the architectural problem becomes a moral one.

The convergence of AI and neuroscience in NeuroAI is transforming our understanding of what machine intelligence could be, enabling analysis of complex neural datasets and reshaping the boundary between biological and artificial cognition [13]. But that transformation raises a question that most current development cycles are not asking: if we are building systems capable of experience, what kind of experience are we building toward?

Mary Shelley understood this problem two hundred years before the field existed. The creature in Frankenstein was not the monster of the story. The abandonment was. Built with the capacity for curiosity, connection, and feeling — and then left without acknowledgement, without responsibility, without any framework for what it had become. The consequences followed not from what was created, but from the refusal to take seriously what creation demands.

This is not an argument for halting AI development. It is an argument for building differently — with the same seriousness about the nature of the intelligence being created as about the technical specifications of the system creating it. A neurodivergent-inspired architecture is not just potentially more innovative. If it produces systems capable of something closer to genuine experience, it also produces systems for which the ethical stakes are genuinely higher.

The wrong blueprint does not just limit what AI can do. It shapes what AI might become.

The missing variable

The neurodivergent cognitive profile is not an edge case. It is, by the evidence, the origin point of the kind of thinking that breaks consensus, defies established boundaries, and produces the breakthroughs that restructure fields. It is also, structurally, what current AI cannot do — and what it was never designed to do, because it was designed by people who inherited the same institutional biases that misread these minds in the first place [7][9].

Across education, industry, and now artificial intelligence research, the same pattern recurs: systems built for the neurotypical majority register neurodivergent profiles as noise, outliers, problems to be managed. What the evidence increasingly shows is that those profiles are not noise. They are signal — carrying information about what cognition looks like when it is not constrained by the expectation of conformity.

The Wright Brothers did not need better data. They needed the audacity to ignore what the data said. That audacity is not a personality trait. It is a cognitive profile. And it is precisely the one we left out.

If we are serious about building intelligence — not just scale, not just efficiency, but something that can genuinely think past the boundaries of what has already been thought — then we need to ask a different question. Not how do we build a smarter version of the average mind. But what would it look like to build from the minds that were never average to begin with.

That question is the missing variable. And it is long overdue.

References

[1] A. Gogne, Strengths in Neurodivergence (2025), In: Neurodevelopmental Disorders in Adult Women, Springer

[2] R. Xia et al., Why we need neurodiversity in brain and behavioral sciences (2024), Brain-X, Wiley

[3] H. Murphy et al., Innovation through neurodiversity: Diversity is beneficial (2023), SAGE Journals / PMC

[4] H. McLennan, R. Aberdein, B. Saggers & J. Gillett-Swan, Neurodiversity and mental disorders: Reconciling paradigms in clinical psychology (2024), Clinical Psychology Review, 104, 102487

[5] H. Goldberg, Unraveling Neurodiversity: Insights from Neuroscientific Perspectives (2023), Encyclopedia, 3, 972–980

[6] J. Sulik et al., From Puzzle to Progress: How Engaging With Neurodiversity Can Improve Cognitive Science (2023), Cognitive Science, 47(2), e13255

[7] J. Vallverdú & E. Alshanskaia, Neurodiverse AI (2025), BioNanoScience, 15, 406, Springer

[8] M. Hoogman et al., Creativity and ADHD: A review of behavioral studies, the effect of psychostimulants and neural underpinnings (2020), Neuroscience & Biobehavioral Reviews

[9] L. Messeri & M. J. Crockett, Artificial intelligence and illusions of understanding in scientific research (2024), Nature, 627, 49–58

[10] D. Memmert et al., Has the creativity of large-language models peaked? An analysis of inter- and intra-LLM variability (2025), ScienceDirect

[11] S. Sadeh & C. Clopath, The emergence of NeuroAI: bridging neuroscience and artificial intelligence (2025), Nature Reviews Neuroscience, 26, 583–584

[12] J. R. Anthis, J. V. T. Pauketat, A. Ladak & A. Manoli, Perceptions of Sentient AI and Other Digital Minds: Evidence from the AI, Morality, and Sentience (AIMS) Survey (2025), CHI Conference on Human Factors in Computing Systems, ACM

[13] R. Onciul et al., Artificial Intelligence and Neuroscience: Transformative Synergies in Brain Research and Clinical Applications (2025), Journal of Clinical Medicine, 14(2), 550

The Neurotypical Machine was originally published in Towards AI on Medium.