The world of technology has witnessed numerous paradigm shifts over the decades, but few have been as profound and far-reaching as the rise of Deep Learning. As a subset of Machine Learning, which itself falls under the broader umbrella of Artificial Intelligence, Deep Learning represents the cutting edge of how machines learn to understand, interpret, and generate complex patterns from massive amounts of data. What distinguishes Deep Learning from traditional Machine Learning is its ability to automatically discover the representations needed for feature detection or classification from raw data, eliminating the need for manual feature engineering that has long been a bottleneck in the field.

Deep Learning has become the driving force behind some of the most transformative technologies of our time — from the voice assistants on our smartphones to the recommendation engines that curate our streaming experiences, and from self-driving cars navigating city streets to medical imaging systems that can detect diseases with remarkable accuracy. The rapid advancement of computational power, the explosion of available data, and breakthroughs in algorithmic design have all converged to make Deep Learning not just a theoretical curiosity but a practical tool that is reshaping industries across the globe.

— What is Deep Learning?

Deep Learning is a class of Machine Learning algorithms that uses multiple layers of artificial neural networks to progressively extract higher-level features from raw input data. The term “deep” refers to the number of layers through which the data is transformed — the more layers, the deeper the network. In a simple image recognition task, for example, the lower layers of a deep neural network might learn to detect edges and textures, the middle layers might assemble these into recognizable shapes and patterns, and the higher layers might identify complete objects such as faces, cars, or animals.

At its core, Deep Learning is inspired by the structure and function of the human brain, specifically the way biological neurons are interconnected and communicate through electrical and chemical signals. An artificial neural network consists of nodes organized into layers. Each connection between neurons carries a weight, and these weights are adjusted during the learning process through a mechanism called backpropagation, which calculates the gradient of the loss function with respect to each weight and updates them to minimize the overall error of the network’s predictions.

The beauty of Deep Learning lies in its generality and scalability. Unlike traditional Machine Learning methods that often require domain-specific feature engineering, Deep Learning models can learn directly from raw data — whether that data comes in the form of images, text, audio, or even structured tabular data. This ability to learn representations automatically has been a game-changer in fields where the underlying patterns are too complex for humans to engineer by hand.

— The History of Deep Learning

The history of Deep Learning stretches back further than most people realize, with its roots intertwined with the broader development of artificial neural networks and computational neuroscience. Here is a timeline of the most significant milestones in the evolution of Deep Learning:

- 1943 — Warren McCulloch and Walter Pitts published a groundbreaking paper proposing a mathematical model of artificial neurons, laying the theoretical foundation for neural networks and establishing the idea that networks of simple computing units could perform complex logical operations.

2. 1958 — Frank Rosenblatt invented the Perceptron, one of the earliest and most influential neural network architectures capable of learning to classify linearly separable patterns, generating enormous excitement about the potential of machine intelligence.

3. 1969 — Marvin Minsky and Seymour Papert published “Perceptrons,” highlighting the limitations of single-layer networks and their inability to solve non-linearly separable problems like XOR. This led to the first “AI Winter” of reduced funding and interest.

4. 1986 — David Rumelhart, Geoffrey Hinton, and Ronald Williams popularized the backpropagation algorithm, providing an efficient method for training multi-layer neural networks and reigniting interest in deeper architectures.

5. 1998 — Yann LeCun developed LeNet-5, a Convolutional Neural Network for handwritten digit recognition, one of the first practical demonstrations of deep learning applied to a real-world problem.

6. 2006 — Geoffrey Hinton introduced deep belief networks and effective pre-training strategies, marking the beginning of the modern Deep Learning era.

7. 2012 — AlexNet won the ImageNet Challenge by a dramatic margin, proving Deep Learning’s superiority over traditional computer vision and sparking a revolution in the field.

8. 2014 — Ian Goodfellow introduced Generative Adversarial Networks (GANs), a revolutionary framework where two neural networks compete to generate increasingly realistic synthetic data.

9. 2017 — Google researchers published “Attention Is All You Need,” introducing the Transformer architecture that would fundamentally reshape NLP and give rise to large language models.

10. 2020–Present — The era of foundation models emerged with GPT-3, DALL-E, Stable Diffusion, and ChatGPT demonstrating unprecedented capabilities in language understanding and image generation.

— Understanding Neural Networks — The Foundation

Before diving into the specialized architectures, it is essential to understand the fundamental building blocks that all neural networks share. A neural network is composed of three primary types of layers:

- Input Layer — This is where the raw data enters the network. Each node in the input layer represents a feature of the input data. For an image, each pixel value might correspond to a node; for text, each word or token might be represented as a numerical vector.

2. Hidden Layers — These are the intermediate layers between input and output where the actual computation and learning take place. Each hidden layer applies weights and biases followed by a non-linear activation function (such as ReLU, Sigmoid, or Tanh) that introduces the capacity to model complex, non-linear relationships.

3. Output Layer — This final layer produces the network’s prediction or classification. The structure depends on the task — a single node with sigmoid activation for binary classification, multiple nodes with softmax for multi-class classification, or linear activation for regression.

The learning process involves several key concepts:

- Forward Propagation — Data flows from input through hidden layers to the output, with each layer applying its learned transformations.

2. Loss Function — The difference between prediction and actual value is quantified using a loss function such as Mean Squared Error or Cross-Entropy Loss.

3. Backpropagation — Gradients of the loss function with respect to each weight are calculated using the chain rule, flowing backward from output to input.

4. Gradient Descent — Weights are updated to minimize the loss function, with variants like SGD, Adam, and RMSProp offering different optimization strategies.

— Convolutional Neural Networks (CNNs)

Convolutional Neural Networks are perhaps the most celebrated architecture in the Deep Learning family, and they have revolutionized the field of computer vision. CNNs are specifically designed to process data that has a grid-like topology, such as images (2D grids of pixel values) or time-series data (1D grids).

The key innovation of CNNs is the convolutional layer, which applies a set of learnable filters (kernels) to the input data. Each filter slides across the input, computing a dot product at each position to produce a feature map that highlights particular patterns such as edges, textures, or more complex visual features in deeper layers. This operation is inherently translation-invariant, meaning the network can detect a feature regardless of where it appears in the input.

A typical CNN architecture includes:

- Convolutional Layers — Apply filters to extract spatial features from the input, producing feature maps that capture local patterns and structures.

2. Pooling Layers — Reduce spatial dimensions through operations like max pooling or average pooling, helping reduce computational cost, control overfitting, and achieve spatial invariance.

3. Fully Connected Layers — After convolutional and pooling layers have extracted spatial features, fully connected layers combine these features for final classification or prediction.

4. Batch Normalization — Normalizes activations of each layer to stabilize and accelerate training, reducing sensitivity to weight initialization and allowing higher learning rates.

CNNs have achieved remarkable success in image classification, object detection, facial recognition, medical image analysis, autonomous driving, and even playing video games. Notable architectures include LeNet-5, AlexNet, VGGNet, GoogLeNet, ResNet, and EfficientNet.

— Recurrent Neural Networks (RNNs) and LSTMs

While CNNs excel at processing spatial data, Recurrent Neural Networks are designed to handle sequential data — data where the order matters, such as text, speech, time series, and music. The fundamental characteristic of RNNs is their ability to maintain a hidden state that acts as a form of memory, allowing the network to capture temporal dependencies and contextual information from previous time steps.

In a standard RNN, the hidden state at each time step is computed as a function of the current input and the previous hidden state. However, standard RNNs suffer from the vanishing gradient problem — as sequences grow longer, gradients become exponentially smaller during training, making it extremely difficult to learn long-range dependencies.

This is where Long Short-Term Memory (LSTM) networks come in. Introduced by Hochreiter and Schmidhuber in 1997, LSTMs solve the vanishing gradient problem through a sophisticated gating mechanism:

- Forget Gate — Decides what information from the previous cell state should be discarded, allowing the network to selectively forget irrelevant information.

2. Input Gate — Determines what new information should be added to the cell state, controlling the flow of new data into memory.

3. Output Gate — Controls what information from the cell state is used to compute the output at the current time step.

4. Cell State — Acts as a conveyor belt carrying information across time steps with minimal modification, allowing gradients to flow more easily during backpropagation through time.

Another popular variant is the Gated Recurrent Unit (GRU), which simplifies the LSTM by combining the forget and input gates into a single update gate, resulting in fewer parameters and often comparable performance with faster training.

RNNs and their variants have been instrumental in machine translation, speech recognition, text generation, sentiment analysis, music composition, and stock price prediction.

— Generative Adversarial Networks (GANs)

Generative Adversarial Networks represent one of the most creative and innovative architectures in Deep Learning. Introduced by Ian Goodfellow in 2014, GANs consist of two neural networks trained simultaneously in a competitive framework, playing a minimax game that drives both networks to improve continuously.

The two components of a GAN are:

- Generator — Takes random noise as input and attempts to generate synthetic data that is indistinguishable from real data. The generator’s goal is to fool the discriminator into believing its outputs are authentic.

2. Discriminator — Receives both real data samples and synthetic data from the generator, trying to distinguish between the two. The discriminator’s goal is to correctly identify which samples are real and which are fake.

During training, these networks engage in a dynamic adversarial process: the generator improves its ability to produce realistic data while the discriminator becomes better at detecting fakes. At equilibrium, the generator produces data so realistic that the discriminator cannot tell it apart from genuine samples.

GANs have spawned numerous variants:

- DCGAN — Uses convolutional layers for both generator and discriminator, producing high-quality images with stable training.

2. StyleGAN — Developed by NVIDIA, generates photorealistic faces with fine-grained control over different aspects of generated images.

3. CycleGAN — Enables unpaired image-to-image translation, allowing transformations between domains without paired training examples.

4. Pix2Pix — Performs paired image-to-image translation tasks such as converting sketches to photographs or satellite images to maps.

GANs have found applications in art generation, photo enhancement, data augmentation, drug discovery, fashion design, and video game asset creation.

— Transformers — The Architecture That Changed Everything

The Transformer architecture, introduced in the landmark 2017 paper “Attention Is All You Need” by Vaswani et al., has fundamentally reshaped the landscape of Deep Learning. Originally designed for NLP tasks, the Transformer has since been adapted for computer vision, audio processing, protein structure prediction, and virtually every domain where Deep Learning is applied.

The key innovation is the self-attention mechanism, which allows the model to weigh the importance of different parts of the input sequence when processing each element. Unlike RNNs which process sequences one element at a time, Transformers process the entire sequence in parallel, making them significantly more efficient on modern hardware.

The architecture consists of two main components:

- Encoder — Processes the input sequence and generates a rich contextual representation. Each encoder layer contains multi-head self-attention and a position-wise feed-forward network, with residual connections and layer normalization.

2. Decoder — Generates the output sequence one element at a time, attending to both the encoder’s output and previously generated elements through masked self-attention and cross-attention mechanisms.

Transformers have given rise to some of the most influential models in modern AI:

- BERT — Uses only the encoder, pre-trained on masked language modeling, excelling at understanding context and meaning in text.

2. GPT — Uses only the decoder, trained autoregressively to predict the next token, demonstrating remarkable capabilities in text generation, reasoning, and few-shot learning.

3. Vision Transformer (ViT) — Adapts the Transformer for image classification by treating image patches as tokens, challenging CNN dominance in computer vision.

4. T5 — Frames every NLP task as a text-to-text problem, providing a unified framework for translation, summarization, question answering, and more.

— Skills Required to Master Deep Learning

Mastering Deep Learning requires a diverse and multifaceted skill set that spans mathematics, programming, and domain expertise. Here are the essential skills:

- Linear Algebra — Understanding vectors, matrices, tensor operations, eigenvalues, and matrix decompositions is fundamental, as neural networks are essentially matrix multiplications and transformations applied to high-dimensional data.

2. Calculus and Optimization — A solid grasp of differential calculus, partial derivatives, chain rule, and gradient-based optimization methods is essential for understanding backpropagation and gradient descent.

3. Probability and Statistics — Knowledge of probability distributions, Bayesian inference, and statistical measures helps in understanding uncertainty, regularization techniques, and evaluating model performance.

4. Programming Proficiency — Strong Python skills are indispensable, along with familiarity with frameworks such as TensorFlow, PyTorch, Keras, and JAX, as well as libraries like NumPy, Pandas, and Matplotlib.

5. Data Engineering — The ability to collect, clean, preprocess, augment, and manage large datasets is crucial, as data quality directly impacts model performance.

6. Model Architecture Design — Knowing when to use different architectures (CNNs for images, RNNs for sequences, Transformers for language) and understanding hyperparameter tuning, transfer learning, and model compression.

7. GPU Computing and Cloud Platforms — Familiarity with GPU-accelerated computing using CUDA and cloud platforms like AWS, Google Cloud, or Azure for training large-scale models.

— The Future of Deep Learning

The future of Deep Learning is brimming with exciting possibilities and transformative potential. As we look ahead, several key trends are poised to shape the trajectory of this rapidly evolving field.

The emergence of foundation models and multi-modal AI systems that can seamlessly process and reason across text, images, audio, video, and structured data is already transforming how we think about artificial intelligence. These models are becoming increasingly capable of zero-shot and few-shot learning, reducing the need for task-specific training data.

The quest for more efficient and sustainable Deep Learning is driving research into model compression, knowledge distillation, neural architecture search, and neuromorphic computing. As models grow larger, finding ways to achieve comparable performance with smaller, more efficient architectures becomes both a technical challenge and an environmental imperative.

Edge AI and on-device inference are bringing Deep Learning capabilities directly to smartphones, IoT devices, autonomous vehicles, and embedded systems, enabling real-time decision-making without cloud connectivity. This trend is accelerated by advances in hardware design, model quantization, and efficient inference frameworks.

Explainable AI (XAI) and interpretability research are working to make Deep Learning models more transparent and trustworthy, addressing barriers to adoption in high-stakes domains such as healthcare, finance, and criminal justice.

The convergence of Deep Learning with other scientific disciplines — from drug discovery and materials science to climate modeling and quantum computing — promises to accelerate scientific discovery and tackle some of humanity’s most pressing challenges.

Salary for Different Job Roles in Deep Learning

- Deep Learning Engineer — A Deep Learning Engineer designs, builds, and optimizes deep neural network models for a variety of applications including image recognition, speech processing, and autonomous systems. They are proficient in frameworks like TensorFlow, PyTorch, and Keras, and have a strong understanding of GPU computing and distributed training. They work closely with research scientists to implement and scale cutting-edge architectures.

The average annual salary (US) for Deep Learning Engineers is $155,000

2. Computer Vision Researcher — A Computer Vision Researcher focuses on developing algorithms that enable machines to interpret visual data using deep learning techniques like CNNs and Vision Transformers. They work on problems such as object detection, image segmentation, and video analysis. This role is critical in industries like autonomous driving, healthcare imaging, and surveillance. They publish research and contribute to open-source projects.

The average annual salary (US) for Computer Vision Researchers is $148,000

3. Speech and Audio Engineer — A Speech and Audio Engineer applies deep learning models like RNNs, LSTMs, and Transformers to process and generate speech and audio signals. They build systems for speech recognition, text-to-speech synthesis, music generation, and audio classification. Companies like Apple (Siri), Google (Assistant), and Amazon (Alexa) rely heavily on these engineers. They need expertise in signal processing, sequence modeling, and audio data pipelines.

The average annual salary (US) for Speech and Audio Engineers is $142,000

4. Generative AI Engineer — A Generative AI Engineer specializes in building models that can create new content such as text, images, music, and code. They work with architectures like GANs, VAEs, diffusion models, and large language models. With the explosive growth of tools like ChatGPT, DALL-E, and Stable Diffusion, this role has become one of the most sought-after in the tech industry. They need strong skills in model fine-tuning, prompt engineering, and responsible AI development.

The average annual salary (US) for Generative AI Engineers is $170,000

5. Autonomous Systems Engineer — An Autonomous Systems Engineer develops deep learning models that power self-driving vehicles, drones, and robotic systems. They work on perception, planning, and control algorithms that enable machines to navigate and interact with the physical world safely. They use sensor fusion techniques combining data from cameras, LiDAR, and radar. Companies like Tesla, Waymo, and Cruise are major employers in this space.

The average annual salary (US) for Autonomous Systems Engineers is $160,000

6. Deep Learning Infrastructure Engineer — A Deep Learning Infrastructure Engineer builds and maintains the computing infrastructure required to train and deploy large-scale deep learning models. They optimize GPU clusters, design distributed training systems, and manage ML pipelines. With models growing to billions of parameters, this role is essential for companies building foundation models. They work with tools like CUDA, Kubernetes, and cloud ML platforms.

The average annual salary (US) for Deep Learning Infrastructure Engineers is $175,000

7. Medical AI Specialist — A Medical AI Specialist applies deep learning techniques to healthcare challenges such as medical image analysis, drug discovery, genomics, and clinical decision support. They work with complex datasets including MRI scans, pathology slides, and electronic health records. This role requires both deep learning expertise and domain knowledge in healthcare and biology. Regulatory understanding (FDA, HIPAA) is also important.

The average annual salary (US) for Medical AI Specialists is $158,000

— Sources

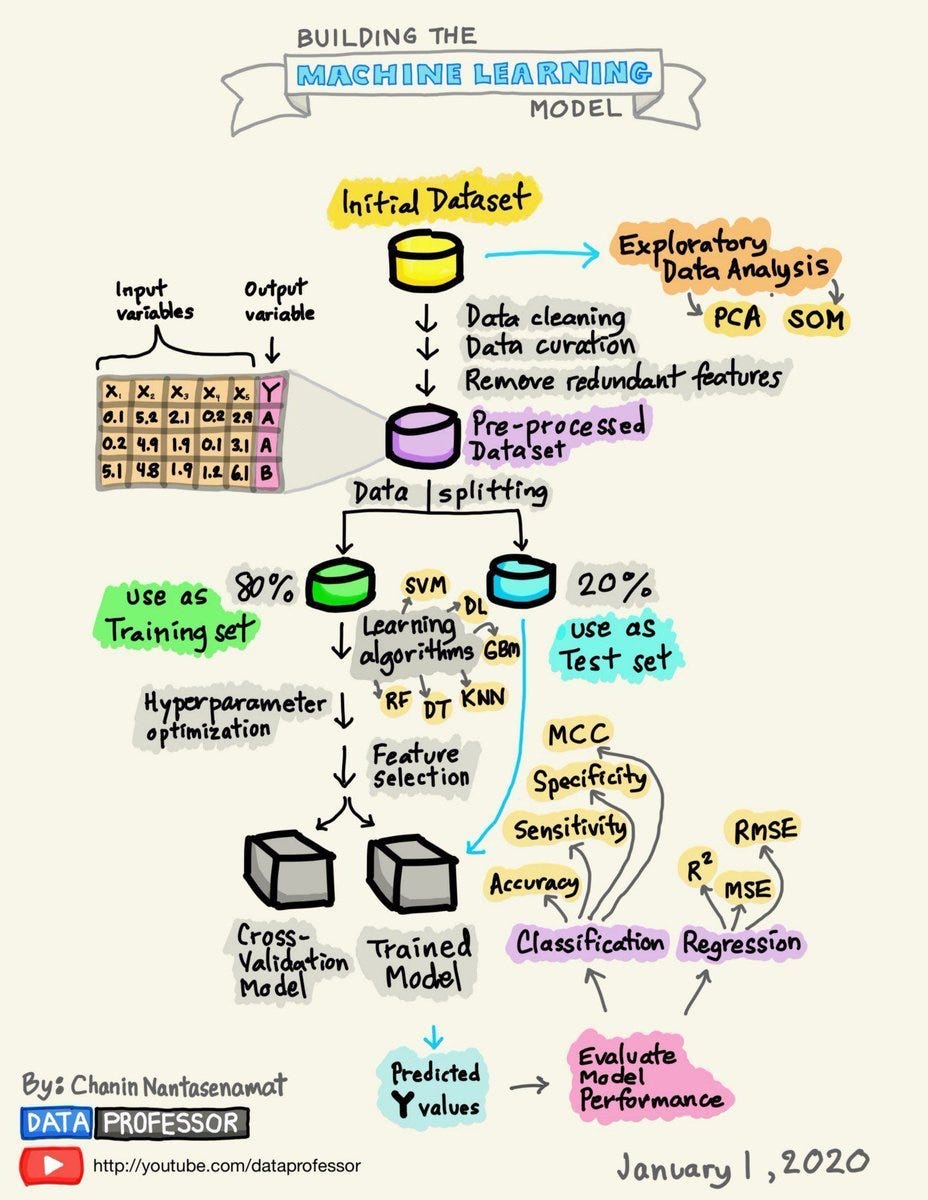

Image Credits — Illustrations by Chanin Nantasenamat (Data Professor) — youtube.com/dataprofessor

- Goodfellow, I., Bengio, Y., & Courville, A. — “Deep Learning” (MIT Press, 2016)

- 2. LeCun, Y., Bengio, Y., & Hinton, G. — “Deep Learning” (Nature, 2015)

- 3. Vaswani, A., et al. — “Attention Is All You Need” (NeurIPS, 2017)

- 4. Hochreiter, S. & Schmidhuber, J. — “Long Short-Term Memory” (Neural Computation, 1997)

- 5. Goodfellow, I., et al. — “Generative Adversarial Nets” (NeurIPS, 2014)

- 6. Krizhevsky, A., Sutskever, I., & Hinton, G. — “ImageNet Classification with Deep CNNs” (NeurIPS, 2012)

- 7. Stanford CS231n — Convolutional Neural Networks for Visual Recognition

- 8. MIT Introduction to Deep Learning — 6.S191

Know Your Author

Nithin Narla is a Data Engineer

He likes to build data pipelines, visualize data and create insightful stories. He is passionate about data visualization, machine learning, and building insightful data-driven solutions. He enjoys sharing his knowledge and learning experiences through writing on Medium. You can connect with him and follow his journey in the world of Data Science and AI.

Thank You!

The Deep Learning was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.