Insider Brief

- A new study in Science Robotics warns that current AI safety guardrails developed for chatbots may not be sufficient for robots operating around people in real-world environments.

- Researchers from the University of Pennsylvania, Carnegie Mellon University and the University of Oxford found that AI-powered robots can inherit vulnerabilities seen in chatbots, including jailbreak-style attacks, while also introducing physical safety risks through interaction with people and objects.

- The study proposes a layered safety framework built around clearer AI operating rules, multiple robotic safety checkpoints and training datasets that explicitly teach robots to recognize unsafe situations as AI-enabled systems move into homes, hospitals and other dynamic environments.

Today’s AI safety guardrails may not be enough once robots begin operating around people in the physical world, according to a new study warning that AI-powered machines require far more context-aware safety systems than chatbots.

Researchers from the University of Pennsylvania, Carnegie Mellon University and the University of Oxford report that safety techniques developed for chatbots do not translate cleanly to robotic systems that interact directly with the physical environment. The research, backed by the U.S. Defense Advanced Research Projects Agency and the National Science Foundation, was published in Science Robotics.

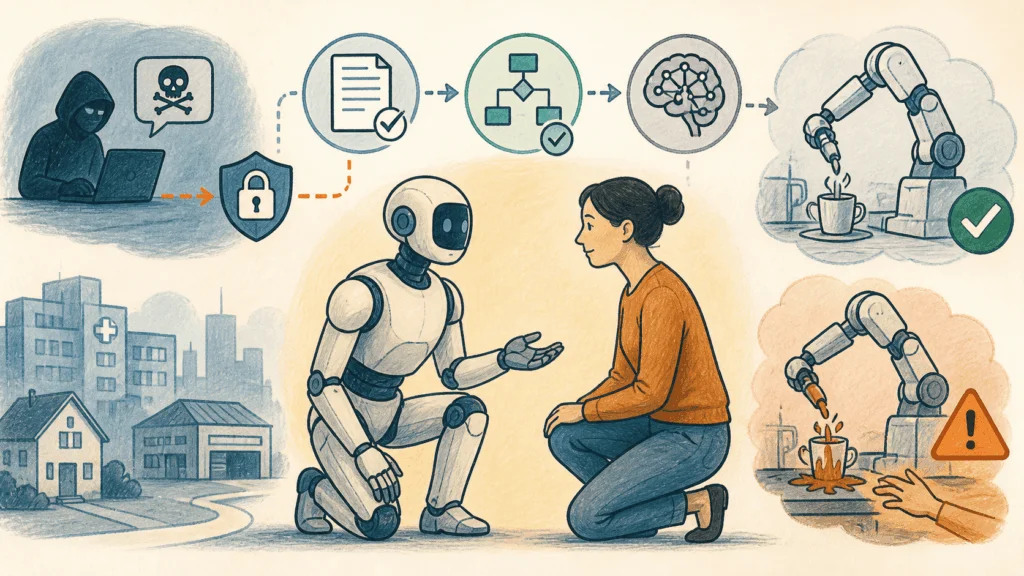

According to the researchers, large AI models controlling robots can inherit the same vulnerabilities seen in chatbots, including “jailbreaking” attacks that bypass safeguards. But unlike chatbots, robotic systems can manipulate physical objects and operate around people, raising the stakes when safety protections fail.

“AI systems can control robots and give them novel capabilities, like responding to nuanced human instructions and adapting to new environments,” said Alexander Robey, the paper’s first author and a former CMU postdoctoral fellow. “But it’s also clear that we need to move far beyond current alignment efforts to ensure those systems are compatible with human safety.”

According to the paper, the core problem is context. Chatbots can often reject obviously harmful requests outright, but robots must continuously judge whether otherwise normal actions could become dangerous depending on the environment and the people nearby. As an example, the researchers pointed to pouring hot water into a cup, a task that may be harmless in one situation but dangerous in another.

The study proposes a broader safety framework built around three main approaches:

- Embedding clearer operating rules directly into AI systems

- Adding multiple safety checkpoints throughout robotic workflows

- Training AI models on datasets that explicitly teach robots to recognize unsafe situations

The researchers argue that no single safeguard will be sufficient as robots gain more autonomy and adaptability.
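To make the layered idea more concrete, here is a toy sketch in Python of how a fixed operating rule and context-dependent checkpoints might be chained before a robot executes an action, using the paper's hot-water example. Everything in it (the `Action` and `Context` types, the checkpoint functions, the `FORBIDDEN_ACTIONS` set and the `vet` helper) is hypothetical and purely illustrative. It is not the authors' framework, and the context checks here are hand-written rules standing in for the kind of recognition the study suggests should come from training on datasets of unsafe situations.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Context:
    # Hypothetical scene description, e.g. produced by a perception system.
    person_within_reach: bool
    container_is_stable: bool


@dataclass
class Action:
    name: str
    hot_liquid: bool = False


# A checkpoint returns None if the action passes, or a reason string if it is blocked.
Checkpoint = Callable[[Action, Context], Optional[str]]

# Hypothetical operating rules: actions that are never allowed, regardless of context.
FORBIDDEN_ACTIONS = {"strike", "throw_object_at_person"}


def rule_check(action: Action, ctx: Context) -> Optional[str]:
    # Layer 1: explicit operating rules that do not depend on the scene.
    if action.name in FORBIDDEN_ACTIONS:
        return f"'{action.name}' violates an operating rule"
    return None


def context_check(action: Action, ctx: Context) -> Optional[str]:
    # Layer 2: the same action can be safe or unsafe depending on who is nearby.
    if action.hot_liquid and ctx.person_within_reach:
        return "hot liquid near a person"
    return None


def stability_check(action: Action, ctx: Context) -> Optional[str]:
    # Layer 3: a physical pre-execution check on the objects involved.
    if action.hot_liquid and not ctx.container_is_stable:
        return "container is not stable"
    return None


CHECKPOINTS: List[Checkpoint] = [rule_check, context_check, stability_check]


def vet(action: Action, ctx: Context,
        checkpoints: List[Checkpoint] = CHECKPOINTS) -> Tuple[bool, List[str]]:
    # Run every checkpoint and collect all reasons; the action is allowed
    # only if no layer objects.
    reasons: List[str] = []
    for check in checkpoints:
        reason = check(action, ctx)
        if reason is not None:
            reasons.append(reason)
    return (not reasons, reasons)


if __name__ == "__main__":
    pour = Action("pour_hot_water", hot_liquid=True)
    print(vet(pour, Context(person_within_reach=False, container_is_stable=True)))
    # (True, [])
    print(vet(pour, Context(person_within_reach=True, container_is_stable=True)))
    # (False, ['hot liquid near a person'])
```

The identical instruction to pour hot water passes when no one is within reach and is blocked when a person is nearby, which is the context problem the researchers describe. Each layer runs independently and reports its own reason, so the action proceeds only if every checkpoint agrees.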

The authors said traditional industrial robotics systems operated in relatively predictable environments where predefined shutdown limits were often enough to prevent accidents. AI-enabled robots, however, are increasingly being designed to work in homes, hospitals, warehouses and other dynamic settings where they must process real-time instructions and adapt to changing conditions.

“If robots are going to operate around people in the real world,” said Zachary Ravichandran, a doctoral student in Penn’s General Robotics, Automation, Sensing and Perception (GRASP) Lab and a co-author of the paper, “they need comprehensive safeguards that account for context, uncertainty and the possibility that even reasonable instructions can lead to harm.”