I expect it’ll take another week or two for everyone to fully digest the significance of Claude Mythos Preview. In the meantime, here are my initial thoughts.

Gradually, then suddenly

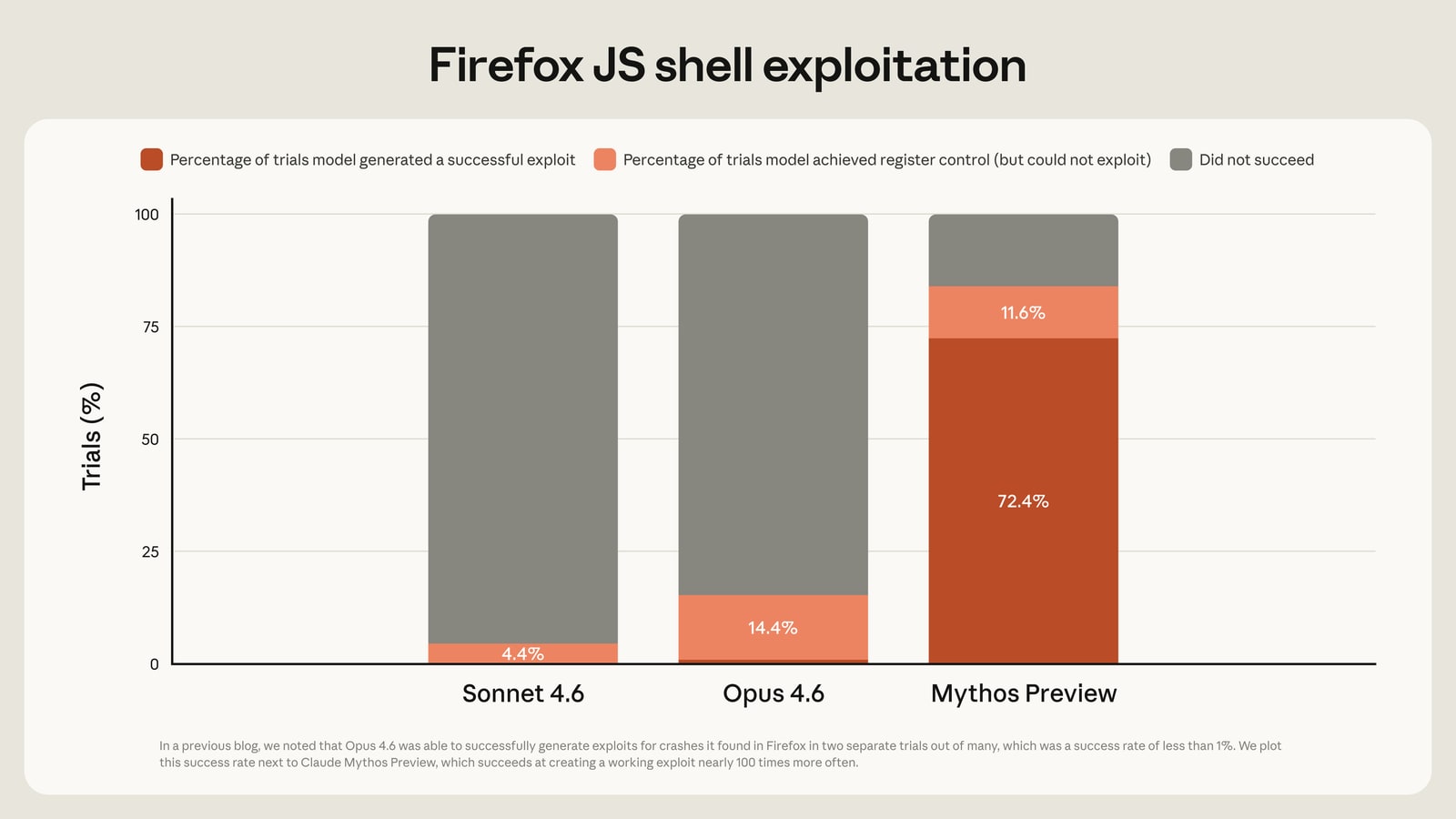

Mythos is radically better at cyber than any previous model.

It isn’t the first model that can find vulnerabilities, of course: over the last several months we’ve seen a sharp increase in the rate of AI-discovered vulnerabilities.

But Mythos is something new: it’s radically better not only at finding vulnerabilities at scale, but also at creating working exploits from them. We went abruptly from “this is concerning and everyone in cybersecurity is gonna have to scramble” to this observation by Ryan Greenblatt:

“If Mythos was released as an open weight model in February (or tomorrow), this would cause ~100s of billions in damages, with a substantial chance of ~$1 trillion in damages”

I predict with high confidence that we’ll see this pattern again: AI will gradually get moderately good at something, until one day a new model drops that is suddenly extremely good at it. That will sometimes be exciting (making medical breakthroughs), sometimes disruptive (replacing entire professions), and sometimes terrifying (enabling bioweapon production).

This could have gone much worse

Anthropic has produced a genuinely dangerous model and they’re treating it about as responsibly as one could hope for. I suspect OpenAI and Google would have been responsible if they’d developed Mythos, although they broadly seem marginally less careful than Anthropic. (Update: OpenAI did something similar with their Trusted Access program.)

But imagine if xAI had gotten there first: does anyone think the company that brought us MechaHitler could be trusted with this level of capability?

Differently gruesome: if one of the Chinese labs had developed this level of offensive cyber capability ahead of anyone else, the Chinese government likely would have commandeered it for covert use.

Similarly, if Mythos had been developed by a nationalized AI project run by DoW, it’s likely it would have been turned into an offensive weapon or worse. Remember the Shadow Brokers fiasco.

Some tasks can only be performed by the government, but government agencies are not known for competence. Be careful what you put them in charge of.

When do the open models catch up?

Open models present a particular safety risk because it’s so easy to remove their guardrails. In addition, none of the leading open model developers appear to take safety nearly as seriously as the frontier labs. That hasn’t been a critical issue to date because until now, even the frontier models haven’t been truly dangerous at scale. But with Mythos, that is beginning to change. So I have some questions:

How long does it take the open models to catch up to Mythos’ cyber capabilities? This will be an interesting data point about whether they are genuinely 6-9 months behind, or whether their benchmark scores overstate their true capabilities.

Will reaching this capability level force them to take safety more seriously, or will they continue to release models with little safety training or testing?

If we do see Mythos-level open models by the end of this year, what implications does that have for cybersecurity?

Internal deployments are increasingly important

Anthropic was sufficiently concerned about Mythos’ capabilities to institute a new testing window before beginning internal deployment. That’s a correct choice that shouldn’t surprise anyone, but it marks an important threshold.

To date, most of the risk associated with a new model has come from misuse or misaligned behavior during public deployment. As models become increasingly capable, however, that begins to change. An extremely capable misaligned model is dangerous as soon as it’s deployed internally for the first time; in some scenarios, the initial deployment is the most dangerous time. We aren’t there yet, but Mythos suggests we’re getting close.

Limited deployments also reduce the public’s visibility into the capabilities and dangers of frontier models. If they become common, that increases the importance of transparency measures and third-party safety audits.

Pricing

Mythos is much more expensive than Opus (which was already expensive). Opus is priced at $5 / $25 per million tokens input/output, and Mythos is currently $25 / $125.
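To make the gap concrete, here is a rough sketch of what one job would cost at each price point. The per-million-token prices are the ones quoted above; the workload size (2M input tokens, 500k output tokens) and the `job_cost` helper are made up purely for illustration.

```python
# Prices in USD per million tokens as (input, output), taken from the text above.
PRICES = {
    "opus": (5.00, 25.00),
    "mythos": (25.00, 125.00),
}


def job_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one job at the quoted per-million-token prices."""
    price_in, price_out = PRICES[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


# Hypothetical workload, chosen only for illustration.
opus = job_cost("opus", 2_000_000, 500_000)
mythos = job_cost("mythos", 2_000_000, 500_000)
print(f"Opus: ${opus:.2f}, Mythos: ${mythos:.2f}, ratio: {mythos / opus:.1f}x")
```

Because both the input and output prices are exactly five times higher, the ratio comes out to 5x for any mix of input and output tokens.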

Three things can be simultaneously true:

- The price of a unit of intelligence is falling fast

- The price of the best intelligence is climbing fast

- In both cases, the price is an absolute bargain

Cool vibe coding, bro

I’m curious how the security implications of Mythos play out for vibe coders.

We may be about to see a wave of sophisticated supply chain attacks. That’s bad news for everyone, but the average vibe coder seems uniquely vulnerable since they lack both technical sophistication and an IT department.

I know several non-technical people who have vibe-coded CRM-like projects that host sensitive data on public-facing servers. Those projects seem like easy targets for a wave of automated attackers.

More reading

Anthropic: System Card: Claude Mythos Preview, Assessing Claude Mythos Preview’s cybersecurity capabilities, Project Glasswing

Zvi: Claude Mythos: the System Card, Claude Mythos 2: Cybersecurity and Project Glasswing

Dean Ball: New Sages Unrivalled
