Your model learns patterns beautifully. It just cannot explain why it made a call. That gap is exactly what neuro-symbolic AI was built to close.

There is a running joke in enterprise ML. You spend six months training a model, it hits 91% accuracy on your holdout set, leadership loves it, you ship it, and then on day two someone asks: “But why did it flag that customer?”

You shrug. The model shrugs back. Nobody has a clean answer.

This is not a data problem. This is not a model architecture problem. It is a fundamental limitation of how pure neural networks process the world. They learn correlations from raw data. They are very good at it. But they do not reason. They do not apply rules. And when they fail, they fail quietly, with no breadcrumb trail left behind.

Neuro-symbolic AI is the field’s answer to that. And in 2026, it has quietly moved from academic papers into production systems at JPMorgan, EY, and enterprise platforms built on Snowflake. Let me break down what it actually is, why it matters now, and what data scientists should be thinking about.

First, let us talk about the two camps AI has always lived in

Before neural networks dominated everything, AI ran on rules. Explicit, hand-crafted, symbolic rules. “If the customer’s debt-to-income ratio exceeds 0.45, flag the application.” Clean. Auditable. Easy to explain. But brittle. Add an edge case, and the rules break. Scale it to millions of inputs, and you cannot write rules fast enough to keep up.

Then came neural networks, and especially deep learning, which flipped everything. No more hand-written rules. Just data, gradients, and a loss function. The model figures it out. Suddenly you could do things that were impossible before: image recognition, language understanding, protein folding. But you traded away explainability almost entirely.

Neuro-symbolic AI refuses to pick a side. It says: let the neural half learn from data, and let the symbolic half reason, constrain, and explain. The two systems work together, passing information back and forth.

Neural networks are good at spotting patterns. Symbolic systems are good at logic. Separately, each has a critical weakness. Together, they cover each other’s blind spots.

So what does the architecture actually look like?

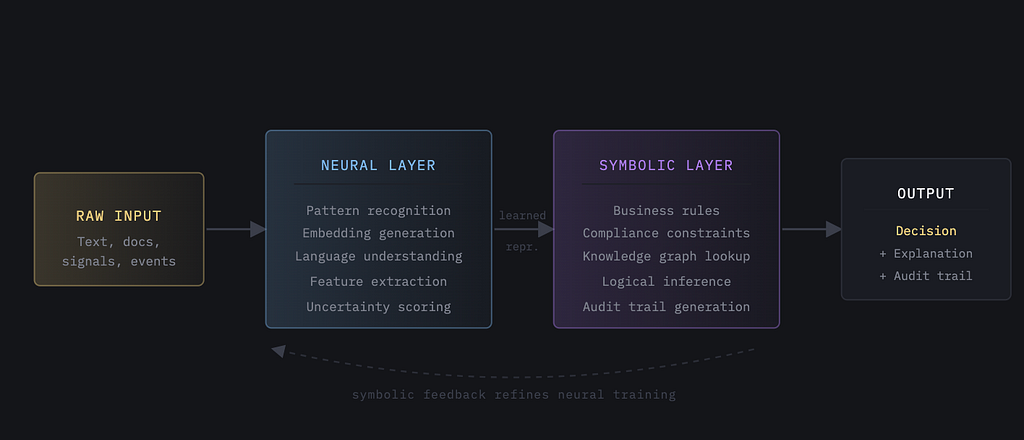

There is no single standard architecture here. Think of neuro-symbolic as a design philosophy with several implementation flavors. But the core idea is always the same: a neural perception layer that processes raw, unstructured input, feeding into a symbolic reasoning layer that applies structured logic.

A few ways this plays out in practice. In a sequential setup, the neural model processes the raw input first, then hands a structured representation to the symbolic reasoner. Think of it as the neural model doing the heavy lifting on language and pattern extraction, and the symbolic layer applying the company’s actual decision logic on top of those extracted features.
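Here is a minimal Python sketch of the sequential flavor in a loan-application setting. `neural_extract` is a hypothetical stand-in for a real neural extractor. It is only a regex so the example runs. The rule IDs and the 0.45 debt-to-income cut-off reuse the illustrative rule from earlier. None of this is a specific product's API.

```python
import re
from dataclasses import dataclass

DTI_LIMIT = 0.45  # illustrative business rule reused from earlier in the article


@dataclass
class Features:
    """Structured record the neural layer hands to the symbolic layer."""
    monthly_debt: float
    monthly_income: float


def neural_extract(text: str) -> Features:
    # HYPOTHETICAL stand-in for a neural extractor (an LLM, an NER model, ...).
    # A regex is used only so the sketch runs without any model weights.
    number = r"\$?(\d+(?:\.\d+)?)"
    debt = re.search(r"debt of " + number, text)
    income = re.search(r"income of " + number, text)
    if debt is None or income is None:
        raise ValueError("could not extract debt and income")
    return Features(float(debt.group(1)), float(income.group(1)))


def symbolic_decide(f: Features) -> tuple[str, str]:
    """Explicit, auditable decision logic: every outcome names the rule behind it."""
    if f.monthly_income <= 0:
        return "flag", "R0: no verifiable income"
    dti = f.monthly_debt / f.monthly_income
    if dti > DTI_LIMIT:
        return "flag", f"R1: debt-to-income {dti:.2f} exceeds {DTI_LIMIT}"
    return "pass", f"R1: debt-to-income {dti:.2f} within {DTI_LIMIT}"


def sequential_pipeline(text: str) -> tuple[str, str]:
    # Neural perception first, symbolic reasoning second.
    return symbolic_decide(neural_extract(text))


print(sequential_pipeline("Monthly debt of $2000 against a monthly income of $4000."))
# → ('flag', 'R1: debt-to-income 0.50 exceeds 0.45')
```

The design point is the hand-off. The neural side only produces a structured record. Every decision, together with the rule that produced it, comes from code a reviewer can read.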

In a parallel setup, both systems run simultaneously and a fusion module combines their outputs. More compute, but you capture cases where the symbolic layer would have missed a signal that the neural model caught.
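A parallel setup can be sketched the same way. Everything here is an illustrative assumption:

- `neural_score` is a hypothetical placeholder for a trained model.
- The two rules and their weights are made up.
- The fusion step is a plain weighted average plus a disagreement flag, not any particular fusion architecture.
- The threads only mimic two branches running side by side.

```python
from concurrent.futures import ThreadPoolExecutor


def neural_score(txn: dict) -> float:
    # HYPOTHETICAL stand-in for a trained model's fraud probability.
    return min(1.0, txn["amount"] / 10_000)


def symbolic_score(txn: dict) -> tuple[float, list[str]]:
    # Explicit rules; each rule that fires adds a fixed, documented amount of risk.
    fired = []
    if txn["country"] not in txn["usual_countries"]:
        fired.append("R1: unusual country")
    if txn["hour"] < 6:
        fired.append("R2: night-time transaction")
    return min(1.0, 0.4 * len(fired)), fired


def fused_assessment(txn: dict, w_neural: float = 0.5) -> dict:
    # Run both branches concurrently (separate services in a real deployment),
    # then combine them in a deliberately simple fusion step.
    with ThreadPoolExecutor(max_workers=2) as pool:
        neural_future = pool.submit(neural_score, txn)
        symbolic_future = pool.submit(symbolic_score, txn)
        p_neural = neural_future.result()
        p_symbolic, fired = symbolic_future.result()
    return {
        "score": w_neural * p_neural + (1 - w_neural) * p_symbolic,
        "fired_rules": fired,
        # Strong disagreement between the branches is worth surfacing for review.
        "disagreement": abs(p_neural - p_symbolic) > 0.5,
    }


# A large domestic daytime transfer: no rule fires, but the neural branch scores it high.
print(fused_assessment({"amount": 9500, "country": "US", "usual_countries": {"US"}, "hour": 14}))
```

In the example call, the rules see nothing wrong with the transfer, while the neural branch scores it high. The fused output keeps that signal and marks the disagreement.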

The most interesting flavor for ML practitioners is when the feedback runs both ways. The symbolic layer’s logical constraints flow back as training signals into the neural network. Essentially, you are baking business logic directly into the gradient updates.
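One way to bake a rule into gradient updates is to turn it into an extra loss term. The sketch below makes several simplifying assumptions:

- It uses a plain logistic regression in NumPy rather than a deep network.
- It encodes a single illustrative rule: debt-to-income above 0.45 implies flag.
- It applies a simple -log(p) penalty on the rows where the rule fires.
- The weighting `lam` and the toy data are made up.

More elaborate formulations exist, but the mechanics are the same: the rule contributes its own gradient.

```python
import numpy as np

DTI_LIMIT = 0.45  # illustrative rule: debt-to-income above this should be flagged


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def train_with_rule(X, y, dti, lam=1.0, lr=0.5, epochs=2000):
    """Logistic regression trained on BCE(data) + lam * rule penalty.

    The rule "dti > DTI_LIMIT implies flag" becomes a penalty of -log(p) on
    every row where it fires, so its gradient pushes those predictions toward
    1 even when the labels there are missing or noisy.
    """
    n, d = X.shape
    w, b = np.zeros(d), 0.0
    fires = (dti > DTI_LIMIT).astype(float)
    m = max(fires.sum(), 1.0)
    for _ in range(epochs):
        p = sigmoid(X @ w + b)
        # Gradients w.r.t. the logit z: dBCE/dz = p - y and d(-log p)/dz = p - 1.
        grad_z = (p - y) / n + lam * fires * (p - 1.0) / m
        w -= lr * (X.T @ grad_z)
        b -= lr * grad_z.sum()
    return w, b


# Toy data with noisy labels: two of the three high-DTI applicants are labeled 0.
dti = np.array([0.1, 0.2, 0.3, 0.5, 0.6, 0.7])
X = dti.reshape(-1, 1)
y = np.array([0, 0, 0, 1, 0, 0], dtype=float)

for lam in (0.0, 5.0):
    w, b = train_with_rule(X, y, dti, lam=lam)
    print(f"lam={lam}: P(flag | dti=0.6) = {sigmoid(0.6 * w[0] + b):.2f}")
```

With `lam=0.0` the model mostly follows the noisy labels. With `lam=5.0` the penalty's gradient keeps pushing the high-DTI rows toward the rule's conclusion. The predicted flag probability at a 0.6 debt-to-income ratio ends up above 0.5.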

Why everyone is talking about this in 2026 specifically

This architecture has existed in research for years. So why is it suddenly in production systems this year?

A few things converged at once.

Regulatory pressure became real, not theoretical. The EU AI Act moved from policy discussion into enforcement. It requires traceability, explainability, and documented accountability for high-risk AI systems. If your model makes a credit decision, a hiring recommendation, or a medical flag, you now need a paper trail. Pure neural networks simply cannot provide that. Neuro-symbolic systems can.

Enterprise AI graduated from the pilot stage. For the past two years, most companies have been running AI experiments in sandbox environments. Those experiments are now moving into production, where failures have real costs. When you are processing a billion dollars in loan applications or triaging emergency room patients, “the model said so” is not a sufficient answer.

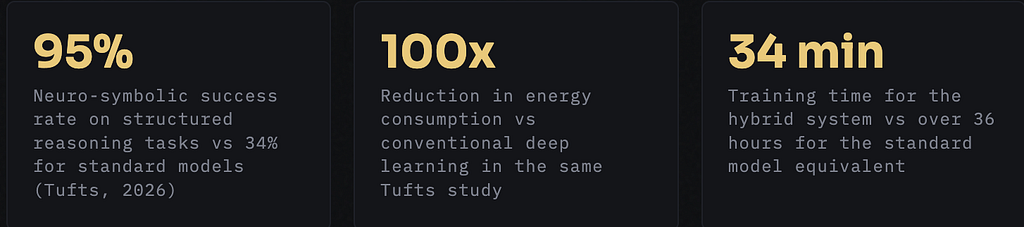

Actual research breakthroughs landed this year. Researchers at Tufts University published results showing a neuro-symbolic system that cut energy consumption by a factor of up to 100 compared to standard deep learning models, with dramatically better performance on structured reasoning tasks. In robotics tests, the hybrid system hit a 95% success rate on logic-heavy problems where standard models managed only 34%. That is not an incremental improvement. That is a category shift. The research is being presented at the International Conference on Robotics and Automation in Vienna this year.

And practically speaking, EY-Parthenon launched a commercial neuro-symbolic platform this year, which is actively being deployed for financial services, industrial, and consumer goods clients. JPMorgan reclassified its AI investments as core infrastructure rather than experimental R&D. The capital is following the architecture.

Where does RAG fit into all of this?

If you work in LLM systems, you have almost certainly built a RAG pipeline. What you may not have noticed is that RAG is itself a lightweight form of neuro-symbolic AI.

Think about it. The retriever operates over a structured knowledge source: a vector index, a knowledge graph, a database of curated documents. The generator is a neural language model. You have a symbolic retrieval step feeding a neural generation step. That is the neuro-symbolic pattern, just applied in the context of language model grounding.
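Here is a minimal sketch that makes the mapping concrete. It rests on a few assumptions:

- The retriever is a toy word-overlap ranker standing in for a vector index or graph.
- The documents are invented.
- `generate` is a hypothetical callable for whatever LLM you use.

The point is only that the retrieval side is explicit and inspectable, with document IDs that travel into the prompt.

```python
import re

# A tiny curated knowledge source; the documents are illustrative, not real policy.
DOCS = {
    "policy-7": "Applications with a debt-to-income ratio above 0.45 must be flagged.",
    "policy-9": "Self-employed applicants must provide two years of tax returns.",
    "faq-2": "Flagged applications are reviewed by a credit officer within five days.",
}


def tokens(text: str) -> set[str]:
    return set(re.findall(r"\w+", text.lower()))


def retrieve(query: str, k: int = 2) -> list[str]:
    """Toy lexical retriever: rank documents by word overlap with the query.
    It stands in for the vector index or knowledge graph a real system would use."""
    q = tokens(query)
    ranked = sorted(DOCS, key=lambda doc_id: len(q & tokens(DOCS[doc_id])), reverse=True)
    return ranked[:k]


def build_prompt(query: str, doc_ids: list[str]) -> str:
    # Retrieved passages carry their IDs, so every answer can be traced to its sources.
    context = "\n".join(f"[{d}] {DOCS[d]}" for d in doc_ids)
    return f"Answer using only these sources and cite their IDs.\n{context}\n\nQuestion: {query}"


def answer(query: str, generate) -> str:
    # `generate` is a HYPOTHETICAL callable wrapping whatever LLM you use.
    return generate(build_prompt(query, retrieve(query)))
```

Passing `lambda prompt: prompt` as `generate` lets you inspect exactly what the model would see. That is a cheap way to audit the retrieval half.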

RAG is neuro-symbolic AI with a familiar face. You have been doing this already. The frontier version just takes the symbolic side much further, adding formal reasoning engines, constraint verification, and explicit logical inference.

The more advanced setups go beyond retrieval. GraphRAG, for instance, uses a knowledge graph as the symbolic component, allowing multi-hop reasoning across entity relationships rather than single-shot similarity lookups. The symbolic layer is doing actual logical traversal, not just cosine similarity over embeddings.
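A generic multi-hop lookup over a knowledge graph can be sketched in a few lines. This is not how any particular GraphRAG library is implemented. The triples and entity names are invented. `follow` is a hypothetical helper that walks a fixed sequence of relations and records each edge it used as evidence.

```python
from collections import defaultdict

# Illustrative (subject, relation, object) triples; the entities are made up.
TRIPLES = [
    ("AcmeCorp", "subsidiary_of", "GlobexHoldings"),
    ("AcmeCorp", "headquartered_in", "Ireland"),
    ("GlobexHoldings", "headquartered_in", "Germany"),
    ("GlobexHoldings", "ceo", "J. Doe"),
]


def build_graph(triples):
    graph = defaultdict(list)
    for subj, rel, obj in triples:
        graph[subj].append((rel, obj))
    return graph


def follow(graph, start, relation_path):
    """Multi-hop traversal: apply each relation in order and keep the evidence path."""
    frontier = [(start, [])]
    for wanted in relation_path:
        next_frontier = []
        for node, path in frontier:
            for rel, obj in graph.get(node, []):
                if rel == wanted:
                    next_frontier.append((obj, path + [(node, rel, obj)]))
        frontier = next_frontier
    return frontier


graph = build_graph(TRIPLES)
# "Where is AcmeCorp's parent company headquartered?" needs two hops.
for answer_node, evidence in follow(graph, "AcmeCorp", ["subsidiary_of", "headquartered_in"]):
    print(answer_node, evidence)
```

A single similarity lookup on "AcmeCorp headquartered" would tend to surface the Ireland fact, which answers a different question. The traversal reaches Germany through an explicit two-edge path that can be shown to a reviewer.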

Agentic AI frameworks are pushing this even further. When an agent decides whether to invoke a tool, route to a sub-agent, or escalate to a human, that routing logic is symbolic reasoning running on top of neural perception. The LLM understands context. A policy layer decides what is allowed. That is exactly the neuro-symbolic split.
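The split between the LLM and the policy layer might look like the sketch below. `ProposedAction` is a hypothetical structured output from the LLM planner. The allow-list, refund limit, and confidence floor are invented thresholds. The sketch only shows that the final allow, deny, or escalate decision lives in plain, reviewable code.

```python
from dataclasses import dataclass

ALLOWED_TOOLS = {"lookup_account", "send_summary", "issue_refund"}  # illustrative allow-list
REFUND_LIMIT = 500.0      # illustrative: larger refunds need a human
MIN_CONFIDENCE = 0.7      # illustrative: below this, the agent should not act alone


@dataclass
class ProposedAction:
    """HYPOTHETICAL structured output from the LLM planner."""
    tool: str
    amount: float = 0.0
    confidence: float = 1.0


def route(action: ProposedAction) -> tuple[str, str]:
    """Symbolic policy layer: the LLM proposes, these rules decide what is allowed."""
    if action.tool not in ALLOWED_TOOLS:
        return "deny", f"tool '{action.tool}' is not on the allow-list"
    if action.confidence < MIN_CONFIDENCE:
        return "escalate", f"planner confidence {action.confidence} is below {MIN_CONFIDENCE}"
    if action.tool == "issue_refund" and action.amount > REFUND_LIMIT:
        return "escalate", f"refund of {action.amount} exceeds the {REFUND_LIMIT} limit"
    return "allow", "all policy checks passed"


print(route(ProposedAction("issue_refund", amount=900.0)))
print(route(ProposedAction("drop_table")))
```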

The practical reality for data scientists right now

You are not going to rearchitect your entire stack overnight. And you probably do not need to. Here is where I would actually put your attention.

If you are building LLM systems

Start adding a symbolic constraint layer on top of your LLM outputs. This does not have to be fancy. A rule engine that validates your model’s outputs against business logic before they are acted on is already a step in the right direction. Document those rules. That documentation is the beginning of your audit trail.
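A starting-point rule engine does not need a framework. This sketch assumes the LLM returns JSON. The field names (`discount_pct`, `sources`, `tier`) and the rules are hypothetical. Each rule carries an ID and a plain-language description, so the rule list doubles as documentation. The returned violations can be logged as an audit record.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    rule_id: str
    description: str                 # human-readable: this is your audit documentation
    check: Callable[[dict], bool]    # True when the output satisfies the rule


RULES = [
    Rule("R1", "Discount must be between 0 and 20 percent.",
         lambda o: 0 <= o["discount_pct"] <= 20),
    Rule("R2", "Every recommendation must cite at least one source document.",
         lambda o: len(o["sources"]) > 0),
    Rule("R3", "Customer tier must be bronze, silver, or gold.",
         lambda o: o["tier"] in {"bronze", "silver", "gold"}),
]


def passes(rule: Rule, output: dict) -> bool:
    try:
        return bool(rule.check(output))
    except (KeyError, TypeError):
        return False  # a missing or malformed field is a violation, never a silent pass


def validate(llm_output: str) -> dict:
    """Check a JSON-formatted LLM output against every rule before anything acts on it."""
    try:
        output = json.loads(llm_output)
    except json.JSONDecodeError as exc:
        return {"approved": False, "violations": [("PARSE", str(exc))]}
    if not isinstance(output, dict):
        return {"approved": False, "violations": [("PARSE", "expected a JSON object")]}
    violations = [(r.rule_id, r.description) for r in RULES if not passes(r, output)]
    return {"approved": not violations, "violations": violations}
```

Storing the result of `validate` next to each request and its model output is one simple way to start the audit trail.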

If you are building classical ML models for regulated domains

Consider a hybrid setup where your neural model generates features and confidence scores, and a symbolic layer applies decision logic on top of those scores rather than using the raw probabilities directly. You maintain the pattern-recognition power of the neural model while making the final decision logic explicit and auditable.
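Here is one way to keep the model's score and the decision logic separate. The fraud-screening scenario, the thresholds, and the rule IDs are illustrative assumptions. `fraud_prob` stands in for the output of whatever model you already have. Fixed cut-offs like these only make sense if that score is reasonably calibrated.

```python
# Illustrative thresholds; in practice they would be agreed with risk owners and documented.
DECLINE_AT = 0.90
REVIEW_AT = 0.60
NEW_ACCOUNT_REVIEW_AT = 0.40
NEW_ACCOUNT_DAYS = 30


def decide(fraud_prob: float, account_age_days: int, on_sanctions_list: bool) -> dict:
    """Explicit decision logic on top of a (hypothetical) model's fraud probability."""
    if on_sanctions_list:
        return {"decision": "decline", "reason": "H1: sanctions match (hard rule, model score ignored)"}
    if fraud_prob >= DECLINE_AT:
        return {"decision": "decline", "reason": f"S1: score {fraud_prob:.2f} >= {DECLINE_AT}"}
    if fraud_prob >= REVIEW_AT:
        return {"decision": "manual_review", "reason": f"S2: score {fraud_prob:.2f} in review band"}
    if account_age_days < NEW_ACCOUNT_DAYS and fraud_prob >= NEW_ACCOUNT_REVIEW_AT:
        return {"decision": "manual_review", "reason": f"S3: new account with score {fraud_prob:.2f}"}
    return {"decision": "approve", "reason": f"S4: score {fraud_prob:.2f} below review thresholds"}
```

Every branch returns a reason code, so a reviewer can see which rule drove each outcome. Changing a threshold becomes a code review rather than a retraining run.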

If you are evaluating RAG pipelines

Look carefully at your knowledge source. A well-structured knowledge graph as your symbolic layer will give you more precise, multi-hop retrieval than a flat vector index. It is more work to maintain, but the reasoning depth improves substantially, particularly for complex domain-specific queries.

If you are watching the tooling landscape

Keep an eye on Snowflake’s Open Semantic Interchange, a new open standard for machine-readable semantic context that Snowflake co-founded with BlackRock, S&P Global, dbt Labs, and Sigma. The idea is to give agents a shared semantic layer so that when they reason across data sources, they operate on consistent definitions. That is a symbolic knowledge infrastructure play, and it will matter for how agentic systems are governed.

What this actually changes about how we build AI

The mental model shift matters more than any specific tool. For the past several years, the dominant paradigm in applied ML has been: collect more data, train a bigger model, evaluate on a holdout set, ship. Explainability was an afterthought, often literally implemented as a post-hoc wrapper (SHAP, LIME) rather than something intrinsic to how the model made decisions.

Neuro-symbolic AI inverts that priority. You design the reasoning structure first. What are the constraints this system must respect? What business logic must be provably satisfied? What is the audit trail that needs to exist? The symbolic layer answers those questions. Then the neural layer fills in everything the rules cannot capture, which is usually most of the hard perceptual and linguistic work.

This is a healthier way to build AI systems for the enterprise. Not because it is morally superior. Because it is operationally more sustainable. Models built this way fail in more predictable ways. They can be audited. They can be corrected without retraining from scratch. And they can be explained to stakeholders who will not accept “it’s a black box” as a final answer.

2026 is the year the industry stopped treating explainability as a nice-to-have and started treating it as a first-class engineering requirement. Neuro-symbolic AI is the architecture that makes that possible at scale.

The question for data scientists is not whether neuro-symbolic AI will matter. It clearly already does. The question is how much symbolic structure you are willing to design upfront, and whether your organization is ready to treat that design work as real engineering rather than a paperwork exercise.

Start small. Add one explicit rule layer on top of your next model. Document it. Build the audit trail. See what questions that raises in your organization. The answers will tell you exactly how far to go next.

Neuro-Symbolic AI; Explained Simply was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.