tl;dr: Lens Academy offers a new course introducing ASI x-risk for AI safety newcomers, centered around the book IABIED. We share our hypothesis of why IABIED seems more appreciated by AI Safety newbies than by AI Safety insiders.

Lens Academy's new intro course uses IABIED to teach newbies about ASI x-risk

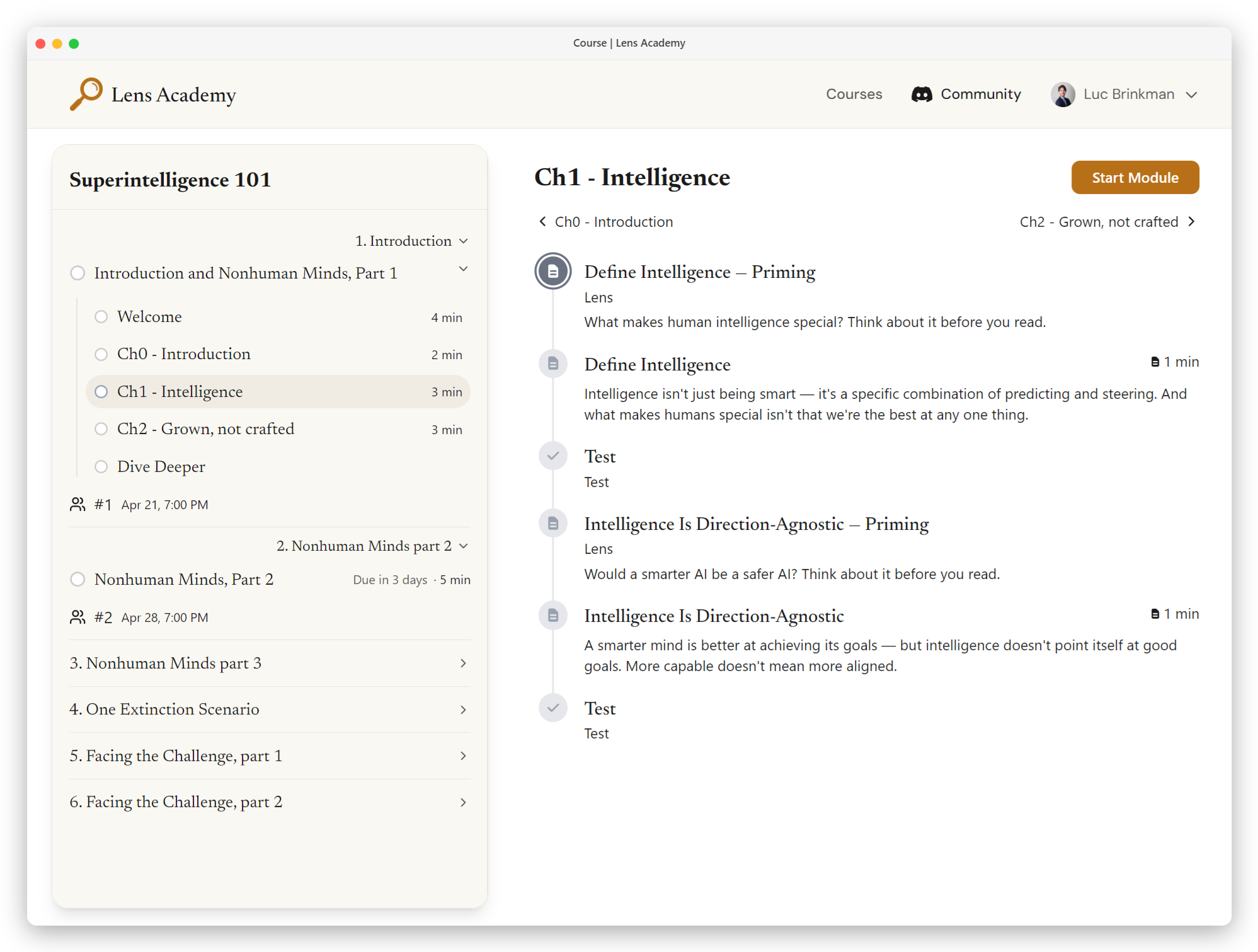

Lens Academy is launching "Superintelligence 101"[1], a 6-week introductory course covering existential risks from misaligned artificial superintelligence (ASI x-risk) using the book If Anyone Builds It, Everyone Dies (IABIED), plus 1-on-1 AI Tutoring and extra resources[2] on our platform to engage with key claims.[3] Each week ends with a facilitated group meeting.

Anyone can enroll, and everyone is accepted. We're set up to be highly scalable, so we don't reject any applications. In fact, we don't even have applications.

Sign up here as a participant or navigator (facilitator): https://lensacademy.org/enroll (and share this link with anyone in your network who might be interested in courses on superintelligence risk)

Teaching ASI x-risk to AI safety newcomers is different from teaching to insiders:

1. Good resources explaining ASI x-risk barely exist

When creating our first course (Navigating Superintelligence), we repeatedly ran into the problem that for most of the learning outcomes we wanted to achieve, there are very few good, easily understandable resources out there.[4]

In many such cases, the best introductory resources are chapters from IABIED.

2. IABIED seems to be pretty successful in convincing newbies to worry about AI x-risk

A few datapoints:

- One of our course creators recently did a test run of an IABIED book club and saw remarkable subjective success.

- AI Safety Quest has had several applications for their Navigation Calls program that mentioned IABIED as a reason for their interest in AI Safety.

3. IABIED seems less successful at convincing AI safety insiders that alignment is hard

The book seems to convince AI safety insiders of the "MIRI / ASI x-risk / alignment-is-hard" point of view less well than it lands with the general population (i.e. AI safety newbies).

Insiders don't like IABIED because it wasn't written for them

We think IABIED doesn't resonate with insiders because it was written for a general audience. As a general audience work:

- IABIED gives an end-to-end overview of the case for ASI x-risk in support of its thesis: "if anyone, anywhere on Earth builds a machine superintelligence using techniques anything like today's, all humans will die." The authors argue through a series of claims that, IF they are TRUE, together make a compelling case for the overall conclusion that "we would all die".

- But crucially, the book doesn't (deeply) explain the arguments and evidence behind the claims.

- Instead, it uses parables and analogies to make them intuitive, which seems great for a general audience but not useful (or maybe even counterproductive) for people who are already familiar with "the MIRI point of view" and anchored on the belief that "the MIRI point of view is a minority view in the AI Safety community".

This seems to lead to a split: AI safety insiders don't care much for the book, nor hold it in particularly high esteem, whereas it gets AI safety outsiders to care about the core of the alignment problem more reliably than any other resource we know of.

However, there are far too few AI safety insiders in the world. A broad-based change in the way the public sees and talks to their representatives about AI is needed to avert disaster. Because of these observations, we claim:

If Everyone Reads It, Nobody Dies.

- It doesn't need to convince everyone who reads it. We expect it will convince a large enough portion of people that collective action would be taken to globally pause ASI development.

- It's impossible to get literally everyone to read the book, but at least we can try to get more people to read it and, crucially, engage with it more deeply.[5]

Sign up here as a participant or navigator (facilitator): https://lensacademy.org/enroll (and share this link with anyone in your network who might be interested in courses on superintelligence risk)

[1] The name of the course is likely to change a couple of times in the coming months.

[2] From both ifanyonebuildsit.com and many other sources.

[3] When most people read a non-fiction book about "important stuff", they talk about it with their network for a week or two and then move on to the next attention-grabbing topic. Since we think AI safety is much more important than the next attention-grabbing topic, we would like to help readers solidify the material and take steps toward getting involved.

[4] Such as articles and videos.

[5] There are a lot of extra resources online at ifanyonebuildsit.com, but we suspect barely anyone reading the book will look them up, especially if they disagree with the claims made in the book.
