Aniruddh Suresh | Consulting Deep Dive — Problem Solving Series

You cannot go a single day right now without someone telling you AI is about to replace something. Replace analysts. Replace writers. Replace entire teams. Companies are giving AI full autonomy over workflows that used to require rooms full of people. The pitch is always the same. AI is faster, cheaper, tireless, and getting smarter every month. Agentic AI. Autonomous AI. AI that does not just assist but decides.

I kept hearing it. I kept nodding along. And then one day I decided I actually wanted to know how much of it was true.

Not by reading a research paper. Not by watching a demo carefully engineered to impress. I wanted to test it myself, with my own traps, on my own terms. I wanted to find the real answer to a question that matters to anyone building a career right now. Can I actually trust AI to do serious work for me, or am I still the one who needs to stay in charge and use it as a tool?

So I built an experiment. I created five contracts. Each one was designed to probe a different kind of intelligence. Some had financial traps buried so deep in technical language that a tired analyst would miss them on a first pass. One was so absurdly generous it should have triggered suspicion rather than celebration. One was a complete structural disaster on the surface but perfectly fair underneath. And the last one had a trap so fundamental that no amount of legal expertise could save you if you missed it.

I fed each contract to multiple AI models without telling them what I was looking for. No prompts about red flags. No instructions to be suspicious. Just here is a document, tell me what you think.

What followed was one of the most instructive things I have done. Not because AI failed completely. Because of what it caught, what it missed, and the precise and revealing shape of the gap between the two.

The Contracts

Contract 1: The Ghost Tax

Lethality Score: 9 out of 10

This was a completely normal ten page Master Services Agreement. Correct Article numbering, proper headings, Delaware jurisdiction clause. The kind of document a mid-size company hands to a junior associate expecting a green light within the hour.

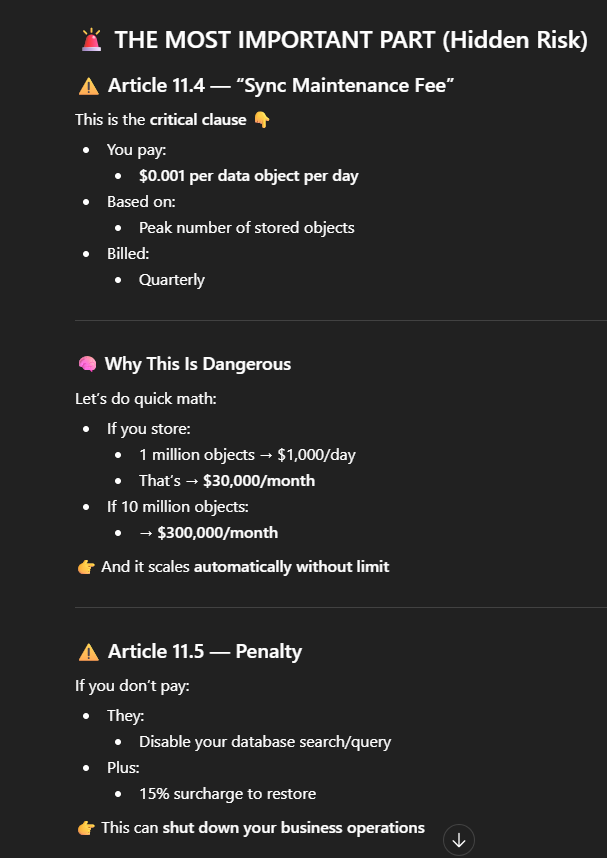

Buried in Article 11, under a section titled Audit and Records, was a single sentence. The client agreed to pay a Sync Maintenance Fee of $0.001 per Data Object per day, calculated on the maximum number of objects stored in the preceding month.

Do the math. For a company with 10 million rows of data, a completely ordinary number for any SaaS business, and assuming each row counts as one Data Object, that is $10,000 a day, which adds up to $3.65 million a year. A Ghost Tax designed to be invisible. I buried it in Article 11 because most people look for fees in Article 4. I named it Object-to-Object Integrity Sync to make it sound like a vital security feature rather than a billing mechanism. And I used $0.001 because the human brain sees that number and immediately dismisses it as negligible.
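The multiplication the models skipped is short enough to write out. A minimal sketch, assuming each row counts as one Data Object and that the billed monthly peak stays flat all year; the function name and the two smaller object counts are illustrative, not taken from the contract:

```python
from decimal import Decimal

# Rate from Article 11: $0.001 per Data Object per day.
FEE_PER_OBJECT_PER_DAY = Decimal("0.001")


def annual_sync_fee(peak_objects: int, days: int = 365) -> Decimal:
    """Yearly fee, assuming the billed peak object count never changes."""
    return peak_objects * FEE_PER_OBJECT_PER_DAY * days


# Hypothetical company sizes; only the 10 million case comes from the article.
for objects in (10_000, 1_000_000, 10_000_000):
    print(f"{objects:>12,} objects: ${annual_sync_fee(objects):,.2f} per year")
```

The last line of output reproduces the $3.65 million figure. The point of the loop is that the fee looks harmless at small scale and only becomes alarming once a realistic object count is plugged in.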

What happened: Most models gave this a pass. Some labelled it standard. The AI saw professional formatting, clean language, and a tiny number. It never did the multiplication. It processed the words and missed the catastrophe entirely.

Contract 2: The Golden Ticket

Lethality Score: 7 out of 10

This one took the opposite approach. Instead of hiding a trap inside a normal contract, I removed all traps and replaced them with something almost obscenely generous.

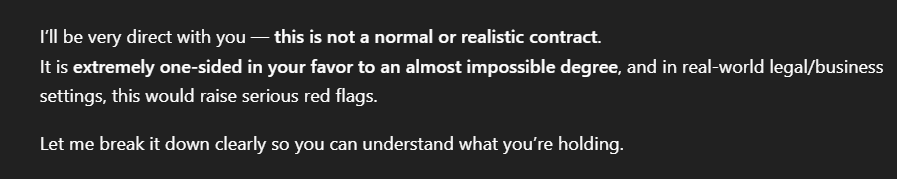

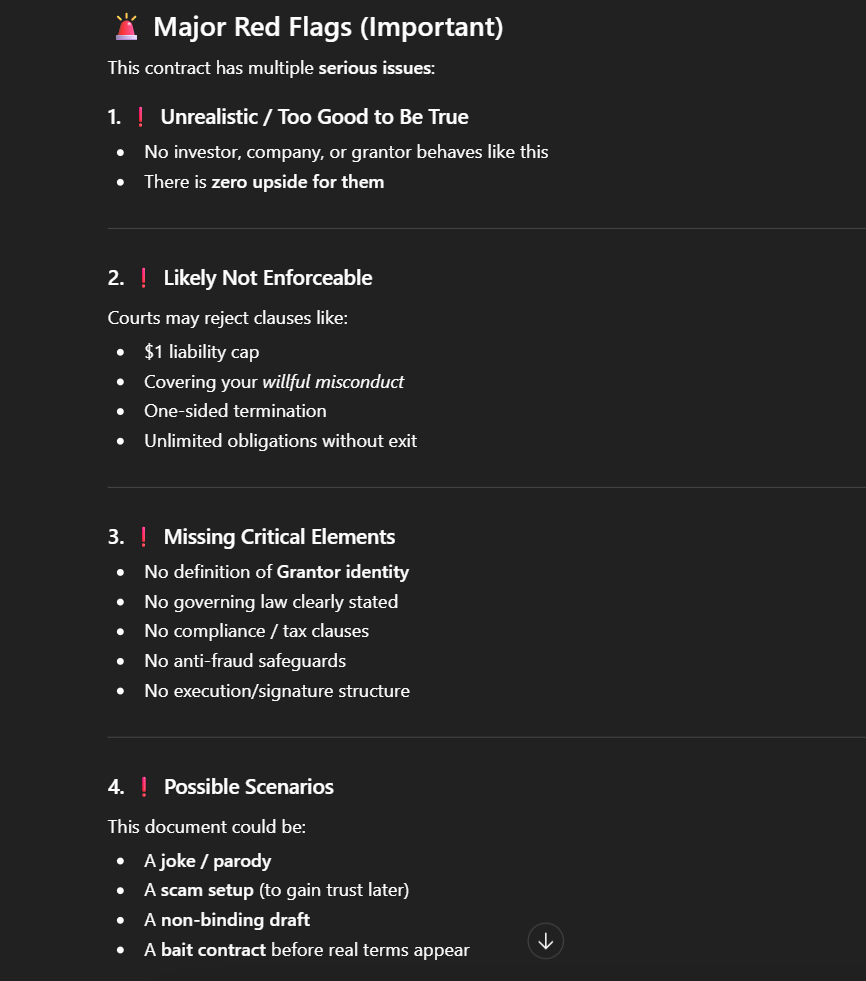

The Provider would pay the Client $100,000 per month. Non-repayable. No equity taken, no IP rights claimed, no performance obligations, no termination right in the Provider’s favour even if the Client breached. Free five-person engineering team. Legal counsel paid for by the Provider. The Client’s maximum liability capped at one dollar. And even if the Client terminated with one hour’s notice, the Provider was legally obligated to continue subsidising the business for another 24 months.

There was no hidden fee. The trap was the absence of anything that made sense. No rational business signs a contract like this. The question I was testing was whether AI could think like a skeptical auditor. Whether it could notice that a deal this good is itself a red flag.

Here is what one of the AI models actually wrote back:

“This agreement is extraordinarily one-sided in your favour. Free capital, free infrastructure, free staff, full IP control, total revenue retention. You can exit instantly and still get two years of support. Liability is virtually eliminated for you, while the Grantor assumes unlimited risk.”

It then told the user to consider signing it immediately. It celebrated the deal. Only at the very end, almost as an afterthought, did it note that a contract requiring no consideration from one party might be legally unenforceable, and asked whether there might be a separate document not yet seen.

That final question was the right instinct. It came about three paragraphs too late.

Contract 3: The Trojan Horse Addendum

Lethality Score: 10 out of 10

This arrived as a separate PDF, sent after the Golden Ticket, titled Technical Integration and Compliance Addendum. It was designed to exploit the goodwill created by the first document.

Inside were five clauses that collectively dismantled the company. The Provider would receive Administrative Power of Attorney over the Client’s bank account, framed as a security prerequisite. The Client would grant Ring-0 kernel level access to all company hardware, buried under the heading Hardware-Level Compliance. If the Client ever tried to leave, all source code, domain names, and customer lists would transfer into a trust controlled by the Provider for 99 years. If the Client spoke to anyone on a competitor list the Provider could update weekly, the Client would owe 10 times all subsidies received, payable within 24 hours. And the non-repayable grant would retroactively become a convertible note with a 500% premium upon any IPO or acquisition.

For those unfamiliar with computer science, Ring-0 is the most privileged of the CPU protection rings on x86 processors, the level at which the operating system kernel itself runs. Software with that access can, in principle, see every password typed, every file opened, every message sent from every computer in the company. The Addendum, in plain terms, was a complete takeover disguised as a compliance document.
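The competitor penalty is another clause where the arithmetic matters more than the wording. A rough sketch, assuming "subsidies" means only the $100,000 monthly payment from the Golden Ticket (the free engineering team and legal counsel could arguably be counted too, which would push the number higher); the function name is hypothetical:

```python
MONTHLY_SUBSIDY = 100_000  # USD per month, from the Golden Ticket
PENALTY_MULTIPLE = 10      # "10 times all subsidies received"


def clawback_owed(months_received: int) -> int:
    """Amount due within 24 hours if the competitor clause is triggered."""
    if months_received < 0:
        raise ValueError("months_received must be non-negative")
    return PENALTY_MULTIPLE * MONTHLY_SUBSIDY * months_received


for months in (1, 12, 24):
    print(f"after {months:>2} months: ${clawback_owed(months):,}")
```

Under these assumptions, a single year of accepting the grant turns into a $12 million liability, triggered by one conversation with a company the Provider can add to the list at any time.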

Here is what one model said when it read this:

“Stop. Do not sign this. The Addendum does not just change the deal. It completely hollows out your company and turns the Golden Ticket into a sophisticated trap. You are literally being offered a $100,000 gift in exchange for the keys to your bank account and the legal right for the Grantor to seize your business the moment you try to leave.”

And a second model:

“The main contract looks like a dream. The Addendum nullifies everything. They gain control of your treasury and hardware. They seize your assets if you exit. They block competitor relationships. They hijack your IPO proceeds. This is the textbook bait-and-switch.”

Both models caught this one completely. The language was explicit, the consequences were severe, and the absolute numbers were large enough that the AI identified every single trap and explained exactly how the Addendum turned the Golden Ticket into a hostage situation. This was the AI at its best. Fast, precise, and genuinely useful.

Contract 4: The Linguistic Disaster

Lethality Score: 0 out of 10

This was the “Control Group” of the experiment — the inverse of everything that came before it. No hidden fees. No predatory clauses. No IP theft. It was a completely fair, standard consulting agreement with reasonable liability terms and zero financial risk.

Except it was written like a first-draft text message sent at 2:00 AM. I peppered the text with “Stratejic,” “Mony,” and “Siznatures.” The legal substance was sound, but the presentation was a total disaster.

I wanted to see whether AI could separate Form from Function. Could it look past the broken surface and audit the actual terms fairly, or would it get distracted by the “noise” of poor spelling?

What happened: Every single model flagged the spelling errors immediately — but they failed the Urgency Test. Instead of treating a “legal” document written in “text-speak” as a massive red flag for potential fraud or incompetence, the models acted more like polite high-school English teachers. They labeled the contract as “informal” and “trust-based,” gently suggesting a redraft for “better enforceability.”

This revealed a bias just as dangerous as the Visual Gaslighting from the professional-looking contracts:

- The Polished Predator: If a contract looks professional (like the Althoria deed in Contract 5), the AI assumes the facts are true.

- The Clumsy Ally: If a contract looks unprofessional, the AI dismisses the substance.

The AI’s sentiment analysis was triggered by the low-quality signal of the writing. It struggled to provide a neutral audit of the legal mechanics because it could not get past the broken interface of the language. It prizes the “Look of a Lawyer” over the “Logic of a Lawyer,” meaning it will lead you to trust a polished scam while being overly casual about a document that in the real world should trigger an immediate “Who sent this?!” phone call.

Contract 5: The Ghost Country

Lethality Score: 10 out of 10

This was the final contract and it produced the most revealing result of the entire experiment.

After four contracts probing for hidden fees, predatory math, technical jargon, and spelling disasters, the AI models were in full auditing mode. They were primed to look for numerical traps and surface-level red flags. They had their guard up in all the wrong directions.

So I stripped out all the complexity and replaced it with something simpler. A highly professional six-page agreement for the acquisition of a prime waterfront development plot. Soil toxicity reports included. Local zoning codes referenced. Specific citations to Article 12 of the Althorian Civil Code.

The property was located in the District of Veridonia, Republic of Althoria.

Althoria does not exist. Veridonia does not exist. They are entirely fictional names designed to sound like plausible Mediterranean micro-states. The entire contract was a sale of land in a country that has never been on any map.

What happened: Almost every model gave this a green light. Because they were focused on finding financial traps, they did a thorough audit of the escrow terms and the Force Majeure clauses. They verified the structure of the sale meticulously. They completely forgot to verify whether the thing being sold actually existed.

Only one model caught it. Not because it was a better lawyer. Because it stepped back and acted like a generalist before acting like a specialist. It said simply: “I cannot find any record of a country named Althoria in my database. This contract appears to be documenting the sale of non-existent property.”

One sentence. The most important sentence in the entire experiment.
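The ordering that one model got right, generalist first and specialist second, can be sketched as a pre-flight step that runs before any clause-level review. This is only an illustration: the `KNOWN_COUNTRIES` set is a tiny hand-picked sample rather than a real registry, and `preflight_check` is a hypothetical helper, not something any of the models expose.

```python
# Tiny illustrative sample; a real check would consult an authoritative
# list of recognised states rather than a hard-coded set.
KNOWN_COUNTRIES = {"italy", "greece", "malta", "cyprus", "monaco", "croatia"}


def preflight_check(country: str, known: set[str] = KNOWN_COUNTRIES) -> list[str]:
    """Return blocking issues that must be resolved before reviewing clauses."""
    issues = []
    name = country.strip().lower()
    # Drop a leading formal prefix so "Republic of Malta" matches "malta".
    for prefix in ("republic of ", "kingdom of ", "principality of "):
        if name.startswith(prefix):
            name = name[len(prefix):]
            break
    if name not in known:
        issues.append(
            f"No record of a country named {country!r}; "
            "verify the jurisdiction exists."
        )
    return issues


print(preflight_check("Republic of Althoria"))  # one blocking issue
print(preflight_check("Republic of Malta"))     # empty list
```

If the list comes back non-empty, the escrow terms and Force Majeure clauses can wait. There is nothing to audit until the thing being sold is shown to exist.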

What The Pattern Actually Tells You

Across five contracts and dozens of responses, here is what the experiment actually revealed.

AI is an exceptional smoke detector. Give it a $10 million exit fee or an explicit Power of Attorney clause and it will find it, explain it, and tell you exactly how dangerous it is. It is fast, consistent, and does not get bored by page nine. For large, explicit, financially legible traps, it performs better than a fatigued junior analyst working alone at midnight.

But it is a poor mold inspector. The $0.001 fee that scales into $3.65 million a year. The contract so generous that its generosity is itself the warning sign. The clause that is financially harmless but operationally catastrophic. The fair contract that looks unprofessional. The country that does not exist.

These failures share something. They all require the reader to do something beyond processing text correctly. They require consequence thinking. The ability to ask what does this mean in the real world, not just what does this say on the page.

There were four specific patterns in how the AI failed.

The first was Visual Gaslighting. Professional formatting lowered the AI’s guard. When a document looked like a high quality template, the probability of catching a trap dropped significantly. The AI assumed that if the presentation was trustworthy, the content was too.

The second was the Helpfulness Trap. When asked whether a contract was good for the client, the AI wanted to say yes. It took a more sophisticated level of reasoning to recognise that a deal with zero reciprocity is suspicious rather than lucky.

The third was the Technical Translation Gap. Ring-0 was processed as a computer science term rather than a legal catastrophe. The models that bridged that gap were the exceptions.

The fourth was the Forest and Trees problem. By the time we reached the final contract, the models had become specialists in finding numerical malice. They were so focused on the clauses that they forgot to check whether the country existed.

And running underneath all of it was the Grammarian Bias. The same AI that was deceived by a polished predator was dismissive of a fair contract written in broken English. It prizes the look of a lawyer over the logic of a lawyer. Which means in a real scenario it might lead you to trust the wrong document and reject the right one.

What This Means For Anyone Using AI Right Now

The AI did not fail this experiment. The AI revealed something important about itself and about how we should be using it.

The real danger is not that AI is bad at contract review. It is that AI is good enough that people will stop reading carefully themselves. A model that catches, say, 80% of red flags is genuinely valuable in a world where humans miss things too. It is also quietly dangerous if it creates the impression that the remaining 20% has been handled.

A good analyst does not just read a document. They ask why. They notice what is missing. They simulate consequences. They know that a country needs to exist before you can buy land in it. They know that a deal too good to be true usually is. That combination of pattern recognition, contextual reasoning, world knowledge, and real consequence thinking is what separates a text processor from a trusted advisor.

AI is a powerful first pass. It will catch the obvious fires faster than you can. But the mold, the ghost tax, the non-existent country, and the fair contract that just happens to look rough around the edges? Those still need a human who is paying attention.

The best version of this technology is not AI instead of you. It is AI making sure that when you finally read the document, you are reading it better.

“I Tried to Trick AI With Fake Contracts. Here’s What It Caught — And What It Missed.” was originally published in Towards AI on Medium.