Multi-agent systems (MAS) in real-world applications

We are seeing more use of distributed multi-agent systems (MAS) in real-world applications like logistics, robotics, IoT, and complex enterprise workflows.

But these systems struggle with task assignment. When many intelligent agents work together, the system must decide who does what and why.

As these systems take on more tasks, there’s an increasing need for them to be understandable, trustworthy, and accountable for their autonomous choices.

Autonomous agents are powerful, but fully trusting them is risky when they cannot explain their actions.

In this article, we will explore the role of explainable agentic AI in autonomous task allocation and break down exactly how it works within distributed multi-agent systems.

What is Agentic AI?

Agentic AI refers to artificial intelligence systems capable of planning, reasoning, and acting autonomously to achieve specific goals.

Unlike traditional AI, which operates within narrow boundaries and requires human direction, agentic AI exhibits independent behavior.

These systems are designed to perceive their environment, evaluate options, and initiate actions without requiring a direct prompt for each step.

The key characteristics of agentic AI include goal-driven behavior, where the system breaks down a broad objective into a series of logical subtasks.

These systems also demonstrate sophisticated tool use and interaction with the environment, utilizing external APIs, databases, and software integrations to perform work across digital ecosystems.

Multi-step reasoning workflows allow the agent to monitor its progress, identify gaps in its current plan, and adapt its actions based on real-time feedback.
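As a rough illustration of the plan, act, and observe cycle described above, here is a minimal sketch. The `plan` function, the `TOOLS` table, and the order-fulfilment goal are all made up for the example and do not correspond to any real agent framework. This toy agent simply stops on a failed step instead of replanning.

```python
from typing import Callable

# Hypothetical tools: each receives the running state and returns an observation.
TOOLS: dict[str, Callable[[dict], str]] = {
    "lookup_inventory": lambda state: "inventory=12",
    "reserve_stock": lambda state: (
        "reserved" if "inventory=12" in state["observations"] else "failed"
    ),
}

def plan(goal: str) -> list[str]:
    """Toy planner: map a broad goal to an ordered list of subtasks (tool names)."""
    return ["lookup_inventory", "reserve_stock"] if goal == "fulfil_order" else []

def run_agent(goal: str) -> dict:
    """Run each planned step, record what happened, and stop early on failure."""
    state = {"goal": goal, "observations": [], "trace": []}
    for step, tool_name in enumerate(plan(goal), start=1):
        observation = TOOLS[tool_name](state)
        state["observations"].append(observation)
        state["trace"].append(
            {"step": step, "action": tool_name, "observation": observation}
        )
        if observation == "failed":
            break  # a fuller agent would replan here
    return state

result = run_agent("fulfil_order")
for entry in result["trace"]:
    print(entry)
```

The point of the sketch is that no step needs a separate human prompt, and every step leaves a record that can later be inspected.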

Here is a table of the differences between traditional AI and agentic AI.

What are Multi-Agent Systems (MAS)?

A multi-agent system consists of multiple interacting autonomous agents that collaborate and coordinate to solve problems that exceed the capacity of any single agent.

The key capabilities of multi-agent systems include distributed problem solving and the ability to operate in dynamic, uncertain environments.

For example, in swarm robotics, individual drones or robots act as agents that must communicate to avoid collisions and allocate search areas during a rescue mission.

In smart grids, agents representing power generators and storage units negotiate in real-time to balance the electrical load.

MAS architectures are highly resilient: because control is distributed, the failure of a single agent does not necessarily result in the failure of the entire system.

What is Task Allocation in MAS?

Task allocation is the process of assigning specific tasks to individual agents within a multi-agent system based on their capabilities, the current context, and the overall goals of the system.

Efficient task allocation ensures that resources are utilized optimally, deadlines are met, and system energy consumption is minimized.

This process can be divided into several categories depending on the architecture and environment of the system.

- Centralized allocation involves a single coordinator agent or a central station that gathers information from all agents and determines the global assignment. While this can lead to optimal solutions, it often faces scalability bottlenecks and introduces a single point of failure.

- Decentralized allocation allows agents to negotiate directly with one another to reach a consensus on task distribution. This approach is more scalable and robust, but can be computationally complex to implement effectively.
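To make the centralized case concrete, here is a small sketch of a coordinator that sees every agent's cost and picks the globally cheapest one-to-one assignment. The `COST` table and the drone and task names are invented for illustration. The exhaustive search over permutations is chosen for readability, and its factorial growth also hints at why centralized allocation hits scalability limits.

```python
from itertools import permutations

# Hypothetical cost table: COST[agent][task] is the cost of that agent doing that task.
COST = {
    "drone_a": {"scan": 4, "deliver": 9, "charge": 3},
    "drone_b": {"scan": 2, "deliver": 6, "charge": 8},
    "drone_c": {"scan": 7, "deliver": 5, "charge": 4},
}

def centralized_allocate(cost):
    """Try every one-to-one assignment and keep the one with the lowest total cost."""
    agents = sorted(cost)
    tasks = sorted(next(iter(cost.values())))
    best_total, best_assignment = None, None
    for ordering in permutations(tasks):
        total = sum(cost[agent][task] for agent, task in zip(agents, ordering))
        if best_total is None or total < best_total:
            best_total, best_assignment = total, dict(zip(agents, ordering))
    return best_assignment, best_total

assignment, total_cost = centralized_allocate(COST)
print(assignment, total_cost)
```

A decentralized system would reach an assignment through messages between agents rather than a single function with global knowledge.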

The Need for Explainability in Agentic MAS

Why Explainability Matters

Explainability maintains human oversight and builds trust in autonomous systems. When an agent makes a decision that affects a business process or a physical system, human operators must understand the rationale behind that action to verify its safety.

Trust is established when the system’s behavior is predictable and consistent with human expectations. Without explainability, any error or unexpected behavior can lead to a complete loss of confidence in the technology.

Beyond trust, explainability is a critical requirement for regulatory compliance and auditing. Emerging legal frameworks, such as the EU AI Act, place transparency, record-keeping, and human-oversight obligations on high-risk AI systems, so operators must be able to interpret and justify system outputs, including when checking for unfair or discriminatory outcomes.

In the event of a system failure, explainability facilitates forensic debugging and failure analysis. It allows developers to identify whether the issue stems from an agent’s perception, planning logic, or a breakdown in inter-agent communication.

Challenges of Explainability in MAS

Explaining the behavior of a multi-agent system is more difficult than explaining a single-agent model.

One of the primary challenges is emergent behavior, in which interactions among multiple simple agents produce group dynamics that were not explicitly programmed.

Because decisions are distributed and non-linear, the final outcome of a system-level task may not be traceable to any single agent’s decision.

Multi-step reasoning further complicates the process, as agents must maintain long-term consistency across a sequence of actions.

In a dynamic environment, the logic behind an action can change rapidly as new information arrives, making it difficult to capture a stable explanation.

Traditional explainability methods often focus on single predictions, but agentic systems require explanations that account for decision trajectories over time.

When outcomes arise from interactions rather than individual actions, the explanation must encompass the entire coordination logic of the group.

From Traditional XAI to Agentic Explainability

Limitations of Traditional XAI

Traditional Explainable AI (XAI) techniques, such as SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations), are primarily designed for static, one-off predictions.

These methods focus on feature importance, highlighting which inputs had the most impact on a classification or regression result. While effective for understanding why a model labeled an image as a cat, they are not suitable for sequential decisions found in agentic workflows.

Feature-based explanations fail to capture the temporal dependencies and the reasoning-out-loud patterns characteristic of autonomous agents.

In a multi-agent setting, a feature’s influence can vary with what other agents have done, making static feature rankings misleading.

Furthermore, these methods do not provide decision traceability; they explain what features were important but not why the agent chose a specific tool or abandoned a particular strategy halfway through a task.

Agentic Explainability Paradigm

Agentic explainability shifts the focus from feature-level influence to trajectory-level explanations.

In this new approach, the unit of explanation is an entire execution trace, including the sequence of states, actions, and observations. Agentic explainability emphasizes decision traceability, allowing users to follow the system’s logical path to a goal.

Inter-agent reasoning transparency is another core component. It requires that the communication between agents be made explicit and interpretable, showing how agents influenced one another’s beliefs and plans.

This shift ensures that explanations are grounded in the actual run-time context of the agentic system, providing faithful signals for evaluating and diagnosing autonomous behavior.
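One way to picture a trajectory-level explanation is as a data structure that stores the whole sequence of states, actions, and observations. The sketch below is only an illustration: the class names, fields, and the parcel-delivery scenario are assumptions, not a standard schema.

```python
from dataclasses import dataclass, field

@dataclass
class TraceStep:
    agent: str        # which agent acted
    state: str        # what the agent believed about the world at that point
    action: str       # what it did
    observation: str  # what it saw afterwards
    rationale: str    # the reason it recorded for the action

@dataclass
class ExecutionTrace:
    goal: str
    steps: list = field(default_factory=list)

    def record(self, agent, state, action, observation, rationale):
        self.steps.append(TraceStep(agent, state, action, observation, rationale))

    def explain(self):
        """Render the whole trajectory, not a single prediction, as readable text."""
        lines = [f"Goal: {self.goal}"]
        for number, step in enumerate(self.steps, start=1):
            lines.append(
                f"{number}. {step.agent} | state: {step.state} | action: {step.action} "
                f"| observed: {step.observation} | because: {step.rationale}"
            )
        return "\n".join(lines)

trace = ExecutionTrace(goal="deliver parcel 17")
trace.record("planner", "parcel at dock", "assign to carrier_2",
             "carrier_2 accepted", "carrier_2 is closest")
trace.record("carrier_2", "route blocked", "request new route",
             "route B received", "original path had an obstacle")
print(trace.explain())
```

Because each step names the acting agent, the same structure can hold steps from several agents and show how one agent's output became another's input.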

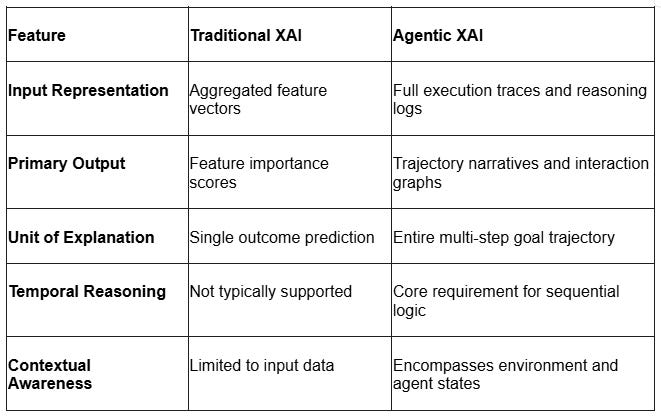

Here is a difference table:

Architectures for Explainable Agentic Task Allocation

Modular Agent Design

Agentic systems should be built using modular designs where different cognitive functions are handled by distinct components.

Each module in the architecture contributes its own explainable signals to the overall decision trail. The primary components of a modular agent include:

- Perception: This module is responsible for state understanding and for processing data from sensors or external sources to build the agent’s internal model of the world.

- Planning: The planning module handles task decomposition, breaking high-level objectives into subtasks and selecting the appropriate tools to execute them.

- Execution: This component performs the actual actions, such as calling an API or moving a physical robotic arm, and monitors the immediate outcomes.

- Communication: The communication module manages information exchange with other agents and users, ensuring intent is communicated clearly.
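The four modules above can be sketched as methods on one class, each appending its own entry to a shared decision trail. Everything here (the `ModularAgent` class, the skill names, the 20% battery threshold) is a hypothetical simplification. In a real system each module would be a separate component.

```python
class ModularAgent:
    def __init__(self, name, skills):
        self.name = name
        self.skills = set(skills)
        self.trail = []  # list of (module, message) entries, one per decision step

    def _log(self, module, message):
        self.trail.append((module, message))

    def perceive(self, raw):
        """Perception: turn raw readings into an internal state."""
        state = {"battery": raw["battery_pct"], "task": raw["task"]}
        self._log("perception", f"battery={state['battery']}%, task={state['task']}")
        return state

    def plan(self, state):
        """Planning: decompose the task and react to low battery."""
        subtasks = [s for s in ("navigate", "grasp") if s in self.skills]
        if state["battery"] < 20:  # assumed threshold for this sketch
            subtasks = ["recharge"] + subtasks
        self._log("planning", f"subtasks={subtasks}")
        return subtasks

    def execute(self, subtasks):
        """Execution: pretend to run each subtask and record the outcome."""
        results = {s: "ok" for s in subtasks}
        self._log("execution", f"results={results}")
        return results

    def communicate(self, results):
        """Communication: report the outcome to other agents or users."""
        message = f"{self.name} completed {len(results)} subtasks"
        self._log("communication", message)
        return message

    def run(self, raw):
        return self.communicate(self.execute(self.plan(self.perceive(raw))))

agent = ModularAgent("arm_1", ["navigate", "grasp"])
report = agent.run({"battery_pct": 15, "task": "pick"})
for module, message in agent.trail:
    print(f"[{module}] {message}")
```

Keeping the trail separate per module makes it possible to tell whether a bad outcome started with a wrong perception, a poor plan, or a failed action.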

Explainable Coordination Mechanisms

Agents need explainable coordination to work together. This includes negotiation protocols equipped with reasoning logs that record the “conversations” between agents. Systems also rely on consensus-based decision-making and role-based task assignment to keep operations structured and understandable.

Centralized vs Decentralized Explainability

When designing these systems, architects must choose how to handle explainability. A centralized approach is easier to audit, but the central hub becomes a bottleneck and a single point of failure. A decentralized approach is highly scalable, but it requires agents to use shared explanation protocols to understand each other.

Consensus-Driven Agent Architectures

In consensus-driven architectures, multiple agents generate candidate decisions for a problem. A dedicated reasoning layer then steps in to aggregate the outputs, resolve any conflicts between the agents, and produce a final, explainable decision. This structured approach improves the system’s robustness and transparency, and with them overall trust.
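A very simple version of such a reasoning layer is a majority vote with a confidence-based tie-break. The sketch below assumes each candidate is a `(proposer, chosen_agent, confidence)` tuple. That format, the names, and the tie-break rule are illustrative choices, not a prescribed design.

```python
from collections import Counter

def aggregate(candidates):
    """Aggregate candidate decisions and say why the winner won."""
    votes = Counter(choice for _, choice, _ in candidates)

    def support(choice):
        # Total confidence behind a choice, used only to break vote ties.
        return sum(conf for _, ch, conf in candidates if ch == choice)

    winner = max(sorted(votes), key=lambda c: (votes[c], support(c)))
    dissent = [proposer for proposer, choice, _ in candidates if choice != winner]
    explanation = (
        f"{winner} chosen with {votes[winner]} of {len(candidates)} votes "
        f"(total confidence {support(winner):.2f}); dissenting: {dissent or 'none'}"
    )
    return {"winner": winner, "votes": dict(votes),
            "dissent": dissent, "explanation": explanation}

CANDIDATES = [
    ("planner_1", "robot_a", 0.9),
    ("planner_2", "robot_b", 0.6),
    ("planner_3", "robot_a", 0.7),
]
decision = aggregate(CANDIDATES)
print(decision["explanation"])
```

Recording the dissenting proposers alongside the winner keeps the conflict visible instead of hiding it behind the final answer.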

Techniques for Explainable Task Allocation

Rule-Based and Symbolic Explanations

Rule-based and symbolic techniques offer a high degree of intrinsic interpretability because they are based on formal logic.

The Belief-Desire-Intention (BDI) model is a well-established symbolic framework where an agent’s behavior is driven by well-structured concepts.

In BDI-based task allocation, an agent’s knowledge about the world (Beliefs) leads it to pursue specific objectives (Desires) through committed plans (Intentions).

Symbolic methods are useful for enforcing constraints, such as ensuring that a task is assigned only to an agent with the required security clearance or hardware capacity.

These systems provide transparent decision-making that is predictable and easy to audit, making them ideal for high-stakes domains like finance and network management.

However, symbolic rules can be rigid, which is why they are often integrated with learning-based models in modern hybrid architectures.
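The BDI idea can be illustrated with a few lines of plain Python. This is a deliberately simplified sketch, not an implementation of any BDI platform: the belief names, desires, and plan preconditions are invented, and real BDI interpreters have richer plan libraries and reconsideration logic.

```python
def deliberate(beliefs, desires, plans):
    """Commit to the highest-priority desire whose plan preconditions are believed true."""
    log = []
    for desire in sorted(desires, key=lambda d: -d["priority"]):
        plan = plans[desire["name"]]
        missing = [p for p in plan["preconditions"] if not beliefs.get(p, False)]
        if missing:
            log.append(f"Dropped '{desire['name']}': beliefs do not support {missing}")
            continue
        log.append(f"Committed to '{desire['name']}': all preconditions believed true")
        return {"intention": desire["name"], "steps": plan["steps"]}, log
    return None, log

BELIEFS = {"has_clearance": False, "battery_ok": True}
DESIRES = [
    {"name": "handle_secure_parcel", "priority": 2},
    {"name": "patrol", "priority": 1},
]
PLANS = {
    "handle_secure_parcel": {
        "preconditions": ["has_clearance", "battery_ok"],
        "steps": ["go_to_vault", "pick_up"],
    },
    "patrol": {"preconditions": ["battery_ok"], "steps": ["follow_route"]},
}

intention, reasoning_log = deliberate(BELIEFS, DESIRES, PLANS)
print(intention)
for line in reasoning_log:
    print(line)
```

The log reads as an explanation for free: the agent did not take the secure parcel because it does not believe it has clearance, so it committed to patrolling instead.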

Attribution-Based Methods

Attribution-based methods like SHAP and LIME provide local explanations for individual decisions by identifying which input features had the most weight.

In task allocation, these can show which agent attributes influenced a specific assignment. This helps identify cases where an agent might be making decisions based on incorrect or biased information.

While these methods are useful for inspecting agent decisions independent of the underlying model, they have limitations in multi-agent workflows. They often fail to capture the long-term temporal dependencies and evolving collaboration logic among agents.

Attribution methods focus on what triggered an action, but they cannot explain the strategic intent of a multi-step plan.

Furthermore, they may produce unstable or inconsistent explanations if the underlying data is noisy or if the model is highly non-linear.
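To show what an attribution looks like for a single assignment, the sketch below computes exact Shapley values by brute force for a made-up allocation score. It does not use the SHAP library. The `score` function, the feature names, and the all-zero baseline are assumptions chosen so the arithmetic is easy to follow.

```python
from itertools import combinations
from math import factorial

FEATURES = ["proximity", "battery", "skill_match"]
AGENT = {"proximity": 0.8, "battery": 0.5, "skill_match": 1.0}
BASELINE = {"proximity": 0.0, "battery": 0.0, "skill_match": 0.0}

def score(x):
    """Hypothetical allocation score with one interaction term (skill x battery)."""
    return 2.0 * x["proximity"] + 1.0 * x["battery"] + 3.0 * x["skill_match"] * x["battery"]

def value(coalition):
    """Score when only the features in `coalition` take the agent's real values."""
    x = {f: (AGENT[f] if f in coalition else BASELINE[f]) for f in FEATURES}
    return score(x)

def shapley(feature):
    """Average marginal contribution of `feature` over all subsets of the others."""
    others = [f for f in FEATURES if f != feature]
    n = len(FEATURES)
    total = 0.0
    for k in range(len(others) + 1):
        for subset in combinations(others, k):
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            total += weight * (value(set(subset) | {feature}) - value(set(subset)))
    return total

attributions = {f: shapley(f) for f in FEATURES}
print(attributions)
```

The attributions add up to the gap between the agent's score and the baseline score, and the interaction term is split evenly between `battery` and `skill_match`. The result says which attributes mattered for this one assignment, but nothing about why the agent followed a particular multi-step plan.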

Trace-Based and Workflow Explanations

Trace-based explainability involves capturing and visualizing the step-by-step reasoning and interaction history of agents.

This includes detailed execution logs that record every tool call and its result, as well as step-by-step reasoning chains (Chain-of-Thought) where the agent explains its internal monologue.

For multi-agent systems, agent interaction graphs are used to show how information flows between various components and how sub-tasks are coordinated.

These traces allow human auditors to identify where an agent’s plan deviated from its actual execution, which is critical for diagnosing brittle behavior or regressions.

Users can quickly get an overview of system behavior and locate errors at specific steps in the pipeline by visualizing the workflow. This technique provides the narrative depth needed to understand complex, non-deterministic agentic behaviors that single-step attribution cannot provide.
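An agent interaction graph can be derived directly from a message log. The following sketch assumes a log of dictionaries with `step`, `sender`, `receiver`, and `intent` keys. The log format and agent names are hypothetical.

```python
from collections import defaultdict

# Hypothetical message log captured during one run.
MESSAGES = [
    {"step": 1, "sender": "coordinator", "receiver": "scout", "intent": "request_scan"},
    {"step": 2, "sender": "scout", "receiver": "coordinator", "intent": "report_obstacle"},
    {"step": 3, "sender": "coordinator", "receiver": "carrier", "intent": "assign_delivery"},
    {"step": 4, "sender": "carrier", "receiver": "scout", "intent": "request_route"},
]

def build_interaction_graph(messages):
    """Group messages into directed edges: (sender, receiver) -> [(step, intent), ...]."""
    graph = defaultdict(list)
    for m in sorted(messages, key=lambda m: m["step"]):
        graph[(m["sender"], m["receiver"])].append((m["step"], m["intent"]))
    return dict(graph)

def upstream_of(agent, messages, before_step):
    """Agents that sent something to `agent` before a given step (direct influence only)."""
    return sorted(
        {m["sender"] for m in messages
         if m["receiver"] == agent and m["step"] < before_step}
    )

graph = build_interaction_graph(MESSAGES)
for edge, events in graph.items():
    print(edge, events)
print(upstream_of("scout", MESSAGES, before_step=5))
```

The edge list can be handed to any graph-drawing tool, and `upstream_of` gives an auditor a starting point when asking which agents could have influenced a given step.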

Explainable Multi-Agent Reinforcement Learning (MARL)

Explainable MARL (XMARL) techniques aim to make individual agent policies and collective team strategies more transparent. Policy interpretation identifies emergent strategies, such as agents grouping together for safety or selecting high-priority targets first.

Reward attribution is another key technique in XMARL, used to show how global team rewards are distributed among individual agents based on their contributions. This helps verify that agents are not just acting autonomously but are doing so in a way that is fair and aligned with global performance metrics.

XMARL also incorporates safety and interpretability into the learning process, helping to prevent “reward hacking” where agents find unintended ways to maximize their scores without completing the actual goal.
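The article does not name a specific reward attribution method. One simple option, shown here as an assumption, is a difference reward: compare the team reward with and without each agent. The coverage task and agent names below are invented for the example.

```python
# Hypothetical coverage task: each agent covers a set of grid cells.
COVERAGE = {"a": {1, 2, 3}, "b": {3, 4}, "c": {2}}

def team_reward(active_agents, coverage):
    """Global reward: number of distinct cells covered by the active agents."""
    covered = set()
    for agent in active_agents:
        covered |= coverage[agent]
    return len(covered)

def difference_rewards(coverage):
    """Credit each agent with how much the team reward drops if it is removed."""
    agents = list(coverage)
    full = team_reward(agents, coverage)
    return {
        agent: full - team_reward([x for x in agents if x != agent], coverage)
        for agent in agents
    }

credits = difference_rewards(COVERAGE)
print(credits)
```

Here agent `c` receives zero credit because its only cell is already covered by `a`, which is the kind of redundancy a reward attribution is meant to surface.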

Autonomous Task Allocation with Explainability

Problem Formulation

To develop an explainable task allocation system, the problem must be formally defined with clear inputs and outputs. The goal is to reach an optimal assignment of tasks to agents while maintaining a transparent reasoning trace.

Inputs:

- Tasks: A set of activities, each with its own requirements, priority, and location.

- Agent Capabilities: A catalog of all available agents, including their hardware, software skills, and current resource levels.

- Environment Constraints: Factors such as physical obstacles, communication range, and safety policies.

Outputs:

- Optimal Allocation: A mapping of tasks to agents that maximizes system utility or minimizes cost.

- Structured Explanation: A report justifying the assignment based on the input factors and the agents’ internal reasoning.
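One common way to write this down, offered here as an assumed formulation rather than the only one, is as a binary assignment problem. Let $A$ be the set of agents, $T$ the set of tasks, $u_{ij}$ the utility of agent $i$ doing task $j$, $r_j$ the resources task $j$ needs, $c_i$ the capacity of agent $i$, and $F$ the set of agent-task pairs forbidden by environment or safety constraints. All of these symbols are introduced for this sketch.

```latex
\begin{aligned}
\max_{x}\quad & \sum_{i \in A} \sum_{j \in T} u_{ij}\, x_{ij} \\
\text{s.t.}\quad & \sum_{i \in A} x_{ij} \le 1 && \forall j \in T
  && \text{(each task goes to at most one agent)} \\
& \sum_{j \in T} r_{j}\, x_{ij} \le c_{i} && \forall i \in A
  && \text{(agent capacity is respected)} \\
& x_{ij} = 0 && \forall (i, j) \in F
  && \text{(forbidden pairs are never assigned)} \\
& x_{ij} \in \{0, 1\} && \forall i \in A,\ j \in T
\end{aligned}
```

The structured explanation can then refer to the same objects: the utilities $u_{ij}$ that favored the chosen agent, and the capacity or forbidden-pair constraints that excluded the alternatives.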

Explainable Allocation Strategies

Several strategies can be used to achieve explainable task allocation, depending on the system’s needs.

- Market-based strategies use transparent bidding, in which each agent’s bid records its estimated utility and cost, making the final assignment process auditable.

- RL-based strategies use interpretable policies in which actions are linked to observable features of the environment, allowing users to understand why an agent prioritized one task over another.
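A market-based allocation with an auditable bid record might look like the sketch below. The utility and cost formulas, the `cost_per_metre` parameter, and the robot data are assumptions made for the example.

```python
def run_auction(task, agents, cost_per_metre=0.5):
    """Collect one bid per agent, keep every bid, and award the task to the best net value."""
    bids = []
    for agent in agents:
        utility = agent["skill"].get(task["type"], 0.0) * task["priority"]
        cost = agent["distance_m"] * cost_per_metre
        bids.append({"agent": agent["name"], "utility": utility,
                     "cost": cost, "net": utility - cost})
    # Highest net value first; agent name breaks ties deterministically.
    bids.sort(key=lambda b: (-b["net"], b["agent"]))
    return {"task": task["id"], "winner": bids[0]["agent"], "bids": bids}

TASK = {"id": "t1", "type": "lift", "priority": 2}
AGENTS = [
    {"name": "r1", "skill": {"lift": 1.0}, "distance_m": 6},
    {"name": "r2", "skill": {"lift": 0.5}, "distance_m": 1},
]

record = run_auction(TASK, AGENTS)
print(record["winner"])
for bid in record["bids"]:
    print(bid)
```

Because the losing bids are kept in the record, an auditor can see that `r1` was more skilled but lost on travel cost.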

Hybrid symbolic and learning approaches combine the flexibility of learned models such as LLMs with the transparency of symbolic rules.

In these systems, a learning-based model may suggest a task allocation, while a symbolic reasoning agent validates it against strict policy constraints and generates the final explanation.
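The propose-then-validate pattern can be sketched as follows. The `learned_proposal` function is only a stand-in for a learned model (it sorts by a made-up score), and the two rules and the UAV data are hypothetical.

```python
def learned_proposal(task, agents):
    """Stand-in for a learned model: rank agents by a made-up suitability score."""
    return sorted(agents, key=lambda a: -a["score"])

# Symbolic policy rules: (human-readable name, check function).
RULES = [
    ("security clearance", lambda task, a: a["clearance"] >= task["min_clearance"]),
    ("payload capacity", lambda task, a: a["capacity_kg"] >= task["weight_kg"]),
]

def validate_and_explain(task, agents):
    """Walk the model's ranking and accept the first candidate that passes every rule."""
    rejected = []
    for candidate in learned_proposal(task, agents):
        failed = [name for name, check in RULES if not check(task, candidate)]
        if not failed:
            return candidate["name"], rejected
        rejected.append((candidate["name"], failed))
    return None, rejected

TASK = {"min_clearance": 2, "weight_kg": 5}
AGENTS = [
    {"name": "uav_1", "score": 0.9, "clearance": 1, "capacity_kg": 10},
    {"name": "uav_2", "score": 0.7, "clearance": 3, "capacity_kg": 8},
]

chosen, rejected = validate_and_explain(TASK, AGENTS)
print(chosen, rejected)
```

The model's top pick is overruled by a named rule, so the final explanation can state both the suggestion and the reason it was rejected.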

Example Workflow

An explainable task allocation workflow typically follows a series of discrete, observable steps:

- Task Decomposition: The system receives a complex objective and breaks it down into subtasks.

- Capability Matching: Each subtask is matched against the capabilities of available agents.

- Negotiation among Agents: Agents communicate to resolve conflicts and coordinate their actions.

- Allocation Decision: The final assignment is made using a consensus or optimization algorithm.

- Explanation Generation: The system produces a narrative that explains:

  - Why this agent? Highlighting its specific capabilities or proximity.

  - Why not others? Identifying cost or resource differences through contrastive analysis.

  - What constraints influenced the decision? Explaining how safety rules or battery limits affected the choice.
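The last two steps, the allocation decision and the explanation, can be sketched together. The example below assumes a single battery constraint and a lowest-cost rule. The candidate data, field names, and wording of the narrative are illustrative.

```python
def allocate_and_explain(task, candidates, min_battery):
    """Pick the cheapest eligible agent and answer the three explanation questions."""
    eligible = [c for c in candidates if c["battery"] >= min_battery]
    if not eligible:
        raise ValueError("no agent satisfies the constraints")
    chosen = min(eligible, key=lambda c: c["cost"])

    lines = [f"Task '{task}' assigned to {chosen['name']}."]
    lines.append(
        f"Why this agent? {chosen['name']} has the lowest cost ({chosen['cost']}) "
        f"among agents that satisfy the constraints."
    )
    for other in candidates:
        if other["name"] == chosen["name"]:
            continue
        if other["battery"] < min_battery:
            lines.append(
                f"Why not {other['name']}? Its battery ({other['battery']}%) "
                f"is below the {min_battery}% minimum."
            )
        else:
            lines.append(
                f"Why not {other['name']}? Its cost is "
                f"{other['cost'] - chosen['cost']} higher than {chosen['name']}'s."
            )
    lines.append(
        f"What constraints influenced the decision? Minimum battery of {min_battery}%."
    )
    return chosen["name"], "\n".join(lines)

CANDIDATES = [
    {"name": "r1", "cost": 4, "battery": 80},
    {"name": "r2", "cost": 6, "battery": 90},
    {"name": "r3", "cost": 2, "battery": 10},
]

winner, narrative = allocate_and_explain("inspect_pump", CANDIDATES, min_battery=20)
print(narrative)
```

Note that `r3` is the cheapest agent overall, so the contrastive line about its battery is what makes the decision understandable.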

System Design Considerations

Here are some common system design considerations:

- Communication Protocols: For agents to explain themselves to each other, they need shared communication protocols. This includes using shared ontologies (a common vocabulary) and structured reasoning exchanges.

- Scalability: System architects must ensure the network can handle large agent populations and distributed computation without crashing or slowing down.

- Latency vs Explainability Trade-off: Real-time systems require fast explanations, so developers must balance the depth of an explanation against the speed at which it is delivered.

- Human-in-the-Loop Integration: Systems should feature interactive explanations and support feedback-driven learning, allowing human operators to correct the AI and improve future performance.
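As a small illustration of the shared-vocabulary idea from the communication protocols point, the sketch below builds a structured message and rejects terms that are not in an agreed ontology. The `ONTOLOGY` contents and the message fields are assumptions. Real deployments would typically use a richer, versioned schema.

```python
import json

# Hypothetical shared vocabulary that every agent agrees on in advance.
ONTOLOGY = {
    "intents": {"bid", "assign", "decline"},
    "reasons": {"closest_agent", "low_battery", "skill_match"},
}

def make_message(sender, intent, task_id, reasons):
    """Build a JSON message, refusing intents or reasons outside the shared vocabulary."""
    if intent not in ONTOLOGY["intents"]:
        raise ValueError(f"unknown intent: {intent}")
    unknown = set(reasons) - ONTOLOGY["reasons"]
    if unknown:
        raise ValueError(f"unknown reasons: {sorted(unknown)}")
    return json.dumps(
        {"sender": sender, "intent": intent, "task": task_id, "reasons": sorted(reasons)}
    )

wire = make_message("robot_3", "decline", "t42", ["low_battery"])
decoded = json.loads(wire)
print(decoded)
```

Because the reasons come from a fixed list, a receiving agent or a human auditor can interpret them without guessing at free-form text.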

Evaluation Metrics

To assess the effectiveness of explainable agentic task allocation, systems must be measured across three main dimensions:

- Task performance

- Explainability quality

- Human trust

Task Performance Metrics:

- Efficiency: How quickly tasks are completed and how much resource is consumed.

- Completion Rate and Success Rate: The percentage of tasks successfully finished according to predefined criteria.

- Tool Utilization Efficacy: The correctness and efficiency of an agent’s calls to external tools and APIs.

Explainability Metrics:

- Interpretability: The ability of human users to comprehend and reason about the provided explanation.

- Fidelity: How accurately the explanation reflects the actual internal reasoning of the model.

- Consistency/Stability: Whether similar inputs and contexts lead to similar explanations.

Trust Metrics:

- Human Confidence: The level of certainty a human operator has in the agent’s decisions.

- Adoption Rate: The frequency with which users accept the system’s recommendations or allow it to operate autonomously.
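Some of these metrics reduce to simple ratios. The sketch below computes a completion rate, an adoption rate, and one possible stability measure (mean pairwise Jaccard similarity between the factors cited in explanations for similar inputs). The Jaccard choice and all sample data are assumptions. Fidelity and interpretability usually need model access or user studies and are not covered here.

```python
def completion_rate(outcomes):
    """Fraction of tasks that met their success criteria."""
    return sum(outcomes) / len(outcomes)

def adoption_rate(decisions):
    """Fraction of recommendations the operator accepted."""
    return decisions.count("accepted") / len(decisions)

def jaccard(a, b):
    """Overlap between two sets of cited factors (1.0 means identical)."""
    a, b = set(a), set(b)
    return len(a & b) / len(a | b) if a | b else 1.0

def explanation_stability(explanations):
    """Mean pairwise Jaccard similarity across explanations for similar inputs."""
    pairs = [
        (i, j)
        for i in range(len(explanations))
        for j in range(i + 1, len(explanations))
    ]
    return sum(jaccard(explanations[i], explanations[j]) for i, j in pairs) / len(pairs)

OUTCOMES = [True, True, False, True]
DECISIONS = ["accepted", "overridden", "accepted", "accepted"]
EXPLANATIONS = [
    {"proximity", "battery"},
    {"proximity", "battery"},
    {"proximity", "skill"},
]

print(completion_rate(OUTCOMES))
print(adoption_rate(DECISIONS))
print(explanation_stability(EXPLANATIONS))
```

A stability score well below 1.0 for near-identical inputs is a signal that the explanations, not necessarily the decisions, are inconsistent.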

Challenges and Open Research Problems

The field of explainable agentic AI still faces several significant hurdles:

- The Illusion of Reasoning: Models can produce coherent but flawed justifications. What appears to be logical reasoning is sometimes just advanced pattern matching and memorization.

- Scaling Explainability: Generating detailed reasoning traces for thousands of interacting agents requires massive computing power, creating a major technical bottleneck.

- The Risk of Overthinking: Agents may spend excessive computational effort on trivial tasks, leading to inefficient or incorrect outcomes.

- Explanation Instability: Slight changes in an agent’s environment can lead to completely different justifications for the exact same decision, which damages user trust.

- Privacy vs. Transparency: Sharing full reasoning traces and inter-agent dialogues risks exposing sensitive enterprise data or proprietary algorithms.

Conclusion

Explainable agentic AI is a foundational requirement for deploying autonomous multi-agent systems in real-world environments. As we move from static, reactive AI to proactive, goal-driven agents, the ability of these systems to provide transparent reasoning traces and interaction logs will bridge the gap between human oversight and machine autonomy. The combination of modular architectures, consensus-driven reasoning, and standardized communication protocols provides a clear path forward for building systems that are powerful, scalable, and trustworthy.

Explainable Agentic AI for Autonomous Task Allocation in Distributed Multi-Agent Systems was originally published in Towards AI on Medium.