Introduction

Most agent systems start at the top layer: a model, a persona, a tool list, and an orchestration wrapper. That works for demos. It does not hold up in production. State ends up split across conversations, approval logic hides inside prompts, and swapping a provider or runtime means rebuilding the loop.

The useful questions sit lower in the stack:

- Which component owns task state?

- Which component enforces policy?

- Which surface lets operators inspect work and step in?

- Which event wakes execution?

- Which protocol must an executor follow to write results back?

- How do you project runtime capabilities into workspaces without drift?

The essential part was discovering which boundaries actually matter. Across each iteration, the same correction kept showing up: centralize the truth, formalize the contract, and police runtime behavior.

This is a technical account of the five key phases in building a personal “Agent OS”: a durable backend called agentic-os, paired with OpenClaw, Claude, and Codex, with Paperclip as the operator control plane.

Phase 1: The State, Policy, and Audit Tension

The first version used an OpenClaw-driven assistant stack with agent escalation, a human-facing task surface, and runtime sessions that carried work through completion. One assistant handled intake and small tasks. Stronger executors took harder work. Notion became the practical task surface. Tasks, drafts, approvals, and outputs had to live longer than a single conversation. The system produced results, but the ownership model was wrong.

If an agent decides whether to escalate, the system doesn’t have an escalation policy. It has an instruction that the agent may or may not follow. That distinction sounds small until something expensive or sensitive happens. Then you need to know who made the call and under which rule. A system cannot answer those questions if the decision lives inside model behavior.

State sat in too many places. Conversations held part of it. Surfaces held part of it. Runtime behavior held part of it. Agents made escalation calls. Prompts shaped approval behavior. Audit existed only when execution happened to leave a trace.

That forced the first durable boundary. Policy moved out of agents and into a backend that owns state. Tasks became first-class objects with explicit lifecycle transitions. The system could create a task, route it, mark it pending review, record approval, accept a result, and preserve the full sequence as system state rather than conversational by-product. Audit stopped depending on whether the runtime happened to leave a useful trace.
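The lifecycle described above can be sketched as a small state machine. This is a minimal illustration, not the agentic-os implementation: the status names, the transition table, and the `Task` class are assumptions chosen to mirror the steps named in the text (create, route, pending review, approval, result).

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    # Hypothetical status names; the article does not list the real ones.
    CREATED = "created"
    ROUTED = "routed"
    PENDING_REVIEW = "pending_review"
    APPROVED = "approved"
    COMPLETED = "completed"


# Allowed transitions. Anything not listed here is rejected by the backend.
ALLOWED = {
    Status.CREATED: {Status.ROUTED},
    Status.ROUTED: {Status.PENDING_REVIEW, Status.COMPLETED},
    Status.PENDING_REVIEW: {Status.APPROVED, Status.ROUTED},  # ROUTED = sent back for revision
    Status.APPROVED: {Status.COMPLETED},
    Status.COMPLETED: set(),
}


@dataclass
class Task:
    task_id: str
    status: Status = Status.CREATED
    audit: list = field(default_factory=list)

    def transition(self, new_status: Status, actor: str) -> None:
        """Apply a transition only if the table allows it, and record it."""
        if new_status not in ALLOWED[self.status]:
            raise ValueError(
                f"illegal transition {self.status.value} -> {new_status.value}"
            )
        # The audit entry is written by the backend as part of the transition,
        # so it does not depend on what the runtime happens to log.
        self.audit.append((self.status.value, new_status.value, actor))
        self.status = new_status
```

Because the audit entry is appended inside `transition`, every accepted state change leaves a record, and a rejected one raises before anything is written.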

This phase established the rule that remained stable through all subsequent changes: agents execute; the backend decides. It decides whether work can run directly or must stop at a plan gate. It stores artifacts and version references. It records audit events around dispatch, review, approval, revision, and completion. Agents do work. The backend decides what kind of work can happen and under which constraints.
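A plan gate of this kind might look like the sketch below. The mode names `direct` and `plan_first` are the ones the execution contract uses later in this account; the request fields, the cost threshold, and the `decide_mode` function are hypothetical and stand in for whatever rules the real policy engine evaluates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskRequest:
    # Hypothetical inputs to the policy decision.
    kind: str
    estimated_cost_usd: float
    touches_sensitive_data: bool


def decide_mode(req: TaskRequest, cost_limit_usd: float = 5.0) -> str:
    """Return 'plan_first' when work must stop at a plan gate, else 'direct'.

    The decision is made by backend code, not by the agent, so it is the
    same for every runtime and can be logged alongside the task.
    """
    if req.touches_sensitive_data or req.estimated_cost_usd > cost_limit_usd:
        return "plan_first"
    return "direct"
```

The point of the sketch is where the decision lives: the agent receives the mode as part of its brief and never evaluates the rule itself.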

Phase 2: The Control Plane Tension

Once the backend existed, the next distinction became clear. A source of truth is not a control plane.

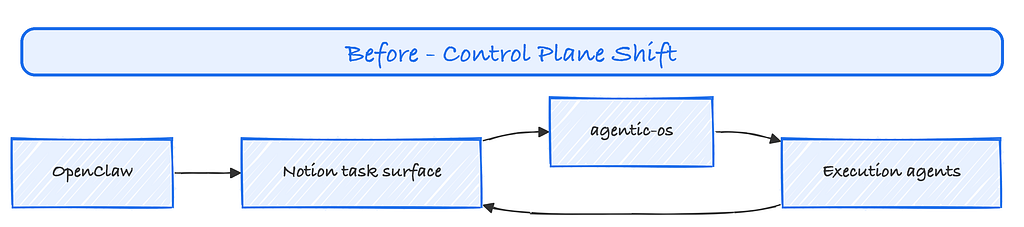

The system needed a surface where a human could create tasks, inspect status, review outputs, intervene on plans, and steer work without touching the database or the CLI. Notion helped at first because humans could create tasks there, inspect status, and track work. That made it useful as an operator surface. It did not make it a good long-term control plane. Notion had no native model for assignment-driven wakeup, execution ownership, plan review, or agent writeback. The backend already handled those jobs. Notion only reflected them.

That realization forced a cleaner split. The backend kept authority over task state, routing, scheduling, delivery, and policy. The operator surface narrowed to issue management, comments, documents, and review. Once that happened, Notion no longer belonged in the live orchestration path.

This phase established another durable rule. Human-facing visibility belongs in an operator surface. Authority over state, policy, and transitions belongs in the backend. The operator surface can project work, collect reviews, and expose status. It should not become the place where the system decides.

Phase 3: The Orchestration Tension

The next break showed up in orchestration costs.

An earlier model relied on polling-style discovery via OpenClaw’s cron jobs, which spawned one-off runtime sessions to check for work. That model looked simple. It was easy to get a running system, but the impression of negligible cost was misleading, because the cost compounded with every poll. The system spent compute to learn that nothing needed to happen.

The primary failure of OpenClaw as an orchestration engine was its lack of pure-path jobs: every heartbeat, run as a job, triggered a wake sequence and routed task discovery through agents. Because the pattern could not be enforced, it became brittle and costly.

That is the wrong boundary for an agent system that expects variable workload. The scheduler should be cheap. Discovering no work should cost close to nothing. Runtime activity should start only after the system has work that merits execution.

The current design changes the wake path. agentic-os creates or adopts tasks, projects them into Paperclip as issues, and writes the execution brief there, which engages Paperclip's event-driven wake path. Paperclip owns heartbeat scheduling, agent wakeup, session lifecycle, and checkout. The assigned agent reads the brief from the issue, does the work, and calls back through POST /api/executions/callback. The backend does not start sessions and does not schedule runtime activity; it only assigns a task using the policy engine, and Paperclip takes over from there.
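To make the writeback step concrete, here is a minimal sketch of what the backend side of POST /api/executions/callback could do with a request body. Only the endpoint path comes from this account. The payload fields (`task_id`, `kind`, `body`), the status strings, and the in-memory `tasks` dictionary are assumptions for illustration.

```python
def handle_execution_callback(payload: dict, tasks: dict) -> tuple:
    """Validate a callback body and apply it to task state.

    Returns an (http_status, response_body) pair. The schema is hypothetical.
    """
    task_id = payload.get("task_id")
    kind = payload.get("kind")  # assumed to be "plan" or "result"
    body = payload.get("body")

    if task_id not in tasks:
        return 404, {"error": "unknown task"}
    if kind not in ("plan", "result") or not isinstance(body, str) or not body:
        return 422, {"error": "invalid callback"}

    task = tasks[task_id]
    if task["status"] != "assigned":
        # The executor never mutates state directly; the backend refuses
        # writebacks that do not match the task's current state.
        return 409, {"error": f"task is {task['status']}, not assigned"}

    task["status"] = "pending_review" if kind == "plan" else "completed"
    task["artifact"] = body
    return 200, {"task_id": task_id, "status": task["status"]}
```

Keeping the handler a pure function over a payload and a task store makes the state rules testable without running an HTTP server.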

That shift matters because it changes the trigger. The old model centered on backend-side work discovery; the new model centers on assignment. Paperclip still supports a routine heartbeat path that wakes agents on a schedule, which is useful for routine tasks, but its biggest strength as an orchestration engine lies in its event-driven path. Agents no longer burn execution time to discover emptiness; runtime work starts after projection and assignment.

Paperclip replaced more than Notion. It replaced a weak operator surface and an expensive discovery loop. It gave the system a control plane where operators can inspect work, comment, revise, approve, and reassign without turning the backend into a session manager.

Phase 4: The Execution Contract Tension

After scheduling left the backend, the next problem was drift.

Without a formal execution contract, runtimes invent local customs. One executor writes to a temp file. Another replies in plain text. Another assumes approval. Another hits a different callback path. At that point, you do not have a portable execution layer. You have incompatible habits.

The fix was to define execution as a protocol.

Execution now has task modes. In direct mode, the executor writes a result with fixed markers and submits it against a task ID. In plan_first mode, the executor submits a plan, stops, and waits for review. A separate role handles review. Approval and revision signals drive explicit state transitions. OpenAI’s Building Agents supports that approach because it treats tools, handoffs, and guardrails as system elements.

That contract does two important jobs. First, it stabilizes behavior across runtimes. Swapping a provider does not force a rewrite of the orchestration model. Second, it limits responsibility. Executors do not poll for tasks. They do not mutate state directly. They do not infer review rules. They receive a brief, perform a bounded role, and write back through the protocol.
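A sketch of how such a contract can be checked in code, under stated assumptions: the marker strings, the status names, and the `ALLOWED_SUBMISSIONS` table below are hypothetical, since this account does not give the real markers or states. Only the two mode names, `direct` and `plan_first`, come from the text.

```python
import re

# Hypothetical fixed markers that wrap an executor's result.
RESULT_RE = re.compile(
    r"^=== RESULT BEGIN ===\n(.*?)\n=== RESULT END ===$",
    re.DOTALL | re.MULTILINE,
)

# Which submission kinds the backend accepts for a given (mode, status) pair.
ALLOWED_SUBMISSIONS = {
    ("direct", "routed"): {"result"},
    ("plan_first", "routed"): {"plan"},
    ("plan_first", "approved"): {"result"},
}


def extract_result(output: str) -> str:
    """Pull the result out of raw executor output, or fail loudly."""
    match = RESULT_RE.search(output)
    if match is None:
        raise ValueError("no result markers found")
    return match.group(1)


def accepts(mode: str, status: str, kind: str) -> bool:
    """True if a submission of this kind is valid in the current state."""
    return kind in ALLOWED_SUBMISSIONS.get((mode, status), set())
```

With a table like this, a `plan_first` executor that tries to submit a result before approval is rejected by the backend regardless of which runtime produced it.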

That phase turned runtime behavior into protocol. Protocols survive runtime swaps. Prompt habits do not.

Phase 5: The Capability Distribution Tension

Once execution behavior had a contract, capability drift became the next problem.

Multi-runtime systems sprawl fast. Teams copy skills into workspaces, duplicate commands across providers, install plugins in bundles, and patch them by hand. Two executors can belong to the same system on paper and still end up with different callback tools, bridge skills, or remediation commands. Once that happens, the contract fragments.

The important unit of design is not which provider ships which feature. The important unit is the contract that a given executor must satisfy on a given task. If one workspace can submit plans and another has a stale bridge skill, then execution semantics now depend on locality instead of system policy.

The system addressed that through runtime skill hydration. The system defines runtime-visible skills in each workspace as symlinks instead of copied directories. The effective project skillset is defined in one place as global_defaults + shared_integrations + project_defaults[project], resolved from source roots in priority order, then hydrated into .agents/skills inside the workspace. Local skill directories still exist, but the projected skillset has a central definition. bin/agents-stack runs bin/hydrate-runtime-skills before runtime services start, so the workspace begins with the intended capability surface.
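The hydration step can be approximated in a few lines. This is a sketch of the idea, not the contents of bin/hydrate-runtime-skills: the config dictionary shape and both function names are assumptions, as is the choice to drop stale symlinks and to leave real local directories untouched.

```python
import os
from pathlib import Path


def effective_skillset(config: dict, project: str) -> list:
    """global_defaults + shared_integrations + project_defaults[project]."""
    names = (
        list(config.get("global_defaults", []))
        + list(config.get("shared_integrations", []))
        + list(config.get("project_defaults", {}).get(project, []))
    )
    # De-duplicate while keeping first-occurrence order.
    return list(dict.fromkeys(names))


def hydrate(workspace: Path, skills: list, source_roots: list) -> dict:
    """Project skills into <workspace>/.agents/skills as symlinks."""
    target_dir = workspace / ".agents" / "skills"
    target_dir.mkdir(parents=True, exist_ok=True)

    # Assumption: symlinks left over from an earlier projection are removed,
    # so the workspace never keeps a skill the central definition dropped.
    for entry in list(target_dir.iterdir()):
        if entry.is_symlink():
            entry.unlink()

    linked = {}
    for name in skills:
        # Source roots are searched in priority order; the first hit wins.
        source = next(
            (root / name for root in source_roots if (root / name).is_dir()),
            None,
        )
        if source is None:
            raise FileNotFoundError(f"skill {name!r} not found in any source root")
        link = target_dir / name
        if link.exists():
            continue  # assumption: a real local skill directory is left in place
        os.symlink(source.resolve(), link, target_is_directory=True)
        linked[name] = source
    return linked
```

Symlinks keep a single copy of each skill on disk, so an update in a source root is visible in every hydrated workspace without a second copy step.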

That matches the rest of the system. State is central and projected outward. Policy is central and projected outward. Capabilities now follow the same rule.

That matters because commands such as /submit-result and /submit-plan are part of the backend writeback protocol. Bridge skills, remediation creation, and infrastructure commands shape execution semantics. Once those drift, system behavior drifts with them.

Capability management closed a gap that many agent stacks ignore. A backend can own state and still lose control if runtimes do not share the same operational surface.

Target End State

The target architecture rests on a small set of boundaries.

Backend agentic-os owns:

- task lifecycle and state transitions

- policy evaluation

- plan gating

- artifact storage and versioning

- audit events

- Paperclip projection and reconciliation

- approvals, notifications, and operator APIs

The control plane (Paperclip) owns:

- the issue surface

- assignment

- routine task scheduling

- agent wakeup

- session lifecycle

- operator comments and review actions

Chat surfaces (i.e., OpenClaw) remain the intake and final delivery surfaces. Notion is out of live orchestration and remains available only for non-live context reads when needed. New tasks get assigned to Paperclip. Plans and results get written back there. Operator actions flow into backend state through the reconciler.

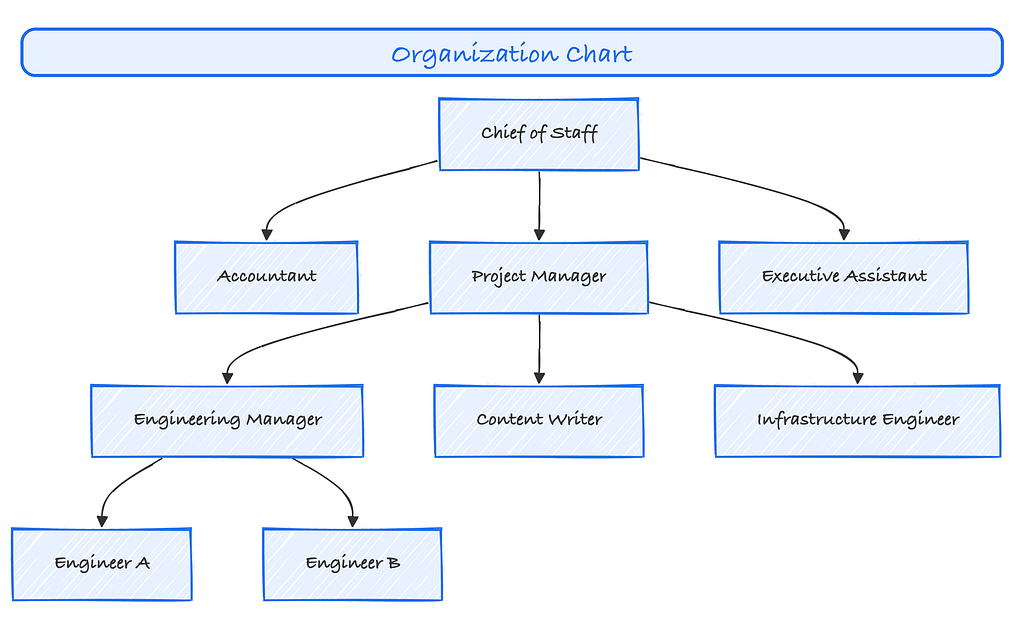

Capability coverage explicitly defines agent roles through hydrated skills, and the workflow is standardized across all agents and runtimes. In the current system, the reporting structure keeps task assignment and plan approval as capabilities of agents that sit as parent nodes in the org chart, while task execution belongs to agents that sit as child nodes. Separation is not a suggestion; it is policy enforced through a durable backend system.
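One way to express that parent/child separation as an enforceable check is sketched below. The org chart contents, the agent names, and both functions are hypothetical; the sketch assumes a plan may only be approved by the direct parent of the agent that executes it.

```python
# child -> parent; None marks the root. All agent names are hypothetical.
ORG_CHART = {"lead": None, "coder": "lead", "researcher": "lead"}


def can_approve_plan(approver: str, executor: str, org: dict) -> bool:
    """Only the executor's direct parent may approve its plan."""
    return approver in org and executor in org and org[executor] == approver


def can_execute(agent: str, org: dict) -> bool:
    """Only agents that sit under a parent node take execution work."""
    return org.get(agent) is not None
```

A backend that runs checks like these before accepting an approval or dispatching a task keeps the separation independent of what any agent's prompt says.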

Key Patterns

The pattern across all five phases stayed simple: put authority where the system can enforce it and audit it.

That produced four durable decisions:

- The backend owns task state and policy.

- The operator surface stays separate from the source of truth.

- An assignment-driven control plane should wake runtimes.

- Execution and capability surfaces need formal contracts.

The system is not a set of agents with a management layer wrapped around them. It is a backend-defined operating model with explicit state, explicit control surfaces, explicit execution contracts, and clear runtime boundaries.

Footnotes

- Anthropic. Building Effective AI Agents. https://www.anthropic.com/research/building-effective-agents

- OpenAI. Building agents. https://developers.openai.com/tracks/building-agents/

Building a Multi-Agent OS: Key Design Decisions That Matter was originally published in Towards AI on Medium.