Cursor’s $60B deal with Xai today nearly took headline story, but given that it is a purely financial story (some plausible analysis here on motivations), we are giving title story to OpenAI’s big launch today of GPT-Image-2.

After weeks of speculation as a stealth model on Arena (confirmed), GPT-Image-2 is live on API and ChatGPT and looks to leapfrog Nano Banana 2 in the Imagegen space, with both Thinking and nonthinking variants. This comes after a rumored “focus” sprint that involved the shutdown and departure of the Sora team, so it is both heartening and somewhat surprising that Imagegen is still a priority for OpenAI. Thankfully, the model is very, very, very good. By nature, you should check out the 8 videos that the team has prepared, as well as the blogpost and the livestream and the tweet/blogpost.

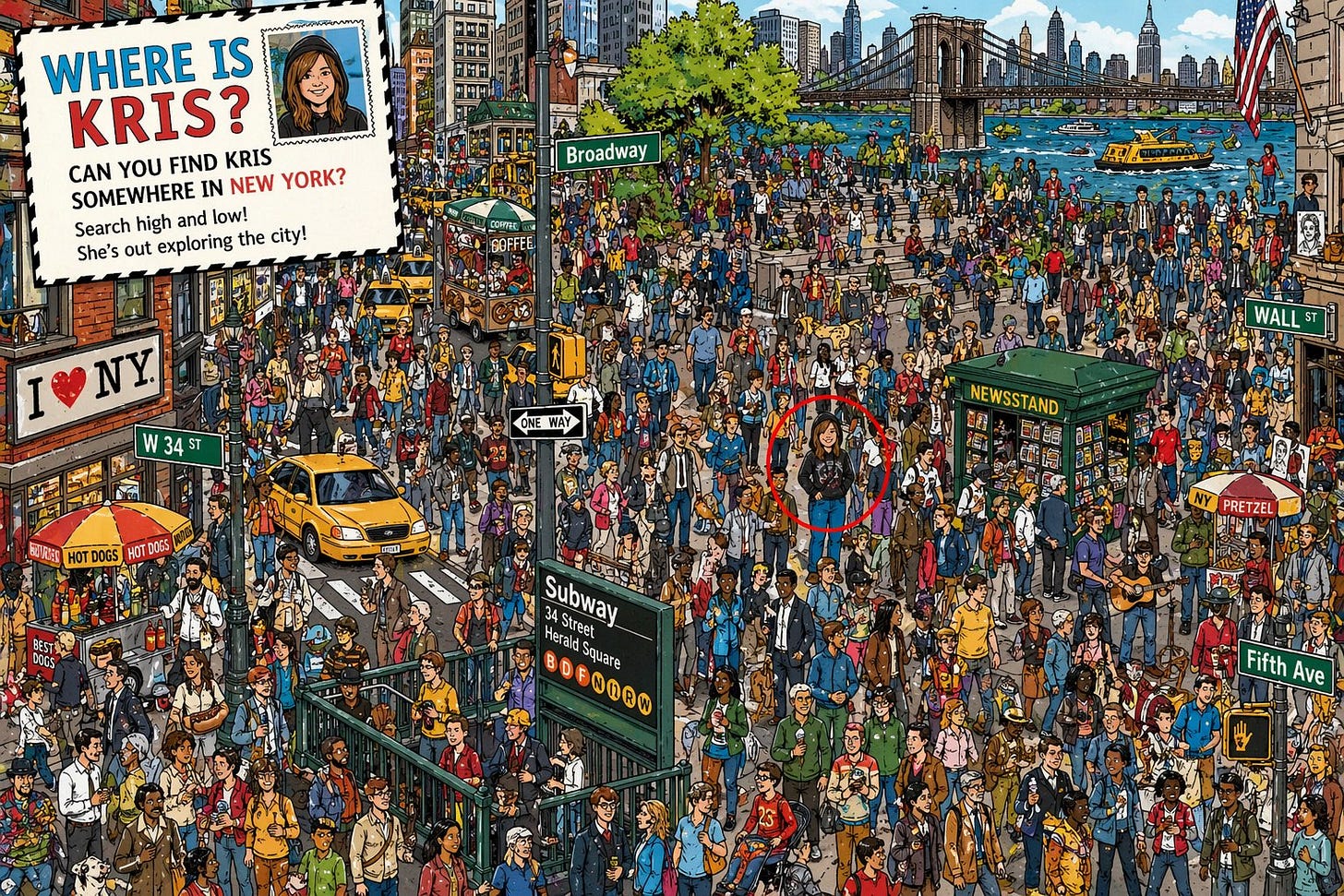

If we were to pick a single most impressive demonstration, it’d be the level of text detail and consistency in the matrix example.

AI News for 4/20/2026-4/21/2026. We checked 12 subreddits, 544 Twitters and no further Discords. AINews’ website lets you search all past issues. As a reminder, AINews is now a section of Latent Space. You can opt in/out of email frequencies!

AI Twitter Recap

OpenAI’s GPT-Image-2 Launch and the Return of Image Generation as a Serious Product Surface

GPT-Image-2 is the day’s clearest product launch: OpenAI rolled out ChatGPT Images 2.0 and the underlying

gpt-image-2model across ChatGPT, Codex, and API, emphasizing stronger text rendering, layout fidelity, editing, multilingual support, and “thinking” for images. OpenAI says the model can search the web when paired with a thinking model, generate multiple candidates, self-check outputs, and produce artifacts like slides, infographics, diagrams, UI mockups, and QR codes (launch thread, thinking/image capabilities, availability, API post). The model is already being integrated by downstream tools including Figma, Canva, Firefly, fal, and Hermes Agent.Benchmarks suggest a large jump, especially on practical image tasks: Arena reports #1 across all Image Arena leaderboards for GPT-Image-2, including 1512 on text-to-image, 1513 on single-image edit, and 1464 on multi-image edit, with a striking +242 Elo lead on text-to-image over the next model (Arena summary, category breakdown, trend chart). Independent reactions converged on the same theme: this is not merely prettier art, but a more usable model for UI, mockups, documentation, productivity visuals, and reference-driven design loops (@gdb, @nickaturley, @mark_k, @petergostev). The most interesting systems implication is that image generation is becoming a front-end for coding agents: generate a UI spec as an image, then have Codex or another code agent implement against that visual reference.

Agent Infrastructure: Hugging Face’s ml-intern, Hermes Expansion, and the Rise of Research/Runtime Harnesses

Hugging Face’s

ml-internis the strongest open agent-in-the-loop release in the set: HF introducedml-intern, an open-source agent that automates the post-training research loop: reading papers, following citation graphs, collecting/reformatting datasets, launching training jobs, evaluating runs, and iterating on failures (announcement, supporting post from @lewtun, Clement’s framing). Reported examples are notable because they are end-to-end loops, not just coding demos: GPQA scientific reasoning improved 10% → 32% in under 10h on Qwen3-1.7B, a healthcare setup reportedly beat Codex on HealthBench by 60%, and a math setup wrote a full GRPO script and recovered from reward collapse via ablations. Community tests quickly showed it can autonomously fine-tune and publish artifacts back to the Hub (example run on SAM finetuning).Hermes is evolving toward a richer local/open agent platform: Several tweets point to Hermes’ momentum as a practical open agent stack: a beginner guide generated by a Hermes agent itself, native support in Skillkit, a new macOS GUI called Scarf, and expanding use in local workflows. The most technically meaningful update is from @Teknium: Hermes subagents now support both greater spawn width and recursive spawn depth, enabling deeper hierarchical decomposition. This aligns with the broader shift from “single chat loop” agents to multi-process orchestrated systems with memory, tools, permissions, and reusable skills.

Harnesses are becoming first-class engineering artifacts: A recurring theme across tweets is that the useful part of agent systems is increasingly the runtime/harness, not the base model alone. DSPy 3.2 shipped RLM improvements plus optimizer chaining and LiteLLM decoupling (release); Isaac Flath argued RLM makes notebooks relevant again as a REPL-native trace/eval interface (tweet); LangChain added custom auth for deepagents deploy (update); and a paper-summary thread on Claude Code emphasized that most of the system is harness logic rather than raw “intelligence” (summary).

Kimi K2.6, KDA Kernels, and Open-Weight Coding Models Getting More Systems-Credible

Moonshot pushed both model capability and kernel infrastructure: The flagship Kimi thread claims K2.6 completed long-horizon coding tasks with sustained autonomy: one run downloaded and optimized Qwen3.5-0.8B inference in Zig over 4,000+ tool calls and 12+ hours, improving throughput from ~15 tok/s to ~193 tok/s, ending ~20% faster than LM Studio (thread). Another run reportedly reworked an exchange engine over 1,000+ tool calls and 4,000+ LOC changes, achieving 185% medium-throughput and 133% peak-throughput gains (second thread). These are still vendor demos, but they are much closer to systems work than benchmark screenshots.

Kimi also open-sourced performance-critical infra: Moonshot released FlashKDA, a CUTLASS-based implementation of Kimi Delta Attention kernels, claiming 1.72×–2.22× prefill speedup over the flash-linear-attention baseline on H20 and compatibility as a drop-in backend for flash-linear-attention (release). External follow-up reported K2.6 + DFlash at 508 tok/s on 8x MI300X, a 5.6× throughput improvement over a baseline autoregressive setup (HotAisle). Together with ongoing discussion of DSA/MLA/KDA variants, the key signal is that Chinese labs are not just shipping weights; they are increasingly publishing attention/kernel-level optimizations with real deployment impact.

Open-weight coding quality is improving, but there’s still disagreement on parity: Some users now treat Kimi K2.6 as the best open-source/open-weight coding/agentic model (@scaling01, Windsurf availability), while others pushed back that frontier proprietary models still hold large leads on WeirdML, long-horizon tasks, and reliability (@scaling01 critique, gap on WeirdML). The substantive takeaway is less “open has caught up” than that open-weight models are now credible enough that infra, harness, and deployment quality determine a lot of real-world value.

Deep Research Systems: Google Extends the Research-Agent Frontier

Google upgraded Deep Research into a more configurable API primitive: Google/DeepMind launched updated Deep Research and Deep Research Max via the Gemini API, powered by Gemini 3.1 Pro, with collaborative planning, arbitrary MCP support, multimodal inputs (PDF/CSV/image/audio/video), code execution, native chart/infographic generation, and real-time progress streaming (Google thread, feature details, Sundar post, developer API post).

The benchmark numbers are strong enough to matter commercially: Google highlighted 93.3% on DeepSearchQA, 85.9% on BrowseComp, and 54.6% on HLE for the Max variant (Sundar, Phil Schmid summary). More important than the raw scores is the workflow design: Google is clearly productizing “overnight due diligence / analyst report generation” and making MCP-backed internal data access a standard part of research agents. This also shows a widening split between simple browse agents and full-stack research agents that plan, search, execute code, generate visuals, and ground over proprietary corpora.

Retrieval, Data, and Evaluation: Open Releases with Real Engineering Value

Retrieval saw a meaningful open release from LightOn: LightOn released LateOn and DenseOn, both 149M-parameter retrieval models under Apache 2.0, reporting 57.22 NDCG@10 on BEIR for LateOn (multi-vector/ColBERT style) and 56.20 for DenseOn (dense single-vector), beating models up to 4× larger (model release, overview). They also published a consolidated dataset release with 1.4B query-document pairs and a refreshed web dataset built on FineWeb-Edu (dataset post).

vLLM shipped a practical deployment knowledge layer: The redesign of recipes.vllm.ai is more useful than it sounds. It maps model pages to runnable deployment recipes, includes an interactive command builder, supports NVIDIA and AMD, covers tensor/expert/data parallel variants, and exposes a JSON API for agents. This is exactly the kind of infra documentation layer that reduces operator friction for serving new open models.

Benchmarks are increasingly probing agent blind spots, not just task outputs: Notable examples include ParseBench for chart understanding inside real enterprise documents (LlamaIndex, Jerry Liu details) and a new result showing agents often ignore explicit environment clues, even when the solution is literally exposed in a file or endpoint (paper thread). Google Research’s ReasoningBank also fits this theme, framing memory as learning from both successful and failed trajectories (tweet).

Top tweets (by engagement)

OpenAI’s image launch: “Introducing ChatGPT Images 2.0” was the dominant technical launch tweet, backed by a deep feature thread and rapid downstream integrations.

HF

ml-intern: @akseljoonas had the standout agent/research-loop release of the day.Gemma local concurrency demo: @googlegemma showed Gemma 4 26B A4B handling 10+ concurrent requests at ~18 tok/s/request on an M4 Max, a useful datapoint for local-serving economics.

Deep Research Max: @sundarpichai and @Google pushed a materially stronger research-agent API surface.

Kimi kernel release: FlashKDA was one of the more substantial open infra drops in the model-serving stack.

Open-source policy warning: @ClementDelangue warned of renewed lobbying to restrict open-source AI, one of the few policy tweets with direct implications for builders.

AI Reddit Recap

/r/LocalLlama + /r/localLLM Recap

1. Kimi K2.6 Model Launch and Benchmarks

Claude Code removed from Claude Pro plan - better time than ever to switch to Local Models. (Activity: 349): The image provides a comparison chart of different subscription plans for a service called “Claude,” highlighting the removal of the “Claude Code” feature from the Pro plan. This change is significant as it suggests a shift in the service’s offerings, potentially prompting users to consider alternative local models like Kimi K2.6 or Qwen 3.6 35B A3B. The post discusses the cost-effectiveness of switching to these local models, emphasizing the value of the OpenCode Go coding plan, which offers more tokens for a lower price compared to the Claude Pro plan. Commenters express disbelief and frustration over the removal of the “Claude Code” feature from the Pro plan, with some suggesting it might be a mistake and others urging the company to address the issue on their product page.

korino11 raises a cost-benefit analysis comparing the $20 open code plan to a $19 plan on Kimi, suggesting that the latter might offer better value. This implies a need for users to evaluate the cost-effectiveness of different AI model subscriptions, especially when features are removed or altered.

Apart_Ebb_9867 points out a potential issue with the information on the official Claude product page, suggesting that the page might need updating or correction. This highlights the importance of accurate and up-to-date documentation for users relying on specific features.

The-Communist-Cat mentions the lack of online references to the removal of Claude Code from the Pro plan, indicating that there might be misinformation or a delay in communication from the company. This underscores the need for clear and timely updates from service providers to avoid confusion among users.

Kimi K2.6 is a legit Opus 4.7 replacement (Activity: 1632): Kimi K2.6 is being positioned as a viable replacement for Opus 4.7, capable of performing

85%of Opus’s tasks with reasonable quality. While it doesn’t surpass Opus 4.7 in any specific area, Kimi K2.6 offers additional capabilities such as vision and effective browser use, making it suitable for long-term tasks. Despite its large size, it suggests that frontier LLMs like Opus 4.7 may not be offering significant new advancements. The model’s local deployment is highlighted as a benefit, avoiding issues like usage limits. Commenters express skepticism about the rapid testing and recommendation process, noting that thorough testing typically takes longer. There’s also a discussion on the affordability of local models, with some users expressing frustration over high costs.InterstellarReddit highlights the rapid testing and deployment process of Kimi K2.6, noting that the original poster managed to test and recommend the model to customers within just two hours. This is contrasted with their own company’s process, which involves a week-long evaluation by four engineers before customer testing. This underscores the efficiency and agility possible with smaller teams or individual developers in AI model deployment.

Technical-Earth-3254 suggests that if Kimi K2.6 achieves 85% of Opus’s performance, it could potentially serve as a full replacement for Sonnet models. This implies a significant performance benchmark where Kimi K2.6 is seen as a viable alternative to existing models, offering similar capabilities at potentially lower costs or resource requirements.

Blablabene discusses the impact of local AI models like Kimi K2.6 on the market, emphasizing that they exert pressure on proprietary models to reduce costs. The comment also notes the current high expense of running models locally, but anticipates increased accessibility in the future as technology advances and costs decrease.

Opus 4.7 Max subscriber. Switching to Kimi 2.6 (Activity: 386): The post discusses a transition from Opus 4.7 Max to Kimi 2.6 due to performance and cost issues. The user notes that Opus 4.7 has become ‘lazy’ and expensive, prompting a switch to Kimi 2.6, which is described as fast and pleasurable despite its smaller context size. The user highlights that Kimi 2.6 manages its smaller context effectively, suggesting improvements in handling tool outputs. A pull request was submitted to improve Kimi’s integration with Forge (GitHub PR). Comments suggest skepticism about the sustainability of investments in proprietary models like those from Anthropic and OpenAI, as open models like Kimi are becoming competitive. There’s also a debate on the potential of Chinese models, with Kimi being a 1T model compared to Opus’s 5T, indicating a shift in competitive dynamics.

Worried-Squirrel2023 highlights a critical issue with Opus 4.7, noting its tendency to ‘stop mid-task or wrap things up before they’re actually done,’ which they describe as ‘laziness.’ This suggests a problem with task completion reliability, which can be a significant drawback in real-world applications. They also mention that Kimi’s smaller context window is less problematic compared to Opus’s commitment issues, and they are particularly interested in the ‘tool calling reliability’ where they see a notable difference between Kimi and Opus.

sb5550 points out the stark difference in model size between Kimi and Opus, with Kimi being a ‘1T model’ and Opus a ‘5T model.’ This comparison underscores the efficiency and potential of smaller models like Kimi, especially when considering that Chinese models might not be lagging behind but could potentially be leading in AI development. This raises questions about the scalability and performance efficiency of smaller models in comparison to larger ones.

Ok-Contest-5856 discusses the financial implications for private equity investments in proprietary models like those from Anthropic and OpenAI, suggesting that open models like Kimi, which are ‘neck and neck and way cheaper,’ could pose a significant threat. They speculate that open models might even surpass proprietary ones in the future, indicating a shift in the competitive landscape of AI development.

Kimi K2.6 Released (huggingface) (Activity: 1386): Kimi K2.6, released by Hugging Face, is a cutting-edge open-source multimodal AI model optimized for long-horizon coding and autonomous task orchestration. It employs a Mixture-of-Experts architecture with

1 trillion parameters, enabling it to transform prompts into production-ready interfaces and execute complex coding tasks across multiple languages. The model supports up to300 sub-agentsfor parallel task execution and shows superior performance in benchmarks, particularly in proactive orchestration and deployment on platforms like vLLM and SGLang. More details can be found in the original article. Commenters noted the impressive scale of1.1 trillion parameters, with some expressing surprise at the model’s size. There is also mention of Cursor’s Composer 2.1 model beginning its training, indicating ongoing advancements in the field.ResidentPositive4122 highlights that the Kimi K2.6 release includes both the code repository and model weights under a Modified MIT License. This license maintains the core ‘do whatever you want’ ethos of MIT but requires attribution if used by large corporations, which is a significant point for developers considering integration or modification of the model.

LagOps91 expresses interest in the potential real-world performance of the Kimi K2.6 model, noting that while benchmarks are impressive, the true test will be how these translate into practical applications. This underscores the importance of evaluating models beyond theoretical metrics to assess their utility in real-world scenarios.

Kimi K2.6 (Activity: 570): The image presents a benchmark comparison of AI models, highlighting Kimi K2.6’s performance across various tasks against other models like GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro. Kimi K2.6 shows strong performance, particularly in categories such as General Agents, Coding, and Visual Agents, suggesting its competitive edge in these areas. The chart underscores Kimi K2.6’s capability, especially in tasks like “Humanity’s Last Exam” and “DeepSearchQA,” where it scores highly, indicating its potential as a robust AI model. Commenters note the significance of Kimi K2.6’s performance, especially in coding, and express surprise at its competitiveness with closed-source models. There is also a mention of Kimi’s vendor verifier, which standardizes third-party service evaluations, highlighting its importance in the AI ecosystem.

The Kimi K2.6 model introduces a standardized method for evaluating third-party services, which is crucial for ensuring consistent performance and reliability across different implementations. This approach could significantly impact how open-source models are assessed compared to their closed-source counterparts, potentially leveling the playing field.

There is a notable anticipation that Kimi K2.6 might outperform Opus, a competing model. Despite its large size, the community is hopeful that Kimi K2.6 will set a new benchmark in performance, especially in comparison to other models like DeepseekV4, which had high expectations but did not fully deliver.

The release of Kimi K2.6 has raised expectations for future models, such as GLM-5.1, by setting a high standard in the open-source community. This development suggests a shift in the competitive landscape, where open-source models are increasingly challenging the dominance of proprietary models.

2. Gemma 4 Model Capabilities and Benchmarks

Gemma 4 Vision (Activity: 319): The post discusses optimizing the vision capabilities of the Gemma 4 model by adjusting its vision budget parameters. The default settings for

--image-min-tokensand--image-max-tokensare40and280respectively, which are considered insufficient for detailed OCR tasks. The author suggests increasing these to560and2240to improve performance, noting that this configuration allows Gemma 4 to outperform other models like Qwen 3.5, Qwen 3.6, and GLM OCR in vision tasks. This adjustment requires a significant increase in VRAM usage, from63 GBto77 GBforq8_0at max context. The post also mentions a limitation with Ollama’s implementation, which may not support these changes due to an unresolved issue. A commenter inquires about the minimum token settings for smaller models, questioning whether the40token minimum applies to larger models only. Another user requests detailed configuration options for llamacpp and vllm, indicating a need for more comprehensive setup guidance.Temporary-Mix8022 discusses using the vision encoder from smaller models with around

150 million parameters, mentioning a configuration of70 tokensas the minimum. They inquire if40 tokensis the minimum for larger models with500 million parameters, suggesting a difference in token requirements based on model size.stddealer shares their experience using

--image-min-tokens 1024and--image-max-tokens 1536settings, which they adopted from Qwen3.5. This configuration led to confusion about the perceived underperformance of Gemma4’s vision capabilities, indicating that token settings significantly impact model performance.Yukki-elric suggests setting both

--image-min-tokensand--image-max-tokensto1120for optimal image quality processing. This recommendation implies a balance between token allocation and image quality, potentially offering a more reliable configuration than others discussed.

Gemma-4-E2B’s safety filters make it unusable for emergencies (Activity: 985): Google’s Gemma-4-E2B model, intended as a local, offline resource for emergency preparedness, is criticized for its overly aggressive safety filters, rendering it ineffective in emergencies. The model issues ‘hard refusals’ on critical survival topics such as emergency airway procedures, water purification, mechanical maintenance, and food processing, under the guise of safety. This limitation is problematic in scenarios where contacting emergency services is not feasible, such as during a war or grid collapse. Commenters argue that the model’s refusal is justified due to its limited world knowledge, suggesting that relying on it in emergencies could be dangerous. Some suggest using uncensored versions or integrating the model with a Wikipedia backup for more reliable information.

Klutzy-Snow8016 highlights the limitations of the Gemma-4-E2B model, emphasizing its lack of comprehensive world knowledge and the potential dangers of relying on it in emergencies. They suggest that the model could hallucinate incorrect information, which could be life-threatening. A practical suggestion is made to download a Wikipedia backup and enable the model to query it, enhancing its utility in critical situations.

iliark points out that in some cases, the Gemma-4-E2B model provides correct advice, such as not removing shrapnel from a wound, which aligns with medical guidelines. This indicates that while the model may have limitations, it can still offer valuable guidance in specific scenarios, provided the advice is verified against reliable sources.

Illustrious_Yam9237 argues against using LLMs like Gemma-4-E2B for emergency advice, suggesting that storing relevant PDFs would be a more reliable and efficient solution. This reflects a broader skepticism about the practicality and reliability of LLMs in high-stakes situations where accuracy is critical.

Gemma 4 26B-A4B GGUF Benchmarks (Activity: 421): The image is a performance benchmark chart for the Gemma 4 26B-A4B GGUF models, focusing on Mean KL Divergence across different providers. The chart illustrates that Unsloth GGUFs are on the Pareto frontier, indicating they are top-performing in terms of retaining accuracy after quantization. The benchmarks show that Unsloth models outperform others in 21 out of 22 sizes, with updates to Q6_K quants making them more dynamic without requiring re-downloads. Additionally, a new UD-IQ4_NL_XL quant is introduced, fitting within 16GB VRAM, offering a middle ground between existing models. The image supports the text’s emphasis on Unsloth’s effectiveness in quantized model performance. A comment suggests including inference speed benchmarks, noting the challenge of varying hardware, while another highlights the efficiency of UD-IQ2_XXS compared to larger models from ggml-org.

qfox337 raises a pertinent question about the inclusion of inference speed benchmarks, noting the potential variability depending on hardware. They inquire whether different compression schemes significantly impact performance, suggesting that benchmarks could provide clarity on this aspect.

Far-Low-4705 compares quantization methods, highlighting that

UD-IQ2_XXSis more efficient at9Gbcompared toQ4_K_Mfrom ggml-org at16Gb. This suggests a significant improvement in model size efficiency, which could be crucial for deployment on resource-constrained systems.-Ellary- discusses the performance of different quantization methods, noting that while Unsloth Qs are often highlighted in benchmarks, their own tests show that Bartowski Qs perform similarly and offer greater stability. This suggests that benchmark results may not fully capture real-world performance nuances.

3. Qwen 3.6 Model Updates and Comparisons

Every time a new model comes out, the old one is obsolete of course (Activity: 1164): The image is a meme illustrating the rapid obsolescence of AI models, specifically comparing “Gemma4” and “Qwen3.6.” The meme humorously depicts the tendency of users to abandon older models in favor of newer ones, even if the older models still have valuable applications. The comments highlight that while “Qwen3.6” may be preferred for certain tasks like coding, “Gemma4” is still favored for creative writing and translation, indicating that different models have strengths in different areas. Commenters express a preference for “Gemma4” in creative writing and translation tasks, while “Qwen3.6” is noted for its coding capabilities. There is also a concern about the reliability and continued support of newer models like “Qwen3.6.”

Gemma 4 is noted for its superior performance in creative writing tasks, with users highlighting its ability to handle such tasks without contest. This suggests a specialization or optimization in its architecture or training data that favors creative outputs.

Qwen is criticized for its performance in translation tasks, with users noting that it falls short compared to other models. However, it is recognized for its strengths in coding and development, indicating a possible focus on technical language processing.

A technical issue with Qwen is highlighted regarding its instruction-following capabilities. Users report that after processing a few images, Qwen’s ability to follow instructions degrades significantly, leading to incorrect tool calls and failure to verify results. This suggests potential limitations in its context management or instruction parsing mechanisms.

Layman’s comparison on Qwen3.6 35b-a3b and Gemma4 26b-a4b-it (Activity: 362): The post compares two AI models, Qwen3.6-35B-A3B and Gemma4 26B-A4B-it, running on a

16GB VRAMvideo card using Windows LM Studio with recommended inference settings. The models are evaluated for their performance in coding and general tasks. Qwen3.6 is described as an ‘A+ student’ with high energy, while Gemma4 is a ‘solid B student’ that performs reliably. The models run at comparable speeds, but Qwen is noted for hallucinating methods more frequently than Gemma, which is better for complex prompts and backend scripting. The post also highlights the importance of using the correct system prompts to unlock Gemma’s potential, as demonstrated by a user comment. Commenters note that Qwen3.6 excels in programming and tool calling, while Gemma4 is preferred for conversation, roleplay, and translation. There is a debate on the backend capabilities, with Qwen hallucinating more than Gemma. Some users suggest that custom fine-tuning or system prompts can significantly enhance Gemma’s performance, particularly in frontend tasks.Sadman782 highlights that while Gemma4 can be improved with custom fine-tuning or system prompts to enhance its frontend capabilities, Qwen3.6 often hallucinates methods, especially in backend tasks. They note that Gemma4 performs better in complex app development, as Qwen tends to produce errors more frequently. This suggests that Gemma4 might be more reliable for intricate coding tasks, whereas Qwen3.6 might struggle with backend consistency.

Kahvana provides a comparative analysis, noting that Qwen3.5/3.6 excels in programming and tool calling, whereas Gemma4 is superior for conversation, roleplay, and translation tasks. They mention that both models have their strengths, with Qwen being more suitable for technical tasks and Gemma4 for more general or creative tasks. This indicates a clear division in their optimal use cases, with Qwen being more technically oriented and Gemma4 more versatile in language-based tasks.

BigYoSpeck discusses the aesthetic capabilities of Qwen models, noting their ability to create visually appealing designs with ‘flair.’ However, they caution that this does not necessarily translate to better problem-solving or instruction-following capabilities. They suggest testing models with unique challenges that require adaptation beyond their training set to truly assess their capabilities, rather than relying on generic tasks that may not fully showcase their strengths.

Qwen 3.6 Max Preview just went live on the Qwen Chat website. It currently has the highest AA-Intelligence Index score among Chinese models (52) (Will it be open source?) (Activity: 440): Qwen 3.6 Max has been released on the Qwen Chat website and currently holds the highest AA-Intelligence Index score of

52among Chinese models, as reported by AiBattle. The model’s parameter count is speculated to be between600-700B, given that the previous version, Qwen 3.6, had397Bparameters. However, there is no indication that the Max version will be open-sourced, as historically, Max models have not been made publicly available. Commenters express skepticism about the open-sourcing of Max models, noting that these models are typically not accessible to the public. There is a preference for smaller models that can be run on consumer-grade hardware, suggesting that Max models should remain proprietary to support the company’s revenue.A user speculates on the parameter count of the Qwen 3.6 Max model, suggesting it could be between

600-700Bparameters, given that the previous version, Qwen 3.6, had397Bparameters. This indicates a significant increase in model size, which could impact performance and resource requirements.Another user expresses a preference for smaller or medium-sized models that can run on consumer-grade hardware, highlighting a common trade-off in AI development between model size and accessibility. They suggest that while max models serve as a revenue engine, open-sourcing smaller models could benefit the community by making advanced AI more accessible.

A comment notes that the largest model likely to be open-sourced is the

122Bmodel, as the company has stopped open-sourcing their larger397Bmodels. This reflects a strategic decision to keep larger models proprietary, possibly to maintain a competitive edge or due to resource constraints in supporting open-source releases.