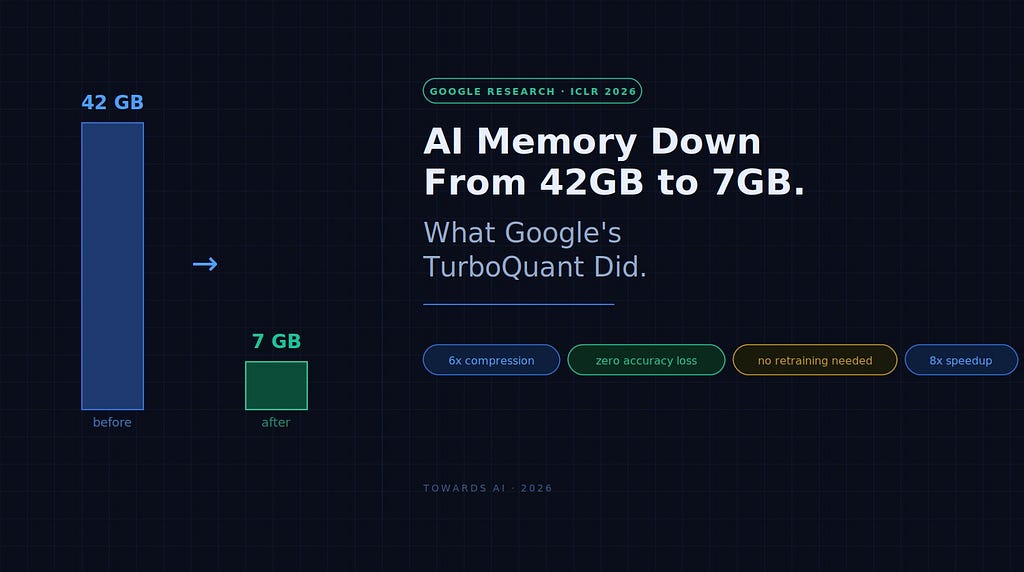

Google’s TurboQuant compresses LLM KV cache memory by 6x with no measurable accuracy loss. Here’s what that actually means for your infrastructure bill, and what to do about it today.

If you’ve ever tried to self-host a large language model, you’ve run into the wall.

Not the model weights — those are manageable. A 70B model in 16-bit precision takes around 140GB of VRAM. Heavy, but knowable. The thing that quietly destroys your GPU budget is something most developers don’t think about until it’s already the problem: the KV cache.

And in late March 2026, Google published a paper that attacks that problem directly. The algorithm is called TurboQuant. It was presented at ICLR 2026 in Rio de Janeiro in late April. The numbers are real, the math checks out, and the community has already been running implementations for weeks.

Here’s what it means — in plain terms, for people who pay infrastructure bills.

First, What the KV Cache Actually Is

When you send a message to an LLM, the model doesn’t just process your latest sentence. It holds onto the entire conversation — every token, every turn — so it doesn’t have to recompute it from scratch with each new response. That running record lives in something called the key-value cache, or KV cache.

Think of it as the model’s working memory for the session. Every new token generated adds to it. Every turn of conversation grows it. And the longer the context window — the more history the model can hold — the bigger that cache gets.

This is where it starts hurting.

A single request to a Llama 3 70B model at 128K context length consumes approximately 42GB of GPU memory just for the KV cache. That’s before you’ve loaded the model weights. On an 80GB H100 — which costs upwards of $2/hour to rent — you’re left with almost nothing for concurrent users. In practice, you’re often serving one user at a time on a GPU that should be handling many.
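The 42GB figure can be reproduced with back-of-the-envelope arithmetic. The sketch below assumes the published Llama 3 70B shape (80 layers, 8 key-value heads under grouped-query attention, head dimension 128) and 2 bytes per cached value. None of those numbers appear in the text above, so treat them as assumptions.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value=2):
    """Bytes needed to cache keys and values for one sequence."""
    # Factor of 2: one key vector and one value vector per head, per layer, per token.
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens

# Assumed Llama 3 70B shape (not stated in the article).
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128
CONTEXT_TOKENS = 128 * 1024

per_token = kv_cache_bytes(1, LAYERS, KV_HEADS, HEAD_DIM)
total = kv_cache_bytes(CONTEXT_TOKENS, LAYERS, KV_HEADS, HEAD_DIM)

print(f"per token: {per_token / 1024:.0f} KiB")                       # per token: 320 KiB
print(f"128K context: {total / 1e9:.1f} GB ({total / 2**30:.0f} GiB)")  # 128K context: 42.9 GB (40 GiB)
```

Under these assumptions, every token in the context costs about 320 KiB, and the cost grows linearly with context length and with the number of concurrent sequences.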

At short context lengths, model weights dominate memory. At long context lengths — which is where every interesting AI application is heading — the KV cache becomes the primary cost driver. The wall isn’t the model. It’s the memory it needs to think.

What TurboQuant Does

KV cache entries are typically stored as 16-bit floating-point values (FP16 or BF16). Fewer bits per value means less memory, but also less precision. Traditional compression methods for the KV cache try to reduce the bit count, but when you squeeze numbers too hard, you lose information, and the model starts making mistakes.

The reason this is hard is a distribution problem. In a model like LLaMA-2-7B, the top 1% of KV cache values can have magnitudes 10 to 100 times larger than the median value. That extreme spread makes standard compression methods break down: they either distort the outliers or crush the precision of everything else.
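A toy illustration of the "crush the precision of everything else" failure mode, using a plain uniform quantizer on made-up numbers. The values and the helper name are invented for this sketch and do not come from any real model.

```python
import numpy as np

def uniform_quantize(values, bits):
    """Round-trip values through a uniform grid spanning [min, max]."""
    levels = 2 ** bits - 1
    lo, hi = values.min(), values.max()
    step = (hi - lo) / levels
    return np.round((values - lo) / step) * step + lo

# Five ordinary values plus one outlier roughly 100x larger.
values = np.array([0.10, -0.20, 0.30, -0.15, 0.25, 20.0])
restored = uniform_quantize(values, bits=3)

print("original:", values)
print("restored:", restored)
```

Because the outlier stretches the grid, each step is almost 3 units wide, so all five small values collapse onto the same grid point and their differences are lost. Clipping the range instead would keep the small values distinct but distort the outlier.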

TurboQuant solves this with a two-step approach. First, it rotates the data — multiplying the input vectors by a random orthogonal matrix that redistributes the values more evenly without changing the mathematical relationships between them. After rotation, each coordinate follows a well-behaved statistical distribution, which makes compression far more tractable. Then it applies a scalar quantizer tuned for that distribution.
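The rotate-then-quantize idea can be sketched in a few lines of NumPy. This is a simplified illustration, not Google's implementation: it assumes a dense random orthogonal matrix built from a QR decomposition, swaps in a plain uniform per-vector quantizer for the distribution-tuned quantizer described above, and runs on synthetic keys with two artificial outlier channels.

```python
import numpy as np

def random_orthogonal(dim, rng):
    """Random orthogonal matrix from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.standard_normal((dim, dim)))
    return q * np.sign(np.diag(r))  # sign fix keeps the draw uniformly distributed

def quantize_roundtrip(x, bits):
    """Uniform per-vector quantizer (a stand-in, not the tuned quantizer)."""
    levels = 2 ** bits - 1
    lo = x.min(axis=-1, keepdims=True)
    hi = x.max(axis=-1, keepdims=True)
    step = (hi - lo) / levels
    return np.round((x - lo) / step) * step + lo

rng = np.random.default_rng(0)
dim = 128
keys = rng.standard_normal((256, dim))
keys[:, :2] *= 50.0  # two synthetic outlier channels
query = rng.standard_normal(dim)
rotation = random_orthogonal(dim, rng)

# Rotating keys and queries by the same matrix leaves dot products unchanged.
scores = keys @ query
rotated_scores = (keys @ rotation) @ (query @ rotation)

# Compare 3-bit round-trip error with and without the rotation.
direct = quantize_roundtrip(keys, bits=3)
via_rotation = quantize_roundtrip(keys @ rotation, bits=3) @ rotation.T
mse_direct = np.mean((keys - direct) ** 2)
mse_rotated = np.mean((keys - via_rotation) ** 2)
print(f"MSE direct: {mse_direct:.3f}  MSE with rotation: {mse_rotated:.3f}")
```

The rotation spreads the energy of the outlier channels across all coordinates, so the quantization grid no longer has to stretch to cover a few extreme values. On this synthetic data the rotated path reconstructs the keys with noticeably lower error at the same bit width.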

The result: the KV cache compressed to 3 bits per value, down from 16. That’s the headline 6x reduction in memory (by raw bit count, 16/3 is about 5.3x). And because the rotation preserves the geometric relationships the model actually uses to reason, there’s no measurable accuracy loss on the reported benchmarks.

On NVIDIA H100 GPUs at 4-bit compression, attention computation runs up to 8x faster compared to unquantized 32-bit keys. On standard benchmarks — LongBench covering question answering, code generation, and summarization; Needle-in-a-Haystack for long-context retrieval up to 104K tokens — TurboQuant matched full 16-bit precision performance across Gemma, Mistral, and Llama models. No retraining. No fine-tuning. No calibration data required. It plugs in at inference time.

That last part matters. Most optimization techniques require touching the model — retraining it, fine-tuning it, quantizing the weights. TurboQuant doesn’t. It works on any transformer-based model you already have.

What This Means for Your Infrastructure Bill

Let’s make this concrete.

That 42GB KV cache for a 70B model at 128K context? With TurboQuant, it drops to roughly 7GB. On the same 80GB H100, you go from serving one user to serving multiple concurrent sessions — on the same hardware, at the same cost.
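The arithmetic behind that paragraph, as a minimal sketch. It counts only KV cache memory and ignores model weights, activations, and any quantization metadata, as the paragraph does. The helper names are illustrative and do not come from any library.

```python
def compressed_cache_gb(fp16_cache_gb, bits_per_value):
    """Scale a 16-bit cache size down to a lower bit width."""
    return fp16_cache_gb * bits_per_value / 16

def sessions_that_fit(memory_budget_gb, cache_gb_per_session):
    """How many full-context sessions fit in a given cache budget."""
    return int(memory_budget_gb // cache_gb_per_session)

FP16_CACHE_GB = 42.0   # figure quoted in the article
BUDGET_GB = 80.0       # one H100, counting cache memory only

three_bit_gb = compressed_cache_gb(FP16_CACHE_GB, bits_per_value=3)
headline_gb = FP16_CACHE_GB / 6  # the article's rounded 6x figure

print(f"3-bit cache: {three_bit_gb:.1f} GB, headline 6x: {headline_gb:.1f} GB")
print("sessions at 16-bit:", sessions_that_fit(BUDGET_GB, FP16_CACHE_GB))
print("sessions at 3-bit: ", sessions_that_fit(BUDGET_GB, three_bit_gb))
```

By raw bit count the 3-bit cache comes to just under 8GB, and the rounded 6x figure gives 7GB. Either way, the same cache budget goes from holding one full-context session to holding roughly ten.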

One engineer who implemented TurboQuant on a production vLLM setup on H100s estimated annual savings of over $260,000 per serving cluster from this single change. The GPU cost stays the same. You just get dramatically more throughput from it.

For developers running smaller models on consumer GPUs, the implications are different but just as real. A 35B parameter model with a 64K context window currently pushes the limits of a 24GB consumer GPU. With 6x KV cache compression, that same setup becomes viable, potentially on hardware you already own rather than on rented H100s you’re paying for by the hour.

For teams relying entirely on API providers rather than self-hosting, the effect plays out differently but still lands on your bill. Every major inference provider — Anthropic, OpenAI, Google — runs models at scale where memory efficiency directly affects their own infrastructure costs and, eventually, what they charge downstream. Inference costs for LLMs have dropped roughly 1,000x over the last three years. Techniques like TurboQuant are part of why that curve continues.

What TurboQuant Doesn’t Do

This is where most of the coverage gets a bit breathless and stops being useful. A few honest callouts:

It only compresses the KV cache, not the model weights. If you’re running a 70B model and your primary bottleneck is loading the model weights onto GPU, TurboQuant doesn’t help with that. It’s a targeted solution to one specific problem — the inference-time memory cost of long context windows.

The official implementation isn’t out yet. Google’s official code was expected in Q2 2026. As of early May, it hasn’t shipped. Community implementations exist — the TheTom/turboquant_plus repository on GitHub has thousands of stars, and 0xSero/turboquant provides Triton kernels with vLLM integration — but these are non-mainline builds. Integration into production frameworks like vLLM and llama.cpp is likely Q3–Q4 2026 at the earliest.

The sweet spot is large models at long contexts. For models under 13B parameters, the KV cache is proportionally smaller and the memory savings are less impactful. For short context workloads, the overhead of the compression step doesn’t pay back. The place where TurboQuant delivers its full return is exactly where the cost is highest: 70B+ models serving 32K+ token contexts at meaningful throughput.

There’s a sub-1% quality degradation at extreme compression ratios. For most applications — chat, summarization, code generation, search — this is genuinely imperceptible. For tasks that require exact numerical precision, it matters. Know your use case.

What to Do About It Today

If you’re self-hosting models and running long-context workloads, this is worth tracking actively rather than waiting for mainstream coverage.

The community implementations are already showing real results. The turboquant_plus build on GitHub reports a 100% exact-match result on Apple Silicon MLX with Qwen 35B at context lengths up to 64K tokens. The Triton kernel builds integrate with vLLM workflows for those comfortable running non-mainline inference stacks.

If you’re not ready to run a community build in production — which is a reasonable position — the practical move now is to watch the llama.cpp feature request (issue #20977 has hundreds of upvotes) and the vLLM integration thread. When official support lands, the adoption path will be straightforward. The algorithm requires no model changes and no calibration data — it’s a drop-in at the inference layer.

If you’re evaluating whether to self-host at all, TurboQuant changes the math meaningfully. The hardware requirement for running a capable long-context model drops significantly. A setup that required an H100 cluster six months ago starts to look feasible on two or three 24GB consumer GPUs with the right software stack. That’s a real shift in what’s accessible to small teams.

The Bigger Picture

TurboQuant isn’t an isolated paper. It’s part of a broader shift in where AI research attention is going.

For a few years, the dominant playbook was straightforward: bigger models, more compute, longer context. Every benchmark improvement came from scaling up. The infrastructure costs were enormous but the results justified it, and the people paying those bills were mostly large companies.

That playbook has started to change — not for ideological reasons, but economic ones. Infrastructure costs keep rising even as GPU prices fall. Inference optimization has gone from an academic exercise to a competitive necessity. The researchers who used to work on making models bigger are increasingly working on making them cheaper to run.

TurboQuant fits squarely in that trend alongside PagedAttention, speculative decoding, FlashAttention, and model weight quantization. Each of these attacks a different part of the inference cost stack. TurboQuant attacks the one that matters most at long context: the KV cache itself.

The effect compounds. Better inference efficiency means lower costs for providers. Lower costs for providers means lower API prices for developers. Lower API prices mean more applications become economically viable that weren’t before. The models don’t have to get smarter for that to matter — the same capability just becomes accessible to more people, on more hardware, for less money.

That’s the actual story here. Not a research paper. A change in who can afford to build with this technology, and at what scale.

AI Memory Down From 42GB to 7GB. Here’s What Google’s TurboQuant Actually Did. was originally published in Towards AI on Medium.