From Zachman to Three Amigos

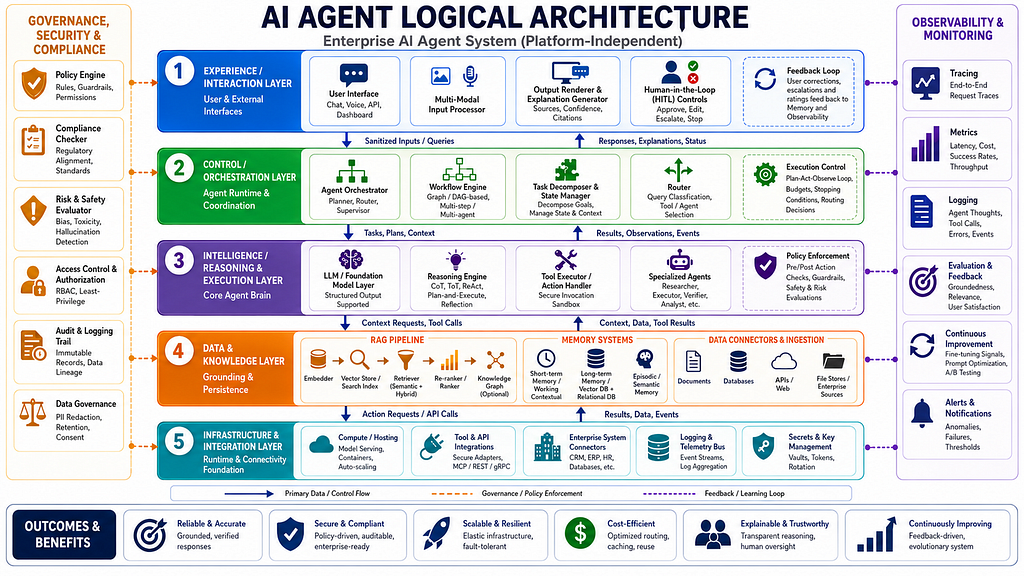

Everyone is rushing to build AI agents, but far too many teams are starting in the wrong place. They begin with a model, a framework, a vector database, or a cloud service and then try to “architect” the rest around those choices. That is backwards. A serious enterprise system begins with a logical architecture: a technology-neutral description of what the system is, how its parts relate, what decisions it must support, and what controls must exist before a single line of implementation code is written.

That discipline is not new. Enterprise architecture has long taught that you should understand the structure of the system before choosing the machinery used to realize it. Zachman’s framework is fundamentally a classification scheme for describing the enterprise comprehensively, while TOGAF gives a method for developing and governing architecture through disciplined iteration. The Rational Unified Process (RUP) adds another important lesson: architecture should be established early, validated iteratively, and shaped by clear roles, responsibilities, and artifacts. The Three Amigos tradition reinforces the same truth in practical form: shared understanding must exist before implementation begins. The point is simple but often ignored: good systems are designed from first principles, not assembled from fashionable components.

AI agents make this more urgent, not less. Unlike older software that mostly executed predefined workflows, agents now interpret intent, plan actions, call tools, retrieve knowledge, and sometimes affect business processes directly. That means architectural mistakes are no longer limited to bad user experience or technical debt; they can become governance failures, compliance failures, security failures, and operational failures. If the architecture is vague, the agent becomes a clever but unaccountable improviser. If the architecture is explicit, the agent becomes a controlled enterprise capability.

Layer 1: Experience is only the entry point

The first logical concern is the experience layer, where people and external systems interact with the agent. This includes chat, voice, API interfaces, dashboards, and multimodal input/output surfaces. Its job is not to “be the agent,” but to capture intent cleanly, present responses clearly, and expose the right human controls when judgment is required. In mature systems, this layer also handles explanation, approval, escalation, and stop actions because trust begins where the user engages the system.

A strong experience layer reduces ambiguity. It should support structured inputs when needed, make context visible, and avoid forcing users to think in terms of implementation quirks. Enterprise users do not need a theatrical interface; they need one that helps them ask precise questions, review evidence, and intervene when necessary. The best interfaces make the system easier to govern because they surface the state of work instead of hiding it behind conversational polish.
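
One way to make "capture intent cleanly" and "expose the right human controls" concrete is to give the experience layer explicit request and reply types. The sketch below is only an illustration: the names `IntentRequest`, `AgentReply`, `HumanControl`, and `present` are hypothetical and do not come from any particular framework.

```python
from dataclasses import dataclass, field
from enum import Enum


class HumanControl(Enum):
    """Actions a person may take on the agent's work (hypothetical set)."""
    APPROVE = "approve"
    ESCALATE = "escalate"
    STOP = "stop"


@dataclass(frozen=True)
class IntentRequest:
    """Technology-neutral description of what was asked, and by whom."""
    user_id: str
    channel: str                      # e.g. "chat", "voice", "api"
    goal: str                         # the intent in plain language
    context: dict = field(default_factory=dict)


@dataclass(frozen=True)
class AgentReply:
    """What the experience layer shows back to the user."""
    answer: str
    evidence: tuple = ()                      # references the user can review
    controls: tuple = (HumanControl.STOP,)    # interventions on offer


def present(reply: AgentReply) -> str:
    """Render the reply so evidence and controls are visible, not hidden."""
    lines = [reply.answer]
    lines += [f"  evidence: {item}" for item in reply.evidence]
    lines.append("  controls: " + ", ".join(c.value for c in reply.controls))
    return "\n".join(lines)
```

Because the types say nothing about a chat widget or a voice stack, the same contract can sit behind any of the surfaces listed above.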

Layer 2: Orchestration is the control plane

Once intent enters the system, orchestration becomes the controlling intelligence of the whole flow. This layer decides how a request is decomposed, what sequence of steps should happen, which route to take, and when the system should wait for human involvement. It is the difference between an agent that can talk and an agent that can operate. In enterprise environments, orchestration should be treated as a formal control plane, not an invisible convenience layer.

This matters because agentic work is inherently dynamic. A request may require classification, planning, retrieval, multiple tool calls, conditional branching, retries, or escalation. If orchestration is weak, the system becomes brittle and difficult to predict. If it is strong, the agent can manage state, enforce budgets, respect policies, and coordinate work across multiple tasks without losing control. That is why orchestration is the bridge between intent and execution.
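
A minimal sketch of orchestration as a control plane might look like the following. It assumes a plan is simply an ordered list of step names and that each step has a zero-argument handler. `RunState`, `orchestrate`, `max_steps`, and `needs_approval` are names invented for this illustration, and a real orchestrator would also handle branching and retries.

```python
from dataclasses import dataclass, field


@dataclass
class RunState:
    """State the orchestrator carries across the steps of one request."""
    steps_used: int = 0
    log: list = field(default_factory=list)
    status: str = "running"  # running | done | needs_human | over_budget


def orchestrate(plan, handlers, max_steps=5, needs_approval=frozenset()):
    """Run steps in order, enforcing a step budget and human checkpoints."""
    state = RunState()
    for step in plan:
        if state.steps_used >= max_steps:
            # Budget exhausted: stop rather than keep improvising.
            state.status = "over_budget"
            return state
        if step in needs_approval:
            # Pause before a sensitive step and wait for a person.
            state.status = "needs_human"
            state.log.append(f"paused before {step}")
            return state
        result = handlers[step]()
        state.steps_used += 1
        state.log.append(f"{step}: {result}")
    state.status = "done"
    return state
```

The useful property is that every exit path sets an explicit status, so the caller always knows whether the work finished, ran out of budget, or is waiting for a person.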

Layer 3: Reasoning must be bounded

The reasoning layer is where language models, inference logic, tool selection, and specialized sub-agents collaborate to produce decisions. This is the most visible part of the system and the one people most often overvalue. But a model is not an architecture. It is a component inside an architecture. Treating the model as the whole system leads to dangerous overconfidence, because reasoning without boundaries can produce fluent but unsafe behavior.

In enterprise AI, reasoning must be constrained by policy, role, and execution context. The system may infer a plan, but not every plan should be executed. It may identify an action, but not every action should be authorized. That is why policy enforcement, verification, and tool sandboxing belong close to the reasoning layer. The architecture should let the model contribute intelligence while preventing it from becoming an unchecked authority. The right design uses the model as a decision-support engine inside a larger structure of control and oversight.
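
The idea that a model may propose an action without being allowed to execute it can be sketched as a gate between proposal and tool call. Everything here is an assumption made for illustration: the `ALLOWED_TOOLS` table, the role names, and the tool names are hypothetical, and a production system would typically load policy from a dedicated policy engine rather than a dictionary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    """An action the reasoning layer would like to take."""
    tool: str
    args: dict


# Hypothetical policy table: which tools each role may invoke.
ALLOWED_TOOLS = {
    "support_agent": {"lookup_order", "draft_reply"},
    "finance_agent": {"lookup_order", "issue_refund"},
}


def authorize(role: str, action: ProposedAction) -> bool:
    """Unknown roles get an empty allow-list, so the default is deny."""
    return action.tool in ALLOWED_TOOLS.get(role, set())


def execute_if_allowed(role: str, action: ProposedAction, tools: dict) -> dict:
    """Run the tool only when policy permits; otherwise report the denial."""
    if not authorize(role, action):
        return {"status": "denied", "tool": action.tool}
    return {"status": "ok", "result": tools[action.tool](**action.args)}
```

The model never calls a tool directly in this arrangement. It only produces a `ProposedAction`, and the decision to run it lives outside the model.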

Layer 4: Knowledge is the grounding layer

An AI agent that lacks grounded knowledge is not truly useful in an enterprise setting. It may generate plausible language, but plausibility is not reliability. The knowledge layer provides the grounding that keeps the system tied to organizational reality: documents, databases, APIs, records, memories, retrieval pipelines, and knowledge graphs. This is where the agent gets context, evidence, and traceability.

A good logical architecture distinguishes between short-term context, long-term memory, episodic traces, and semantic knowledge. It also distinguishes between raw source material and validated enterprise knowledge. That distinction matters because business data is often incomplete, stale, duplicated, or sensitive. If the system cannot manage provenance and freshness, it will confidently produce poor answers. Knowledge architecture is therefore not a side feature of agent design; it is the basis of trustworthiness.
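
Provenance and freshness become manageable once every piece of retrieved knowledge carries them as data. The following is a rough sketch under simple assumptions: the `KnowledgeItem` fields, the `validated` flag, and the 30-day default are illustrative choices, not a recommended standard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class KnowledgeItem:
    """A retrieved fact together with where and when it came from."""
    text: str
    source: str             # provenance: the system or document of origin
    retrieved_at: datetime  # freshness: when the content was last confirmed
    validated: bool         # raw source material vs. validated knowledge


def usable(item: KnowledgeItem, now: datetime, max_age: timedelta) -> bool:
    """An item may ground an answer only if it is validated and fresh."""
    return item.validated and (now - item.retrieved_at) <= max_age


def ground(items, now, max_age=timedelta(days=30)):
    """Keep only the items that are safe to cite as evidence."""
    return [item for item in items if usable(item, now, max_age)]
```

Filtering before generation means that stale or unvalidated material never reaches the model as if it were authoritative, and the surviving items still carry their `source` for traceability.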

Layer 5: Infrastructure must follow design

The infrastructure and integration layer is where the system becomes real in operational terms. It includes compute, hosting, runtime connectivity, APIs, enterprise system adapters, logging buses, and secrets management. But this layer should never be mistaken for the architecture itself. Too many projects start by choosing cloud services and then backfill the logical model afterward. That usually produces technical sophistication without architectural coherence.

The proper sequence is the reverse. First define the behavior and responsibilities of the system, then choose the machinery that can support them. Infrastructure should enable the logic, not dictate it. This distinction becomes especially important when the agent must integrate with CRM, ERP, HR, identity systems, internal services, or regulated data environments. The logical model tells you what must happen; the infrastructure tells you how it can happen safely and reliably.
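
In code, "define the behavior first, then choose the machinery" often shows up as an interface that the logic depends on and an adapter that satisfies it. The sketch below uses Python's `typing.Protocol` for the interface. `CustomerDirectory`, `InMemoryDirectory`, and `notify_customer` are hypothetical names, and the in-memory adapter stands in for whatever CRM or identity system a real deployment would use.

```python
from typing import Protocol


class CustomerDirectory(Protocol):
    """Logical capability: what the agent needs, not how it is provided."""

    def email_for(self, customer_id: str) -> str: ...


class InMemoryDirectory:
    """One possible adapter; a CRM-backed adapter would expose the same method."""

    def __init__(self, records: dict):
        self._records = dict(records)

    def email_for(self, customer_id: str) -> str:
        return self._records[customer_id]


def notify_customer(directory: CustomerDirectory, customer_id: str, message: str) -> str:
    """Agent logic written against the capability, not a vendor SDK."""
    return f"to={directory.email_for(customer_id)} body={message}"
```

Swapping the adapter changes how the lookup happens without touching `notify_customer`, which is the practical meaning of infrastructure enabling the logic rather than dictating it.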

Layer 6: Governance must be built in

Enterprise AI cannot rely on after-the-fact governance. If an agent can access systems, move data, or trigger actions, then governance must be part of the architecture from the beginning. Policy engines, access control, compliance checks, audit trails, data governance, and risk evaluation are not optional extras. They are foundational controls that make the system defensible.

This is where enterprise architecture traditions still matter deeply. Zachman’s framework pushes us to describe the enterprise comprehensively. TOGAF reminds us that architecture is a governed discipline, not an improvisation. RUP reinforces the need for structured artifacts and iterative validation. In an AI agent system, governance should answer hard questions: who can do what, under which conditions, with which data, and with what evidence afterward. If those answers are not explicit, the architecture is incomplete.
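
The four governance questions (who, under which conditions, with which data, with what evidence) can be expressed as a rule check that always writes an audit record. This is a deliberately small sketch: the `Rule` fields, the numeric data classification, and the JSON audit format are assumptions chosen for the example.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """Who may do what, up to which data classification."""
    role: str
    action: str
    max_data_class: int  # 0 = public, 1 = internal, 2 = restricted


def decide(rules, role, action, data_class, audit_log) -> bool:
    """Evaluate the request and record the decision, whether allowed or not."""
    allowed = any(
        r.role == role and r.action == action and data_class <= r.max_data_class
        for r in rules
    )
    # The evidence afterward: one entry per decision, including denials.
    audit_log.append(json.dumps(
        {"who": role, "action": action, "data_class": data_class, "allowed": allowed},
        sort_keys=True,
    ))
    return allowed
```

Recording denials as well as approvals matters, because a later review needs to see what the agent attempted and not only what it was permitted to do.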

Layer 7: Observability enables learning

A serious AI agent must be observable. Tracing, metrics, logs, evaluations, and alerts are how the organization understands what the system is actually doing. Without observability, failures become mysteries. With observability, failures become feedback. This makes monitoring a core architectural requirement rather than an operational luxury.

Observability is also what allows improvement over time. It reveals weak retrieval, poor routing, unsafe tool use, confusing interactions, and policy friction. Those signals should feed back into prompts, policies, workflows, and knowledge design. In a mature system, observability closes the loop between runtime behavior and architectural refinement. That is how an agent evolves from a prototype into a dependable platform.
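
As a rough illustration of tracing at the step level, the sketch below wraps each unit of work in a span that records its name, attributes, outcome, and duration. The module-level `TRACE` list and the `span` helper are hypothetical stand-ins for a real tracing backend, and they use only the standard library.

```python
import time
from contextlib import contextmanager

TRACE = []  # stand-in for a tracing backend


@contextmanager
def span(name, **attrs):
    """Record one step: what ran, with what attributes, and how it ended."""
    start = time.perf_counter()
    record = {"name": name, "status": "ok", **attrs}
    try:
        yield record  # the step may attach extra fields, e.g. hit counts
    except Exception as exc:
        record["status"] = "error"
        record["error"] = repr(exc)
        raise  # observability must not swallow failures
    finally:
        record["duration_s"] = time.perf_counter() - start
        TRACE.append(record)
```

A retrieval step could then be wrapped as `with span("retrieve", query=q) as rec:` and set `rec["hits"]` inside the block. Spans with zero hits or an `error` status are the kind of signal that can feed back into knowledge design and routing.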

Conclusion

The deepest mistake in modern AI agent design is starting with implementation and hoping architecture will emerge later. It will not. Logical architecture is what gives an AI agent shape, boundaries, accountability, and durability. It is what lets teams discuss the system in platform-independent terms before choosing vendors, frameworks, or code.

That is why the old frameworks still matter. Zachman teaches completeness, TOGAF teaches disciplined transformation, RUP teaches early architectural clarity, and the Three Amigos teach shared understanding. AI agents do not excuse us from that discipline; they demand it even more. If the goal is to build systems that are secure, explainable, scalable, and enterprise-ready, then the first step is not picking a model. The first step is designing the logic of the system itself.

AI Agent Logical Architecture was originally published in Towards AI on Medium.