The transition from deterministic graphical user interfaces to stochastic, agent-driven interfaces represents a fundamental shift in human-AI interaction. This evolution, often called Generative User Interface (GenUI), moves toward real-time, context-aware interface construction powered by Large Language Models. Two distinct architectural paradigms have emerged for building such interfaces, and the choice between them has deep implications for security, performance, and portability.

Two Paradigms for Agent-Driven UI

Code-First: Generative UI

The code-first approach treats the LLM as a code generator. The agent produces framework-specific output, such as React Server Components (RSCs), JSX fragments, or executable JavaScript, which the client compiles and renders directly. Vercel’s AI SDK is the canonical example: its `streamUI` function streams RSCs from the edge, and the client mounts them as they arrive.

This model is flexible. The agent can produce arbitrary UI because it has the full expressiveness of the target framework at its disposal. But that expressiveness comes at a cost: the agent must know the client’s framework, the generated code must be sandboxed to prevent injection, and every response consumes tokens proportional to the complexity of the code rather than the structure it describes.

Contract-First: A2UI and Freesail

The contract-first approach treats the LLM as a composer, not a coder. The agent selects from a pre-approved vocabulary of components defined in a JSON schema (the ‘catalog’) and emits structured JSON messages that describe what to render — not how to render it. The client’s renderer translates these messages into native UI using its own component implementations.

The Agent-to-User Interface (A2UI) protocol formalises this model. Freesail is a full-stack implementation of A2UI v0.9 that adds a validation gateway, progressive catalog disclosure, and bidirectional state inspection on top of the base protocol.

Deep Dive: Freesail SDK Architecture

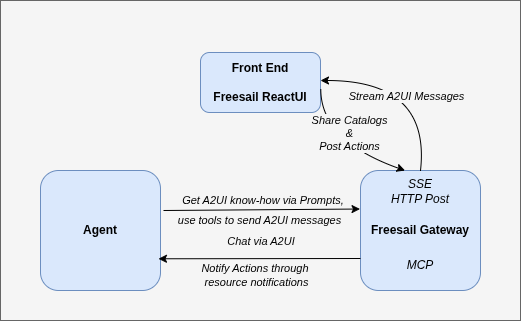

Freesail implements the contract-first model through a three-node architecture that separates decision-making, validation, and presentation into independent processes.

The Three Nodes

Agent (The Brain): The decision-making process — typically a LangChain, LangGraph, or custom LLM pipeline — that composes A2UI JSON messages using MCP tools exposed by the gateway. The agent never generates framework code. It calls get_catalogs to discover available components, get_component_details to understand their properties, then create_surface and update_components to build UI. The agent connects to the gateway via MCP Streamable HTTP and has no ingress — it makes only outbound calls.
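To make the agent’s side concrete, here is a minimal TypeScript sketch of that discover-then-compose sequence. It is not Freesail’s agent runtime: `ToolCaller` and the in-memory `fakeGateway` are hypothetical stand-ins for a real MCP client connected over Streamable HTTP, and the argument shapes passed to each tool (`names`, `surfaceId`, `components`) are assumptions. Only the four tool names come from the description above.

```typescript
// Hypothetical stand-in for an MCP client: invoke a named gateway tool with JSON arguments.
type ToolCaller = (name: string, args: Record<string, unknown>) => Promise<unknown>;

// Discover, inspect, create, populate: the order described above.
// The argument shapes (names, surfaceId, components) are illustrative assumptions.
async function buildWeatherSurface(callTool: ToolCaller): Promise<string[]> {
  const log: string[] = [];

  // 1. Slim index of what the catalog offers.
  const index = (await callTool("get_catalogs", {})) as { components: string[] };
  log.push(`catalog lists ${index.components.length} components`);

  // 2. Full property reference, only for the component the agent intends to use.
  await callTool("get_component_details", { names: ["WeatherCard"] });
  log.push("loaded WeatherCard details");

  // 3 and 4. Create the surface, then send the flat component list.
  await callTool("create_surface", { surfaceId: "weather_surface" });
  await callTool("update_components", {
    surfaceId: "weather_surface",
    components: [
      { id: "root", component: "Column", children: ["weather_card"] },
      { id: "weather_card", component: "WeatherCard", location: "London", temperature: 15, unit: "C" },
    ],
  });
  log.push("surface created and populated");
  return log;
}

// In-memory fake gateway that records the tool names it receives (for local experiments only).
const calls: string[] = [];
const fakeGateway: ToolCaller = async (name) => {
  calls.push(name);
  return name === "get_catalogs" ? { components: ["Column", "Text", "WeatherCard"] } : { ok: true };
};

buildWeatherSurface(fakeGateway).then(() => {
  console.log(calls.join(" -> "));
  // get_catalogs -> get_component_details -> create_surface -> update_components
});
```

Because the agent only ever holds an outbound tool-calling handle, nothing in this sketch listens for incoming connections, which mirrors the no-ingress property described above.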

Gateway (The Transport and Validation Layer): A standalone Node.js MCP server that sits between the agent and the frontend. Every update_components call is validated against the catalog’s JSON schema using compiled AJV validators before it reaches the browser. Invalid payloads — wrong property types, missing required fields, unknown component names — are rejected with structured error messages returned to the LLM as tool output. The agent sees exactly what went wrong and self-corrects on the next turn. The gateway also manages sessions, handles reconnection with a configurable grace period, coordinates actions via MCP resource subscriptions, and injects the catalog vocabulary into the agent’s system prompt.
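The validation step can be sketched as a function that turns a rejected payload into readable errors for the LLM. Freesail compiles its catalog schemas with AJV; to stay dependency-free, this sketch swaps in a hand-rolled checker covering only the three failure classes named above (unknown component, missing required field, wrong property type). The simplified `CatalogEntry` format, the `WeatherCard` field list, and the error wording are all assumptions.

```typescript
// Simplified catalog entry (an assumption): real catalogs are JSON Schema documents.
interface CatalogEntry {
  required: string[];
  properties: Record<string, "string" | "number" | "array">;
}

// Hypothetical catalog fragment matching the weather example in this article.
// A Map avoids accidental hits on inherited keys such as "toString".
const catalog = new Map<string, CatalogEntry>([
  ["Column", { required: ["children"], properties: { children: "array", padding: "string" } }],
  ["WeatherCard", {
    required: ["location", "temperature", "unit"],
    properties: { location: "string", temperature: "number", unit: "string", condition: "string" },
  }],
]);

interface Component {
  id: string;
  component: string;
  [prop: string]: unknown;
}

// Collect every problem rather than stopping at the first, so the LLM can fix them all in one turn.
function validateComponents(components: Component[]): string[] {
  const errors: string[] = [];
  for (const c of components) {
    const entry = catalog.get(c.component);
    if (entry === undefined) {
      errors.push(`${c.id}: unknown component "${c.component}"`);
      continue;
    }
    for (const field of entry.required) {
      if (!(field in c)) errors.push(`${c.id}: missing required property "${field}"`);
    }
    for (const [prop, expected] of Object.entries(entry.properties)) {
      if (!(prop in c)) continue; // optional and absent
      const actual = Array.isArray(c[prop]) ? "array" : typeof c[prop];
      if (actual !== expected) errors.push(`${c.id}: "${prop}" should be ${expected}, got ${actual}`);
    }
  }
  return errors;
}

// Returned to the agent as tool output instead of being forwarded to the browser.
console.log(
  validateComponents([
    { id: "weather_card", component: "WeatherCard", location: "London", temperature: "15" },
    { id: "chart", component: "BarChart" },
  ]),
);
```

Like a schema validator, this check says nothing about whether a well-typed value is correct: a `temperature` of 99 would pass.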

Frontend (The Renderer): A stateful React layer that receives validated A2UI messages over SSE, renders them as native component trees, and maintains the local data model with two-way binding. The renderer never trusts the agent directly — it trusts the gateway, which has already validated everything. Additionally, the renderer can enforce its own rules via interceptor hooks (onBeforeCreateSurface, onBeforeUpdateComponents, etc.) that can block operations the gateway’s schema validation cannot catch — permission boundaries, device constraints, or UI state guards.
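The interceptor idea can be sketched as client-side guards that run after gateway validation and before a message is applied. The hook names `onBeforeCreateSurface` and `onBeforeUpdateComponents` come from the text; their signatures here (a synchronous function returning `false` to block), the `Interceptors` type, and the `applyMessage` helper are hypothetical and are not Freesail’s React API. The example policy blocks `Button` components on a read-only kiosk, a permission boundary that a schema cannot express.

```typescript
interface CreateSurface { type: "createSurface"; surfaceId: string }
interface UpdateComponents {
  type: "updateComponents";
  surfaceId: string;
  components: { id: string; component: string }[];
}
type Message = CreateSurface | UpdateComponents;

// Hypothetical hook signatures: returning false blocks the operation.
interface Interceptors {
  onBeforeCreateSurface?: (msg: CreateSurface) => boolean;
  onBeforeUpdateComponents?: (msg: UpdateComponents) => boolean;
}

// Apply an already-validated message only if the client's own guards allow it.
// A missing hook means "allow".
function applyMessage(msg: Message, hooks: Interceptors, apply: (m: Message) => void): boolean {
  const allowed =
    msg.type === "createSurface"
      ? hooks.onBeforeCreateSurface?.(msg) ?? true
      : hooks.onBeforeUpdateComponents?.(msg) ?? true;
  if (allowed) apply(msg);
  return allowed;
}

// Example policy for a read-only kiosk: cap open surfaces (a device constraint)
// and refuse any Button (a permission boundary the catalog schema cannot express).
const openSurfaces = new Set<string>();
const kioskHooks: Interceptors = {
  onBeforeCreateSurface: () => openSurfaces.size < 3,
  onBeforeUpdateComponents: (m) => !m.components.some((c) => c.component === "Button"),
};

const mount = (m: Message): void => {
  if (m.type === "createSurface") openSurfaces.add(m.surfaceId);
};
console.log(applyMessage({ type: "createSurface", surfaceId: "weather_surface" }, kioskHooks, mount)); // true
console.log(
  applyMessage(
    { type: "updateComponents", surfaceId: "weather_surface", components: [{ id: "buy", component: "Button" }] },
    kioskHooks,
    mount,
  ),
); // false
```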

Communication Architecture

Freesail implements a dual-channel communication pattern:

Downstream (Agent → Frontend via Gateway): The agent calls MCP tools on the gateway. The gateway validates the payload, then streams the A2UI message to the frontend over a persistent SSE connection. Four message types flow downstream: createSurface, updateComponents, updateDataModel, and deleteSurface. Two additional Freesail extensions, getDataModel and getComponentTree, allow the agent to request the current client-side data model and UI state on demand.
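The downstream vocabulary maps naturally onto a TypeScript discriminated union, which lets the compiler check that a renderer handles every message type. The field names below (`surfaceId`, `components`, `path`, `value`) follow the weather example and the JSON Pointer bindings described in this article, but the exact A2UI v0.9 envelope shapes are assumptions, as is the `describeMessage` helper.

```typescript
// Assumed shapes for illustration; check the A2UI v0.9 specification for the real envelopes.
type Component = { id: string; component: string; [prop: string]: unknown };

type DownstreamMessage =
  | { type: "createSurface"; surfaceId: string }
  | { type: "updateComponents"; surfaceId: string; components: Component[] }
  | { type: "updateDataModel"; surfaceId: string; path: string; value: unknown }
  | { type: "deleteSurface"; surfaceId: string }
  // Freesail extensions: the agent asks the client to report its current state.
  | { type: "getDataModel"; surfaceId: string }
  | { type: "getComponentTree"; surfaceId: string };

// Describe what a renderer would do; the `never` check makes the switch exhaustive,
// so adding a message type without handling it becomes a compile-time error.
function describeMessage(msg: DownstreamMessage): string {
  switch (msg.type) {
    case "createSurface":
      return `mount empty surface ${msg.surfaceId}`;
    case "updateComponents":
      return `upsert ${msg.components.length} component(s) on ${msg.surfaceId}`;
    case "updateDataModel":
      return `set ${msg.path} on ${msg.surfaceId}`;
    case "deleteSurface":
      return `unmount ${msg.surfaceId}`;
    case "getDataModel":
      return `report data model of ${msg.surfaceId} upstream`;
    case "getComponentTree":
      return `report component tree of ${msg.surfaceId} upstream`;
    default: {
      const unreachable: never = msg;
      return unreachable;
    }
  }
}

console.log(describeMessage({ type: "updateDataModel", surfaceId: "weather_surface", path: "/weather/temperature", value: 16 }));
// set /weather/temperature on weather_surface
```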

Upstream (Frontend → Agent via Gateway): User interactions (button clicks, form submissions, chat messages) are sent from the frontend to the gateway via HTTP POST. The gateway queues them as MCP resources and pushes ResourceUpdated notifications to the agent. The agent drains the queue by reading the resource — no polling, no webhook configuration. Session ownership is explicit: the agent runtime calls claim_session on connect and release_session on disconnect.
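The upstream path reduces to a queue plus a change notification. This in-memory sketch assumes nothing about Freesail’s internals: `ActionQueue`, `push`, `subscribe`, and `read` are hypothetical names standing in for the gateway’s per-session MCP resource, the ResourceUpdated notification, and the agent’s resource read, and the `send_message` action name is invented. The agent learns that something changed and then drains everything pending in one read, so it never polls.

```typescript
interface UserAction {
  surfaceId: string;
  name: string;
  payload?: Record<string, unknown>;
}

// Hypothetical stand-in for the gateway's per-session action resource.
class ActionQueue {
  private pending: UserAction[] = [];
  private listeners: Array<() => void> = [];

  // Gateway side: called when the frontend POSTs an interaction.
  push(action: UserAction): void {
    this.pending.push(action);
    for (const notify of this.listeners) notify(); // "the resource changed"
  }

  // Agent side: register interest once instead of polling.
  subscribe(listener: () => void): void {
    this.listeners.push(listener);
  }

  // Agent side: reading drains the queue, so each action is delivered once.
  read(): UserAction[] {
    const drained = this.pending;
    this.pending = [];
    return drained;
  }
}

const queue = new ActionQueue();
const handled: string[] = [];
queue.subscribe(() => {
  for (const a of queue.read()) handled.push(`${a.surfaceId}:${a.name}`);
});
queue.push({ surfaceId: "__chat", name: "send_message", payload: { text: "Weather in London?" } });
queue.push({ surfaceId: "weather_surface", name: "refresh" });
console.log(handled); // two entries, in arrival order
```

In a real deployment the notification crosses a network boundary, so several actions may accumulate before the agent reads; drain-on-read handles that case without extra bookkeeping.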

Chat as an A2UI Surface

In Freesail, chat is not a separate protocol — it is an A2UI surface. The quick-start example React app bootstraps a __chat surface at startup with components from the chat catalog (message list, streaming token display, typing indicator, input box). All conversation state — user messages, agent replies, streaming tokens — flows through standard updateDataModel messages on the same SSE connection as every other surface.

Treating chat as an A2UI surface has a significant architectural consequence: the agent process never exposes an HTTP endpoint, a WebSocket, or any other ingress route. It sits entirely behind the gateway, making only outbound MCP calls, so the browser has no route to the agent.
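As an illustration of chat riding on ordinary data-model updates, the sketch below turns a stream of LLM tokens into `updateDataModel` messages for the `__chat` surface. The data-model layout (a `/typing` flag and `/messages/<n>/text`), the message shape, and the `replyUpdates` helper are hypothetical; Freesail’s chat catalog may organise its data differently.

```typescript
interface ChatUpdate {
  type: "updateDataModel";
  surfaceId: "__chat";
  path: string; // JSON Pointer into the chat surface's data model (hypothetical layout)
  value: unknown;
}

// Turn a token stream for reply number `index` into data-model updates.
function replyUpdates(index: number, tokens: string[]): ChatUpdate[] {
  const update = (path: string, value: unknown): ChatUpdate => ({ type: "updateDataModel", surfaceId: "__chat", path, value });
  const updates: ChatUpdate[] = [update("/typing", true)]; // show the typing indicator
  let text = "";
  for (const token of tokens) {
    text += token;
    // Re-send the accumulated text so each update is self-contained.
    updates.push(update(`/messages/${index}/text`, text));
  }
  updates.push(update("/typing", false)); // hide the indicator once the reply is complete
  return updates;
}

for (const u of replyUpdates(1, ["It is ", "15 degrees ", "in London."])) {
  console.log(u.path, JSON.stringify(u.value));
}
```

Re-sending the accumulated text costs a few extra bytes per token, but a client that misses an intermediate update still converges on the full reply.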

The Adjacency List Model

Freesail implements the A2UI adjacency list model: components are stored as a flat array with unique ID references rather than as a nested tree. Updating a single component means patching only that ID, an O(1) keyed lookup, rather than reconstructing the tree. Components reference the data model via JSON Pointer (RFC 6901) bindings, so the agent can change displayed data reactively without re-sending the component structure. The updateComponents message for an example weather surface is shown below.
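The flat layout itself can be sketched in a few lines ahead of the weather message. Keeping components in a `Map` keyed by ID is what makes a single-component patch O(1); the nested tree is only materialised at render time by following `children` IDs from `root`. The `Surface` class, its method names, and the cycle guard are hypothetical, not Freesail’s renderer internals.

```typescript
interface Component {
  id: string;
  component: string;
  children?: string[];
  [prop: string]: unknown;
}
interface TreeNode { id: string; component: string; children: TreeNode[] }

// Hypothetical surface store: components live in a flat map keyed by ID.
class Surface {
  private byId = new Map<string, Component>();

  // Upsert by ID: O(1) per component, no tree rebuild.
  update(components: Component[]): void {
    for (const c of components) this.byId.set(c.id, c);
  }

  // Materialise the nested tree on demand by following children IDs from the root.
  // `seen` skips unknown IDs and cuts cycles, since the graph comes from the agent.
  tree(id = "root", seen = new Set<string>()): TreeNode | undefined {
    const c = this.byId.get(id);
    if (c === undefined || seen.has(id)) return undefined;
    seen.add(id);
    const children = (c.children ?? [])
      .map((childId) => this.tree(childId, seen))
      .filter((n): n is TreeNode => n !== undefined);
    return { id: c.id, component: c.component, children };
  }
}

const surface = new Surface();
surface.update([
  { id: "root", component: "Column", children: ["weather_card"] },
  { id: "weather_card", component: "WeatherCard", temperature: 15 },
]);
// Patch only the card; the Column entry is untouched.
surface.update([{ id: "weather_card", component: "WeatherCard", temperature: 16 }]);
console.log(JSON.stringify(surface.tree()));
// {"id":"root","component":"Column","children":[{"id":"weather_card","component":"WeatherCard","children":[]}]}
```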

```json
{
  "surfaceId": "weather_surface",
  "components": [
    {
      "id": "root",
      "component": "Column",
      "children": ["weather_card"],
      "padding": "16px"
    },
    {
      "id": "weather_card",
      "component": "WeatherCard",
      "feelsLike": 14,
      "background": "#607D8B",
      "windSpeed": 20,
      "humidity": 80,
      "temperature": 15,
      "condition": "cloudy",
      "unit": "C",
      "location": "London"
    }
  ]
}
```

Progressive Catalog Disclosure

Most A2UI implementations inject the full catalog schema into the agent’s system prompt. With real-world applications running 30–50+ components across composed catalogs, this burns tokens and dilutes the LLM’s attention.

Freesail takes a different approach. The gateway exposes three MCP tools for progressive disclosure: get_catalogs returns a slim index (names, descriptions, type definitions only), get_component_details returns full property references for specific components, and get_function_details does the same for functions.

The agent loads from the catalog only the components it needs for the current task. This has two advantages: it spends no more tokens than necessary, and it keeps the agent’s context window free of schema it will not use. Progressive disclosure is listed on the A2UI roadmap as a future item; Freesail ships it today.
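A two-tier lookup of this kind can be sketched as follows. The functions mirror the roles of `get_catalogs` and `get_component_details`, but the `ComponentDef` shape, the return types, and the two sample entries are hypothetical, and this index omits the type definitions that the text says the slim index also carries.

```typescript
interface PropertyRef { type: string; description: string; required?: boolean }
interface ComponentDef { description: string; properties: Record<string, PropertyRef> }

// Hypothetical catalog; real catalogs are JSON Schema documents with many more entries.
const fullCatalog = new Map<string, ComponentDef>([
  ["Column", {
    description: "Vertical layout container",
    properties: { children: { type: "string[]", description: "Child component IDs", required: true } },
  }],
  ["WeatherCard", {
    description: "Current conditions for one location",
    properties: {
      location: { type: "string", description: "City name", required: true },
      temperature: { type: "number", description: "Current temperature", required: true },
      unit: { type: "string", description: "C or F", required: true },
      condition: { type: "string", description: "Short weather summary" },
    },
  }],
]);

// Tier 1 (the role of get_catalogs): names and one-line descriptions only.
function catalogIndex(): { name: string; description: string }[] {
  return Array.from(fullCatalog, ([name, def]) => ({ name, description: def.description }));
}

// Tier 2 (the role of get_component_details): full references for the requested names only.
function componentDetails(names: string[]): Record<string, ComponentDef> {
  const out: Record<string, ComponentDef> = {};
  for (const name of names) {
    const def = fullCatalog.get(name);
    if (def !== undefined) out[name] = def; // unknown names are silently skipped
  }
  return out;
}

console.log(catalogIndex());
console.log(Object.keys(componentDetails(["WeatherCard"])));
```

With only two entries the saving is negligible; the pattern pays off when the catalog holds dozens of components and a given task touches a handful of them.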

Comparative Analysis

Vercel AI SDK: Monolithic Code Generation

Vercel’s approach is monolithic — it assumes the developer owns the prompt, frontend, and data pipeline. The `streamUI` function streams React Server Components directly from the edge. The agent generates executable code, which the client compiles and mounts.

Strengths: Maximum flexibility. The agent can produce any UI that React can render, including complex interactive patterns that would require many catalog components to express declaratively. Tight integration with Next.js and the Vercel platform.

Weaknesses: Coupled to React and the Edge runtime. No validation layer between the LLM and the rendered output — security relies on sandboxing. Higher token consumption for complex UIs. No portability — the same agent cannot drive a Flutter or Angular client.

CopilotKit: Transport-First Runtime

CopilotKit focuses on the runtime infrastructure for agentic applications through the AG-UI protocol. AG-UI handles real-time bidirectional connections, state synchronisation, and human-in-the-loop approvals. CopilotKit supports A2UI as a rendering payload and provides high-level React hooks (useCopilotAction, useCopilotChat) for common patterns.

Strengths: Strong developer experience for common copilot patterns. AG-UI as a transport layer is well-designed for state synchronisation. Human-in-the-loop is a first-class concept. Growing ecosystem with Angular and Flutter renderers.

Weaknesses: The agent-initiated human-in-the-loop model differs from client-enforced interceptors, because the agent, not the client, decides when to ask for approval. There is no standalone validation gateway; schema validation happens at the renderer level, so invalid payloads reach the browser before they are caught. The full catalog schema is injected into the prompt rather than progressively disclosed.

Freesail: Contract-First with Gateway Validation

Freesail prioritises the contract. The catalog JSON schema is the single source of truth — enforced at build time by the CLI (freesail validate catalog) and at runtime by the gateway’s AJV validators. The agent cannot produce UI that drifts from the contract because every tool call is validated before it reaches the frontend.

Strengths: No hallucinated components, because the agent’s vocabulary is bounded by the catalog (schema validation cannot, however, catch data values that are well-typed but wrong). Gateway validation catches errors before they reach the browser. Token-efficient progressive disclosure. Chat over A2UI means the agent has no ingress. Renderer-side interceptors give the client additional enforcement authority independent of the gateway. The full stack is MIT-licensed.

Weaknesses: React-only renderer (no Angular or Flutter yet). The gateway is an additional process to deploy and operate.

Security and Performance Evaluation

Security: Declarative vs Executable

The fundamental security distinction is between declarative and executable output.

In code-first systems, the agent produces executable code. Even with sandboxing, the attack surface includes every construct the target language supports — event handlers, dynamic imports, arbitrary DOM manipulation. Defence is subtractive: start with everything and try to block the dangerous parts.

In Freesail’s contract-first model, the agent produces declarative JSON that references pre-approved components by name. The gateway validates every field against the catalog schema. The renderer maps component names to implementations that the developer wrote and registered. Defence is additive: start with nothing and explicitly approve each component. There is no path from agent output to arbitrary code execution.

For regulated industries — finance, healthcare, government — this distinction is often the deciding factor.
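The additive model can be sketched as an allow-list registry: the renderer resolves component names through a map the developer populated, and an unregistered name resolves to an inert placeholder instead of code. This is a simplification under stated assumptions: render functions return HTML strings rather than React elements (so agent-supplied text is escaped explicitly, which React does automatically), and `registry`, `render`, and `escapeHtml` are hypothetical names.

```typescript
type Props = Record<string, unknown>;
type Renderer = (props: Props) => string;

// Agent-supplied strings are data: escape them so they can never become markup.
function escapeHtml(s: string): string {
  return s.replace(/&/g, "&amp;").replace(/</g, "&lt;").replace(/>/g, "&gt;").replace(/"/g, "&quot;");
}

// Developer-written implementations: the only code that can run in response to agent output.
const registry = new Map<string, Renderer>([
  ["Text", (p) => `<p>${escapeHtml(String(p["text"] ?? ""))}</p>`],
  ["WeatherCard", (p) => `<section>${escapeHtml(String(p["location"] ?? ""))}: ${Number(p["temperature"])}</section>`],
]);

// Additive defence: an unregistered name resolves to an inert comment, never to code.
function render(component: string, props: Props): string {
  const impl = registry.get(component);
  return impl ? impl(props) : `<!-- unknown component: ${escapeHtml(component)} -->`;
}

console.log(render("WeatherCard", { location: "London", temperature: 15 })); // <section>London: 15</section>
console.log(render("Script", { src: "https://evil.example/x.js" })); // <!-- unknown component: Script -->
console.log(render("Text", { text: "<img src=x onerror=alert(1)>" })); // <p>&lt;img src=x onerror=alert(1)&gt;</p>
```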

Performance: Token Efficiency

Code-first approaches consume tokens proportional to the syntactic complexity of the generated code. A simple card with a title, subtitle, and button might require 200–300 tokens of JSX. The same card in A2UI requires a flat JSON object with a component name and property values — typically 40–60 tokens.

Freesail’s progressive disclosure further reduces prompt-side token consumption. Rather than injecting the full catalog schema (which can exceed 3,000 tokens for a 30-component catalog), the agent fetches a slim index on session start and loads full property references only for the components it decides to use. The per-turn context-window overhead for catalog knowledge therefore scales with the components actually used rather than with the size of the catalog.

Delta-based data model updates (update_data_model with a JSON Pointer path) allow the agent to change displayed content without re-sending the component structure, further reducing per-turn token cost for data-heavy applications like dashboards.
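On the client, applying such a delta means resolving a JSON Pointer against the local data model and replacing the value there. The sketch below parses pointers per RFC 6901, including the `~1` and `~0` escapes, and refuses keys that could reach `Object.prototype`, since pointers arrive from the agent. The `setPointer` name and the policy of creating missing intermediate objects are assumptions, not documented Freesail behaviour.

```typescript
type Json = null | boolean | number | string | Json[] | { [key: string]: Json };
type JsonObject = { [key: string]: Json };

// Pointers come from the agent, so refuse keys that could reach Object.prototype.
const UNSAFE_KEYS = new Set(["__proto__", "constructor", "prototype"]);
const hasOwn = (o: object, key: string): boolean => Object.prototype.hasOwnProperty.call(o, key);

// Split an RFC 6901 pointer into reference tokens, unescaping "~1" to "/" before "~0" to "~".
function parsePointer(pointer: string): string[] {
  if (pointer === "") return []; // the empty pointer refers to the whole document
  if (!pointer.startsWith("/")) throw new Error(`invalid JSON Pointer: ${pointer}`);
  const tokens = pointer.slice(1).split("/").map((t) => t.replace(/~1/g, "/").replace(/~0/g, "~"));
  for (const t of tokens) if (UNSAFE_KEYS.has(t)) throw new Error(`unsafe key: ${t}`);
  return tokens;
}

// Replace the value at `pointer`, creating missing intermediate objects (an assumed policy).
function setPointer(model: JsonObject, pointer: string, value: Json): void {
  const tokens = parsePointer(pointer);
  const last = tokens.pop();
  if (last === undefined) throw new Error("replacing the whole model is not supported here");

  let node: Json = model;
  for (const token of tokens) {
    if (node === null || typeof node !== "object") throw new Error(`cannot resolve "${token}": parent is not a container`);
    const container = node as JsonObject; // arrays accept numeric-string keys too
    let next: Json | undefined = hasOwn(container, token) ? container[token] : undefined;
    if (next === undefined) {
      next = {};
      container[token] = next;
    }
    node = next;
  }
  if (node === null || typeof node !== "object") throw new Error(`cannot set "${last}": parent is not a container`);
  (node as JsonObject)[last] = value;
}

const model: JsonObject = { weather: { temperature: 15, condition: "cloudy" } };
setPointer(model, "/weather/temperature", 16);
setPointer(model, "/alerts/wind~1rain", "amber"); // "~1" encodes "/", so the key is "wind/rain"
console.log(JSON.stringify(model));
// {"weather":{"temperature":16,"condition":"cloudy"},"alerts":{"wind/rain":"amber"}}
```

The RFC 6901 `-` token (one past the end of an array) is not handled here; supporting array appends would need a special case for it.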

Conclusions

The code-first and contract-first paradigms represent genuinely different architectural choices, not incremental improvements on the same idea.

Choose code-first (Vercel AI SDK) when the agent needs maximum UI expressiveness, the application is tightly coupled to a single framework, and the deployment environment can absorb the security and token costs of executable output.

Choose a transport-first runtime (CopilotKit) when the primary need is copilot-style assistance within an existing application, agent-initiated human-in-the-loop is the right approval model, and the AG-UI ecosystem’s growing multi-framework support is a priority.

Choose contract-first (Freesail) when the application requires a validation gateway that catches errors before they reach the browser, a bounded component vocabulary that rules out hallucinated components, token-efficient progressive disclosure, and a deployment model where the agent sits behind the gateway with no ingress. Freesail’s implementation of A2UI v0.9, with its gateway validation, progressive disclosure, chat-as-surface architecture, and renderer-side interceptors, represents the most complete contract-first stack available today.

The broader trajectory of the ecosystem suggests convergence on the contract-first model: the question is not whether it will become the standard approach, but how quickly the tooling matures to make it as frictionless as code generation already is. Freesail’s contribution is demonstrating that it can be frictionless today.

Agent-driven UI — A Technical Analysis of the Freesail SDK was originally published in Towards AI on Medium.