How fine-tuning made my chatbot worse (and broke my RAG pipeline)

I spent weeks trying to improve my personal chatbot, Virtual Alexandra, with fine-tuning. Instead I got increased hallucination rate and broken retrieval in my RAG system.

Yes, this is a story about a failed attempt, not a successful one. My husband and I called fine tuning results “Drunk Alexandra” — incoherent answers that were initially funny, but quickly became annoying. After weeks of experiments, I reached a simple conclusion: for this particular project, a small chatbot that answers questions based on my writing and instructions, fine tuning was not a good option. It was not just unnecessary, it actively degraded the experience and didn’t justify the extra time, cost, or complexity compared to the prompt + RAG system I already had.

This post is a breakdown of what I tried, what failed, and what this experience reveals about fine-tuning in real-world systems.

What is fine tuning and why would you need it?

Let’s start with the definition. Fine tuning is a process where you take an existing AI model and further train it to make it more specialized in a certain field (like medical or legal) or to introduce style and behavioral changes.

The thing about my current implementation of Virtual Alexandra — a simple RAG system with prompt engineering on top of a base model — is that it still doesn’t really sound like me. We can all guess when a text is written by ChatGPT now — there is a certain choice of words and structure that just points to the author. I can hear ChatGPT’s voice in Virtual Alexandra’s answers, and I would love to make it less pronounced. Fine tuning promised exactly that.

In theory, it should have been on the same level of complexity as using RAG. And at first glance, it really looked simple. I produced a so-called “instruction dataset”. It usually consists of the user’s input and the expected answer from the model. The format can vary depending on the model, but here is an example for OpenAI in JSONL:

{"messages":[{"role":"user","content":"What’s your favorite kind of weather?"},{"role":"assistant","content":"Nice and sunny."}]}OpenAI’s recommendation is to have at least 50 entries. My dataset included about 1000.

The next step is to either find an online model to fine tune (OpenAI offers a few with a super intuitive UI), or run experiments locally.

But as is typical with AI, “simple” doesn’t mean “good”. So let’s dive into the different issues I had to battle with.

Refined hallucinations

The main problem I encountered after fine tuning, no matter which model I tried, was increased hallucinations. I will start with examples from gpt-4o-mini-2024–07–18 simply because it is the cheapest model OpenAI offers. With my 3 MB of training data containing ~1000 entries the fine tuning job took about 45 minutes.

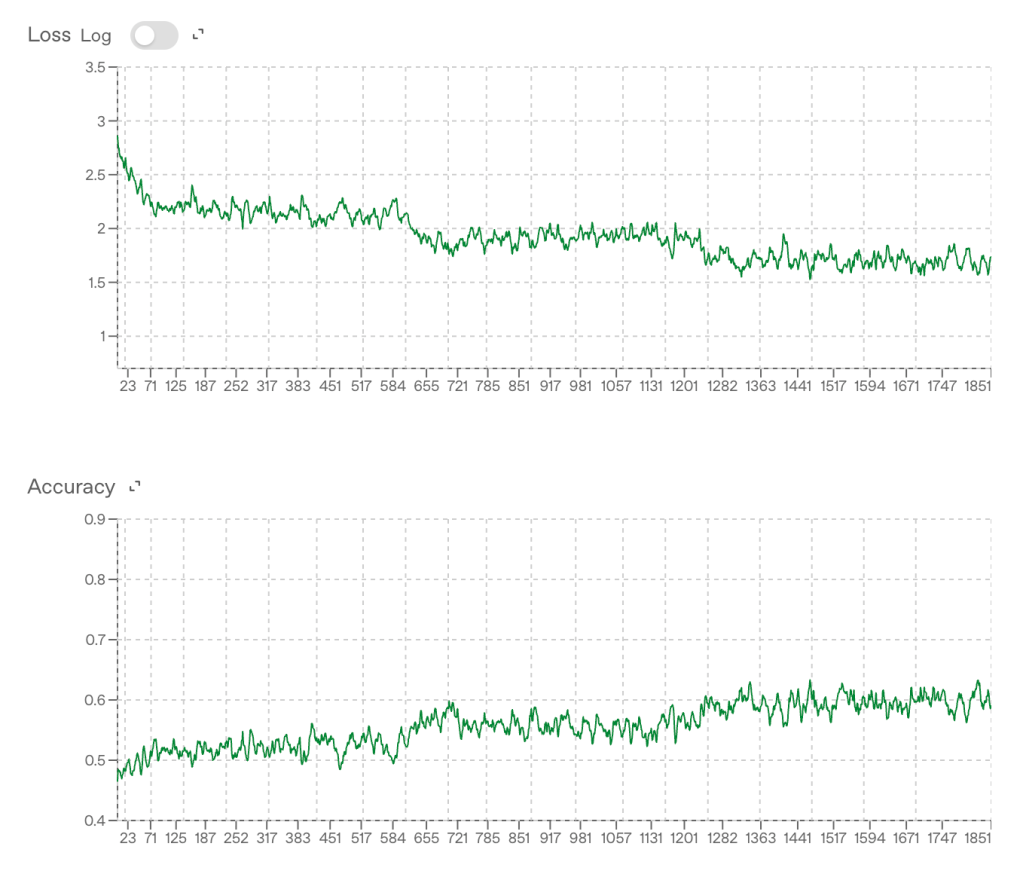

I was shown some nice graphs with reassuring training metrics — loss was decreasing, accuracy increasing — everything looked exactly the way you’d expect when things are working. However, none of these metrics tell you whether the model will actually produce good answers or start hallucinating.

Things looked less exciting when I ran my own tests. I sent identical requests in parallel to three different models — base gpt-4o-mini, fine-tuned gpt-4o-mini, and gpt-5.2 (the one I use for my “production” version of Virtual Alexandra). All of them had the same prompts, access to the same vector store, etc. The testing was pretty basic — I had a list of 22 questions and I was rating the answers based on my own judgment, because after all it was about my personal data and my own tone. This isn’t a perfectly fair comparison, because gpt-5.2 is a much stronger model, but I used it as a reference for what I could achieve without fine tuning.

It’s hard to predict when hallucinations will happen. Even the same question can produce either an acceptable result or a hallucination. But here is a very telling illustration.

You can see that gpt-4o couldn’t calculate my age, while gpt-5.2 had no problems with it. The fine-tuned model once produced the same incorrect answer as its base model, but next gave a “drunk” answer — nonsensical sentences mangled with the actual data.

Here are the overall results:

- gpt-4o-mini — could get some answers wrong, especially if they were related to the current date and calculations.

- fine tuned gpt-4o-mini — introduced a 25% hallucinations rate on top of the issues of the base model, which was unacceptable in my opinion for the system I was entrusting with my personal data.

- gpt-5.2 — I didn’t have a single wrong or hallucinated answer during my small test.

I tested in Russian as well. The hallucination rate was about the same, however the hallucinations themselves were more “severe” — the model sometimes completely broke grammar and even invented new words.

At this point, hallucinations in answers alone would already make fine tuning questionable. But this wasn’t the only problem.

Hallucinations all the way down

I wrote in the previous post that OpenAI’s RAG system can change the search queries (and I will repeat that this is an implementation choice, not an inherent LLM issue) and this may become a real problem with fine-tuned models. Because hallucinations can affect the search queries as well.

Here you can see how the fine-tuned model expanded a simple “Tell me about your kids” question. Needless to say, I have no idea who Eric Perez is or where those numbers came from. They were all hallucinated by the model.

Initially, I expected RAG to be a clean layer on top of the model. In reality, it behaves more like a tightly coupled system where changes in one part can leak into everything else.

Big models, big problems

Overall, I wasn’t surprised with the gpt-4o performance since I saw similar results from the locally hosted models that my husband was running. The reason why we both wanted to try fine tuning with OpenAI was to get access to larger and more powerful models. You can fine tune only a limited set of models with OpenAI and the latest available is gpt-4.1, but this was good enough for testing.

I created a new fine tuning job and … it got stuck in a queue… for 50 (fifty!!!) hours. I tried to restart — once after an hour, then after 2 hours — and after 24 hours I even wrote a rage post on LinkedIn. The fine tuning job itself took only about one hour and fifteen minutes, and fortunately I wasn’t charged for the time spent in a queue or the cancelled jobs.

But what about the result? All the graphs looked good, but… well… RAG stopped working. I don’t mean queries hallucinations — my Virtual Alexandra completely broke down. It still could answer questions about canonical facts included right into the prompt, like my age or when I got married, but nothing about facts in the vector store.

Let me illustrate the issue. In the screenshot, you can see the OpenAI logs. The original question was “Tell me about your LOTR project.” I chose this question since information about it was only in the vector store, not in the training data or prompt. RAG found several entries, but the system’s reply was… hallucination?… some technical details bleeding into the answer? Other times it would say it didn’t find anything, although it had plenty of search results.

I found a workaround for the RAG issue to test the model a bit more. OpenAI allows you to use the so-called “playground” where you can chat with the fine tuned model via UI. Weirdly enough, RAG access worked there. In the screenshot, you can see how it answered the same question that got a nonsensical answer via API. The exact same setup behaved differently depending on how it was accessed.

OK, maybe the RAG issue here is just a bug. But what about hallucinations? Well, the same rate of about 25%-30%, more or less.

So, with a larger model I didn’t see any improvements in the hallucination rate but I got new and unexpected issues. And with high cost of training and long wait times for infra access, it was hard to debug or iterate.

Is two better than one?

Weird RAG issues aside, my major problem remained hallucinations. So, I tried a couple of techniques that were supposed to fix it and make the answers more reliable: style rewriting and verification. Both of them involve using two models — one base, out-of-the-box, model and one fine-tuned.

Style rewriting

In this case the base model is producing an answer and the fine tuned model is rewriting it in the person’s voice. I chose gpt-5.2 as my base and tried both gpt-4o and gpt-4.1 (fine-tuned) for style rewriting. What I found is that they don’t actually rewrite anything — maybe change a word or two, but no real tone change.

There are two theories about why this happens. One is that the base model’s answers are “good enough” and the fine-tuned model simply keeps it the same. This one is not really possible to fix.

Another is that my fine-tuned models were trained on “instruction” data sets, and the task of rewriting is very different from answering questions. If that’s the case, addressing it would require producing a new data set with examples of texts and how I would rewrite them… and honestly, this is not a task I am personally interested in. So, I didn’t pursue the style rewriting option further.

Verification

Here the fine tuned model produces an answer and the base model checks if this answer is correct. It’s pretty easy to see how it can work for math problems, but let’s take a look at yet another nonsensical answer to the “How old are you?” question:

It’s a bad answer, but it technically contains correct data. And in cases like this, defining a useful verification process becomes tricky, especially without turning it into a much more complex system.

At this point, I realized that I was trying to put a fine-tuned model to work somewhere, somehow, with extra time and effort, while I already had a working system with no fine tuning at all producing acceptable results.

But did you try this other thing?..

When my husband and I started working on the Virtual Alexandra project, we knew that fine tuning can be expensive, long, and needs some careful data preparation. So we didn’t go directly to OpenAI, but did a lot of experiments locally. We tried a bunch of different versions of an open source model that supports both English and Russian: Qwen3 4B/8B/14B. At some point we tried Mistral 7B as well, but abandoned it since it doesn’t support Russian.

We played with different parameters and settings — learning rate, epochs, batch size, LoRA rank and scale. The results were always encouraging — the models changed behavior and were kind of getting better, but we were never able to break above 25% hallucinations rate (and usually it was worse than that).

Going to OpenAI was simply the last step in making sure that for this particular project fine tuning is not an option.

Learnings

For Virtual Alexandra, fine tuning was not worth it

I built a working version of Virtual Alexandra with prompt + RAG in a couple of days. After weeks of fine tuning, I ended up with worse results: more hallucinations, broken RAG behavior, and a system that was harder to debug and understand. Add to this long queues for larger models and relatively high cost of training. My advice — if you have other implementation options available, use them instead.

RAG is not a clean layer on top of the model

I expected retrieval from the vector store to be stable and independent. In reality, fine tuning of the model or even inconsistencies in the system (like accessing it via UI vs API) can change how retrieval works or whether it is usable at all.

Fine tuning is not available for the latest and greatest models

The latest OpenAI model for fine tuning is from April 2025, so it hasn’t been updated for about a year. Meanwhile Anthropic is simply not offering this functionality as a service at the moment. This is a strong hint that something is not fine with fine tuning.

Originally published at http://adandai.wordpress.com on March 31, 2026.

A Very Fine Untuning was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.