Yesterday, I wrote about the state of deep learning theory circa 2016,[1] as well as the bombshell 2016 paper by Zhang et al. that arguably signaled its demise. Today, I cover the aftermath, and the 2019 paper that devastated deep learning theory again.

As a brief summary, I argued that the rise of deep learning posed an existential challenge to the dominant theoretical paradigm of statistical learning theory, because neural networks have a lot of complexity. The response from the field was to attempt to quantify other ways in which the hypothesis class of neural networks in practice was simple, using alternative metrics of complexity. Zhang et al. 2016 showed that the standard neural network architectures trained with standard training methods could memorize large quantities of random labelled data, which showed that no such argument could explain the generalization properties of neural networks.

Today we’re going to look at the aftermath: how did the field of deep learning theory react to this paper? What were the attempts to get around this result using data-dependent generalization bounds? And why did Nagarajan and Kolter’s humbly titled Uniform convergence may be unable to explain generalization in deep learning serve as the proverbial final nail in the coffin of this line of work?

Let’s briefly return to what exactly the Zhang et al paper showed. Yesterday, I wrote:

The authors' results show that the same class of neural networks, trained with the same learning algorithm, can generalize when given true labels and memorize random ones. This shows that the hypothesis class of neural networks that are learnable with standard techniques cannot be simple in any useful sense, at least for complexity measures that depend only on properties of the hypothesis class and (data-independent) properties of the learning algorithm.

(emphasis added)

Notably, there was an important caveat to the results: what Zhang et al. showed was that for there existed some datasets where neural networks could learn to overfit. This left open the possibility of finding data-dependent generalization bounds, based on properties of particular neural networks trained on a particular datasets. For example, it remained a live possibility that a generalization bound could say, “if a trained neural network has small weight norm/is compressible/has large margin, and it was trained on sufficiently many data points, then its test error will be no more than epsilon higher than its training error.”

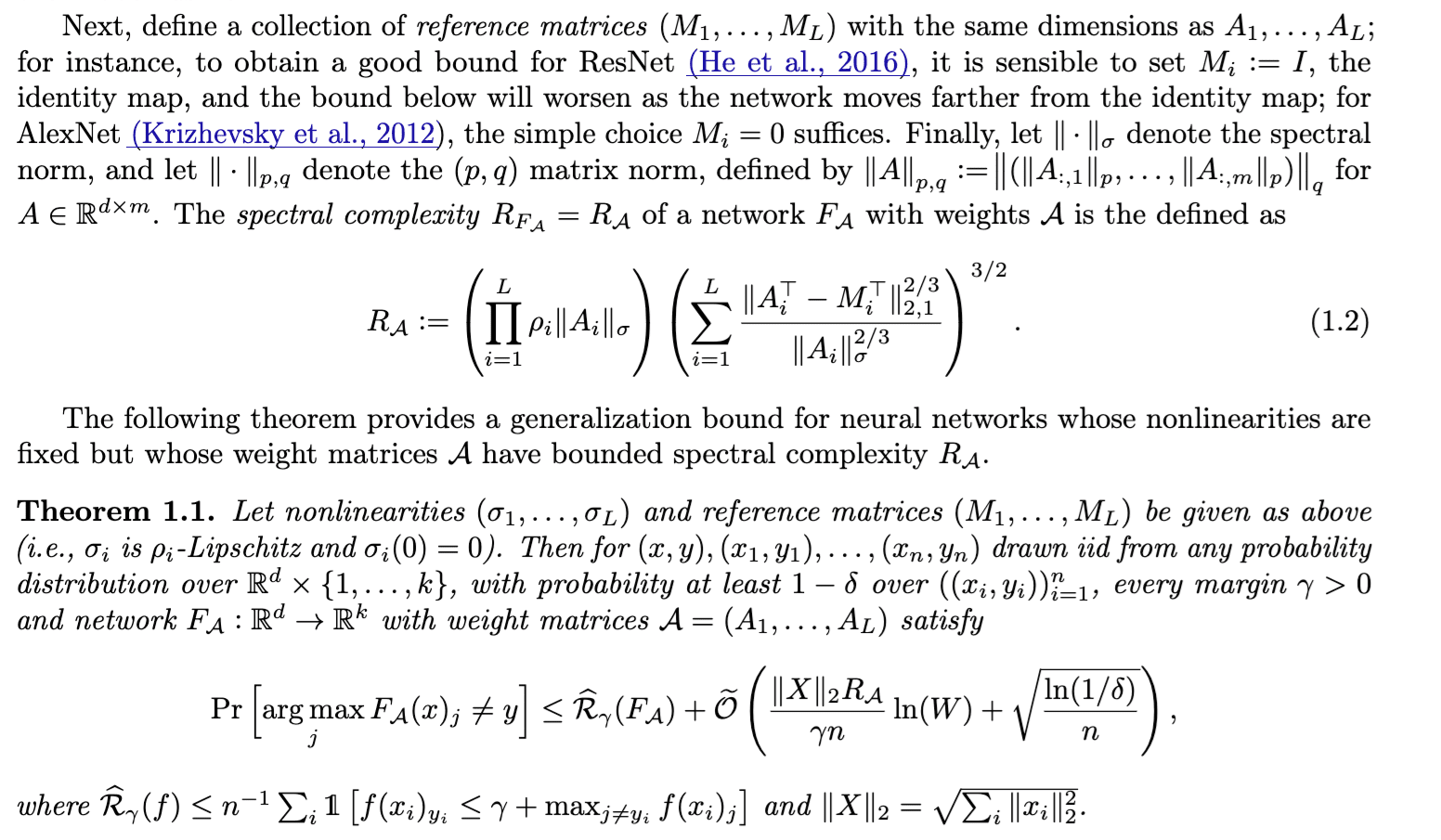

And that’s exactly some of the researchers in this field did. Bartlett, Foster, and Telgarsky’s Spectrally-normalized margin bounds for neural networks (2017) introduced a notion of complexity based on the spectral norm and reference matrices (“spectral complexity”); they then used the spectral complexity and the margin of a learned neural network classifier to bound its generalization error. Concurrently, Neyshabur, Bhojanapalli, and Srebro’s A PAC-Bayesian approach to spectrally-normalized margin bounds for neural networks (2018) also used a combination of spectral norm and distance from initialization to argue for generalization, albeit in a Bayesian framework. Even Nagarajan and Kolter gestured toward their own data-dependent generalization bound in their 2017 workshop paper Generalization in Deep Networks: The Role of Distance from Initialization, where the complexity of a neural network is related to how far its weights have changed from the initialization.

Bartlett, Foster, and Telgarsky’s spectral-complexity and margin-based bound. This was serious math! Astute readers may notice that, assuming each entry in the dataset X is bounded, the scaling factor of error with respect to data is still the classic 1/sqrt(n).

The common form of these bounds is that they bound the generalization gap in terms of some spectral-norm derived complexity measure of the trained weights, divided by the margin between training data and the decision boundary and the (square root of the) number of datapoints. Neural networks trained on real labels tended to have lower spectral norms and larger margin between their decision boundaries and training data points. Thus, when given enough data points, the generalization error would be low and they should be able to generalize!

An implicit assumption of all of these bounds is that they demonstrate uniform convergence – they apply regardless of what the true hypothesis is (more precisely, test error converges to train error uniformly across all possible true hypotheses[2]). It is at this entire genre of bound that Nagarajan and Kolter take aim in their 2019 paper.

So, what did Nagarajan and Kolter’s 2019 paper show?

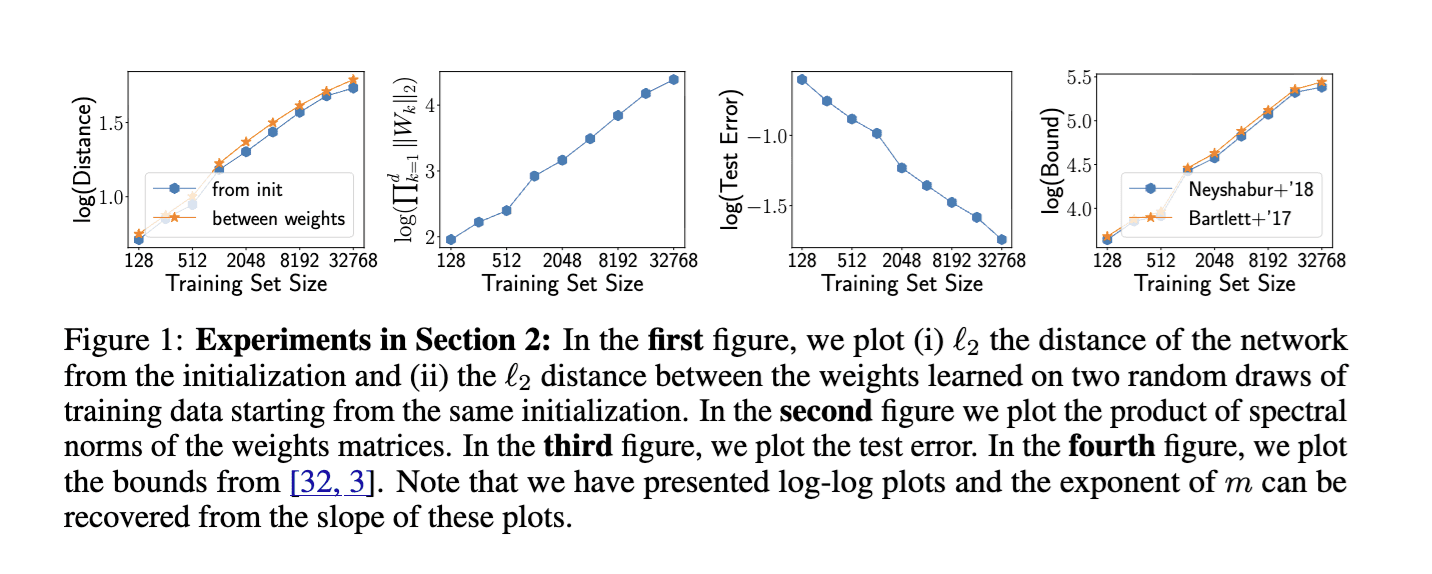

First, they show empirically that the bounds in the literature are not only vacuous, they scale in the wrong direction. Nagarajan and Kolter are able to easily train small, 5-layer neural networks on MNIST such that there’s a margin of at least 10 (in logit space) on 99% of the training data. As you might expect, in this setting, the test error of their trained networks decreases with the number of data points n, following a power law of test error scaling approximately at 1/n^0.43.

The problem is that in this setting, the complexity measures proposed by also have power law relationships. Notably, the spectral norm of the learned weight matrices scales linearly in n, and the distance to initialization scales n^0.4. The result is that, even though the actual generalization error goes down, the generalization bounds in the literature go up, scaling as n^0.68.

It doesn’t say so in the caption, but Nagarajan and Kolter’s figure 1 was a devastating rejoinder to the main approach taken in learning theory following Zhang et al. And this time, I’m certain this figure took fewer flops to produce than a single press of the integrated Claude button in the LW editor.

It’s worth emphasizing again how crushing this result is. Traditionally, in learning theory, the expectation is that the generalization error scales approximately 1/n^0.5; and similar results are observed empirically with modern scaling laws. Not only are the post-Zhang et al. spectral norm bounds provided in the literature off by two orders of magnitude, they also scale as n^0.68, in the wrong direction!

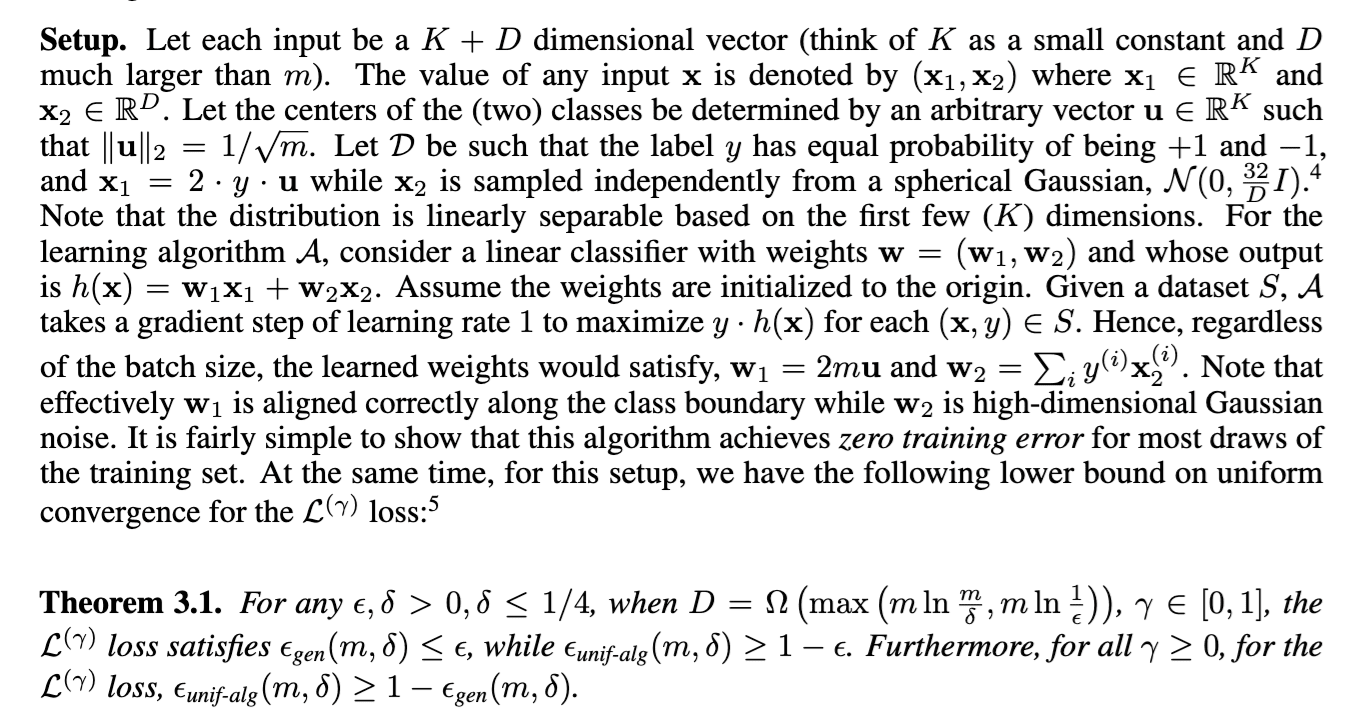

Secondly, they construct an overparameterized linear setting where (two-sided) uniform convergence bounds provably fail. The full construction is beyond the scope of this blog post, but I think the core idea is quite elegant. Readers interested in the mathematical details are encouraged to read the paper themselves.

Consider fitting a linear classifier on n training examples. The inputs to this classifier are K + D dimensional, where the first K dimensions contain a deterministic signal and the remaining D >> n dimensions are Gaussian noise, with zero mean and variance scaled to 1/D. After a single gradient step, a linear classifier will have the first K dimensions aligned with the signal, while the remaining D dimensions will be whatever the sum of n independent Gaussian noise vectors.

It’s easy to see why this linear classifier would generalize: for each new datapoint, the signal in the first K dimensions will be quite large (since it’s deterministic), but the D noise directions are sampled independently and the dot product of this noise vector the final D dimensions of our linear classifier (the sum of n independent Gaussians) will be concentrated near zero.

At the same time, consider what happens when we construct a natural “bad” dataset by reversing the D noise dimensions in our training dataset S. On this new bad dataset S’, if we pick the Gaussian noise our original classifier h will output the wrong example in every case; that is, the training error of h on S’ is 1. Since the training error is 1 but the test error can be made arbitrarily small, this leads to a vacuous generalization bound (|test error - train error| >= 1-epsilon).[3]

The actual mathematical construction from Nagarajan and Kolter, as well as the statement of the formal theorem that gradient descent provably does well while any uniform convergence bound is provably vacuous.

Third, they translate their theoretical overparameterized linear example into an empirical result on a shallow ReLU network.

The authors construct a dataset where each data point lies on one of two 1000-dimensional hyperspheres, of radius 1 or radius 1.1.[4] They then train a 2-layer ReLU network with 100k hidden units on this dataset, until 99% of the training data is classified with margin 10. They find the standard result that test error scales approximately 1/n^0.5 with dataset size.

They then construct a bad dataset S’ by taking their original training dataset S, and projecting the data points onto the opposite hypersphere, and swapping their labels. Their ReLU classifier consistently gets 0% accuracy on this projected dataset S’. This gives them the same results as in the overparameterized linear case, albeit in a case that is neither linear nor with overparameterized inputs.

The authors speculate that this is because SGD on neural network learns classifiers that are simple on the macroscopic scale, but complex on the microscopic scale – and the microscopic variance (analogous to the noise in their linear example) is what prevents uniform convergence bounds from working.

The results from Nagarajan and Kolter are a devastating blow to post-Zhang et al. deep learning theory: not only did it demonstrate that the data-dependent bounds created by the field scaled in the wrong direction, it provided an over-parameterized setting where the entire approach taken by statistical learning theory – uniform convergence bounds – provably did not work.

A more constructive way of thinking about Nagarajan and Kolter’s work is that it added further restrictions to what results could possibly explain neural network generalization. Namely, it showed that any such result needed to be to be algorithm- and data-dependent in a way data-dependent uniform convergence approach isn't. It needs to give up on bounding worst-case empirical error over hypotheses classes. And it needs to find some way to handle the complex microscopic structures produced by SGD on neural networks without losing sight of the macroscopic convergence properties.

But almost a decade later, we still don't have that theory. Here, I’ll pull an academic move, and leave that theory as an exercise to the reader.

- ^

To clarify, by “deep learning theory” I mean work “extending statistical learning theory to deep neural networks trained with SGD, in order to derive generalization bounds that would explain their behavior in practice” – that is, not any theoretical approach to deep learning, but specifically the attempts to construct a classical learning theory for deep learning.

- ^

Technically, there’s also a “over at least 1-delta fraction of possible training sets” (the “probably” part of “PAC’s probably approximately correct”, but that’s not important for now.

- ^

Astute readers may notice that this is in the opposite direction than is considered in machine learning: instead of test error being large and train error being small, we have train error being large and test error being small. This example clearly shows that the standard two-sided uniform convergence-based generalization bounds from statistical learning theory – bounds on the absolute difference between the train and test error – can be vacuous. A natural question is, can we escape this by resorting to one-sided bounds, where we only upper bound the test error using the train error? In the appendix, Nagarajan and Kolter show how many of the so-called “one-sided” PAC-Bayes generalization bounds in the literature are lower-bounded by a two-sided uniform convergence bound. Later work has tried to derive one-sided generalization bounds that escape their argument, albeit without much practical success.

- ^

This setting was first introduced by Gilmer et al. 2018’s Adversarial Spheres to study adversarial examples.

Discuss