In Only Law Can Prevent Extinction, Eliezer Yudkowsky argues that creating a strict, globally enforced regulatory framework for artificial intelligence development is the best, and perhaps only, chance for humanity to prevent extinction from misaligned AI. This post is one of the most upvoted posts on LessWrong in the past year, yet many people on this forum also seem to adhere to the idea that interacting with electoral politics is pointless or even counterproductive. For people who hold this view, I have a simple question: how do you expect ambitious AI regulation resembling the regulatory framework proposed by Yudkowsky to be implemented without the existence of a political movement that supports AI Safety?

The model for change for a lot of people on this forum seems to approximate the following: perhaps due to a shocking display of AI capabilities, or AI having a tangible effect on employment, or a gradual shift in public opinion, enough politicians will independently realize that AI poses a credible threat to the long-term future of humanity and implement effective AI regulations before it is too late to meaningfully change the trajectory of AI development. While this story might feel credible at a glance, history has demonstrated that politicians are slow to change their minds, especially if there is no clear, electorally minded pressure campaign focused on the issue.

Numerous examples of popular policies going unimplemented demonstrate this. For one, take marijuana legalization. Majorities have supported marijuana legalization since 2013, yet the drug remains illegal at the federal level to this day. America's embargo of Cuba is another clear example of popular will being derailed by legislative inertia. A plurality of Americans have supported ending the embargo against Cuba since 1999, but the embargo, which started in 1960, is still in place today, with only a temporary lull during the Obama administration. Finally, one can look to asbestos. Although there is little polling on the issue, asbestos has long been known to be carcinogenic, with thousands of Americans dying each year due to asbestos-linked illnesses. Nevertheless, despite many Americans believing that an asbestos ban is already in place, it took until 2016 for Congress to delegate the full authority to regulate asbestos to the EPA, and until 2024 for a ban on chrysotile asbestos, a type of asbestos with clear carcinogenic properties, to be enacted by the EPA. One can find other examples of legislative inertia, but the point is clear: Congress, in its current form, does not reliably pass even popular legislation into law when it relates to an issue that does not seriously threaten the reelection of its members.

"Ah, but AI is different from other political issues. The development of powerful AI threatens the lives of politicians and their families, so they will obviously create regulation once AI capabilities improve/ automate more jobs/ demonstrate scarier capabilities/ more consensus is built in AI research," you claim.

Wrong. AI risk is a highly complicated issue, and even top AI researchers have trouble agreeing on precisely how dangerous it is. Politicians on average have far, far less expertise in AI, are more likely to be lobbied by AI companies, are older, and are broadly less intelligent than top AI researchers. They are unlikely to support the massive regulatory agenda espoused by Yudkowsky anytime soon without massive electoral pressure forcing them to do so. Furthermore, politicians are more insulated from automation than almost anyone else in the country. AI systems cannot legally run for office, and even if they could, I doubt most politicians would imagine losing an election to a non-human candidate.

AI is also different from other political issues because it has a massive lobby that seeks to oppose AI regulation. Whether or not supporters of AI Safety engage with electoral politics, the AI accelerationists will do so. Individuals and organizations who do not want the US government to seriously regulate AI have already concluded that electoral politics is a fruitful area to invest in and have pledged to sink billions of dollars into races around the United States. At the very least, should those who support AI Safety try to counterbalance the political influence of the pro-AI lobby?

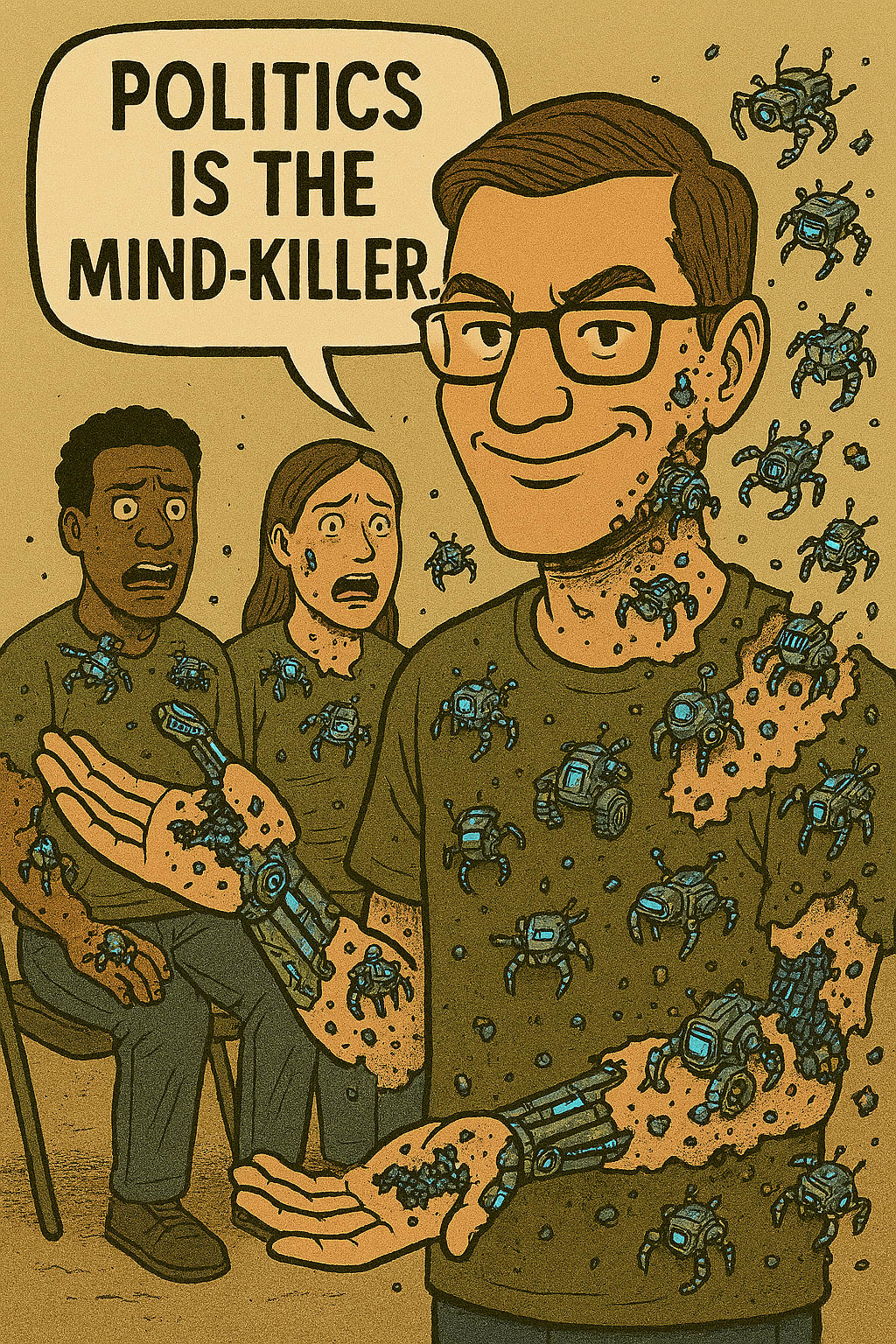

I am not going to sugarcoat things. Politics is highly complex and difficult to plan around. Even the best candidates can flame out for seemingly insignificant reasons, and even the best-run campaigns sometimes run into unexpected difficulties. Furthermore, given the highly polarized media landscape in the US and how people often intertwine their identities with their political positions, it can be difficult to have rational discussions with people about such issues. However, this moment is too important to continue to bury our heads in the sand. It is time to focus less on changing our politicians' opinions and focus more on changing our politicians: americansformoskovitz.com.

*Note: this title assumes Eliezer Yudkowsky's views on AI risk and not my own. I am not nearly as confident as he is about the likelihood that AI causes extinction. Essentially, I chose the title to emphasize that if you agree with Yudkowsky's post, you should agree with mine.
