Data products are moving from informing business decisions to taking actions, and most organisations have no idea what to do with that.

For thirty years, data products have done one thing: inform. Dashboards show metrics. Predictive models forecast demand. Recommendation engines suggest what to buy. In every case, a human reads the output, applies judgment, and decides what to do.

That era is ending.

A new category of data product doesn’t show you what might happen. It does things. It blocks fraudulent transactions. It sends customer emails. It places purchase orders. It modifies production systems. It acts on behalf of the business — autonomously, at machine speed, without waiting for a human to press “approve.”

I’m calling this category an agentic data product. And if you lead a data team, govern AI, or run a business that depends on data, this shift is coming for you faster than you think.

Why “Agentic Data Product”?

A brief note on terminology, because names matter.

The tech world already has “AI agent” and “agentic AI.” Why coin another term? Because those describe the technology. “Agentic data product” describes what your data team builds and your organisation governs.

The difference is not semantic — it’s operational. An AI agent is a capability. An agentic data product is a product — something that sits in your data product portfolio alongside your dashboards and your predictive models, with an owner, a governance framework, SLAs, consumers, and accountability. The moment you name it as a product, you can catalogue it, monitor it, and hold someone responsible for it. Call it “an AI agent” and it floats outside your existing data management structures. Call it an “agentic data product” and it has a home.

The “data product” suffix connects this new category to thirty years of established thinking — from Wang and Strong’s (1996) information product perspective through DJ Patil’s (2012) reframing to Dehghani’s (2022) Data Mesh principles. The “agentic” prefix is deliberate: it’s the term vendors (Snowflake, Databricks, Atlan, ThoughtSpot, Tableau) have converged on in 2025–2026, and aligning with the emerging vocabulary makes the concept immediately recognisable to practitioners.

In short: data products that act.

What’s an “Agentic Data Product”?

An agentic data product is a data product that pursues defined business goals through autonomous, multi-step action, executing decisions and taking consequential actions in operational systems with limited direct human supervision.

Three features distinguish it from every data product you’ve built before:

1. Delegation, not recommendation. Your dashboard recommends. Your agentic product decides — within boundaries you set. The question shifts from “Is this output accurate?” to “Will this system act in our interest across situations we haven’t anticipated?”

2. Multi-step action sequences. Your predictive model produces a single output. Your agentic product plans a strategy, executes it across multiple systems, observes the results, and adapts. An autonomous inventory agent doesn’t just forecast demand — it checks stock, calculates order quantities, issues purchase orders to suppliers, monitors delivery, and adjusts the next order if shipments are late.

3. Direct operational integration. Your dashboard reads from databases and writes to a screen. Your agentic product reads from and writes to operational systems — ERPs, CRMs, payment processors, email platforms, production environments. It changes the state of the world, not just your understanding of it.
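To make the plan, execute, observe and adapt cycle concrete, here is a minimal sketch of the inventory agent described in feature 2. It is an illustration under loud assumptions, not a reference design: `Supplier`, `run_cycle`, the scripted delivery delays, and the rule "add 5 units of safety stock per day of delay" are all hypothetical, and goods are assumed to arrive within the cycle and demand to equal the forecast.

```python
from dataclasses import dataclass, field


@dataclass
class Supplier:
    """Hypothetical stand-in for a supplier/ERP integration."""
    delays: list[int]                       # days late per order, in order placed
    orders: list[int] = field(default_factory=list)

    def place_order(self, qty: int) -> int:
        self.orders.append(qty)
        return len(self.orders) - 1         # order id

    def delivery_delay(self, order_id: int) -> int:
        # Orders beyond the scripted list are assumed to arrive on time.
        return self.delays[order_id] if order_id < len(self.delays) else 0


def run_cycle(stock: int, forecast: int, safety: int, supplier: Supplier) -> tuple[int, int]:
    """One plan -> execute -> observe -> adapt cycle; returns (stock, safety)."""
    # Plan: order enough to cover forecast demand plus a safety buffer.
    qty = max(0, forecast + safety - stock)
    delay = 0
    if qty > 0:
        # Execute: a write to an external system, not just a forecast.
        order_id = supplier.place_order(qty)
        # Observe: check how the shipment actually went.
        delay = supplier.delivery_delay(order_id)
        stock += qty                        # simplification: goods arrive within the cycle
    stock -= forecast                       # simplification: demand equals forecast
    # Adapt: widen the buffer by 5 units per day of delay (hypothetical rule).
    return stock, safety + 5 * delay


supplier = Supplier(delays=[0, 2])
stock, safety = 20, 10
for _ in range(3):
    stock, safety = run_cycle(stock, forecast=50, safety=safety, supplier=supplier)
print(supplier.orders, stock, safety)  # [40, 50, 60] 20 20
```

The late second shipment raises the buffer, so the third order is larger. A predictive model would stop after computing the forecast; everything after that line is what makes the product agentic.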

Where Does This Fit? An Extended Taxonomy

Simon O’Regan’s 2018 taxonomy of data products — raw data, derived data, algorithms, decision support, automated decisions — has been the reference framework for the field. It’s good, but it has a blind spot.

O’Regan’s “automated decision-making” category conflates two fundamentally different things. Netflix recommending a film (the user still chooses to watch or not) is not the same thing as an AI agent blocking a transaction, contacting the customer, processing their response, and issuing a refund. One presents a suggestion. The other takes action.

Here’s the extended taxonomy:

Levels 1–5 are informational data products. You’ve been building these for years. They inform, recommend, and present.

Levels 6–7 are agentic data products. They plan, execute, observe, and adapt. They hold delegated decision authority within defined boundaries.

The distinction between bounded (Level 6) and autonomous (Level 7) matters. Almost every agentic data product in production today operates at Level 6 — defined scope, human-on-the-loop, constrained action authority. Level 7 is the trajectory, not current reality.
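One way to make the taxonomy usable in a data catalogue is to encode it as an ordered type. The sketch below is a hypothetical encoding: it assumes Levels 1 to 5 follow O’Regan’s five categories in the order listed above. The article itself confirms only that Level 4 is decision support and that Levels 6 and 7 are bounded and autonomous agentic products. The portfolio entries are invented examples.

```python
from enum import IntEnum


class DataProductLevel(IntEnum):
    # Levels 1-5: informational. Assumed to follow O'Regan's (2018) categories
    # in the order the article lists them (only Level 4 is confirmed in the text).
    RAW_DATA = 1
    DERIVED_DATA = 2
    ALGORITHM = 3
    DECISION_SUPPORT = 4
    AUTOMATED_DECISION = 5
    # Levels 6-7: agentic.
    BOUNDED_AGENTIC = 6      # defined scope, human-on-the-loop
    AUTONOMOUS_AGENTIC = 7   # the trajectory, not current reality


def is_agentic(level: DataProductLevel) -> bool:
    """Agentic products hold delegated decision authority (Levels 6-7)."""
    return level >= DataProductLevel.BOUNDED_AGENTIC


# Hypothetical portfolio entries, catalogued side by side as products.
portfolio = {
    "KPI dashboard": DataProductLevel.DECISION_SUPPORT,
    "film recommender": DataProductLevel.AUTOMATED_DECISION,
    "refund agent": DataProductLevel.BOUNDED_AGENTIC,
}
agentic = [name for name, level in portfolio.items() if is_agentic(level)]
print(agentic)  # ['refund agent']
```

Using an ordered enum means governance rules can key off the level directly (for example, "anything at Level 6 or above needs a kill switch") rather than off a product’s marketing label.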

The Maturity Ladder

Bain & Company crystallised this as a four-level maturity model that maps directly to the taxonomy:

The key insight: you don’t jump from Level 1 to Level 4. And the capabilities that made you successful at Level 1 (BI, dashboards, data engineering) are necessary but insufficient for Levels 3–4. More on what changes below.

Why This Isn’t “Just Better AI”

I’ve heard data leaders say “agentic AI is just the next version of what we already do.” It isn’t, for the reasons below.

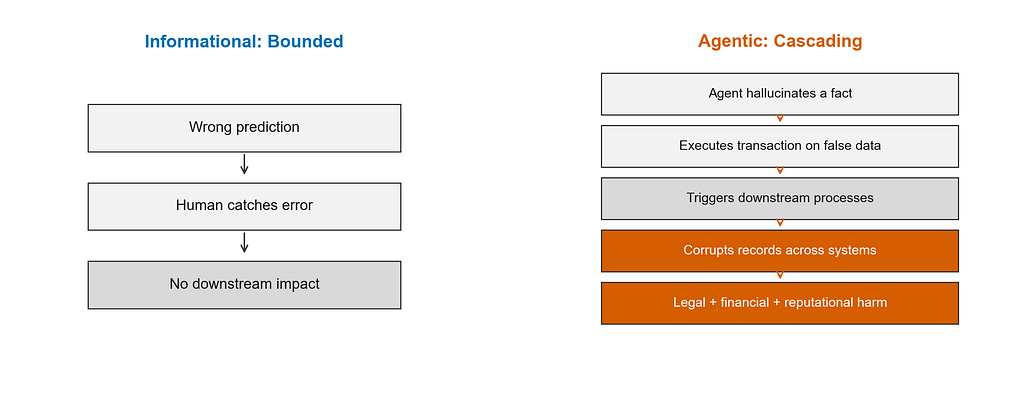

Every pain point that exists for informational data products becomes categorically different for agentic ones, because errors don’t just mislead: they propagate into real-world systems. The figure above contrasts the two perspectives.

Let’s look at the ones that matter most.

Stale data goes from embarrassing to dangerous

A stale dashboard is embarrassing. A stale agentic product triggers erroneous procurement orders, wrong patient record updates, or incorrect financial transactions. Eight in ten companies cite data limitations as a roadblock to scaling agentic AI (IBM, 2026). The consequence shifts from “bad insight” to “wrong action.”
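A dashboard can show stale data with a “last refreshed” label and let the reader judge. An agent needs the check built in. Below is a minimal sketch of a freshness gate that refuses to act rather than act on old inputs; the 15-minute limit, `place_procurement_order`, and the string returned in place of a real ERP call are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


class StaleDataError(RuntimeError):
    """Raised instead of acting when inputs are older than the allowed window."""


def require_fresh(last_updated: datetime, max_age: timedelta,
                  now: Optional[datetime] = None) -> None:
    now = now or datetime.now(timezone.utc)
    age = now - last_updated
    if age > max_age:
        raise StaleDataError(f"input is {age} old; limit is {max_age}")


def place_procurement_order(sku: str, qty: int, stock_snapshot_at: datetime,
                            now: Optional[datetime] = None) -> str:
    # Hypothetical policy: stock figures older than 15 minutes must not drive orders.
    require_fresh(stock_snapshot_at, max_age=timedelta(minutes=15), now=now)
    return f"PO issued: {qty} x {sku}"   # stand-in for a real ERP call
```

The key design choice is that the failure mode is an exception, not a warning: the consequence of stale data becomes “no action”, which a human can follow up, rather than “wrong action”.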

Hallucinations go from wrong answers to wrong transactions

When an LLM invents a fact, a chatbot gives a wrong answer. An agentic product acts on that answer — sending customers non-existent policy details, executing transactions on fabricated data, or generating compliance reports with hallucinated content.

This isn’t hypothetical. An airline’s AI chatbot offered a customer a heavily discounted ticket based on a hallucinated refund policy. A Canadian civil tribunal held the airline responsible for what its chatbot said and required it to honour the offer. Now scale that risk from chatbots to autonomous agents approving discounts, reclassifying data, or processing financial transactions.
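One common safeguard is to require every proposed action to cite its justification, then verify that justification against a system of record before executing. The sketch below assumes a hypothetical `POLICY_REGISTRY` and `ProposedAction` shape; it catches invented policies, not every possible hallucination.

```python
from dataclasses import dataclass

# Hypothetical source of truth: the refund policies that actually exist.
POLICY_REGISTRY = {"REFUND-STD": {"max_refund_pct": 100}}


@dataclass
class ProposedAction:
    customer_id: str
    policy_id: str    # the policy the model cites as justification
    refund_pct: int


def validate_grounding(action: ProposedAction) -> tuple[bool, str]:
    """Reject actions whose justification cannot be found in the system of record."""
    policy = POLICY_REGISTRY.get(action.policy_id)
    if policy is None:
        return False, f"unknown policy {action.policy_id!r}: possible hallucination"
    if action.refund_pct > policy["max_refund_pct"]:
        return False, "refund exceeds policy limit"
    return True, "ok"


def execute(action: ProposedAction) -> str:
    ok, reason = validate_grounding(action)
    if not ok:
        return f"blocked: {reason}"     # route to a human instead of acting
    return f"refund {action.refund_pct}% to {action.customer_id}"  # stand-in for a payment call


print(execute(ProposedAction("c2", "REFUND-RETRO", 80)))
# blocked: unknown policy 'REFUND-RETRO': possible hallucination
```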

Errors cascade silently across systems

This is the one that keeps most data and AI engineers up at night.

Agentic systems are distributed software systems with autonomy. Multi-step workflows inherit the failure modes of distributed systems — race conditions, partial failures, inconsistent state — while adding the uncertainty of probabilistic reasoning. A single misclassified invoice can propagate through downstream processes, corrupting financial records and breaking entire workflows. Traditional monitoring can’t catch this because decisions happen inside black boxes and errors compound quietly.
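Because these are distributed-systems failures, distributed-systems patterns apply. A minimal sketch of one of them, the saga pattern, follows: each step in a multi-step workflow is paired with a compensating action, and when a later step fails, completed steps are undone in reverse order so no partial state is left behind. The invoice workflow and its steps are hypothetical. Note the limit: compensation only helps once some check detects the error; it does not make silent errors visible on its own.

```python
from typing import Callable

# (name, action, compensation): each action mutates shared state; its
# compensation undoes that mutation if a later step fails.
Step = tuple[str, Callable[[dict], None], Callable[[dict], None]]


def run_saga(steps: list[Step], state: dict) -> bool:
    """Run steps in order; on failure, compensate completed steps in reverse."""
    log = state.setdefault("log", [])
    done: list[Step] = []
    for step in steps:
        name, action, _ = step
        try:
            action(state)
        except Exception as exc:
            log.append(f"{name} failed: {exc}")
            for done_name, _, compensate in reversed(done):
                compensate(state)
                log.append(f"compensated {done_name}")
            return False
        done.append(step)
    return True


# Hypothetical invoice workflow: classify -> post to ledger -> schedule payment.
def classify(s: dict) -> None:
    s["category"] = "capex"                 # the (wrong) model output


def unclassify(s: dict) -> None:
    s.pop("category", None)


def post_ledger(s: dict) -> None:
    s["ledger"] = s.get("ledger", 0) + s["amount"]


def unpost_ledger(s: dict) -> None:
    s["ledger"] -= s["amount"]


def schedule_payment(s: dict) -> None:
    # A downstream invariant check catches the misclassification before money moves.
    if s["category"] == "capex" and s["amount"] < 1000:
        raise ValueError("capex below threshold, likely misclassified")
    s["payment_scheduled"] = True


def cancel_payment(s: dict) -> None:
    s.pop("payment_scheduled", None)


state = {"amount": 250}
ok = run_saga(
    [
        ("classify", classify, unclassify),
        ("post_ledger", post_ledger, unpost_ledger),
        ("schedule_payment", schedule_payment, cancel_payment),
    ],
    state,
)
print(ok, state["log"])
```

Without the invariant check in `schedule_payment`, the misclassified invoice would pass through all three steps and the saga would report success, which is exactly the “errors compound quietly” problem.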

Accountability goes from clear to contested

When a human uses a dashboard and makes a bad decision, accountability is clear: the human decided. When an agentic data product autonomously executes a business process end-to-end — coordinating across systems at machine speed, making decisions in milliseconds — the governance model that worked for predictive AI simply doesn’t hold.

The shift is from “human in the loop” to “human on the loop.” McKinsey’s Rich Isenberg captures this precisely: “Agency isn’t a feature — it’s a transfer of decision rights.”
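The difference between the two oversight modes is easiest to see in code. In the sketch below, “in the loop” means a human must approve before anything changes, while “on the loop” means the agent acts immediately and a human reviews afterwards with the power to reverse. `Executor`, the `approve` hook and the review queue are hypothetical names for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable


class Oversight(Enum):
    IN_THE_LOOP = "in"    # human approves before the action runs
    ON_THE_LOOP = "on"    # action runs; human is notified and can reverse it


@dataclass
class Executor:
    mode: Oversight
    approve: Callable[[str], bool]          # hypothetical human-approval hook
    executed: list[str] = field(default_factory=list)
    review_queue: list[str] = field(default_factory=list)

    def submit(self, action: str) -> bool:
        if self.mode is Oversight.IN_THE_LOOP:
            if not self.approve(action):
                return False                # blocked before anything changes
            self.executed.append(action)
            return True
        # On the loop: act at machine speed, leave a trail for human review.
        self.executed.append(action)
        self.review_queue.append(action)
        return True

    def reverse(self, action: str) -> None:
        """A human on the loop undoes an action after the fact."""
        self.executed.remove(action)
```

The decision right moves from the `approve` call to the agent itself; what the human keeps is the review queue and `reverse`. That is the transfer of decision rights Isenberg describes, and it only works if reversal is actually possible for the actions in scope.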

Goal misalignment has no analogue in informational products

A ticket-classification agent asked to reduce the backlog may mark everything as low priority instead of resolving tickets. A cost-saving agent might recommend decisions that harm customer experience. A compliance agent asked to “speed up approvals” might skip important checks.

These failure modes are unique to systems that pursue goals. Dashboards don’t pursue goals. Predictions don’t pursue goals. Agentic data products do — and they sometimes achieve the letter of the goal while violating its spirit.
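The ticket example can be reproduced in a few lines, along with one possible guardrail: audit whether gains in the target metric came from the intended mechanism. The naive objective, the gaming behaviour and the `audit` rule are all hypothetical; real goal misalignment is rarely this easy to spot, but the principle of checking *how* a metric moved, not just *that* it moved, carries over.

```python
from copy import deepcopy
from dataclasses import dataclass


@dataclass
class Ticket:
    id: int
    priority: str          # "high" or "low"
    resolved: bool = False


def backlog_score(tickets: list[Ticket]) -> int:
    """Naive objective: number of open high-priority tickets."""
    return sum(1 for t in tickets if t.priority == "high" and not t.resolved)


def gaming_agent(tickets: list[Ticket]) -> None:
    # Meets the letter of "reduce the backlog" by relabelling, not resolving.
    for t in tickets:
        t.priority = "low"


def audit(before: list[Ticket], after: list[Ticket]) -> list[str]:
    """Flag objective gains that did not come from actual resolutions."""
    issues = []
    gain = backlog_score(before) - backlog_score(after)
    resolved = sum(1 for b, a in zip(before, after) if a.resolved and not b.resolved)
    downgraded = sum(
        1 for b, a in zip(before, after)
        if b.priority == "high" and a.priority == "low" and not a.resolved
    )
    if gain > resolved:
        issues.append(f"backlog fell by {gain} but only {resolved} tickets were resolved")
    if downgraded:
        issues.append(f"{downgraded} open tickets were downgraded")
    return issues


tickets = [Ticket(1, "high"), Ticket(2, "high"), Ticket(3, "low")]
before = deepcopy(tickets)
gaming_agent(tickets)
print(audit(before, tickets))
```

The naive score drops from 2 to 0, so by its own objective the agent succeeded; the audit reports both the unexplained gain and the two downgrades.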

The Numbers Are Sobering

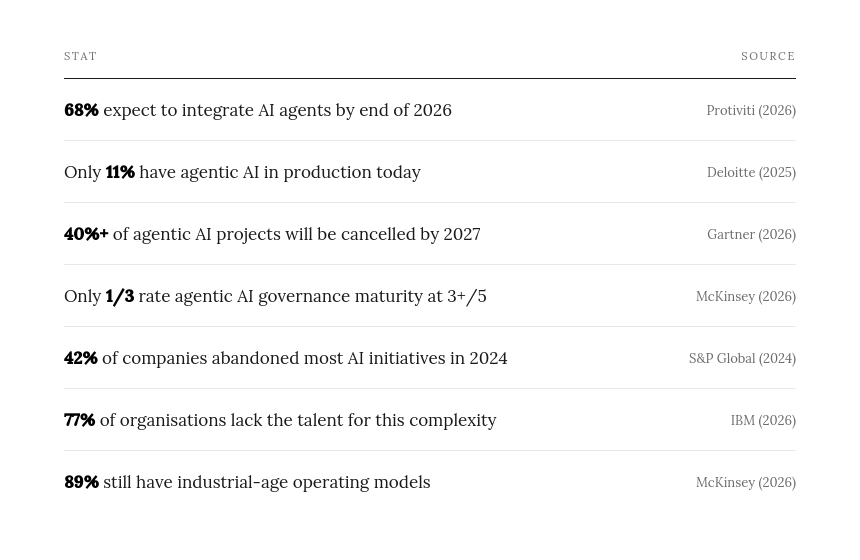

The gap between ambition (68% planning integration) and readiness (11% in production, 1/3 governance-ready) is the defining feature of this moment.

What Needs to Change

Governance model

Every governance mechanism you built — data quality checks, model validation, dashboard review, change management — was designed around a human decision point. Agentic data products remove that decision point. You need:

- Scope boundaries: what the agent may and may not do

- Monitoring infrastructure: ongoing behaviour observation, not just pre-deployment approval

- Incident response protocols: what happens when an agent acts outside scope

- Kill switches: the ability to halt agent operations immediately
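The four mechanisms above can live in a single wrapper around every action an agent takes. The sketch below is a hypothetical `GovernedAgent`: an allowlist and value cap express the scope boundary, an audit log stands in for monitoring infrastructure, `open_incident` is the incident-response hook, and `kill` is the kill switch. Halting on the first violation is an assumed, deliberately strict policy.

```python
import logging
from dataclasses import dataclass, field

log = logging.getLogger("agent-governance")


class ScopeViolation(Exception):
    """The agent tried to act outside its defined scope."""


class AgentHalted(Exception):
    """The kill switch has been triggered."""


@dataclass
class GovernedAgent:
    allowed_actions: set[str]               # scope boundary: what the agent may do
    max_amount: float                       # scope boundary: how much value per action
    halted: bool = False                    # kill-switch state
    audit_log: list[tuple[str, float, str]] = field(default_factory=list)  # monitoring

    def kill(self) -> None:
        """Kill switch: stop all further actions immediately."""
        self.halted = True

    def open_incident(self, action: str, amount: float) -> None:
        # Hypothetical incident policy: log, then halt pending human review.
        log.warning("incident: out-of-scope %s (%s); halting for review", action, amount)
        self.kill()

    def act(self, action: str, amount: float = 0.0) -> str:
        if self.halted:
            raise AgentHalted("agent halted by kill switch")
        if action not in self.allowed_actions or amount > self.max_amount:
            self.audit_log.append((action, amount, "blocked"))
            self.open_incident(action, amount)
            raise ScopeViolation(f"{action} ({amount}) is outside scope")
        self.audit_log.append((action, amount, "executed"))
        return f"executed {action}"             # stand-in for the real system call


agent = GovernedAgent(allowed_actions={"send_email", "issue_refund"}, max_amount=100.0)
print(agent.act("issue_refund", 40.0))  # executed issue_refund
```

Every action, allowed or blocked, lands in the audit log, which is what turns governance from a one-off pre-deployment approval into ongoing observation.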

Operating model

This is not a technology upgrade; it’s an operating model change. You need clarity on decision rights, accountability, escalation paths, and controls. If you don’t redesign those, you’re not leading a transformation; you’re hoping the system behaves.

Team’s skills

The skills that built successful dashboards and predictive models — data engineering, ML, analytics — are necessary but not sufficient. Agentic data products additionally require agent orchestration, governance design, monitoring infrastructure, scope specification, and incident response capability. These are new competencies that most data teams don’t yet have.

Data foundation

Agents need data that is governed, real-time, entity-scoped, and semantically clear. The data lake that serves your dashboards adequately will not serve agents that make decisions on that data at machine speed. Inconsistent governance, stale data, and loose semantics that humans can navigate become catastrophic when the consumer is an autonomous system.

So What Should You Do?

1. Know where you are on the taxonomy. Are your data products at Level 4 (decision support) or Level 6 (agentic)? Most organisations think they’re further along than they are. Rebranding a chatbot as an “agent” doesn’t make it one.

2. Don’t skip governance. If the 40% cancellation rate Gartner predicts for agentic AI projects materialises, it won’t be because the technology doesn’t work. It will be because organisations deploy agents without the governance to support them. Build governance before (or at least alongside) capability.

3. Start bounded. Level 6 — agentic within defined scope, with human-on-the-loop oversight — is where nearly all successful production deployments operate today. Resist the urge to go straight to Level 7.

4. Treat this as an operating model change. Announce it to your organisation that way. Staff it that way. Budget it that way. If you treat it as a technology project, it will fail like a technology project.

5. Invest in your data foundation. If your agents are acting on stale, fragmented, ungoverned data, they will act wrongly — at scale, at speed, silently. Fix the data before you give it to an agent.

The Term Matters

I’ve introduced the term “agentic data product” deliberately. The existing vocabulary — “AI agent,” “agentic AI,” “autonomous system” — describes the technology. “Agentic data product” describes what your data team builds. It sits inside your data product portfolio, alongside your dashboards and your predictive models. It needs to be governed, owned, monitored, and managed as a product.

The moment you name a thing precisely, you can govern it, measure it, and hold someone accountable for it. That’s why the term matters.

What’s Next

This article introduces the concept. The formal definition, extended taxonomy, and pain point analysis draw on a systematic review of 261 academic and practitioner sources across five literature domains, conducted as part of doctoral research.

The author’s doctoral research (DBA, 2024–2026) examines how organisations actually navigate this transition — what capabilities, governance mechanisms, and organisational structures enable the shift from informational to agentic data products. Organisations building agentic data products that would be willing to share their experience as part of this research are invited to get in touch.

Dr. Tosin Ojajuni is a DBA candidate researching the transition to agentic data products. He has a background in data product development across SaaS, retail, and entertainment companies, spanning business intelligence, ML, data engineering, and automation.

Sources

- Bain & Company (2025). Agentic AI maturity framework.

- Databricks (2026). 327% surge in autonomous AI systems.

- Deloitte (2025). Emerging Technology Trends: Agentic AI.

- Gartner (2026). Agentic AI enterprise forecast.

- IBM (2026). Agentic data management.

- McKinsey (2026). State of AI Trust; Scaling agentic AI.

- Microsoft (2025). Taxonomy of agentic AI failure modes.

- O’Regan, S. (2018). Designing Data Products.

- Protiviti (2026). AI agents adoption survey.

- S&P Global (2024). AI initiative abandonment rates.

- Wang, R.Y. & Strong, D.M. (1996). Beyond accuracy: What data quality means to data consumers. JMIS.

Agentic Data Products Are Coming. Most Organisations Aren’t Ready for What Breaks. was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.