Blackwell shipped at the start of 2025. By GTC 2026, NVIDIA announced Vera Rubin as the next architecture, shipping to customers in H2 2026.

Vera Rubin — named after the astronomer who first mapped dark matter — is not a minor revision. The per-GPU specs are substantially higher, the CPU-to-GPU interconnect doubled again, and there’s one genuinely new product with no Blackwell equivalent: the Rubin CPX, a separate GPU designed specifically for long-context inference. This article covers what changed at the hardware level and why it matters.

The Rubin GPU: 3nm and HBM4

The Rubin GPU keeps the dual-die design introduced in Blackwell — two reticle-sized chiplets connected at 10 TB/s — but moves to TSMC’s 3nm process instead of 4NP, pushing the transistor count from 208 billion to 336 billion (1.6x). That density translates into 50 PFLOPS of FP4 inference per GPU, up from 18 PFLOPS on B200 — a 2.8x improvement in raw compute throughput.

Memory gets a more significant upgrade. HBM4 replaces HBM3e, with each Rubin GPU carrying 288 GB at 22 TB/s bandwidth, compared to 192 GB at 8 TB/s on B200. For LLM inference — where the bottleneck is typically moving model weights from memory to compute, not the compute itself — the 2.75x bandwidth jump matters more than the FLOPS number.

A single Rubin GPU delivers 50 PFLOPS of FP4 inference — 2.8x more than B200, with 2.75x more memory bandwidth to keep the Tensor Cores fed.

Rubin vs B200:

• Transistors: 208B → 336B (1.6x)

• Process: TSMC 4NP → TSMC 3nm

• HBM capacity: 192 GB HBM3e → 288 GB HBM4 (1.5x)

• Memory bandwidth: 8 TB/s → 22 TB/s (2.75x)

• FP4 inference PFLOPS: 18 → 50 (2.8x)

• NVLink bandwidth: 1.8 TB/s → 3.6 TB/s (2x)

Vera CPU and Doubled Interconnects

Blackwell introduced Grace, NVIDIA’s first Arm-based server CPU, connected to B200 GPUs via NVLink-C2C at 900 GB/s. That was a step change from the PCIe connections used in Hopper systems, which topped out at ~128 GB/s and required explicit memory copies between CPU and GPU. Vera Rubin extends both numbers.

The Vera CPU has 88 Olympus cores on Arm v9.2-A, up from Grace’s 72 Neoverse V2. NVLink-C2C doubles to 1.8 TB/s, meaning the CPU can read and write GPU memory twice as fast as in a Blackwell system. For inference deployments where CPU-side work — orchestration, KV cache management, sampling — runs in tight loops with GPU compute, this keeps the GPU from waiting on the host.

NVLink 6 doubles the GPU-to-GPU bandwidth too, from 1.8 TB/s per GPU (NVLink 5 on Blackwell) to 3.6 TB/s per GPU. Collective operations — all-reduce, all-gather — used in both training and multi-GPU inference benefit directly from this without any software changes. Existing CUDA code runs without recompilation, but fully utilizing NVLink 6 in collective operations requires an updated NCCL version with Rubin support, following the same pattern as when GB200 required NCCL 2.25.2.

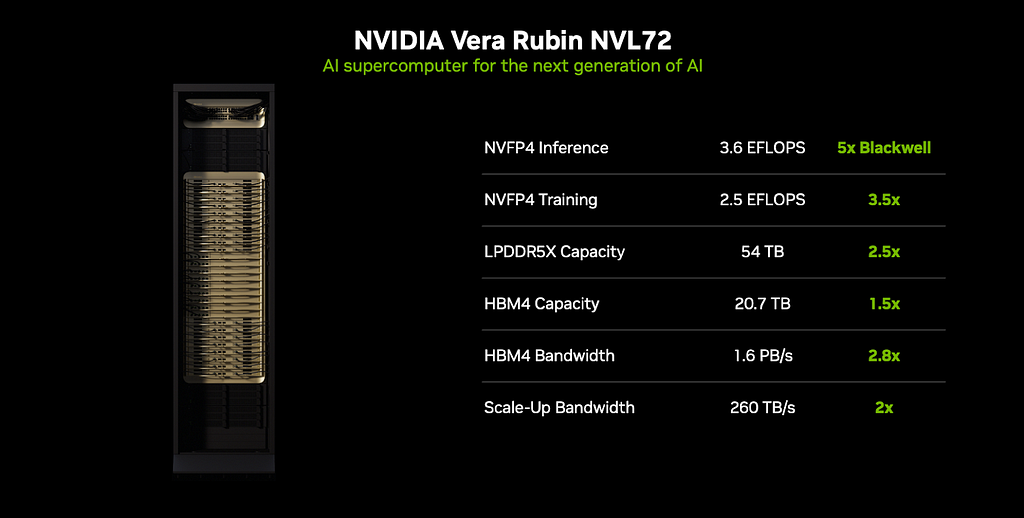

NVL72: Same Rack Form Factor, Very Different Scale

The Vera Rubin NVL72 follows the same physical blueprint as GB200 NVL72–72 GPUs, 36 CPUs, 9 NVSwitch trays, liquid-cooled. At rack scale, the per-GPU improvements compound into much larger numbers.

NVL72 — Blackwell vs Vera Rubin:

• HBM memory: 13.5 TB → 20.7 TB

• HBM bandwidth: 575 TB/s → 1.6 PB/s

• Scale-up fabric: 130 TB/s → 260 TB/s

• FP4 inference: ~0.65 exaFLOPS → 3.6 exaFLOPS (~5.6x)

• FP4 training: ~0.9 exaFLOPS → 2.5 exaFLOPS

(Scale reference: 1 exaFLOPS = 1,000 petaFLOPS = 1,000,000 teraFLOPS.

So 3.6 exaFLOPS in one rack is roughly equivalent to 200 individual B200 GPUs worth of FP4 compute.)

The rack-level inference gain comes from three compounding improvements: 2.8x per-GPU FP4 compute, 2.75x memory bandwidth (which reduces stalls waiting for weights to arrive from HBM), and 2x NVLink bandwidth (which speeds collective operations across the 72-GPU fabric). They don’t add — they multiply.

One detail worth noting from the hardware side: NVL72 rack assembly time dropped from 100 minutes (Blackwell) to 6 minutes (Vera Rubin), due to a modular mechanical redesign. At datacenter scale, faster assembly means faster hardware swap-out when components fail — particularly important for multi-week training runs where a single failed rack aborts the job.

Rack assembly: 100 minutes for Blackwell → 6 minutes for Vera Rubin. At datacenter scale, faster swap-out directly reduces the blast radius of hardware failures.

From Two Chips to Six

Blackwell was effectively a two-chip platform — B200 GPU and Grace CPU. The Vera Rubin platform ships with six:

• Rubin GPU — core training and dense inference compute

• Vera CPU — 88-core Arm v9.2 host processor

• Rubin CPX — long-context and KV cache inference offload

• BlueField-4 DPU — networking, storage, and infrastructure offload

• Spectrum-6 NIC — Ethernet switching

• Quantum-CX9 InfiniBand — 1.6 Tb/s photonics-based scale-out fabric

NVIDIA is increasingly building the full datacenter stack — not just the GPU. A Vera Rubin deployment today means NVIDIA silicon from the GPU down to the NIC, which is a significant shift from Blackwell, where customers mixed NVIDIA GPUs with third-party networking and x86 CPUs from Intel or AMD.

Frequently Asked Questions

Q: When does Vera Rubin actually ship?

The Vera Rubin NVL72 is on track for H2 2026, with CoreWeave among the confirmed early deployment partners. Rubin CPX and the NVL144 configuration follow by end of 2026.

Q: What is GB300 ?

GB300 is an updated Blackwell variant that shipped in early 2025. It uses the same B200 GPU die but with improved HBM3e configuration, offering higher memory bandwidth than the original GB200. When NVIDIA claims “10x lower cost per token” for Vera Rubin, the baseline is GB300 NVL72, not the original GB200 NVL72. Per-GPU compute comparisons (2.8x FP4) are B200 vs Rubin directly.

Q: Do existing CUDA workloads need to be rewritten for NVLink 6?

No. Existing CUDA workloads run without modification. Higher NVLink 6 bandwidth becomes available automatically for collective operations through NCCL. No code changes required.

Q: Does Rubin also ship in a standard server form factor?

Yes — just as B200 shipped as HGX B200 for OEM server vendors (Dell, HPE, Supermicro) connecting to x86 CPUs over PCIe, Rubin will ship in a comparable 8-GPU baseboard form factor. The NVL72 is the Vera CPU-based rack-scale product — a separate product line, not the only deployment option.

Q: What comes after Vera Rubin?

Rubin Ultra is on the roadmap for 2027, with Feynman following after that. NVIDIA is maintaining roughly an annual cadence on new architecture generations.

Bottom Line

Vera Rubin continues NVIDIA’s per-generation pattern: more transistors, faster memory, doubled NVLink bandwidth in the same rack form factor.

Per-GPU, the numbers are straightforward — 2.8x more FP4 compute, 2.75x more memory bandwidth, 2x more interconnect speed. At rack scale those compound into a 5–6x inference throughput improvement over GB200 NVL72. The structural addition — Rubin CPX for long-context workloads — signals where inference is headed: larger context windows, specialized memory hierarchies, and a platform that now covers the full datacenter stack.

Three things to take away:

• HBM4 at 22 TB/s addresses the actual bottleneck in LLM inference — moving weights from memory to compute — with nearly 3x the bandwidth of Blackwell • Rubin CPX is a new product category — a GDDR7-based GPU purpose-built for long-context workloads has no Blackwell equivalent and changes how you architect an inference cluster • Six chips per rack means NVIDIA is selling AI infrastructure, not just GPUs — the competitive moat is widening beyond just compute performance

References

NVIDIA Official

- NVIDIA Vera Rubin POD Technical Blog — seven chips, five rack-scale systems overview

- NVIDIA Rubin CPX Announcement — official specs and use cases

- NVIDIA HGX B200 — standard server form factor reference

Hardware Analysis

- ServeTheHome — Rubin CES 2026 Launch — verified rack specs including assembly time and NVL72 numbers

- ServeTheHome — NVL72 at GTC 2026 — physical rack photos and BlueField-4 DPU integration details

- Tom’s Hardware — Rubin roadmap — Rubin Ultra and Feynman timeline

Specifications based on NVIDIA GTC 2026 announcements. Vera Rubin platform shipping H2 2026 — final production specs subject to validation.

Follow @indiai for deep dives on AI infrastructure, GPU architecture, and the economics of building at scale.

B200 to Vera Rubin: What NVIDIA Changed Again was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.