Harness engineering, agent architectures, and our AI engineering cheatsheet.

Good morning, AI enthusiasts,

Most teams aren’t stuck on prompts anymore. They’re stuck on everything around them: how to structure systems, route decisions, and make outputs reliable.

This week’s issue focuses on that layer. Here’s what you’ll get:

- Where prompting ends and system design begins, and why harness engineering is becoming the new focus.

- What multimodal RAG looks like in practice, with unified embeddings across text, images, and more.

- How agent systems actually work, from ReAct loops to memory and guardrails.

- The foundations behind tools like Claude Code, so you understand what’s happening under the hood.

- When multi-agent systems help (and when they don’t), plus the trade-offs that matter in production.

We’re also sharing our internal AI engineering cheatsheet, a set of decision-ready references we use for writing, coding, and building agents at Towards AI.

Let’s get into it!

What’s AI Weekly

This week in What’s AI, I break down harness engineering. If you’ve been hearing “harness engineering” everywhere lately and your first thought is that it’s probably just prompt engineering with a nicer name, that’s the first misconception to kill. By the end, you should be able to tell prompt engineering, context engineering, and harness engineering apart and, more importantly, understand why the industry suddenly started caring about this now. Read the complete article here or watch the video on YouTube.

— Louis-François Bouchard, Towards AI Co-founder & Head of Community

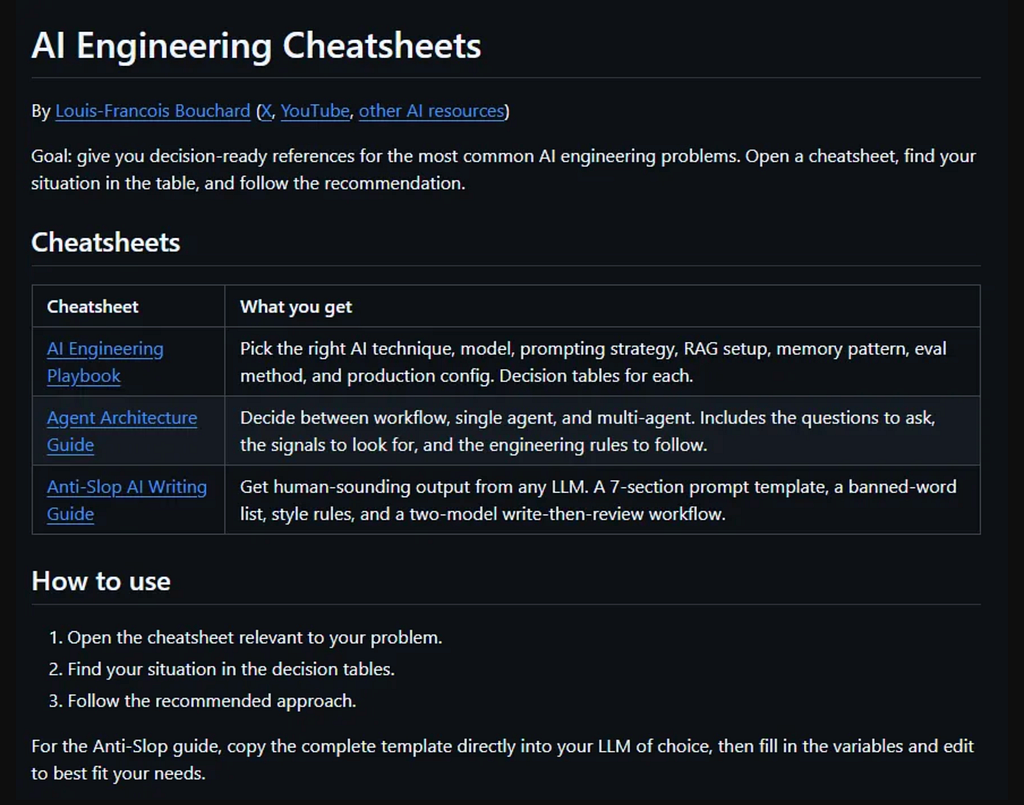

We are sharing our internal AI engineering cheatsheets, which include the AI engineering best practices we feed our agents.

The cheatsheets live in a GitHub repo of decision-ready references for the most common AI engineering problems, distilled into dense markdown files. Use them mid-build or feed them directly into Claude so that it works from decisions already tested on real systems.

They cover every decision that slows a build down: which model and setup to use, how to structure your agents, and how to get output that doesn’t sound generated. Open a cheatsheet, find your situation in the table, and follow the recommendation.

Learn AI Together Community Section!

Featured Community post from the Discord

Cap3rnicus has built Odyssey, a working prototype that packages an AI agent as a portable bundle, then runs that bundle through the same runtime contract across the CLI, TUI, HTTP server, and embedded Rust usage. The bundle defines the agent, the sandbox policy, the available tools, and the runtime defaults; the runtime turns that bundle into sessions, turns, events, approvals, and persisted history. Check it out here and support a fellow community member. If you have any questions or feedback, share them in the thread!

AI poll of the week!

This one’s interesting because the vote doesn’t crown a single “magic lever”; it splits almost evenly between better models and better orchestration, with prompts still pulling real weight and evals/retrieval getting less love than they probably deserve.

My read is that this reflects where production pain actually sits right now: most teams aren’t stuck because the model can’t write English; they’re stuck because the system can’t reliably route, call tools, recover from errors, stay within budgets, and behave consistently across edge cases. Model upgrades and orchestration improvements feel like immediate, visible wins, while evals and retrieval are slower, less glamorous, and harder to “feel” until something breaks.

What failure mode are you fixing this month? Share it in the thread, and maybe someone from the community can get you there faster.

Collaboration Opportunities

The Learn AI Together Discord community is overflowing with collaboration opportunities. If you are excited to dive into applied AI, want a study partner, or want to find a partner for your passion project, join the collaboration channel! Keep an eye on this section, too. We share cool opportunities every week!

1. Dragank99 is looking for a collaborator to build a FinTech project. If you are looking to practice by building, connect with them in the thread!

2. Npg_noob is looking to create or join a group in the field of AI image and video generation. If this is your niche and you are looking for a group, reach out to them in the thread!

3. Sauravxthakur has built an EdTech app for college students and is looking for help improving it. If you have suggestions, share them in the thread!

Meme of the week!

Meme shared by valo_ai

TAI Curated Section

Article of the week

Multimodal RAG + Gemini Embedding 2 + GPT-5.4 Just Revolutionized AI Forever By Gao Dalie (高達烈)

Google released Gemini Embedding 2, its first natively multimodal embedding model, which encodes text, images, video, audio, and PDFs into a single vector space. The author built a multimodal RAG pipeline on it, paired with GPT-5.4, described in the article as OpenAI’s new sparse MoE model with a 1.05M-token context window. Each PDF page is embedded as a combined text-and-image vector, stored in ChromaDB, and retrieved at query time using cosine similarity.
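To make the retrieval step concrete, here is a minimal sketch of cosine-similarity lookup in plain Python. It is not the author's pipeline: it makes no Gemini or ChromaDB calls, and the three-dimensional vectors, the `PAGE_STORE` dictionary, and the `retrieve` helper are all made up for illustration. Real embeddings have far more dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat a zero vector as similar to nothing
    return dot / (norm_a * norm_b)

def retrieve(query_vec, store, top_k=2):
    """Return the ids of the top_k stored vectors most similar to the query."""
    scored = [(cosine_similarity(query_vec, vec), page_id)
              for page_id, vec in store.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [page_id for _, page_id in scored[:top_k]]

# Hypothetical toy "page embeddings" standing in for real model output.
PAGE_STORE = {
    "page-1": [0.9, 0.1, 0.0],
    "page-2": [0.1, 0.9, 0.0],
    "page-3": [0.7, 0.3, 0.1],
}

top_pages = retrieve([1.0, 0.0, 0.0], PAGE_STORE, top_k=2)
print(top_pages)
```

In a real pipeline, the vector store performs this ranking for you. The point of the sketch is only that "retrieval" reduces to scoring stored vectors against the query vector and keeping the best few.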

Our must-read articles

1. Building ReAct Agents from Scratch: A Deep Dive into Agentic Architectures, Memory, and Guardrails By Utkarsh Mittal

ReAct agents power tools like Claude Code and Lovable, and this piece breaks down exactly how they work, from basic LLM calls through automation pipelines to autonomous reasoning loops. The author covers building a ReAct agent from scratch, including system prompt design, tool definitions, and graceful failure handling, then connects memory types (procedural, semantic, episodic) to real personalization patterns.
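For readers who want to see the shape of the loop, here is a rough sketch of a ReAct-style cycle (think, act, observe, repeat) in plain Python. It is not the article's code. The `scripted_model` function is a hypothetical stand-in for an LLM call, the `calculator` tool and the `Action: name[arg]` text format are assumptions chosen for illustration, and real agents parse model output far more defensively.

```python
def calculator(expression):
    """Toy tool: add two integers written as 'a+b'."""
    left, right = expression.split("+")
    return str(int(left) + int(right))

TOOLS = {"calculator": calculator}

def scripted_model(transcript):
    """Hypothetical stand-in for an LLM: picks the next step from the transcript."""
    if "Observation:" not in transcript:
        return "Thought: I need to add the numbers.\nAction: calculator[2+3]"
    observation = transcript.rsplit("Observation: ", 1)[1].strip()
    return f"Thought: I have the result.\nFinal Answer: {observation}"

def run_react(question, model, tools, max_steps=5):
    """Alternate model replies and tool calls until a final answer or the step cap."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        reply = model(transcript)
        transcript += reply + "\n"
        if "Final Answer:" in reply:
            return reply.split("Final Answer:", 1)[1].strip()
        if "Action:" not in reply:
            transcript += "Observation: error: expected an Action or a Final Answer\n"
            continue
        action = reply.split("Action:", 1)[1].strip()
        name, _, arg = action.partition("[")
        tool = tools.get(name.strip())
        if tool is None:
            observation = f"error: unknown tool {name.strip()!r}"
        else:
            try:
                observation = tool(arg.rstrip("]"))
            except Exception as exc:
                # Graceful failure: report the error back to the model instead of crashing.
                observation = f"error: {exc}"
        transcript += f"Observation: {observation}\n"
    return "Stopped: step limit reached."

answer = run_react("What is 2 + 3?", scripted_model, TOOLS)
print(answer)
```

The step cap and the error-as-observation branch are the two pieces that correspond to graceful failure handling: a bad tool call becomes information for the next turn, and a model that never finishes cannot loop forever.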

2. Claude Code Section 1: The Foundations — 8 Concepts Every Developer Must Know By Lokesh Shivalingaiah

Claude Code’s eight foundational concepts form the wiring diagram every developer needs before touching advanced features. The article covers what distinguishes Claude Code as an agentic CLI from a simple chatbot, then walks through terminal behavior, prompt construction, the permission model, core tools like Read, Write, and Bash, context window management, session history with resume capabilities, and token cost tracking.

3. Layer Normalisation: The Two-Line Operation Inside Every LLM You Have Ever Used. Nobody Has Explained Why It Works By Dr. Swarnendu AI

Layer normalisation runs at every sub-layer boundary in every major transformer, yet most practitioners treat it as boilerplate. This piece traces its origins from internal covariate shift through BatchNorm’s failure on variable-length sequences to the full LayerNorm derivation, including coupled gradient equations. It covers the Pre-Norm vs. Post-Norm split and explains why LLaMA adopted RMSNorm, dropping mean subtraction to save 20% compute with minimal quality loss.
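As a quick illustration of the "dropping mean subtraction" point, the sketch below puts the two operations side by side in plain Python. It assumes a single feature vector and omits the learned gain and bias that real implementations include. The function names and the `eps` default are illustrative choices, not taken from the article.

```python
import math

def layer_norm(x, eps=1e-5):
    """LayerNorm without learned parameters: subtract the mean, divide by the std."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def rms_norm(x, eps=1e-5):
    """RMSNorm without learned parameters: divide by the root mean square only."""
    mean_sq = sum(v * v for v in x) / len(x)
    return [v / math.sqrt(mean_sq + eps) for v in x]

features = [1.0, 2.0, 3.0, 4.0]
ln_out = layer_norm(features)
rms_out = rms_norm(features)
print(ln_out)
print(rms_out)
```

LayerNorm needs two reductions over the vector (mean, then variance) while RMSNorm needs one, which is where the compute saving comes from. On an input whose mean is already zero, the two functions return the same values.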

4. Multi-Agent AI Systems: Architecture Patterns for Enterprise Deployment By Pratik K Rupareliya

Single-agent AI systems collapse under real production workloads, and the author uses a failed insurance claims agent to illustrate exactly why. The piece walks through four multi-agent architecture patterns (hierarchical orchestrator, collaborative swarm, pipeline, and event-driven reactive), each with code examples and trade-off tables. It also covers inter-agent communication protocols, cost management, failure handling, and a live customer support deployment that cut the confident-but-wrong error rate from 12% to under 3%.
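To give a feel for the first of those patterns, here is a toy sketch of a hierarchical orchestrator in plain Python. It is not the article's code. The worker agents are ordinary functions, the keyword-based `route` function stands in for what would normally be an LLM-driven router, and all names are hypothetical.

```python
def billing_agent(task):
    """Hypothetical specialist worker for billing requests."""
    return f"billing handled: {task}"

def tech_agent(task):
    """Hypothetical specialist worker for technical issues."""
    return f"tech handled: {task}"

WORKERS = {"billing": billing_agent, "tech": tech_agent}

def route(task):
    """Keyword routing, an assumed stand-in for an LLM-based routing decision."""
    text = task.lower()
    if "invoice" in text or "refund" in text:
        return "billing"
    if "error" in text or "crash" in text:
        return "tech"
    return None  # no specialist matches

def orchestrate(tasks, workers):
    """Send each task to one worker, escalating anything the router cannot place."""
    results = []
    for task in tasks:
        worker = workers.get(route(task))
        if worker is None:
            results.append(f"escalated to human: {task}")
        else:
            results.append(worker(task))
    return results

results = orchestrate(
    ["Refund for invoice 42", "App crash on login", "Change my username"],
    WORKERS,
)
print(results)
```

The escalation branch is the part worth noticing: an orchestrator that always picks some worker, even for tasks no worker is suited to, is one way a system ends up confident but wrong.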

If you are interested in publishing with Towards AI, check our guidelines and sign up. We will publish your work to our network if it meets our editorial policies and standards.

LAI #120: Beyond Prompting was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.