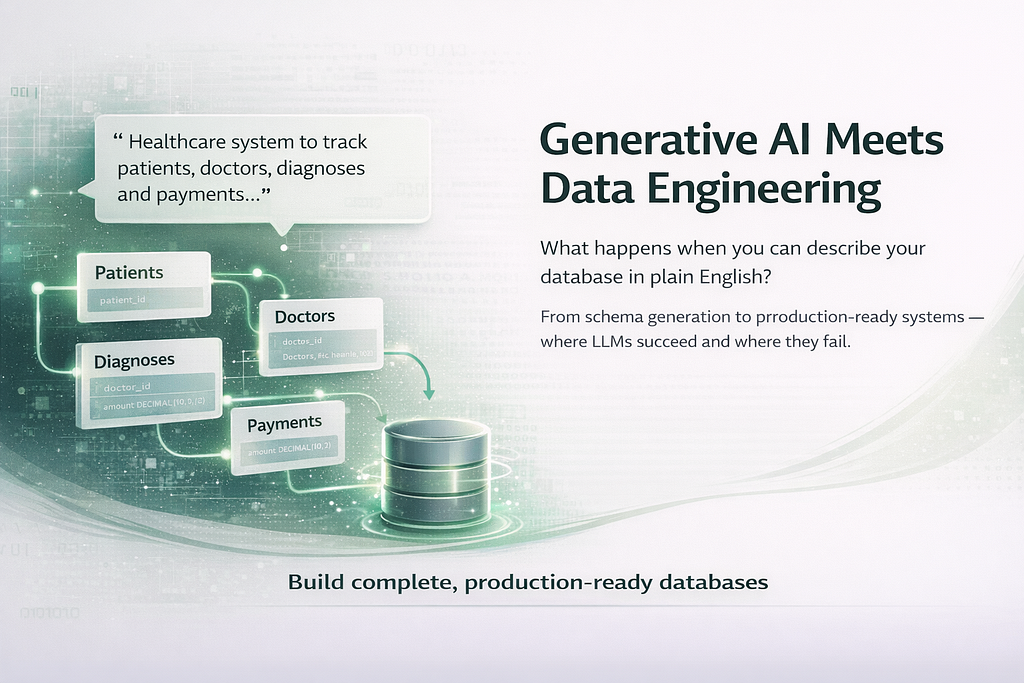

Generative AI Meets Data Engineering: What Happens When You Can Describe Your Database in Plain English

We often ask large language models to generate a schema from a business description. But what actually happens when we ask for this seemingly simple thing? Let’s dive in and try to understand the complexity of this request and where things start to fall apart.

1. The Paradigm Shift Nobody Announced

For most of database history schema design has been a contract between a human expert and a blank whiteboard. A data architect would spend time discussing the requirements of the business with stakeholders, would do some work to normalize the data, and would come out the other side with an ER diagram a couple of hours later. It was a very knowledge-based task. It was expensive, but it was reasonably fast and it worked because the person doing it understood both the business domain and the data model in real time.

This really does change the entry point for data modeling. Rather than having to translate business terms into some kind of data model, we can read the business terms and have the data model generated for us automatically, in a valid form. This isn’t a data modeling tool to make the job of the data modeller easier. This is a fundamentally different position, and I’m not sure we really understand what’s going to change, or what we’ll have to do in order to catch the different kinds of mistakes we’ll be making because we’re all doing data modelling more frequently.

This isn’t the first post to point this out, but I wanted to explicitly address the very practical question the title suggests. So, can an LLM produce valid and useful SQL DDL? The answer is yes. We’ll discuss why, and discuss some of the potential failure modes we’re seeing and that worry us. Most of all, we’ll focus on what we believe any production quality data pipeline will need to have in order to be truly reliable.

2. What the Model Is Actually Doing

When you ask an LLM, “I need a database for a healthcare claims system that tracks patient, doctor, diagnosis and payment information,” you’re asking for what? Clearly, the answer is the output of the LLM you submitted your request to. But to produce that output, the LLM had to be able to do several things at the same time.

•Entity extraction: identifying candidate tables (Patient, Provider, Claim, Diagnosis)

•Relationship inference: determining cardinalities (a claim can have multiple diagnoses; a provider handles many claims)

This lecture delves into type inference, which is the process of deducing the types of variables during the compilation of source code written in statically typed programming languages. To accomplish this, the lecture introduces the concept of type assignment, which involves assigning appropriate data types

- Constraint generation: introducing NOT NULL, UNIQUE and FOREIGN KEY constraints based on semantic analysis.

This does not involve the database at all. It is simply the data model applying the patterns it has learned from the billions of SQL schemas, documentation fragments and Stack Overflow answers it was trained on to make a probabilistic guess at what a valid schema for a table might look like.

This is the basic structure for a prompt to schema conversion function using OpenAI API in Python.

import openai

import json

client = openai.OpenAI()

SYSTEM_PROMPT = “””You are a senior data engineer. Given a business domain description,

generate a PostgreSQL DDL schema. Return ONLY valid SQL. No explanations.

Include primary keys, foreign keys, and appropriate constraints.

Use snake_case naming. Include created_at/updated_at timestamps on all tables.”””

def generate_schema(business_description: str) -> str:

response = client.chat.completions.create(

model=”gpt-4o”,

messages=[

{“role”: “system”, “content”: SYSTEM_PROMPT},

{“role”: “user”, “content”: business_description}

],

temperature=0.2 # lower temp = more deterministic schema output

)

return response.choices[0].message.content

schema = generate_schema(

“Healthcare claims system: patients, providers, diagnoses (ICD-10), claims, line items”

)

print(schema)

load: the prompt load for the task — using snake_case, including timestamps and requesting only SQL output for the response — structural output at 0.2 temperature, which generally prefers to keep the output structured and consistent rather than exploring creative possibilities

3. The Reliability Problem

The schema that comes back will almost always look correct. It’s valid. The table names are right. The foreign keys point to real tables. But it will usually have at least one of the following problems:

3.1 Missing Business Rules as Constraints

The model does not know: — that a claim with status = ‘PAID’ must have reimbursement_date to be non-null — that a diagnosis code must pass a Luhn check or the ICD-10 lookup These are business rules and therefore are not inferred from patterns in the data. So they will not be included in the schema.

3.2 Normalization Assumptions

Large Language Models (LLMs) will tend to go for 3NF database schema as this is what almost every basic SQL tutorial is based on. Data warehouses for analytics are a far cry from operational (OLTP) databases. Without any guidance, the model will tend towards the default normalization choice that it has seen most in the training data which will probably not be optimal for your specific case.

3.3 Type Selection Drift

This is a small one, but an important one. A data engineer will often create VARCHAR(255) columns where they should be TEXT, or a FLOAT instead of a DECIMAL(19,4) for money fields. And an INT column instead of a BIGINT for an ID column, because you probably won’t have more than 2 billion rows in 3 years. These are not small errors, they are common patterns that are favored in the training data.

Here is a validation wrapper that catches common type issues before the DDL reaches your database:

import re

from dataclasses import dataclass

from typing import List

@dataclass

class SchemaWarning:

severity: str # “ERROR” | “WARNING” | “INFO”

message: str

line: int

MONETARY_PATTERN = re.compile(r”\b(amount|price|cost|fee|balance|premium)\b”, re.I)

ID_PATTERN = re.compile(r”\b(id|_id)\s+(int|integer|smallint)\b”, re.I)

FLOAT_PATTERN = re.compile(r”\b(float|real|double)\b”, re.I)

def validate_schema(ddl: str) -> List[SchemaWarning]:

warnings = []

for i, line in enumerate(ddl.split(“\n”), 1):

# Flag FLOAT for any monetary column

if MONETARY_PATTERN.search(line) and FLOAT_PATTERN.search(line):

warnings.append(SchemaWarning(

“ERROR”, f”Monetary column may use FLOAT — prefer DECIMAL(19,4)”, i

))

# Flag INT for ID columns (overflow risk at scale)

if ID_PATTERN.search(line):

warnings.append(SchemaWarning(

“WARNING”, f”ID column typed as INT — consider BIGINT or UUID”, i

))

return warnings

# Example usage

issues = validate_schema(schema)

for w in issues:

print(f”[{w.severity}] Line {w.line}: {w.message}”)

4. The Iterative Refinement Pattern

So far we have seen production implementations of the NL-to-schema pipeline where outputs of the first LLM are not considered as the final output. Instead they are all used with the model in a feedback loop. An LLM can also be used to evaluate its own outputs at least partially, if it is given the right input.

One possible next step is to have the following step be an evaluation of the output from the previous step. The next question could be:

CRITIQUE_PROMPT = “””You are a database architect reviewing a schema for production use.

Evaluate the following DDL against these criteria:

1. Are all foreign key relationships logically consistent?

2. Are there any obvious missing indexes for high-cardinality join columns?

3. Are data types appropriate for the implied scale?

4. Are there any business rules that can be enforced by making certain fields required?

Return JSON: {“issues”: [{“severity”: str, “column”: str, “suggestion”: str}]}

No other output.”””

def critique_schema(ddl: str) -> dict:

response = client.chat.completions.create(

model=”gpt-4o”,

messages=[

{“role”: “system”, “content”: CRITIQUE_PROMPT},

{“role”: “user”, “content”: ddl}

],

response_format={“type”: “json_object”},

temperature=0.1

)

return json.loads(response.choices[0].message.content)

critique = critique_schema(schema)

for issue in critique.get(“issues”, []):

print(f”[{issue[‘severity’]}] {issue[‘column’]}: {issue[‘suggestion’]}”)

The generate-then-critique approach isn’t perfect and won’t catch all the issues. It won’t catch all the issues because issues exist whether you like it or not. The model won’t know whether an issue is important in the application domain without context. But it’ll catch all the structural issues it can find (like the need for an index on a foreign key column and inconsistent nullability across tables) and then it’s up to a human to review.

This is an engineering issue of separation. The input for the generators is different depending on whether we are generating or critiquing. If we leave the input the same for both the model over-explains the possible outputs and does not provide sufficient detail in each one due to having to handle both cases.

5. Where Human Expertise Is Still Non-Negotiable

There are some schema decisions that a model might not be able to make headway on, not because a model is lacking intelligence, but because the necessary information to decide what to do in a given situation isn’t described in the input prompt.

Partitioning Strategy

You’ll have to decide based on your workload, costs and SLA whether to partition the events table by date or by tenant ID. The model does not take this into account. It assumes a single table and will therefore produce a single schema for that table, leaving the partitioning decision to you.

Soft Delete vs. Hard Delete

Soft deletes are used in enterprise database design to track when something is deleted. They are very common with audit trails. Audit trails are used in databases to record events and transactions for auditing and historical purposes. Soft deletes are rarely implemented by default in Light Layer Models (LLMs). The database schema for implementing soft deletes is done at the application level and few basic examples of database schema for soft deletes exist. However, if you are required to have soft deletes for your business use case which is common for highly regulated industries you have to ask for it and verify that it is implemented across all tables.

Data Residency and Regulatory Constraints

We have a number of schemas, such as a healthcare schema for a US payer that must comply with HIPAA, and a financial services schema in the EU that must comply with GDPR data minimization. There are many considerations when designing a schema that must comply with data governance regulations. Decisions about column and field presence, the type of data to store and when to encrypt at rest are all driven by regulations like HIPAA and GDPR. An LLM has at best a very superficial understanding of these concepts and requires significant prompt tailoring and validation to ensure the output is relevant and appropriate to the domain and specific use case.

6. A Practical Architecture for NL-to-Schema in Production

Putting this together, a minimal production-grade pipeline has five stages:

•Prompt enrichment: bring in domain information (such as industry, company size, or regulatory requirements) to enhance the user input before it is passed to the model

•Schema generation: low-temperature LLM call with a structured system prompt

•Rule-based validation: deterministic checks for type safety, naming conventions, constraint completeness

•LLM critique: model-driven structural review against a checklist

•Human checkpoint: a data engineer reviews before any schema reaches staging

This step is not optional. NL-to-schema is intended to be a first-draft accelerator, not a replacement for human judgment. If the first draft is 80% of the way to a fully formed data model, rather than starting from zero that fundamentally changes the economics of data modeling, especially in the case of data products that are being brought to market in days or weeks.

7. The Deeper Shift

This has nothing to do with engineering mechanics. The fact that natural language interfaces for schema design exist is not a matter of whether it is faster to do the same amount of work. It is a matter of who does the work.

If a business analyst can explain a domain in plain English and get back a testable data schema in return, then the conversation in the review will be about something very different. The business analyst no longer explains their requirements to the data engineer, with the data engineer then implementing them. Instead, the business analyst and the data engineer are now both looking at the data schema and negotiating any required changes in plain English and SQL.

This is the new world. I don’t think it is because of the fact that more and more data engineering work is now carried out by computers, but I do think that the interface between the business layer and the data engineering layer is becoming dramatically thinner and also dramatically more bidirectional.

If you can manage to thin the layer enough meaning you know how to take in the right input, verify and validate the output, and put in the right places for human review you will be able to produce and deploy data products much more quickly than having to translate language one-to-one.

The real engineering work is not the actual generation, but the supporting structure that enables it.

Generative AI Meets Data Engineering: What Happens When You Can Describe Your Database in Plain… was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.