If you work in payments or e-commerce at an enterprise level, you know the “Monday Morning Dread.” It’s that email from Finance highlighting a weekend spike in chargebacks, usually originating from a specific region or involving a suspiciously high volume of digital goods.

For years, my role as a Business Analyst involved reacting to these spikes by updating our “rules engine.” We’d add logic like: IF transaction > $500 AND IP location ≠ billing address country AND items contain “gift card”, THEN block.
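Written out as code, a rule like that is just a hard-coded boolean. The sketch below is purely illustrative. The `Transaction` fields and the `legacy_rule_blocks` name are hypothetical and do not come from our actual rules-engine schema.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Transaction:
    # Hypothetical fields: just enough to express the example rule.
    amount_usd: float
    ip_country: str
    billing_country: str
    item_names: List[str] = field(default_factory=list)


def legacy_rule_blocks(txn: Transaction) -> bool:
    """Return True if the hard-coded rule would block this transaction."""
    has_gift_card = any("gift card" in name.lower() for name in txn.item_names)
    # Every condition must hold at once; each threshold is fixed by hand.
    return (
        txn.amount_usd > 500
        and txn.ip_country != txn.billing_country
        and has_gift_card
    )


# A legitimate traveler buying a $600 gift card abroad is blocked...
print(legacy_rule_blocks(Transaction(600.0, "FR", "US", ["$600 Gift Card"])))  # True
# ...while a fraudster who stays $1 under the threshold gets through.
print(legacy_rule_blocks(Transaction(499.0, "VN", "US", ["Gift Card"])))  # False
```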

It felt productive, but it was a losing battle. Rules are brittle. They are reactive. Worst of all, they are blunt instruments that block legitimate customers (false positives) just as often as they catch fraudsters. In an organization processing millions of transactions, a 1% false positive rate means tens of thousands of angry customers and significant lost revenue.

The shift to AI-driven fraud detection wasn’t just a cool tech upgrade for our organization; it was an operational necessity. Over the last 11 months, I’ve been heavily involved in integrating and tuning these ML models. Here is what that journey looks like from the ground level, stripped of the marketing hype.

The Business Case: Why We Couldn’t Afford to Wait

Selling AI internally wasn’t hard once we quantified the pain of the status quo. My first job was gathering the data to justify the investment.

We identified three primary value drivers:

1. Direct Financial Loss Reduction (Chargebacks): We were losing X% of revenue annually to actual fraud and to the operational costs of managing chargebacks with payment processors.

2. The “Insult Rate” (False Positives): This was the hidden killer. Our rules were declining legitimate purchases. We estimated that for every $1 of fraud we stopped, we were declining $15 of good revenue. Marketing hated us.

3. Operational Overhead: Our manual review team was drowning. They were reviewing 5% of all transactions, and 80% of those reviews turned out to be legitimate. It was unscalable.

The Value Proposition: AI promised to move us from detecting fraud after it had already happened to predicting transaction risk in real time, milliseconds before authorization.

How It Works (The Non-Data Scientist Version)

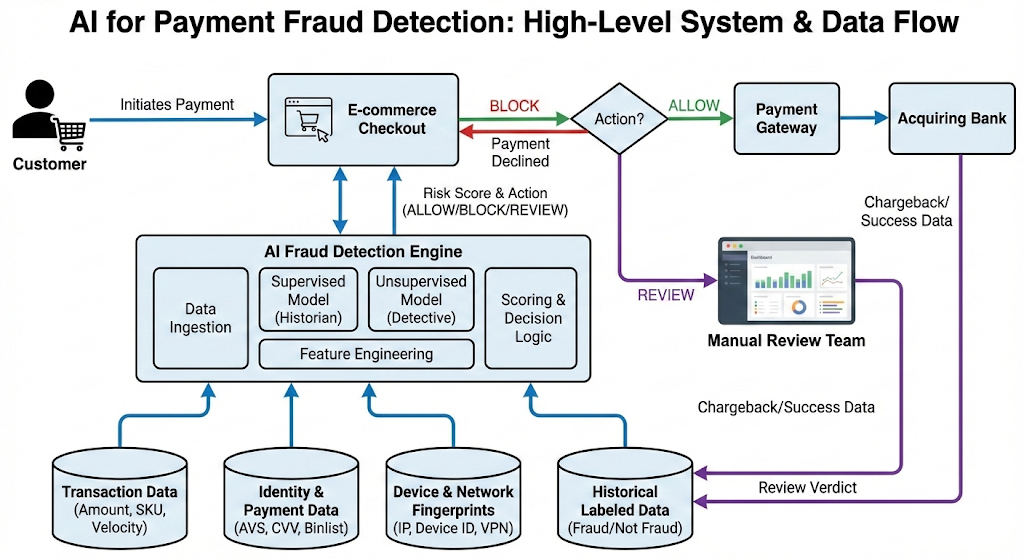

We didn’t replace the rules engine entirely; we augmented it. The rules handle the obvious cases, such as blocking sanctioned countries. The AI handles the nuance.
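A minimal sketch of that layering, assuming the deterministic rules run first and short-circuit, and the model returns a risk score on a 0-100 scale. The country codes, threshold values, and function names are hypothetical placeholders; where the thresholds sit is a business decision, not something the model dictates.

```python
from dataclasses import dataclass

# Hypothetical placeholders: the real list comes from compliance / sanctions screening.
SANCTIONED_COUNTRIES = {"XX", "YY"}

# Hypothetical cut-offs on a 0-100 risk score.
BLOCK_THRESHOLD = 95.0
REVIEW_THRESHOLD = 70.0


@dataclass
class CheckoutContext:
    ip_country: str
    billing_country: str


def decide(ctx: CheckoutContext, risk_score: float) -> str:
    """Return ALLOW, REVIEW or BLOCK for one transaction."""
    # Layer 1: hard rules catch the obvious cases without consulting the model.
    if {ctx.ip_country, ctx.billing_country} & SANCTIONED_COUNTRIES:
        return "BLOCK"
    # Layer 2: the ML risk score handles the nuance.
    if risk_score >= BLOCK_THRESHOLD:
        return "BLOCK"
    if risk_score >= REVIEW_THRESHOLD:
        return "REVIEW"
    return "ALLOW"


print(decide(CheckoutContext("XX", "US"), risk_score=5.0))   # BLOCK (rule)
print(decide(CheckoutContext("US", "US"), risk_score=85.5))  # REVIEW (model)
print(decide(CheckoutContext("US", "US"), risk_score=12.0))  # ALLOW
```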

We use two main types of machine learning:

- Supervised Learning (The Historian): We fed the model terabytes of historical transaction data, clearly labeled as “Fraud” or “Not Fraud” based on past chargebacks. The model learned the subtle patterns associated with past fraud (e.g., specific velocity patterns, device switching, time-of-day anomalies) that a human writing rules would never spot.

- Unsupervised Learning (The Detective): This is for new threats. The model doesn’t know what “fraud” looks like here; it just looks for statistical anomalies. It flags things like: “This user typically buys coffee at 9 AM in London. Why are they suddenly buying five iPhones at 3 AM from an IP in Vietnam?”
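To make the "Detective" idea concrete, here is about the simplest possible version: a per-user z-score on transaction amount. This is an illustrative stand-in, not the model we run. It looks at a single feature, whereas production systems commonly use richer unsupervised methods (isolation forests or autoencoders, for example) over many features at once. The function names and the 3.0 threshold are hypothetical.

```python
import statistics
from typing import Sequence


def amount_zscore(history: Sequence[float], amount: float) -> float:
    """Standard deviations between `amount` and this user's own average spend."""
    mean = statistics.mean(history)
    spread = statistics.pstdev(history)
    if spread == 0:
        # Every past amount was identical: any different amount is maximally surprising.
        return 0.0 if amount == mean else float("inf")
    return (amount - mean) / spread


def is_anomalous(history: Sequence[float], amount: float, threshold: float = 3.0) -> bool:
    """Flag amounts far outside the user's normal range (threshold is a hypothetical choice)."""
    if len(history) < 2:
        return False  # too little history to define "normal" for this user
    return abs(amount_zscore(history, amount)) > threshold


coffee_history = [4.50, 5.00, 4.80, 5.20, 4.90]
print(is_anomalous(coffee_history, 5.10))     # False: another coffee
print(is_anomalous(coffee_history, 5000.00))  # True: five iPhones
```

No fraud labels are involved: the user's own history defines "normal", which is what lets this kind of check react to attack patterns nobody has labeled yet.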

The Fuel: Data Requirements and Features

The biggest lesson I learned? The model is only as good as the data pipeline. As a BA, I spent months mapping data fields from our checkout flow to the fraud engine’s requirements.

A generic transaction amount and card number are useless on their own. We needed rich contextual data. We broke our data requirements down into four pillars:

1. Transaction Data

- Amount and Currency.

- Velocity (How many transactions in the last hour/day with this card?).

- Item SKU details (Is it high-risk, like electronics or digital goods?).
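Velocity features are usually sliding-window counts keyed by card, device, or user. A minimal in-memory sketch, assuming events arrive in timestamp order (in seconds); a real deployment would keep these counters in a shared low-latency store rather than in process memory. The class and method names are hypothetical.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class VelocityCounter:
    """Count events per key inside a trailing time window (e.g. per card per hour)."""

    def __init__(self, window_seconds: float) -> None:
        self.window_seconds = window_seconds
        self._events: Dict[str, Deque[float]] = defaultdict(deque)

    def record(self, key: str, timestamp: float) -> int:
        """Record one event and return how many events for `key` fall in the window."""
        events = self._events[key]
        events.append(timestamp)
        # Drop events that have slid out of the trailing window.
        cutoff = timestamp - self.window_seconds
        while events and events[0] <= cutoff:
            events.popleft()
        return len(events)


hourly = VelocityCounter(window_seconds=3600)
hourly.record("card_abc", 0)      # -> 1
hourly.record("card_abc", 100)    # -> 2
hourly.record("card_abc", 200)    # -> 3
print(hourly.record("card_abc", 3700))  # 2: the events at t=0 and t=100 have expired
```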

2. Identity & Payment Data

- AVS (Address Verification Service) results.

- CVV match status.

- BIN (Bank Identification Number) lookup data: issuing bank country and card type (prepaid cards carry higher risk).
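The BIN is the leading digits of the card number (traditionally six, increasingly eight) and identifies the issuer. A minimal sketch of turning a BIN lookup into model features; the table entries, feature names, and function name are hypothetical, and in practice the lookup data would come from a BIN data provider.

```python
from typing import Any, Dict, NamedTuple


class BinInfo(NamedTuple):
    issuer_country: str
    card_type: str
    prepaid: bool


# Hypothetical lookup table entries, for illustration only.
BIN_TABLE: Dict[str, BinInfo] = {
    "424242": BinInfo(issuer_country="US", card_type="credit", prepaid=False),
    "555555": BinInfo(issuer_country="GB", card_type="debit", prepaid=True),
}


def bin_features(card_bin: str, billing_country: str) -> Dict[str, Any]:
    """Turn a BIN lookup into model features (feature names are hypothetical)."""
    info = BIN_TABLE.get(card_bin)
    if info is None:
        # An unrecognized BIN is itself worth passing to the model.
        return {"bin_known": False, "card_type": None, "prepaid": None,
                "bin_country_mismatch": None}
    return {
        "bin_known": True,
        "card_type": info.card_type,
        "prepaid": info.prepaid,
        "bin_country_mismatch": info.issuer_country != billing_country,
    }


print(bin_features("555555", billing_country="US"))
# {'bin_known': True, 'card_type': 'debit', 'prepaid': True, 'bin_country_mismatch': True}
```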

3. Device & Network Fingerprinting (Crucial!)

This is where the magic happens. Fraudsters change cards, but they rarely change their actual device hardware for every attack.

- Device ID (unique hash of hardware components).

- Distance calculations between IP geolocation, billing address, and shipping address.

- Detecting use of VPNs, TOR browsers, or emulators.
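The distance features reduce to great-circle distances between geocoded points. A minimal sketch using the haversine formula, assuming the IP address and the billing/shipping addresses have already been geocoded to latitude/longitude (that lookup is outside this sketch). The function name is hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometers between two (latitude, longitude) points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = math.radians(lat2 - lat1)
    d_lambda = math.radians(lon2 - lon1)
    a = math.sin(d_phi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    # min() guards against rounding pushing the argument slightly above 1.
    return 2 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(a)))


# IP geolocated to London, billing address geocoded to Paris.
ip_to_billing_km = haversine_km(51.5074, -0.1278, 48.8566, 2.3522)
print(round(ip_to_billing_km))
```

The raw distance is usually fed to the model as a feature rather than compared against a fixed cut-off, since travelers and gift purchases legitimately produce large gaps.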

4. Behavioral Biometrics

- Passive data like typing speed, mouse movements, or how quickly fields are auto-filled on a checkout page (bots fill forms instantly; humans take time).
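A minimal sketch of one such timing signal, assuming the checkout page reports a few client-side timestamps in milliseconds. The telemetry fields, thresholds, and function name are hypothetical; real values would be tuned on labeled sessions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FormTelemetry:
    # Hypothetical client-side timings, in milliseconds since page load.
    first_field_focus_ms: float
    submit_ms: float
    keystroke_times_ms: List[float]


# Hypothetical thresholds for illustration.
MIN_HUMAN_FILL_MS = 2000.0
MIN_MEAN_KEY_INTERVAL_MS = 30.0


def looks_automated(t: FormTelemetry) -> bool:
    """True if the form was filled faster than a typical human plausibly could."""
    if t.submit_ms - t.first_field_focus_ms < MIN_HUMAN_FILL_MS:
        return True
    keys = t.keystroke_times_ms
    if len(keys) >= 2:
        intervals = [later - earlier for earlier, later in zip(keys, keys[1:])]
        if sum(intervals) / len(intervals) < MIN_MEAN_KEY_INTERVAL_MS:
            return True  # machine-regular, near-instant typing
    return False


bot = FormTelemetry(1000.0, 1150.0, [1000.0 + 5 * i for i in range(20)])
human = FormTelemetry(1000.0, 25000.0, [1000.0 + 180 * i for i in range(40)])
print(looks_automated(bot), looks_automated(human))  # True False
```

Browser autofill and password managers also make real customers fast, so a flag like this works better as one input to the model than as a hard block.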

The Plumbing: API Integration

The fraud detection system sits between our checkout microservice and the payment gateway.

It has to be fast. We have a strict sub-200ms budget for the fraud check to avoid cart abandonment.
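One way to enforce that budget on the caller's side is a hard timeout with a pre-agreed fallback. A minimal sketch, assuming a synchronous Python checkout service. `score_fn` stands in for the real fraud-API client, and falling back to REVIEW on timeout is an assumption made for this sketch; some teams fail open to ALLOW or fall back to rules only.

```python
import concurrent.futures
import time
from typing import Any, Callable, Dict

FRAUD_CHECK_BUDGET_S = 0.200  # the sub-200 ms budget

# Shared pool so each checkout request doesn't pay thread start-up cost.
_pool = concurrent.futures.ThreadPoolExecutor(max_workers=8)


def check_with_budget(
    score_fn: Callable[[Dict[str, Any]], Dict[str, Any]],
    payload: Dict[str, Any],
    fallback_action: str = "REVIEW",
) -> Dict[str, Any]:
    """Call the fraud scorer, but never wait longer than the latency budget."""
    future = _pool.submit(score_fn, payload)
    try:
        return future.result(timeout=FRAUD_CHECK_BUDGET_S)
    except concurrent.futures.TimeoutError:
        # The slow call keeps running in its worker thread; we just stop waiting for it.
        return {"action": fallback_action, "reasons": ["FRAUD_CHECK_TIMEOUT"]}


def fast_scorer(payload: Dict[str, Any]) -> Dict[str, Any]:
    return {"action": "ALLOW", "reasons": []}


def slow_scorer(payload: Dict[str, Any]) -> Dict[str, Any]:
    time.sleep(0.5)  # simulates a stalled downstream call
    return {"action": "ALLOW", "reasons": []}


print(check_with_budget(fast_scorer, {"user_id": "cust_12345xyz"})["action"])  # ALLOW
print(check_with_budget(slow_scorer, {"user_id": "cust_12345xyz"})["action"])  # REVIEW
```

Because the timed-out call is not cancelled, a real client would also set a timeout on the underlying HTTP connection.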

Request payload (sent BEFORE payment authorization; excerpt). `amount_micros` is the amount in millionths of the currency unit, so 150000000 is $150.00:

```json
{
  "event_type": "$transaction",
  "user_id": "cust_12345xyz",
  "session_id": "sess_abc987",
  "transaction": {
    "amount_micros": 150000000,
    "currency_code": "USD",
    "payment_method_id": "pm_card_visa123"
  },
  "payment_method": {
    "bin": "424242"
  }
}
```

The API Response: The system doesn’t just say Yes/No. It gives us a score and reasons. This is vital for the manual review team.

API response (`score` is on a 0-100 scale, where 85 is high risk; `action` can be ALLOW, BLOCK, or REVIEW):

```json
{
  "score": 85.5,
  "action": "REVIEW",
  "reasons": [
    "VELOCITY_HIGH_FOR_USER",
    "IP_IS_KNOWN_VPN",
    "DEVICE_LINKED_TO_PREVIOUS_FRAUD"
  ],
  "risk_id": "risk_998877"
}
```
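Because the response carries reasons as well as a score, the calling service can hand reviewers context instead of a bare flag. A minimal sketch, assuming the response shape shown above; `ReviewTask` and `handle_fraud_response` are hypothetical names, not part of any vendor SDK.

```python
import json
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

VALID_ACTIONS = {"ALLOW", "BLOCK", "REVIEW"}


@dataclass
class ReviewTask:
    # Hypothetical queue item for the manual review team.
    risk_id: str
    score: float
    reasons: List[str] = field(default_factory=list)


def handle_fraud_response(raw_json: str) -> Tuple[str, Optional[ReviewTask]]:
    """Parse the fraud API response; build a review task when the action is REVIEW."""
    resp = json.loads(raw_json)
    action = resp["action"]
    if action not in VALID_ACTIONS:
        raise ValueError(f"unexpected action: {action!r}")
    if action != "REVIEW":
        return action, None
    task = ReviewTask(
        risk_id=resp["risk_id"],
        score=float(resp["score"]),
        reasons=list(resp.get("reasons", [])),
    )
    return action, task


sample = """{"score": 85.5, "action": "REVIEW",
  "reasons": ["VELOCITY_HIGH_FOR_USER", "IP_IS_KNOWN_VPN", "DEVICE_LINKED_TO_PREVIOUS_FRAUD"],
  "risk_id": "risk_998877"}"""

action, task = handle_fraud_response(sample)
print(action, task.reasons[0])  # REVIEW VELOCITY_HIGH_FOR_USER
```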

Measuring Success: The KPIs That Matter

How do I report progress to the stakeholders? We don’t just look at “fraud rate.” That’s too simplistic.

Here are the KPIs on my dashboard:

- Precision (The “Boy Who Cried Wolf” metric): Of all the transactions the AI flagged as fraud, what percentage were actually fraud? If this is too low, our manual review team wastes time chasing ghosts.

- Recall (The Catch Rate): Of all the actual fraud that occurred, what percentage did the AI catch? We want this as high as possible.

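- F1 Score: The harmonic mean of Precision and Recall. It is a convenient single number for balancing the trade-off between catching bad guys and bothering good guys, though it weights the two equally, which may not match the real cost of a missed fraud versus a blocked customer.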

- False Positive Rate (The Insult Rate): The percentage of legitimate customers we mistakenly blocked. Target: <0.5%.

- Manual Review Queue Volume: Did the AI successfully reduce the number of transactions requiring human eyes? Our goal was a 60% reduction, which we achieved.
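Most of these KPIs fall out of the same four confusion-matrix counts. A minimal sketch with hypothetical function names, where `True` means fraud; the example counts are made up for illustration and are not our production numbers.

```python
from typing import Dict, Sequence


def confusion_counts(actual: Sequence[bool], predicted: Sequence[bool]) -> Dict[str, int]:
    """Tally the 2x2 confusion matrix; True means 'fraud'."""
    counts = {"tp": 0, "fp": 0, "fn": 0, "tn": 0}
    for is_fraud, flagged in zip(actual, predicted):
        if flagged and is_fraud:
            counts["tp"] += 1
        elif flagged:
            counts["fp"] += 1  # good customer flagged
        elif is_fraud:
            counts["fn"] += 1  # fraud we missed
        else:
            counts["tn"] += 1
    return counts


def kpis(c: Dict[str, int]) -> Dict[str, float]:
    """Precision, recall, F1 and false positive rate, guarding against empty denominators."""
    tp, fp, fn, tn = c["tp"], c["fp"], c["fn"], c["tn"]
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    fpr = fp / (fp + tn) if fp + tn else 0.0  # the "insult rate"
    return {"precision": precision, "recall": recall, "f1": f1, "false_positive_rate": fpr}


example = {"tp": 80, "fp": 20, "fn": 40, "tn": 9860}  # hypothetical counts
print({name: round(value, 3) for name, value in kpis(example).items()})
# {'precision': 0.8, 'recall': 0.667, 'f1': 0.727, 'false_positive_rate': 0.002}
```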

Now, Let’s Try To Visualise The Battlefield

I spend a lot of time looking at dashboards to understand model performance. While I can’t share our actual data, here are the visualizations that are most valuable to me:

1. The Confusion Matrix: A simple 2x2 grid showing Predicted vs. Actual results. It’s the quickest way to see the balance between False Negatives (fraud we missed) and False Positives (good customers we blocked).

2. Fraud Velocity Graphs: A time-series chart showing transaction attempts per user/IP/device over time. When an attack happens, this graph spikes violently. It’s highly effective for spotting bot attacks trying to test thousands of stolen cards quickly.

3. Link Analysis / Network Graphs: This is the coolest visual. It shows nodes (users, emails, credit cards, devices, IPs) and the connections between them. You might see 50 different user accounts all originating from the same device ID, or using the same shipping address. It instantly reveals organized fraud rings that individual transaction analysis would miss.
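Behind the link-analysis visual is a connected-components problem: accounts are linked whenever they share a device ID, card, email, or address. A minimal union-find sketch; the `kind:value` ID format, the function names, and the three-account threshold are hypothetical, and at scale this would typically run in a graph database or graph library.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


class UnionFind:
    def __init__(self) -> None:
        self.parent: Dict[str, str] = {}

    def find(self, x: str) -> str:
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a: str, b: str) -> None:
        root_a, root_b = self.find(a), self.find(b)
        if root_a != root_b:
            self.parent[root_a] = root_b


def find_rings(edges: List[Tuple[str, str]], min_accounts: int = 3) -> List[Set[str]]:
    """Group accounts connected through any shared attribute (device, card, address).

    Each edge links an account ID to an attribute ID, e.g. ("acct:1", "device:D1").
    """
    uf = UnionFind()
    for account, attribute in edges:
        uf.union(account, attribute)
    groups: Dict[str, Set[str]] = defaultdict(set)
    for account, _ in edges:
        groups[uf.find(account)].add(account)
    return [accounts for accounts in groups.values() if len(accounts) >= min_accounts]


edges = [
    ("acct:1", "device:D1"), ("acct:2", "device:D1"),  # shared device
    ("acct:2", "addr:A7"), ("acct:3", "addr:A7"),      # shared shipping address
    ("acct:4", "device:D2"),                           # unconnected account
]
print(find_rings(edges))  # one ring: acct:1, acct:2, acct:3 (set order may vary)
```

Note that acct:1 and acct:3 share nothing directly; they are linked only through acct:2, which is exactly what per-transaction analysis misses.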

The Realities!

It’s not magic. Implementing AI was painful and is an ongoing process.

- Cold Start Problem: We had to rely heavily on rules initially until the model gathered enough local training data.

- Model Drift: Fraudsters evolve. A model trained on 2023 data will start failing in 2024 as attack vectors change. We have to retrain the models weekly.

- Explainability (The “Black Box” issue): When a VIP customer gets blocked, the C-suite wants to know exactly why. Sometimes the model just says “complex statistical correlation,” which isn’t a satisfying answer. We are putting a lot of work into “explainable AI” features to provide human-readable reasons for blocks.
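Between retrains, drift can be monitored by comparing how a feature or score is distributed now versus at training time. One common metric is the Population Stability Index (PSI); the sketch below is a generic illustration rather than our monitoring setup, and the bin proportions are made up. A commonly quoted rule of thumb reads PSI below 0.1 as stable, 0.1 to 0.25 as a moderate shift, and above 0.25 as a significant shift.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6
) -> float:
    """PSI between two binned distributions, each given as proportions per bin.

    `expected` is typically the training-time distribution of a feature or score,
    `actual` the same bins measured on recent traffic.
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must use the same bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        psi += (a - e) * math.log(a / e)
    return psi


training_bins = [0.25, 0.25, 0.25, 0.25]  # hypothetical score distribution at training time
recent_bins = [0.10, 0.20, 0.30, 0.40]    # hypothetical distribution this week
print(round(population_stability_index(training_bins, recent_bins), 3))  # 0.228
```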

Moving to AI for payments wasn’t optional; it was survival. It transformed my role from writing endless “if/then” statements to managing data pipelines, interpreting complex KPIs, and strategizing on risk thresholds. It’s harder work, but infinitely more effective.

Beyond the Rules Engine: A Business Analyst’s Inside Look at AI for Payment Fraud Detection was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.