A complete explanation of what an AI lead qualification system is, how it works, and how small agencies use it to stop wasting time on bad-fit discovery calls.

There’s a specific kind of sales problem that shows up once an agency hits a certain size. Leads are coming in — enough that it feels like things are working — but the pipeline feels chaotic. Some leads get fast responses, others fall through the cracks. Discovery calls are booked with people who clearly weren’t ready to buy. Good leads occasionally go cold because follow-up was slow. And somewhere in the background, you’re aware that a meaningful chunk of your week is going toward conversations that don’t convert.

In practical terms, an AI lead qualification system is a structured workflow that collects lead data, scores it automatically, routes leads based on fit, and sends personalized follow-up — before you ever get on a call.

That’s the problem an AI lead qualification system is built to solve for agencies and solo operators.

Not in a grand, replace-your-sales-team way — in a much more specific way: handling the first stage of your pipeline automatically, so the right leads reach your calendar and the wrong ones don’t.

This article is the full explanation. What an AI lead qualification system actually consists of, how each part works, what role AI plays versus what rules and automation handle, and how agencies are using these systems in practice. If you’ve seen the term thrown around and want to understand it clearly — not the vendor pitch version — this is for you.

It’s also the article every other piece in this series links back to, so it goes deeper than most. Grab a coffee.

Start Here: What “Lead Qualification” Actually Means

Before the AI part, let’s be clear on the underlying concept, because qualification is one of those words that people use constantly while meaning slightly different things.

Lead qualification is the process of determining whether a prospect is worth pursuing. Simple enough in theory. In practice, it involves answering a handful of questions that sales teams have been asking for decades: Does this person have the budget to pay for what we offer? Is there a real, defined problem — or are they just browsing? Are they in a position to actually make a decision? And is the timing right, or are they six months away from being ready?

The classic framework is called BANT — Budget, Authority, Need, Timeline. It’s been around since IBM salespeople used it in the 1960s. It holds up because those four questions genuinely predict whether a deal will close. The problem was never the framework. It was always the method of applying it.

Traditionally, a human applied it — usually on a discovery call, sometimes via a few emails back and forth. That works when lead volume is low and you have time to give every prospect individual attention. It starts breaking down when volume picks up, when you’re stretched across multiple projects, when leads come in at odd hours, when follow-up requires remembering things you wrote down in three different places.

An AI lead qualification system doesn’t replace the BANT questions. It answers them automatically — collecting the data through a structured form, evaluating it through a scoring layer, and acting on it through automated routing — before any human time is spent.

The Four Layers Every System Has

“AI lead qualification system” is a phrase that gets applied to everything from a basic chatbot to a full enterprise sales pipeline. What I’m describing here is the version that actually makes sense for small agencies and independent operators — practical to build, maintainable without a dedicated ops team, and genuinely effective at solving the pipeline chaos problem.

It has four layers. They work together. Remove any one of them, and the system either stops being automatic or stops being useful.

Layer 1 — Intake: Collecting the Right Information

The system starts before any AI fires. It starts with what your intake form asks.

Most contact forms — name, email, message — are built to reduce friction. That’s fine for a general website, but it’s useless for a qualification.

A field that says “tell us about your project” gets wildly inconsistent responses that are almost impossible to score. Someone writes two paragraphs; someone else writes five words. Neither tells you reliably whether they’re a good fit.

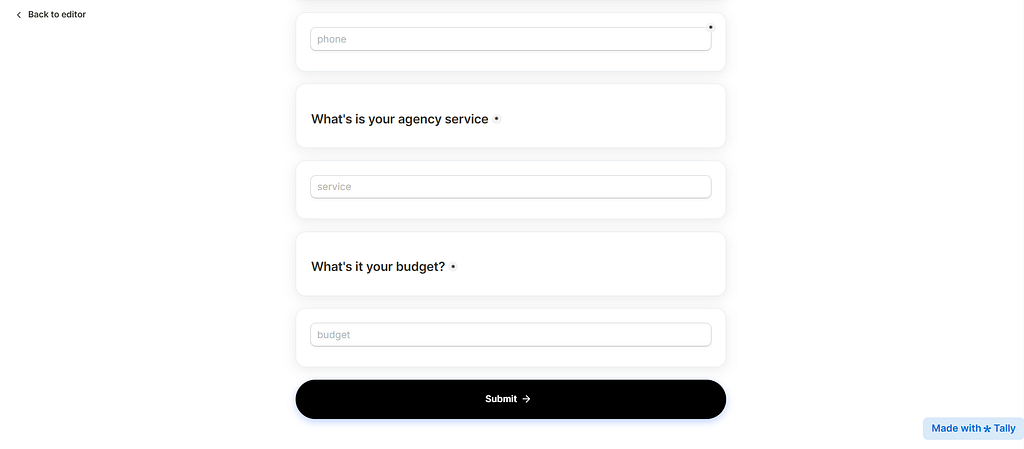

A qualifying intake form is different. It’s designed to collect specific, structured information that maps directly to your qualification criteria. Typically:

• Budget range — Offered as options, not an open field. “$1,500–$3,500” gives you a comparable data point. “What’s your budget?” gives you guesses and non-answers.

• Timeline — “When are you hoping to start?” with defined options, including “just exploring” — which lets low-intent leads self-identify without wasting your routing on them.

• Decision authority — “Are you the main decision-maker?” One question. The Surfaces committee deals with you before you’ve invested in a relationship.

• The core problem — A short free-text field. One or two sentences. The quality of someone’s answer here is often more informative than any dropdown.

• Prior attempts — “Have you tried solving this before?” Someone who’s already spent money on the problem is a fundamentally different buyer than someone who’s just starting to think about it.

Six fields maximum. Completion rates drop off noticeably beyond that, and every additional question has to earn its place by meaningfully changing how you’d respond.

The intake layer is the most underinvested part of most qualification setups. People spend time on scoring logic and automation while leaving a generic contact form on their site. Everything downstream depends on what the form collects. Get this wrong, and nothing else works properly.

Layer 2 — Scoring: Evaluating Without Reading Every Submission

Once a form is submitted, something has to evaluate the responses and produce a signal about how good the lead is. That’s the scoring layer.

You have two approaches, and they’re not mutually exclusive:

Rule-based scoring assigns point values to specific answers. Budget in your range = 25 points. Timeline within 30 days = 20 points. Decision-maker = 20 points. Clear problem description = 15 points. Prior attempts = 10 points. A lead scoring 70+ is a high priority; 40–69 is mid; under 40 is low. It’s straightforward to build, easy to audit, and predictable.

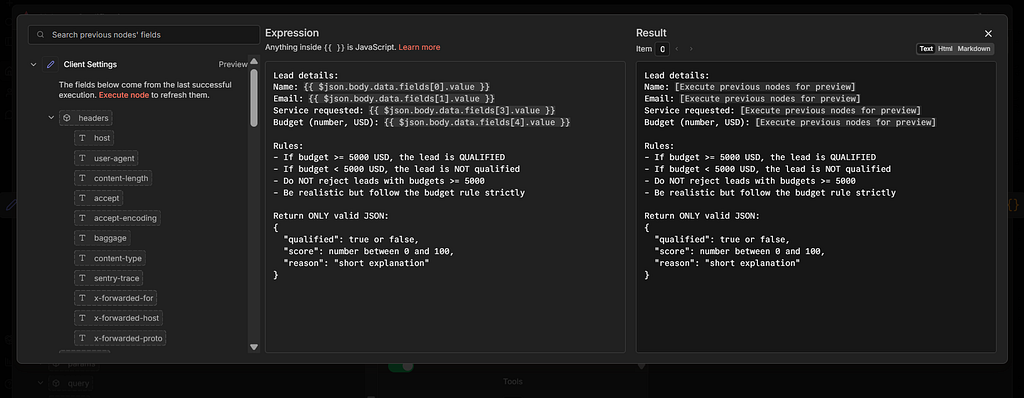

AI-based scoring sends the responses — particularly the free-text fields — to a language model that interprets meaning rather than just matching keywords to point values. This is where the AI layer earns its keep.

Consider the difference. A lead writes:

“We’ve been dealing with this problem for about 18 months. Tried two different approaches, neither worked. Spent roughly $12k total between them. We need something that actually sticks this time.”

A rules-based system reads the budget dropdown — let’s say they selected $3,000–$5,000 — and assigns the appropriate points. It doesn’t pick up on the 18-month struggle, the two failed attempts, the $12k already spent, or the urgency in “actually sticks this time.” A language model reads all of that and adjusts the score accordingly. High intent, high frustration, prior spend — that’s a motivated buyer.

Most small agencies do fine starting with rule-based scoring. The AI layer becomes genuinely useful when your form has meaningful free-text responses, and you’re processing enough volume that you want richer, more nuanced signals. It’s an upgrade, not a requirement.

One thing that matters more than which scoring method you use: writing down your criteria before you build. “I’ll know a good lead when I see one” doesn’t translate into automation. Be specific. Budget $3,500+ = yes. Timeline ‘just exploring’ = low score regardless of budget. Put it on paper first.

Layer 3 — Routing: Sending Leads Down Different Paths

A score is only useful if it triggers an action. That’s what routing does — it branches the workflow depending on where that lead is.

Three tiers, three possible outcomes:

• High score (65–70+): A personalized email goes out immediately after the lead completes the form, with a booking link. No human intervention is needed. A lead gets a prompt and relevant response while they’re still thinking about you.

• Mid score (35–65): A lead is put into a nurture sequence — typically three emails over seven to ten days. The first email is informative and appreciative of their situation. The second email tackles a potential objection. Third is a low-pressure invitation to talk when the timing is right.

• Low score (under 35): A brief, warm message. Not a hard sell. Something like: “Based on what you’ve shared, we’re probably not the right fit at this stage — but here’s something that might be useful.” Then, a relevant resource or pointer to a lower-cost option.

The routing logic itself is not complicated. In n8n.io, it’s a Router module with three branches and a filter on each. In Zapier, it’s a Paths step. Neither requires any coding. What takes thought is the criteria — where exactly to draw the line between tiers, and what each path actually does.

One thing worth adding for high-score leads: a notification to yourself — a Slack message or email — so you know a strong lead just came in and is already on your calendar. You don’t need to do anything. But it’s useful to know.

Layer 4 — Personalization: Where AI Makes the Biggest Visible Difference

This is the layer most people don’t think about until they see it working, and then they immediately understand why it matters.

Automated emails have a reputation problem — and mostly a deserved one. The routing fires, and an email is sent, and it reads exactly like

what it is: a template. “Hi [First Name], thanks for your interest in working with us. We’d love to connect.” The lead knows it within the first sentence. Whatever credibility you built with a professional form is immediately undermined.

The AI personalization layer fixes this. Instead of sending a static template, it generates a first-draft email for each high-score lead that references what they actually said in their form. Their specific problem. Their timeline. Their prior attempts. Something in their response signals you read it.

The email that goes to the lead who wrote about 18 months of struggle and $12k spent doesn’t say “thanks for reaching out.”

It says something like: “I saw what you wrote about the last two attempts not working — that’s a frustrating place to be, and I think I understand what’s been going wrong. Here’s my calendar when you’re ready to talk it through.”

That’s a different experience. And at scale — when you can’t personally read and respond to every submission — it’s an experience you can only deliver with AI.

“In practice, many teams start by automating the qualification decision first — and only later connect email, CRM, or calendar tools once the filtering logic is proven.”

How Agencies Actually Use These Systems

Theory is one thing. Here’s what it looks like in practice for a small agency running this day-to-day.

The intake form lives on a dedicated page — usually something like “work with us” or “get started” — not buried in a footer. It’s linked from the homepage, the services page, and any content that’s pulling in traffic. It’s not the only way to contact the agency, but it’s the primary path.

When a form submits, the agency owner gets a Slack notification within about two minutes: lead name, score, one-line summary of their situation. That’s all. They don’t need to do anything — the email already went out, the calendar slot is already available. But they know who’s coming before the call.

The Google Sheet logs every submission with the score, the summary, the routing decision, and whether the lead was booked. Once a month, the owner spends 20–30 minutes reviewing it. Looking for patterns: high-scorers who didn’t book (why not?), low-scorers who turned out to be good clients (scoring calibration issue?), nurture leads who converted weeks later (which email did it?).

Over 90 days, the system gets meaningfully better. The scoring criteria sharpen. The email copy improves. The form questions get refined based on which answers actually predicted fit. It’s not a set-it-and-forget-it situation — but it’s a low-maintenance one.

What changes most noticeably: the quality of discovery calls. They’re shorter and more focused because the pre-call context is richer. The lead already received a personalized email that acknowledged their situation. The owner had already read a one-line summary before picking up the phone. Both sides arrive with more information. The conversation starts further along.

What AI Can and Can’t Do in This Context

Worth being direct about this, because the hype around AI makes it easy to either overestimate or dismiss what it’s actually capable of here.

AI is genuinely good at reading language and drawing inferences from it. It picks up on urgency, intent, emotional state, red flags, and buying signals in a way that keyword-matching rules never can. It handles the messiness of how real people write — incomplete sentences, implicit budget hints, offhand mentions of prior failures — and produces a useful signal from it.

AI is also good at generating personalized copy at scale. Writing a tailored first email for every high-score lead is something a human can do for five leads a week. For 30 or 50, it becomes the bottleneck. AI removes that ceiling.

What it’s less reliable at:

• Nuanced judgment calls. A lead with a strange combination of signals — high budget, vague problem, odd timeline — still benefits from a human eye. AI produces a score and a summary; the final call is still yours.

• Short or minimal responses. “Looking for help with marketing” gives a language model almost nothing to work with. Scoring is only as good as the input.

• Industry-specific context. AI doesn’t know that in your particular niche, a $5k budget is actually a red flag because it suggests someone who’s underestimating the work. That kind of judgment lives with you, not the model.

• Consistency across model updates. Language models change. A prompt that worked well six months ago may produce slightly different outputs today. Worth checking periodically.

None of those are reasons not to use AI in this context. They’re reasons to keep humans in the loop for edge cases, and to treat AI as a layer that handles volume while you handle nuance.

The Tools That Run It — Kept Realistic

The tech stack for this does not need to be expensive or complicated. Here’s what a working version looks like at a small agency scale:

• Intake form: Tally (free) handles conditional logic cleanly and sends structured data that’s easy to automate. Typeform is slightly nicer to look at, but costs money. Either works.

• Automation: n8n.io free tier covers most small agency volume. Zapier is more approachable to start with, but it gets expensive. n8n is free if you’re comfortable with self-hosting.

• AI scoring and email generation: OpenAI’s API or Anthropic’s Claude API. Both have low per-request costs — at small agency volume, you’re probably spending $5–15/month on AI calls, not hundreds.

• Data logging: Google Sheets. Unglamorous, completely reliable, easy to review without logging into anything.

• Calendar: Calendly free tier. That’s genuinely all you need for booking.

Total monthly cost for a small agency running this at moderate volume: somewhere between $15 and $40, depending on which paid tiers you’re using. The constraint isn’t money. It’s setup time and getting the scoring logic right — which takes thought, not tools.

When This Is Worth Building (And When It Isn’t)

One thing I try to be honest about: this isn’t the right solution for every situation. A few useful tests.

It’s probably worth building if:

• You’re getting more inbound submissions than you can comfortably review manually — somewhere above 15–20 per week

• A meaningful percentage of your discovery calls are wasted on clearly wrong-fit leads

• Your follow-up is inconsistent — some leads get fast responses, others don’t hear from you for days

• You want to project a more professional, responsive image without adding headcount

It’s probably overkill if:

• Most of your work comes through referrals, where qualification is already baked into the introduction

• You’re early-stage and still figuring out who your ideal client actually is — locking in scoring criteria too soon can filter out useful discovery

• Your volume is low enough that you can genuinely read every submission and respond thoughtfully within a few hours

And a practical note: you don’t have to build the full AI-powered version to get most of the benefit. A well-designed intake form plus a basic scoring sheet — no AI, no automation — handles a significant portion of the value. The AI layer makes things smarter and more scalable. But the foundational work is the form and the scoring logic. Start there, see if it’s already solving the problem, and add layers when you hit the ceiling.

This is the pillar article on AI lead qualification systems — the one that explains the full concept end-to-end.

The articles below go deeper into specific parts of the system:

• How to Automatically Qualify Leads (Without Hiring or Complex CRMs) — a step-by-step guide to building the workflow

• AI Lead Qualification: How It Works and When It Makes Sense — when AI helps, when it doesn’t, and how to decide

• A Simple Lead Qualification Automation Workflow for Agencies — the full workflow mapped out, stage by stage

• Do You Really Need AI Agents for Lead Qualification? — agent hype vs simpler, more reliable systems

• Lead Qualification Example: Manual vs AI-Based Automation — the same lead processed two different ways

If you want the conceptual overview, start here.

If you want implementation details, those articles are the natural next step.

And if you want the pre-built AI lead qualification system — the full workflow already assembled — that’s what the product below is.

The Honest Summary

An AI lead qualification system is a structured workflow — intake form, scoring layer, routing automation, personalized outreach — with AI doing the parts that require language understanding and personalized copy generation.

It doesn’t replace your sales judgment. It handles the first stage of your pipeline so that by the time a lead reaches you, the obvious yes/no decisions have already been made automatically, the maybes are in a nurture sequence, and the time you spend on sales conversations is concentrated on the people most likely to actually become clients.

The agencies that get the most out of it aren’t the ones that built the most sophisticated version. They’re the ones who started simple, ran it for 30 days, looked at the data, and made it incrementally better. A system that runs reliably and gets reviewed monthly compounds over time in a way that a complex one you’re constantly fixing never does.

The value isn’t in the AI. The AI is a useful layer. The value is in the structure — a consistent, fast, repeatable process that treats every lead the same way, regardless of what else is happening in your week.

If you’ve read this far, you already understand the system. This just saves you the build time.

What Is an AI Lead Qualification System? (And How Agencies Use It) was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.