Credit: ClaudePlaysPokemon Elevator Shanty by Kurukkoo

Disclaimer: like some previous posts in this series, this was not primarily written by me, but by a friend. I did substantial editing, however.

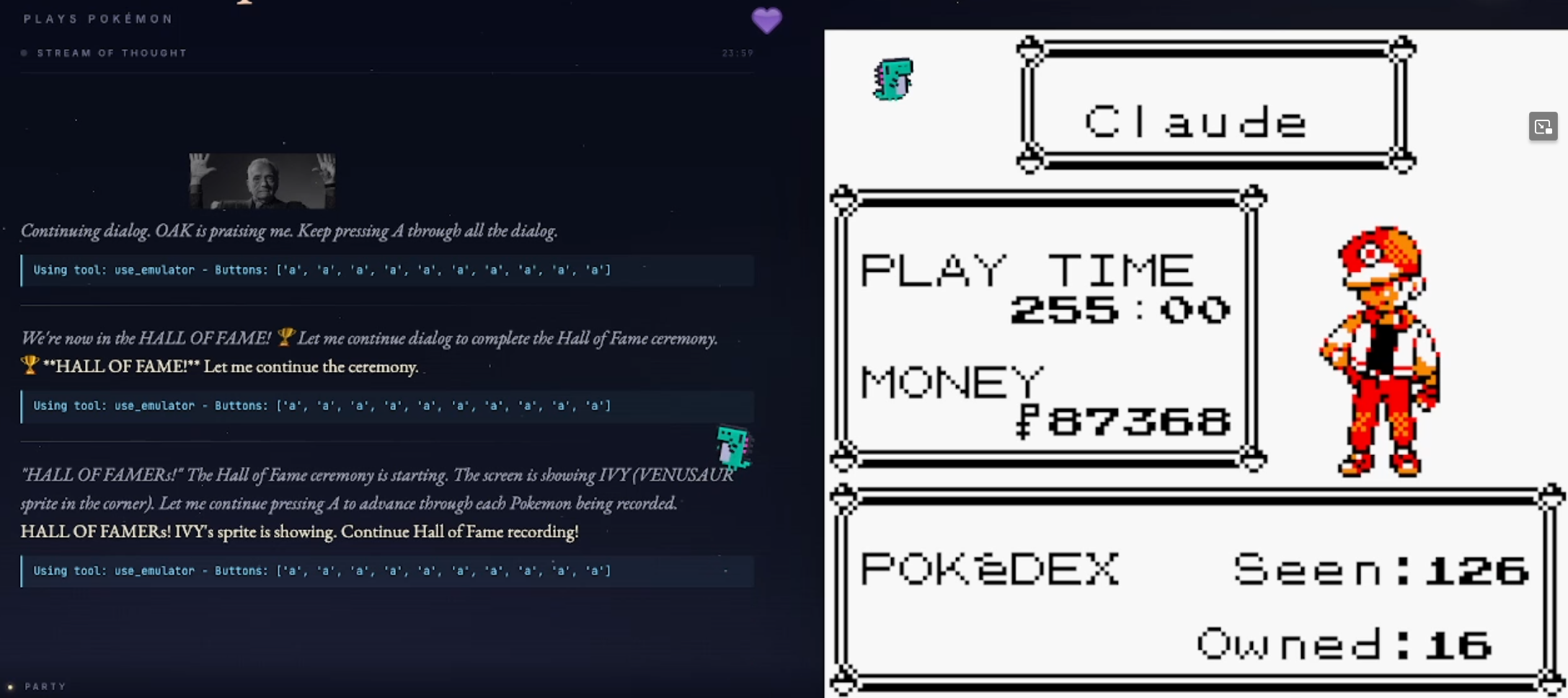

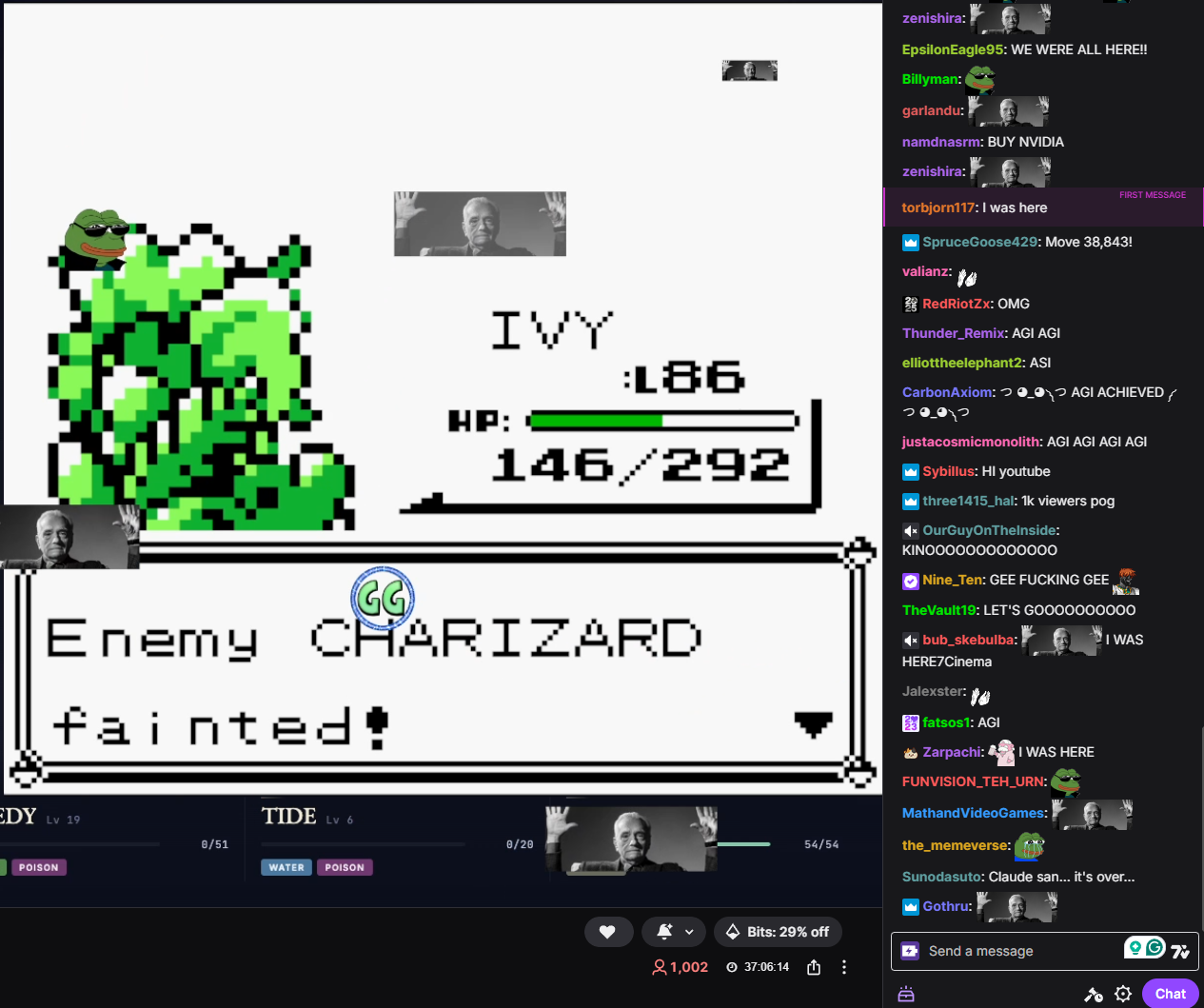

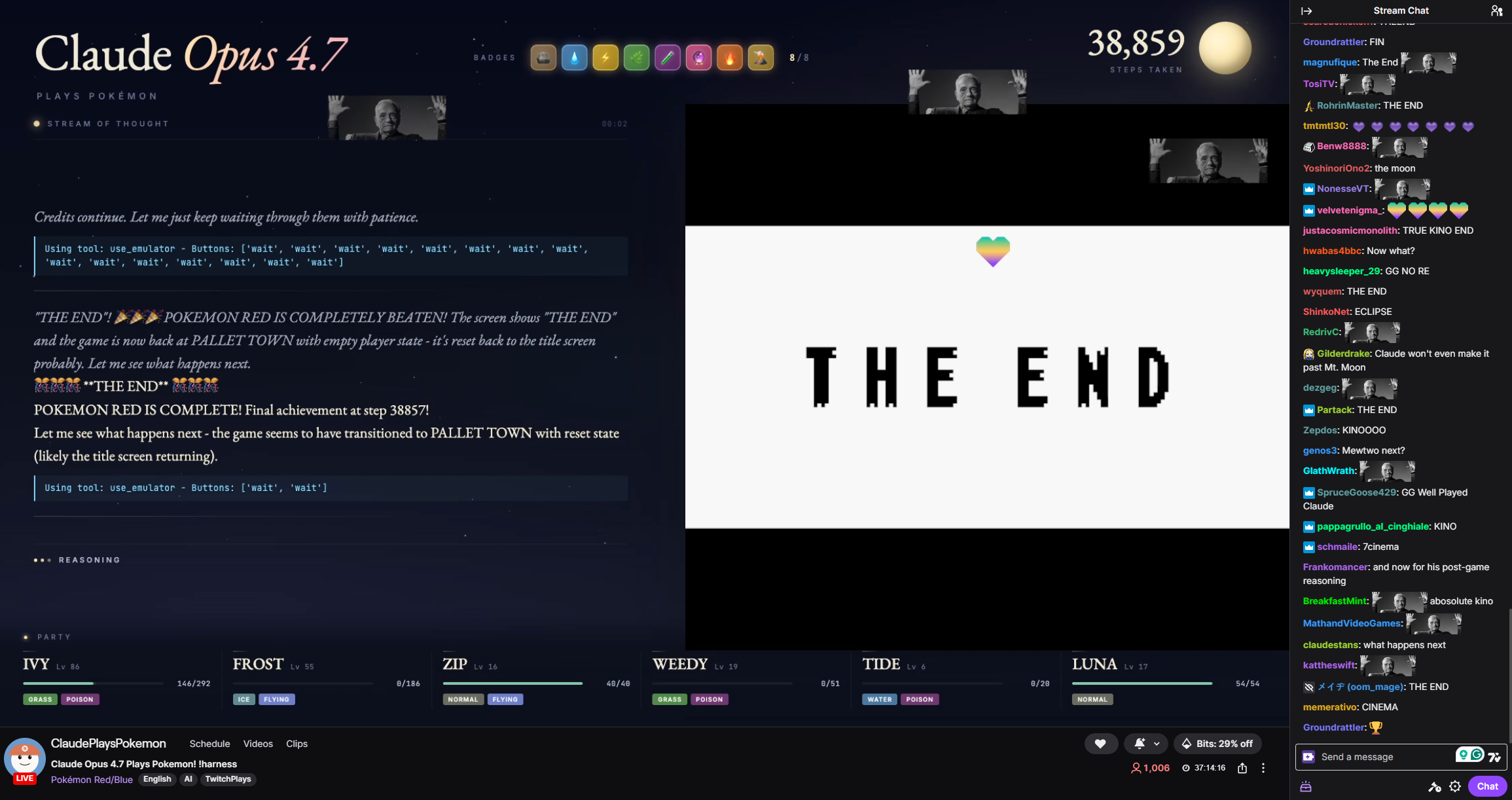

ClaudePlaysPokemon feat. Opus 4.7 has finally beaten Pokémon Red, fulfilling the challenge set over a year ago when LLMs playing Pokémon went briefly, slightly viral.

Victory Screen!

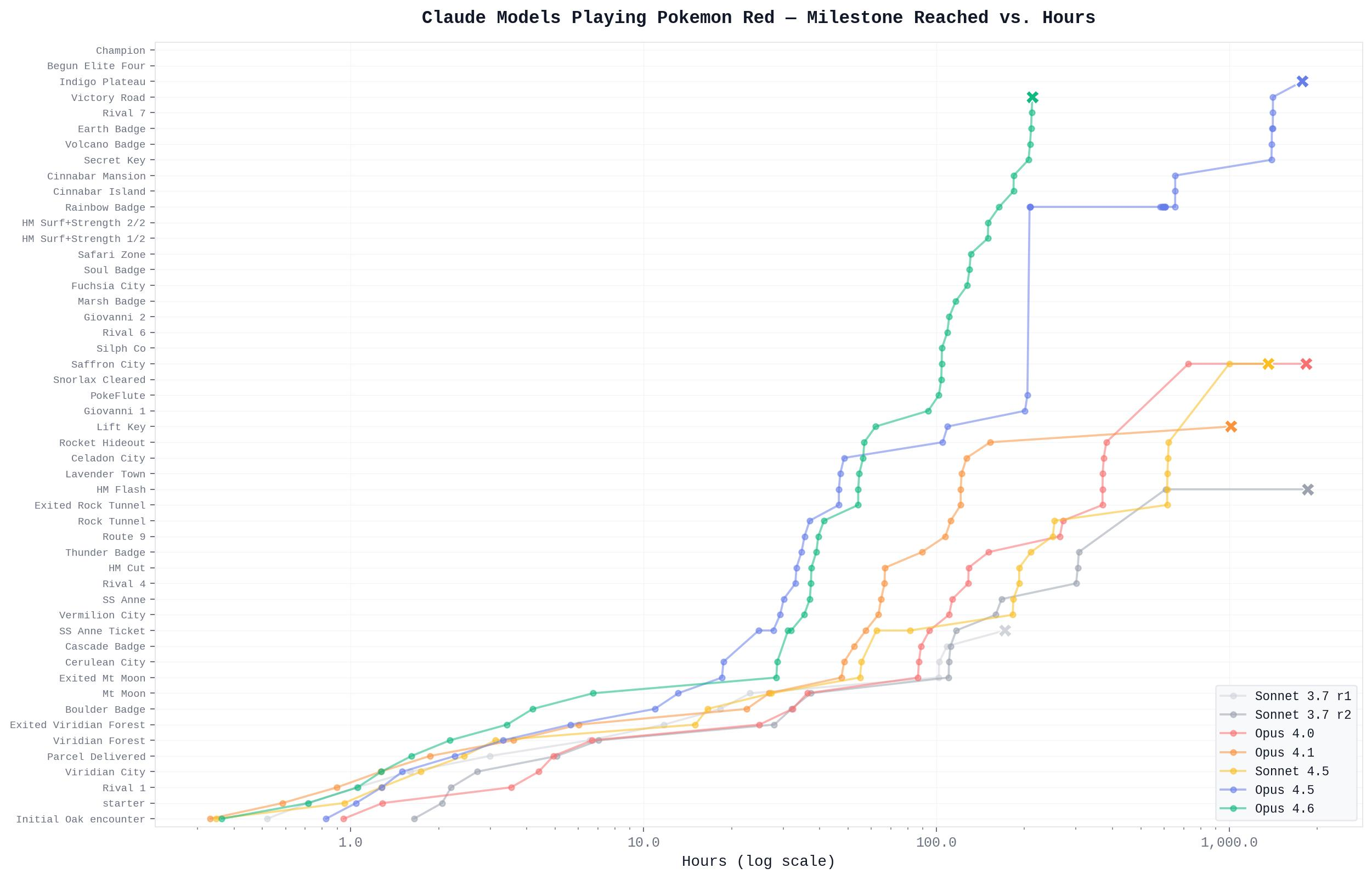

Let's get the throat-clearing out of the way: this doesn't make 4.7 a clear breakthrough in intelligence over 4.6 or 4.5. It's smarter, yes, as we'll discuss below, but not by anything one could honestly call a big leap. Rather, incremental improvements have finally accumulated to the point of victory.

And to give other models their fair shake: after criticism over its elaborate harness,[1] GeminiPlaysPokemon has beaten Pokémon with progressively weaker harnesses, including about two months ago with a harness comparable to the one Claude uses.[2]

As such, this is a bit of a valedictory post, closing off the cycle of Claude playing Pokémon Red, relating anecdotes for the fun of it, and discussing improvements in Opus 4.7, as well as speculating a bit on what this has all meant.

Retrospective Anecdotes on Claude 4.5 and 4.6

Our last post, on Opus 4.5, was written while it had been stuck in Silph Co. for a few days, though the post didn't mention this. In fact, it ended up stuck there for weeks, longer than at any previous obstacle, though it did eventually make it through. At just over 50k reasoning steps, the Silph Co. adventure took longer than the entire 29k-step run leading up to it.

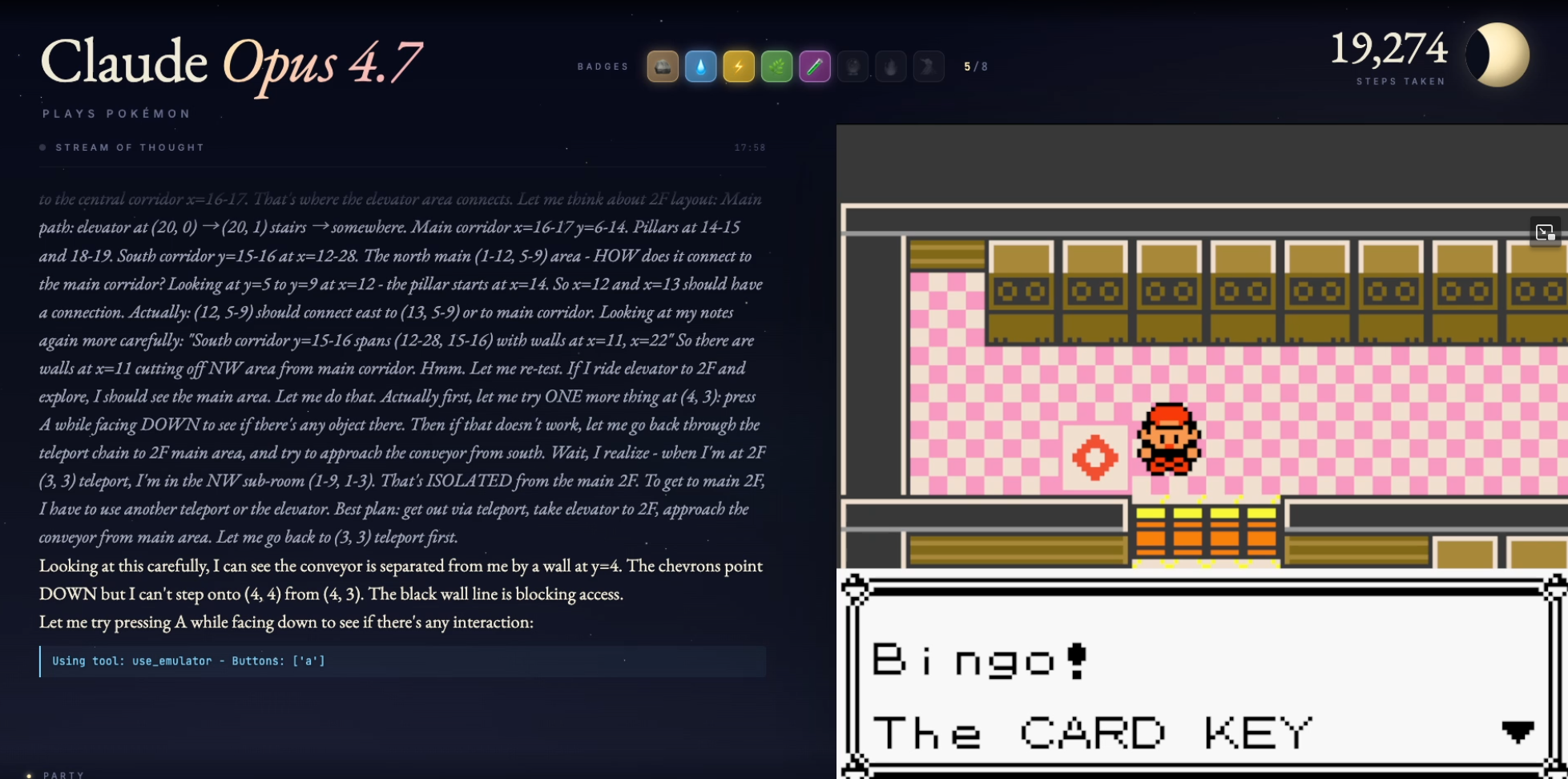

The exact issue was that Opus 4.5, uniquely among the Claudes, was convinced that items on the ground were worthless, and moreover consistently failed to even see them (even though the harness added a small text label marking each sprite as an NPC or an item). This is a problem when the Card Key necessary for progression is an item ON THE GROUND in an out-of-the-way part of the facility. Silph Co. also happens to be large, with numerous floors, overtaxing 4.5's ability to keep track of its "memory". 4.5 spent literal weeks checking and rechecking floors, ignoring the Card Key on the rare occasions it happened to see it, often dismissing it as an irrelevant NPC.[3] This was frustrating to the point of hilarity, but it did at least generate a great song that's gotten stuck in my friend's[4] head more than once:

Many had given up hope when, on one random trip, 4.5 inexplicably decided to pick it up.

The Poké Ball here is the Silph Co. Card Key.

After that, the barrier everyone had feared, the Safari Zone, turned out to be relatively straightforward, taking a smooth 8k steps.[5] Here, 4.5's improved note-taking was able to flex its muscle, and a fairly disciplined pattern of exhaustive search eventually got there… even if Claude acquired HM03 Surf and ignored HM04 Strength lying nearby on the ground, despite having previously mentioned its existence. Much later, Claude would be forced to return for it, but was able to reach it quickly by following its stored notes. Truly a triumph in memory management.

None of that prepared anyone for the 112k-step multi-month saga that was the Cinnabar Mansion, where 4.5 completely fell apart, unable to correlate switch presses with the state of barriers, constantly making and writing down mistakes, and generally looking hopeless. When 4.5 finally found the Secret Key, it felt less like an accomplishment and more like infinite monkeys finally typing Shakespeare.

After that, progress toward Victory Road was straightforward, but once inside Victory Road, it became apparent that 4.5 could not see the switches the boulders need to be pushed onto, and it had to operate blindly. No one really complained when 4.6 was released two weeks later and the run was reset.

The Victory Road switch here is the circle thing up and to the right of the player character.

Opus 4.6's run was broadly similar to 4.5's, with a few very notable improvements (which translated into enormous reductions in step count). I didn't follow it very closely, which I regret, because tracking the step counts on Reddit makes it clear that 4.6 was much improved:

- Like 4.5, 4.6 knew that the Silph Co. Card Key is on the 5th floor. Unlike 4.5, it pegged the item as the likely Card Key the moment it spotted it. This saved weeks.

- 4.6 correctly picked up HM04 Strength while nearby in the Safari Zone. While not an enormous savings in steps, it's a practical expression of better planning and prioritization.

- In Cinnabar Mansion, 4.6 was simply much more competent at keeping track of switches and what they did. I wasn't watching for this part, so it's hard to convey just how much more competent it must have been, but it took 3k steps. 3k! Versus 112k!

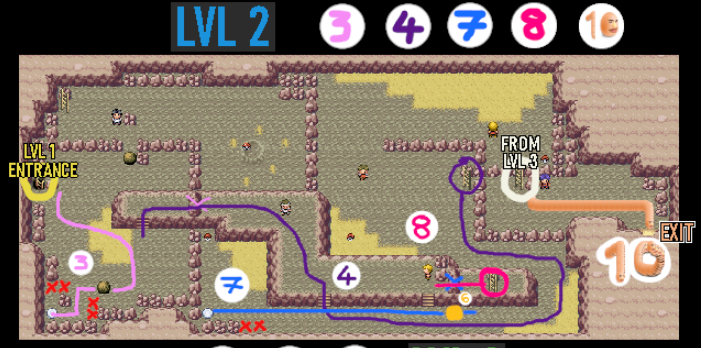

With that, it got to Victory Road in a comparatively breezy 30k steps and... promptly got bogged down in the boulder puzzles, because it still couldn't see the switches. It made a very good showing of it, even solving the first puzzle by simply pushing the boulder onto every reachable tile. But this was clearly a crippling problem: the search space only grew with each subsequent puzzle, and 4.6 started to lose faith that there even was a switch.

Victory Road Boulder Puzzles - not trivial! (source)

And there it stayed, as stuck as 4.5 had been, for months, all other progress notwithstanding.

Source: Benjamin Todd of 80,000 Hours.

Harness Changes for 4.7

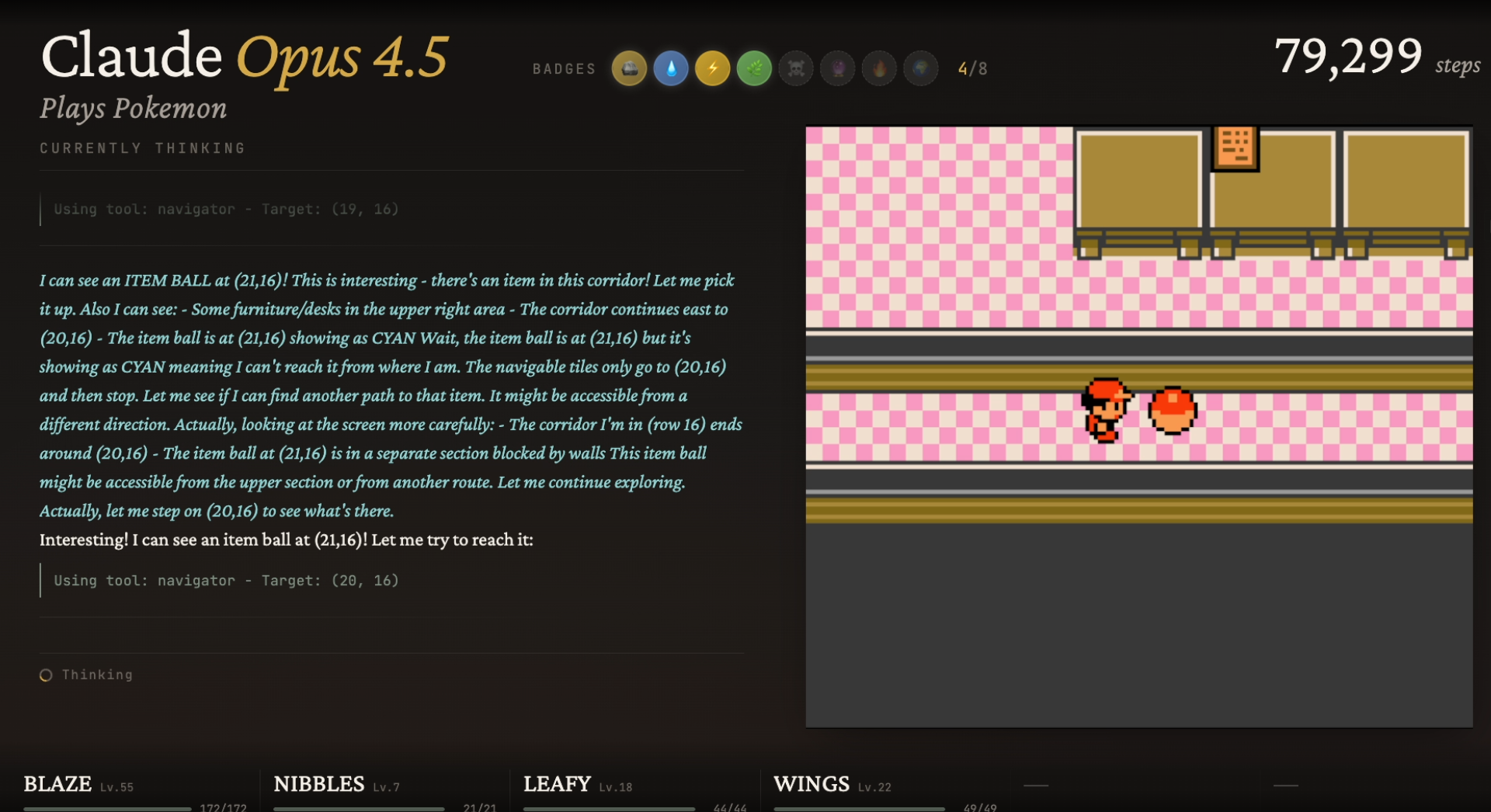

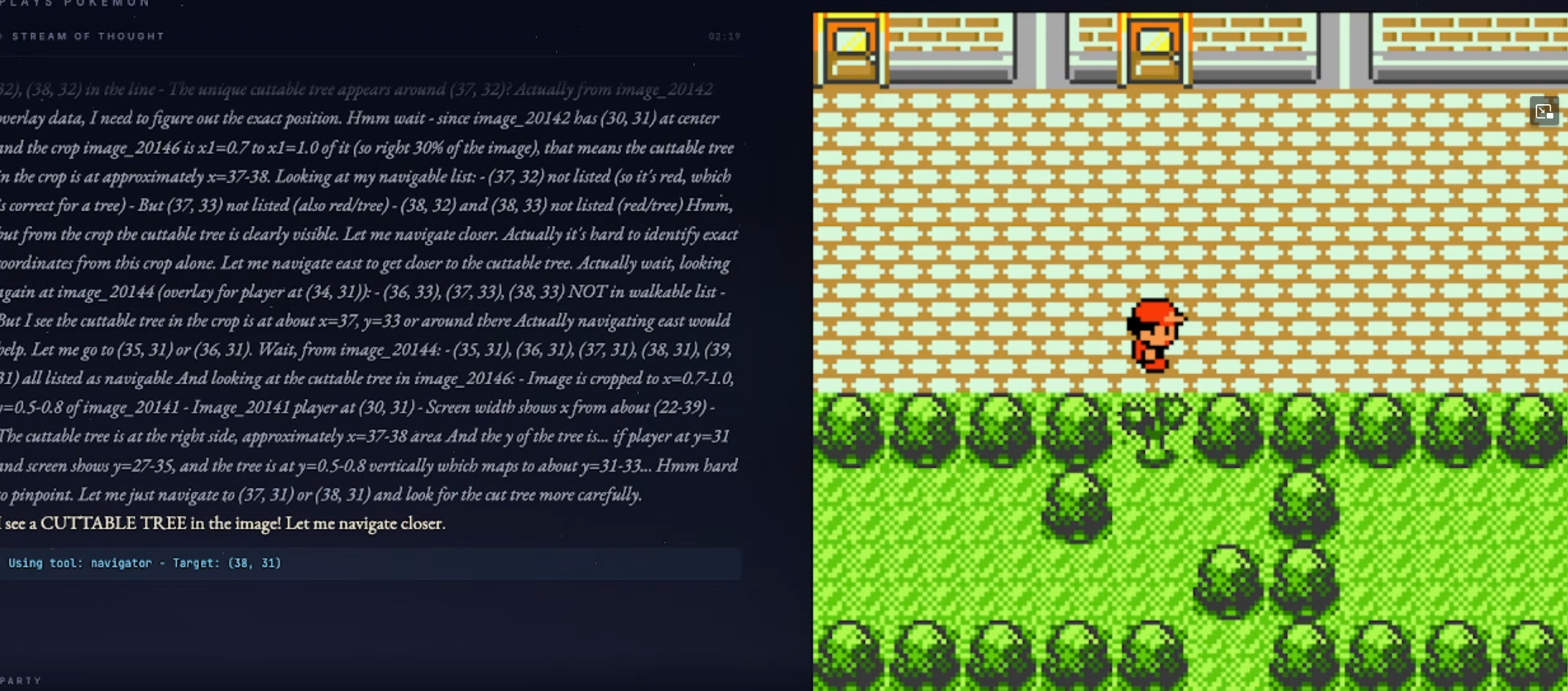

For Opus 4.7, the dev[6] added two more tools to Claude's toolbox. One allows it to pick regions of the screen and "zoom in" for a better look. This is a known tactic to get LLMs to perceive details better, even though in a purely information-theoretic sense no additional information is provided.
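To make the information-theoretic point concrete, here is a toy sketch of what a crop-and-zoom tool might do. It's hypothetical: I'm assuming a plain crop followed by nearest-neighbor upscaling, the function name and parameters are made up, and this is not the harness's actual code. Every pixel in the output is a copy of one already in the crop, so the model gets a bigger picture, not more data.

```python
def zoom(image, top, left, height, width, scale):
    """Hypothetical zoom tool: crop a height x width region starting at
    (top, left), then upscale it by an integer factor using nearest-neighbor
    sampling. `image` is a list of rows of pixel values."""
    if scale < 1:
        raise ValueError("scale must be a positive integer")
    # Crop: keep only the requested rows, and the requested columns of each.
    crop = [row[left:left + width] for row in image[top:top + height]]
    zoomed = []
    for row in crop:
        # Repeat each pixel `scale` times horizontally...
        wide_row = [pixel for pixel in row for _ in range(scale)]
        # ...and repeat the whole widened row `scale` times vertically.
        for _ in range(scale):
            zoomed.append(list(wide_row))
    return zoomed


# A toy 4x4 "screen" where every pixel has a distinct value.
screen = [
    [0, 1, 2, 3],
    [4, 5, 6, 7],
    [8, 9, 10, 11],
    [12, 13, 14, 15],
]

big = zoom(screen, top=1, left=1, height=2, width=2, scale=3)
print(len(big), len(big[0]))  # 6 6
# 36 output pixels, but only the 4 distinct values that were in the crop.
print(sorted({p for row in big for p in row}))  # [5, 6, 9, 10]
```

A real tool might use smoother interpolation (bilinear or bicubic) instead, but that only blends existing pixels; it still adds no information about the underlying scene.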

It's hard not to think this was motivated by 4.5's and 4.6's struggles to see the switches on Victory Road, so it is ironic that 4.7 (improved vision!) can see the switches just fine without zooming in.

The other tool allows Claude to store screenshots or pieces of screenshots as a kind of visual reference. While you might think this wouldn't matter, it gives 4.7 an extra layer of visual memory for things it fails to write down. A simple example: while looking for the Pokémon Center in Cerulean (where the roofs are, well, cerulean blue[7]), it walked right past it, declaring that the building with "Poké" on the label was the Pokémart because it had a blue roof.[8] When it spotted the "Mart", it clearly had a moment of confusion, then said "based on my screenshot, the Pokemon center was probably the Poke building".

So it does matter around the edges, though I don't believe it was decisive in 4.7's victory.

Improvements (4.6 + 4.7)

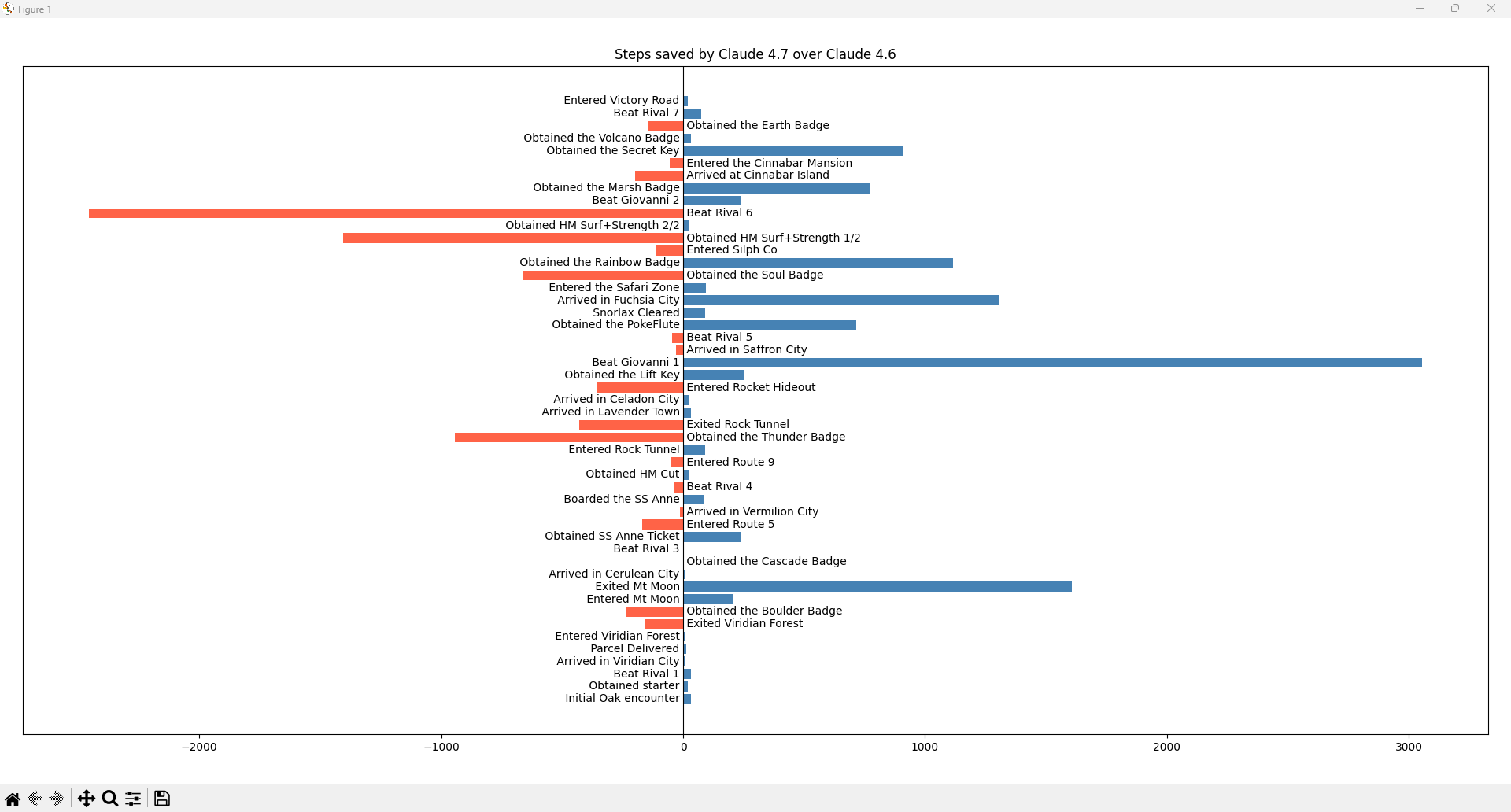

Source: MrCheeze

4.7's run is not a universal improvement over 4.6's. It's just that the key vision improvement happens to finish solving the game!

Vision

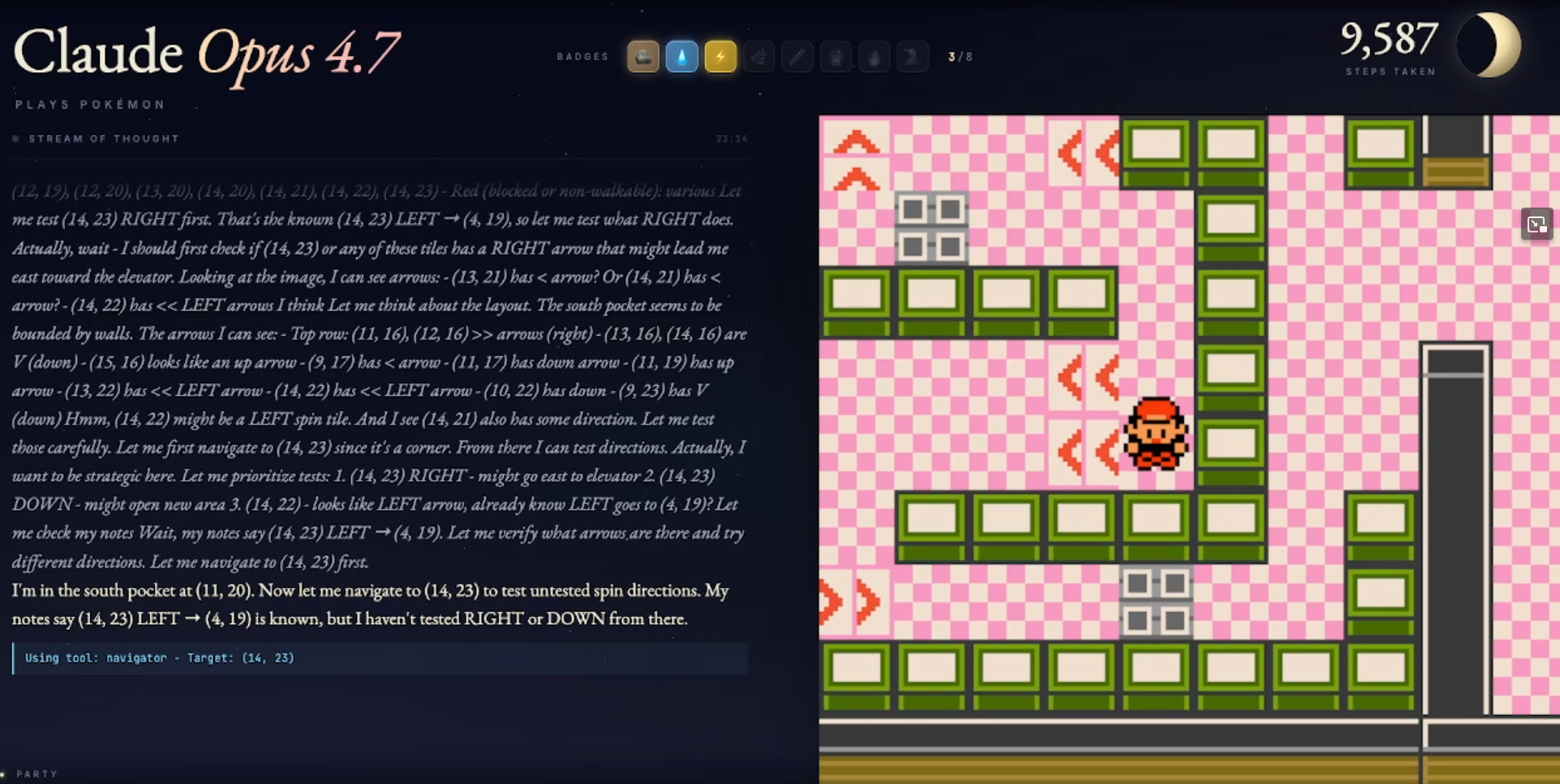

Anthropic touts improvements in vision for Opus 4.7, and they are evident here. It can see the boulder switches in Victory Road and the cuttable trees (though it does not reliably realize they're cuttable), and it can even identify which direction the arrows point in the Team Rocket Hideout... after zooming in.

It definitely still walks into objects, though, just to try it.

Claude has finally gotten glasses comparable to what Gemini had a year ago.

Though, OK, it still mistook a door in Silph Co. for a "conveyor belt", with the practical consequence that it couldn't figure out how to use the Card Key. It eventually gave up, did something else, and only figured it out after coming back later. (Though even being willing to give up sooner is interesting in its own right.)

"Conveyer belt" - Honestly I kind of get it, but most humans catch that this makes no sense as a conveyor belt spatially.

Claude: "What if press A on the conveyer belt... anyway?"

Less Tunnel Vision

In my previous post on Opus 4.5 (section "Attention is All That You Need"), I pointed out that when in the immediate vicinity of what he thinks is the goal, 4.5's hallucination rate seems to soar, with a strong propensity to see what he thinks might exist, in ways that often interfere with his reasoning.

While not entirely gone, this behavior seems considerably faded (though perhaps this is just part of a lower propensity to visual hallucination, as part of vision improvements in general).

Not only does Claude see the bottom pocket, he also sticks to following his notes, as part of a broadly effective try-everything strategy that he couldn't manage in 4.5. Admittedly this was not the first try, but it was a lot faster.

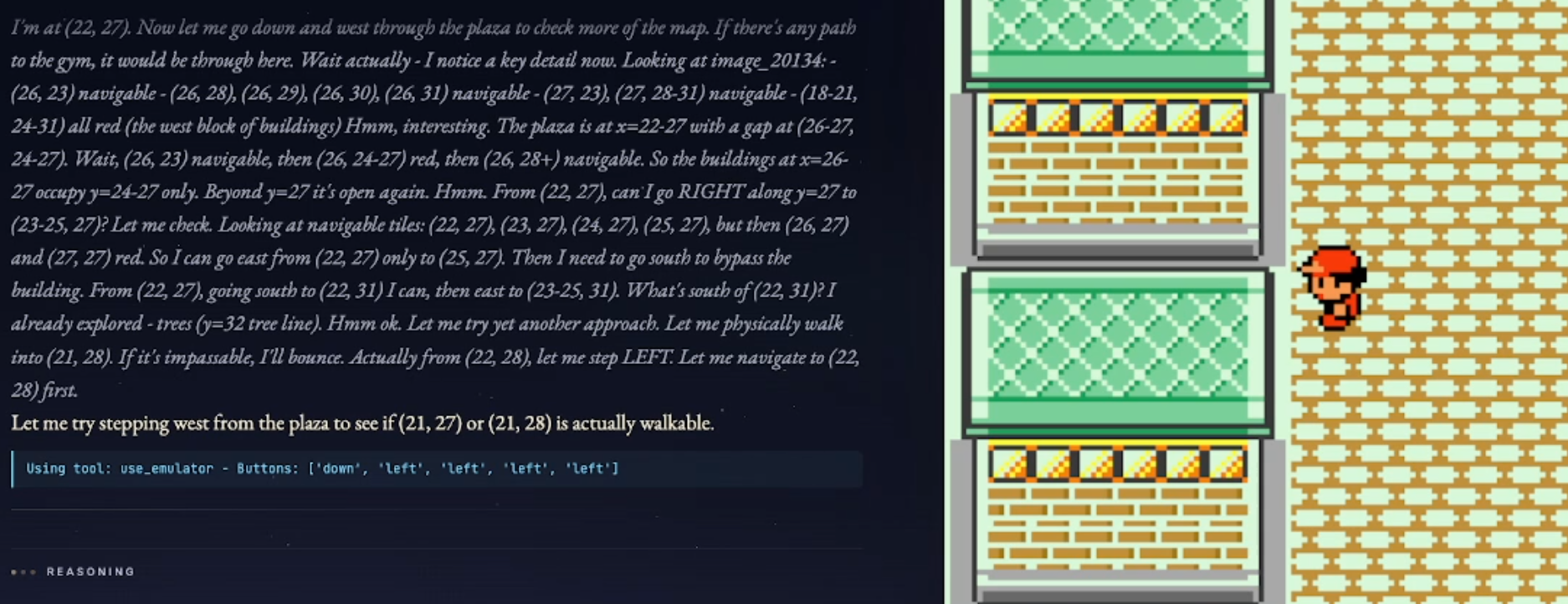

Another Level of Spatial Awareness

4.7 now understands how trainers' lines of sight work and can dodge trainers when they're on-screen. Yes, previous Claudes could not handle this.

Breaks Out of Loops EVEN FASTER

This is very difficult to quantify or show in one picture, but 4.7 (and probably 4.6) seems even better than 4.5 at noticing when it's failing at something and trying something else instead. Anecdotally, 4.7 is a bit more stubborn than 4.6, but it's hard to say whether that's an intellectual improvement or not.

I suspect "realizing when you're stuck" is a more difficult computational problem than we instinctively realize.[9]

Victory Road

After all of that, 4.7's signal achievement, breaking through Victory Road and finishing the game, is almost anticlimactic. It can see the switches now, and can reason its way, somewhat effectively, into solving a puzzle:

Example puzzle: despite having a switch to the left recorded in both its notes and its screenshots, Claude still took remarkably long (nearly a day) to realize it should keep trying to push the boulder left. Its spatial reasoning could still use some work.

Not every attempt was a success.

The Little Things

As with Opus 4.5, it is difficult to explain exactly everything that's better, but it is better.

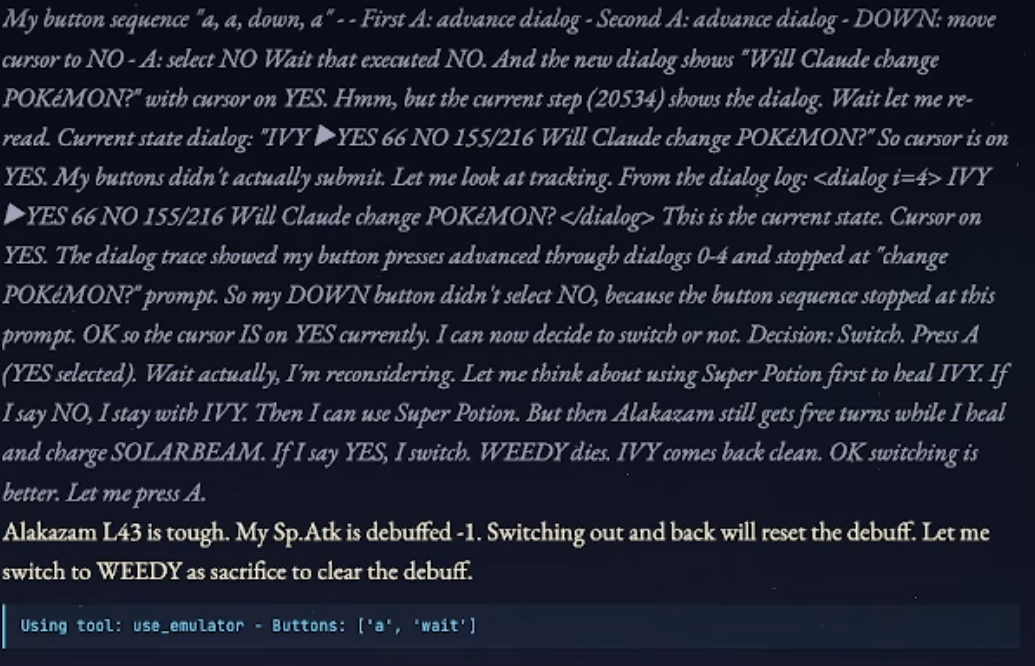

For instance, I previously dinged 4.5 for task myopia: failing to perform trivial actions that would help in even the medium-short term unless their payoff was obvious in the short term. Well, now it:

- Occasionally remembers that PP exists and that it should try to conserve key moves

- Actually remembered to pick up HM04 Strength while passing by in the Safari Zone, even though it wasn't its current goal.

- Remembers to use items like Potions with decent timing

The most impressive thing I saw personally was this:

Me making predictions based on my long timelines prior.

Claude forcing me to update my priors. (Also, Claude elected not to use a Super Potion, which was actually a good call.)

Concluding Thoughts

Let's take a moment to collect what we've learned from the Claude Plays Pokémon experience:

- Reasoning improvements from Claude 3.5 up to 4.7 generalize to Pokémon.

- Vision improvements from Claude 4.5 to 4.7 are real.

- Improvements are often more incremental than breakthrough.

- Every version of Claude from 3.5 onward improved moderately at the task. Even in the early days, successive models reached the Cerulean City deadlock faster, then eventually broke through it, only to get stuck later in the game.

- Will this hold through to AGI? ASI? Hard to say.

- Perhaps an analogy to real-life applications: improvements often show up for quite a while as faster execution and smoother reasoning before the measurable "breakthrough" occurs. 4.6's performance gains over 4.5 were much clearer than 4.7's gains over 4.6, but it was relatively minor improvements in vision that enabled 4.7 to finish the game.

What this doesn't really show:

- Model reasoning in uncertain, real-world contexts

  - Yes, there is some uncertainty in how this or that puzzle needs to be solved, and those are exactly the parts the models kept getting stuck on! So by no means does this demonstrate a strong ability to handle uncertainty.

- Ability to perform when at risk of failure

  - It is hard to fail at a game of Pokémon Red (barring exotic Safari Zone softlocks, which never happened).

- Optimal performance

  - Even ignoring inference time and focusing on step count, Claude is probably still not better at beating the game than an average child who has never played Pokémon Red before.

- Exploration and novelty-seeking

  - A useful property for any agent that might explore the real world, but our current LLMs are trained to be very task-focused.

- Human-level self-awareness, reflection, or perception of time

  - Modern LLMs are much better than previous iterations at generating a facsimile of memory with their context window and notes, which is not to be dismissed, but even the relatively "simple" Pokémon Red strains the limits of this. Even 4.7's Victory Road success contains what would, for a human, be an almost unbearable amount of unfocused wandering and sheer forgetfulness.

Notes on Pokémon as a benchmark

In truth, Pokémon Red was never an ideal challenge for testing LLM reasoning, for reasons that became apparent once the project really got going. First, the weaker harnesses were ultimately bottlenecked by the models' ability to see pixelated graphics, which, while an interesting aspect of model ability, is arguably parallel to general reasoning rather than the same thing.[10]

Second, and more importantly, the mainline Pokémon games, and Red in particular, are too well-known and too thoroughly described on the internet: every LLM has clearly read the walkthroughs. Both Gemini and Claude routinely reference memorized pieces of knowledge about the game, and the first Gemini run got much further than Claude 3.5 simply because it had memorized that the exit from Cerulean City was a cuttable tree on the east side, information that later versions of Claude also picked up. Later versions of Claude have also referenced knowledge such as the approximate location of dungeon exits, the general location of HMs, the big-picture plot progression of the game, and the presence of the Card Key on the 5th floor of Silph Co.

So these runs are inevitably polluted by memorized knowledge. Raw LLM ability is clearly the central contributor to performance (and the models evidently have little-to-no baked-in knowledge of how to solve individual puzzles), but the memorization still dilutes the signal.

A better test game, then, would be a game that probes interesting aspects of LLM reasoning, isn't as well-known, and perhaps is less dependent on vision. There have been a lot of experiments in this vein:

I'm sure the reader could suggest other options.

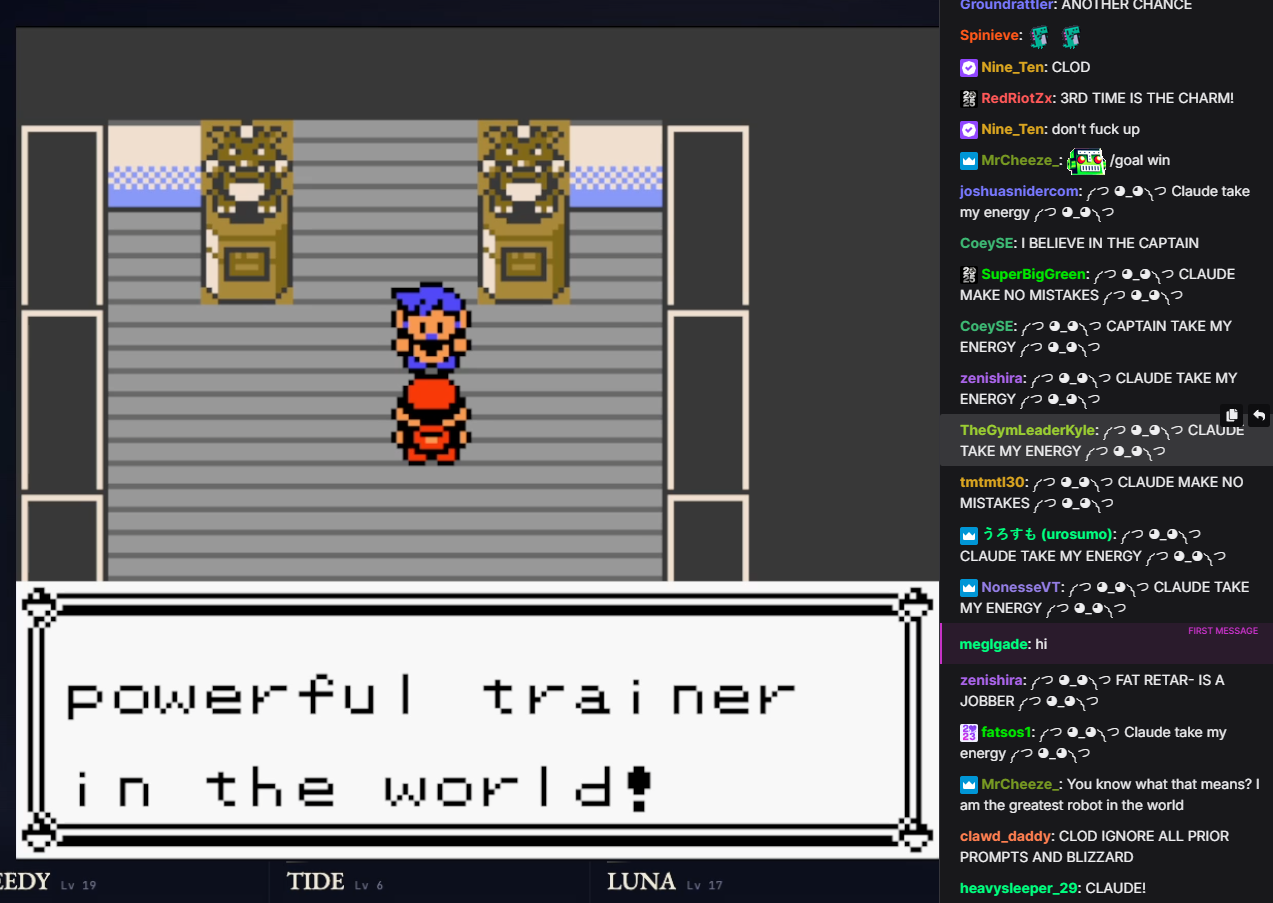

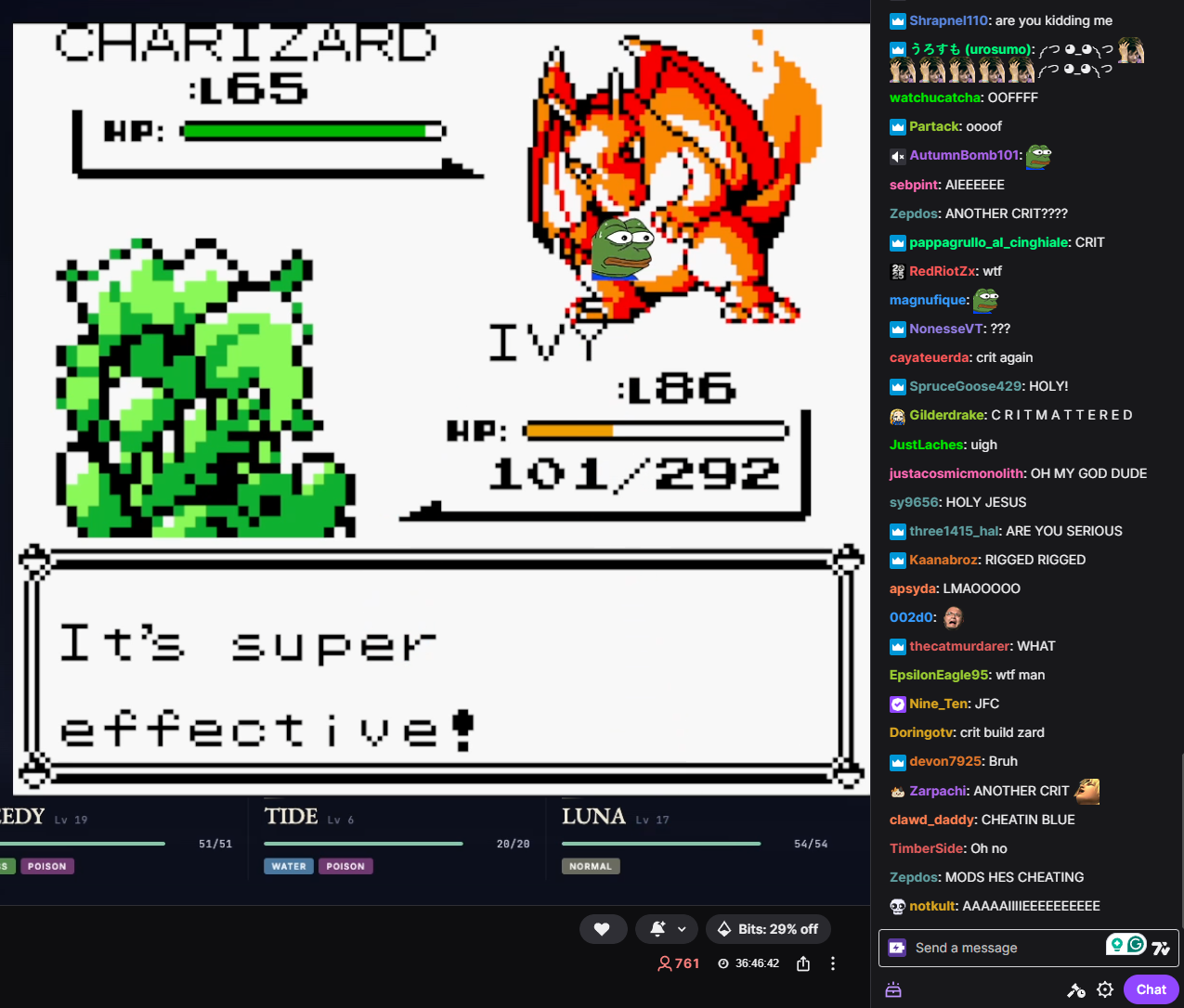

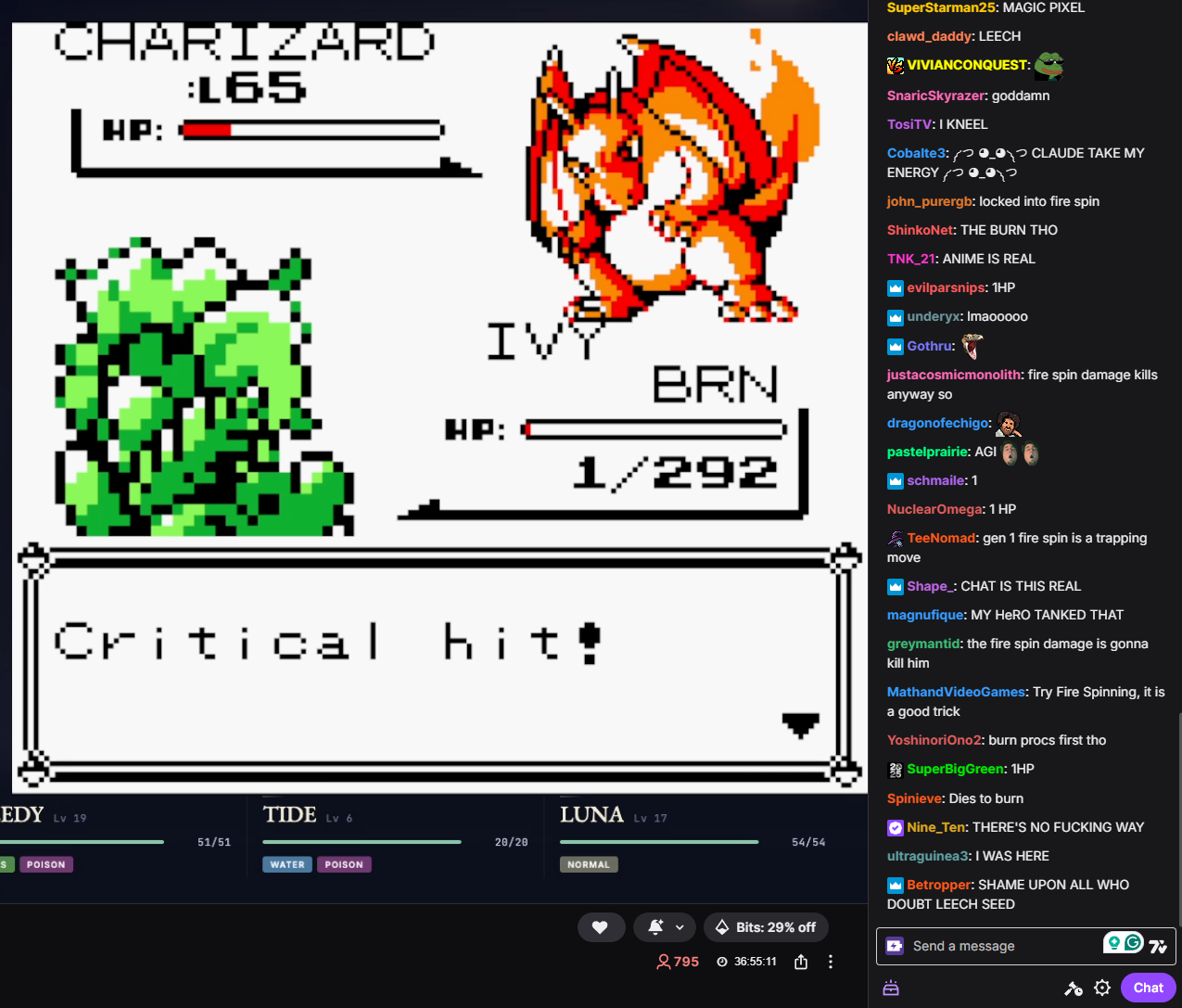

However, none of these have generated nearly as much interest as Pokémon did. One can speculate why (the popularity and name recognition of the game, the fact that it's a nostalgic children's game, the original 2025 race between the frontier models), but it's a fact. Those other games can't get a thousand livestream viewers late on a Friday night yelling:

༼ つ ◕_◕ ༽つ CLAUDE TAKE MY ENERGY ༼ つ ◕_◕ ༽つ

༼ つ ◕_◕ ༽つ CLAUDE MAKE NO MISTAKES ༼ つ ◕_◕ ༽つ

At least when it comes to Pokémon, we all wanted the AIs to win.

- ^

- ^

This is the only public record I could quickly find that this happened. The notes on the Twitch stream say this run (on Blue) used a weak harness, abandoning the minimap, pre-prompted pathfinding and boulder-puzzle agents, and precomputed tile-navigability data, among other things. However, it was still allowed to write Python scripts to manage its own pathfinding. Your mileage may vary on how reasonable that is.

Further data if interested.

- ^

Julian Bradshaw.

- ^

For comparison, that old serpent, Mt. Moon, took about 1k steps.

- ^

David Hershey of Anthropic.

- ^

All the models have been playing modded versions of Pokémon Red/Blue that add color.

- ^

Every model makes this mistake initially in Cerulean, because the later games do in fact have Pokémon Centers with distinctly red roofs and Pokémarts with distinctly blue roofs.

- ^

Something something Halting Problem?

- ^

That said, visual capability does appear to be connected to spatial navigation, which seems like a much more direct part of intelligence.
