FROM EXPERIENCE TO FRAMEWORK: Part 1 of a 2-part series on an AI Framework for Higher Education Institutions (HEIs)

A Candid Guide to Gen-AI Readiness for Decision-Makers in Higher Education

Gen-AI is not coming to South African universities; it is already here. Students are using it. Some staff are using it. And in many institutions, nobody at the top has a clear policy for any of it. The question facing every decision-maker (Vice-Chancellor, DVC, or Council member) right now is not whether to adopt Gen-AI. It is whether your institution will be prepared for its adoption or simply react to it.

If I were to ask you this question:

“How does your university prepare students for a world where AI does the thinking?”

Do you have a compelling answer? This is not a technology question. It is a question of institutional relevance, academic credibility, and fiduciary responsibility. Universities sit at a unique intersection of opportunity and vulnerability. They have world-class research ambitions, deep transformation imperatives, and a regulatory environment shaped by data protection laws. Gen-AI will either amplify our mission or quietly erode it. The difference is readiness.

The Importance of Readiness

There is a temptation in executive circles to conflate speed with leadership. In the AI space, that instinct can be costly. Readiness is not about being first — it is about being intentional. An institution that rushes into Gen-AI tooling without a proper governance foundation is not innovative; it is exposed.

AI readiness means your institution can answer five questions with confidence:

- Who is responsible?

- What data is at risk?

- Are your people equipped?

- How do you measure success?

- And — critically — are you compliant with statutory requirements and your own institutional ethics commitments?

If you cannot answer all five, it’s safe to say you are not ready.

“The institutions that will lead in this space are not necessarily the fastest — they are the most deliberate.” — Chris

That said, let me be blunt about the cost of inaction. A “wait and see” approach is not neutral; it is a decision that comes with its own consequences.

What Happens When You Are Not Ready?

Unmanaged AI adoption creates institutional liability on multiple fronts simultaneously. The risks compound each other — and they arrive faster than most executive teams expect.

First, you will lose control of the narrative on campus. Faculty are already using some variation of GPT to draft emails and feedback. Students are using it to summarise readings, brainstorm and, yes, occasionally to cheat. If there is no clear institutional stance, then you cannot promote responsible AI use. This creates Shadow AI — unsanctioned, unmonitored, and often unsafe use of tools that place institutional data at risk.

Second, your assessment integrity will collapse silently. We are already seeing the first wave of assessment redesign globally. Institutions that delay this conversation will find their degrees devalued by employers who can no longer distinguish between a graduate who can think critically and one who can prompt effectively. In the highly competitive education space, where the value of a degree is often linked to public perception of the university’s brand, this is a reputational risk no institution can afford to ignore.

Third, the digital divide will widen. If Gen-AI tools remain the preserve of students who can afford premium subscriptions and high-end devices, we will have failed to provide equitable resources to all students. Readiness includes ensuring that access to AI literacy is universal, not premium.

Finally, the regulatory risk is real. Data protection laws are not to be regarded as suggestions. If a staff member pastes students’ personal information into a public AI tool that trains on that data, your institution has just experienced a data breach. Regulators will not accept “we didn’t have a policy” as a defence.

Beyond the obvious concerns, there are specific risks that decision-makers must understand well enough to govern, not just delegate. They include:

i. Hallucinations and Misinformation: Gen-AI tools are designed to be plausible, not necessarily accurate. These models can produce confident, fluent, incorrect outputs. A student citing an AI-generated case reference that does not exist is an academic integrity failure. A policy brief built on hallucinated statistics is a reputational failure.

ii. Data Privacy Violations: When staff or students input institutional, student, or research data into commercial AI platforms, that data may be processed and stored offshore, potentially violating data privacy and sovereignty restrictions on cross-border transfers. This is not a hypothetical. It is already happening at most institutions.

iii. Unplanned and Escalating Costs: The pricing models for enterprise AI are complex. They often involve token-based billing, where you pay, in effect, for every word processed. A seemingly “free” pilot that gets adopted by 2,000 staff can suddenly generate a six-figure monthly bill if not governed by a clear procurement and usage framework. Moreover, initial offerings are often subsidised, and the true long-term costs tend to be higher. Financial sustainability must be part of the readiness conversation from day one.

iv. Bias Amplification: Most large language models are trained predominantly on English-language internet data. They are less fluent in other languages and dialects. They may also reflect Western cultural assumptions. If we deploy these tools uncritically, we risk creating a two-tier system where English-first students accelerate while others struggle.

v. Staff Displacement Anxiety: Poorly communicated AI rollouts generate fear. When staff believe AI is being introduced to replace them rather than support them, resistance follows. That resistance is not irrational; it reflects a failure of change management.

vi. The “Going Rogue” Concern: While the Hollywood version of AI sentience is not the immediate threat, the operational version is. This includes prompt injection attacks, where a malicious actor tricks your internal chatbot into revealing private data or bypassing its safety guardrails. If you integrate AI into your Student Information System, you must ensure the connection is secure. This is a cyber-risk issue, not a sci-fi issue.

vii. Erosion of Academic Trust: Without clear AI disclosure policies and assessment redesign, the line between student work and AI-generated content becomes unenforceable. This does not just affect individual grades; it undermines the credibility of your institution’s qualifications.
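
To make the token-based billing risk described under Unplanned and Escalating Costs concrete, here is a minimal back-of-the-envelope sketch in Python. Every figure in it is a hypothetical assumption chosen for illustration (user counts, requests per day, tokens per request, and the price per million tokens in an unspecified currency); none comes from a real vendor quote, and the names `UsageAssumptions` and `estimate_monthly_cost` are invented for this example.

```python
from dataclasses import dataclass


@dataclass
class UsageAssumptions:
    """Hypothetical inputs. Replace every value with figures from your own vendor quote."""
    active_users: int                 # staff actively using the tool
    requests_per_user_per_day: int    # average prompts sent per person per day
    tokens_per_request: int           # prompt plus response tokens per request
    working_days_per_month: int
    price_per_million_tokens: float   # in your billing currency (assumed, not a real price)


def estimate_monthly_cost(usage: UsageAssumptions) -> float:
    """Multiply usage out to monthly tokens, then apply the per-million-token price."""
    monthly_tokens = (
        usage.active_users
        * usage.requests_per_user_per_day
        * usage.tokens_per_request
        * usage.working_days_per_month
    )
    return monthly_tokens / 1_000_000 * usage.price_per_million_tokens


# Same per-person behaviour in both scenarios; only the number of users changes.
pilot = UsageAssumptions(
    active_users=50,
    requests_per_user_per_day=20,
    tokens_per_request=2_000,
    working_days_per_month=22,
    price_per_million_tokens=100.0,
)
rollout = UsageAssumptions(
    active_users=2_000,
    requests_per_user_per_day=20,
    tokens_per_request=2_000,
    working_days_per_month=22,
    price_per_million_tokens=100.0,
)

print(f"Pilot:   {estimate_monthly_cost(pilot):,.0f} per month")    # Pilot:   4,400 per month
print(f"Rollout: {estimate_monthly_cost(rollout):,.0f} per month")  # Rollout: 176,000 per month
```

Under these assumed numbers, a pilot that looks negligible at 50 users becomes a six-figure monthly line item at 2,000 users without anyone changing how they work. The point of the sketch is the shape of the calculation, not the specific totals: cost scales linearly with adoption, so a usage cap or budget alert needs to exist before the rollout, not after the first invoice.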

BETTER PLANNING: A Practical Starting Point

You do not need to have all the answers. But you do need a structure for asking the right questions. This is where a strategic framework becomes essential.

The goal is not to create bureaucracy; it is to create clarity and confidence.

Here is my strongest piece of advice: Do not delegate Gen-AI strategy to IT alone. This is an institutional strategy challenge. An IT-led approach will solve the technical plumbing but miss the cultural and pedagogical transformation. AI adoption is a governance exercise first, a people exercise second, and a technology exercise third. If that order is inverted, the consequences described above become likely rather than merely possible.

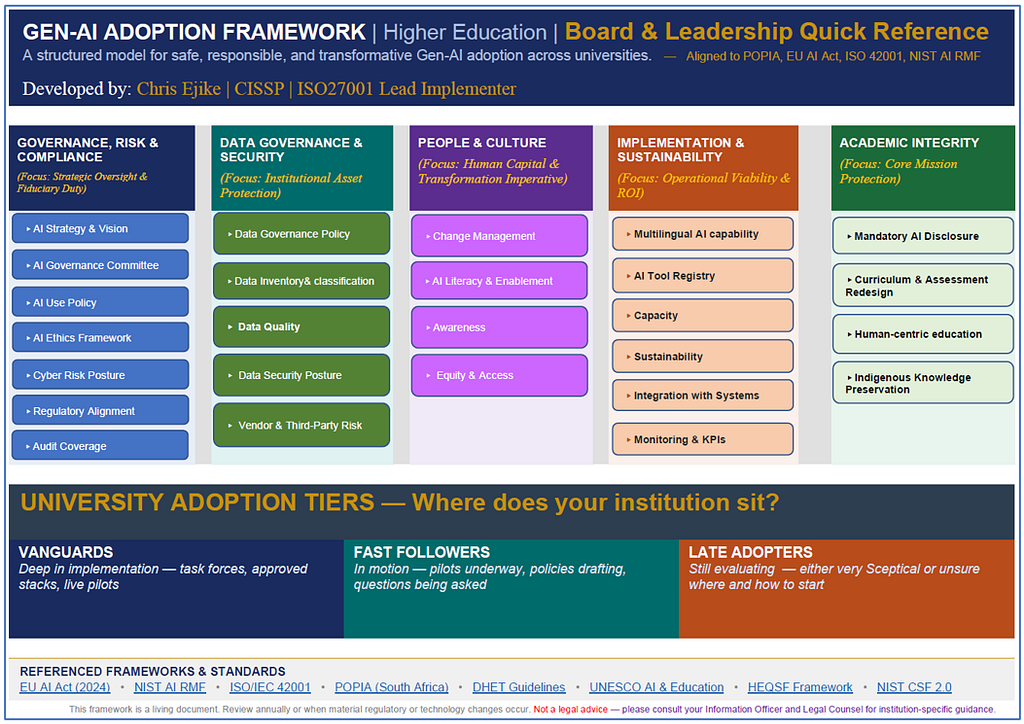

The framework I have developed for universities is built around five strategic pillars. These are the areas that, at a minimum, your executive team must have a shared understanding of before you approve any significant Gen-AI investment or pilot programme.

The Framework Matrix — Five Pillars You Cannot Afford To Skip

The framework is organised around five pillars. Each addresses a distinct dimension of institutional readiness.

A FINAL WORD

The higher education sector has navigated immense challenges before. We have adapted to new funding models, responded to student movements, and pivoted through a pandemic.

Gen-AI is another wave of change, but it is one we can shape if we approach it with intention, courage, and a clear framework.

Readiness is not about having perfect answers. It is about having the right conversation at the right table with the right people.

Are You AI-Ready? was originally published in Towards AI on Medium.