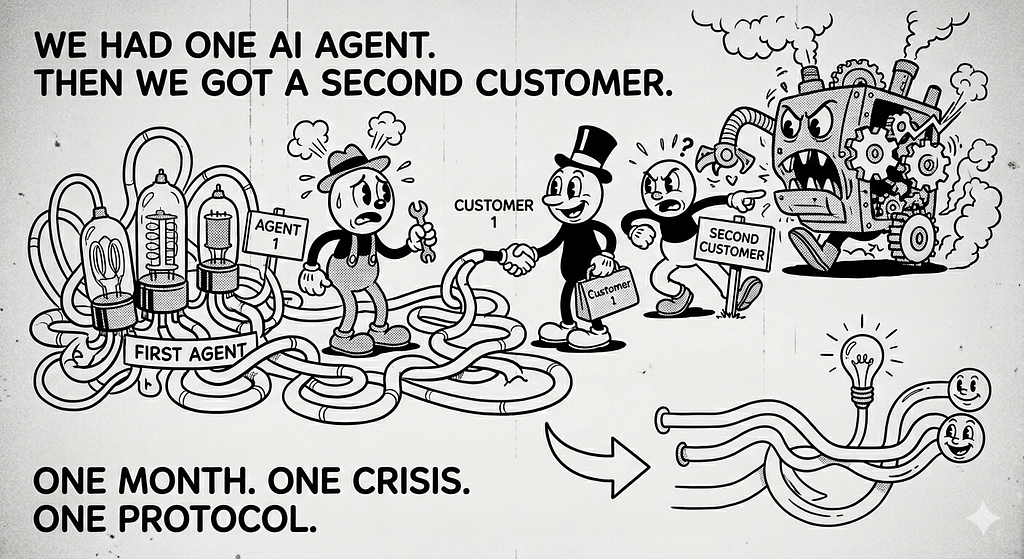

We Had One Brilliant AI Agent. Then We Got a Second Customer — and Almost Made the Worst Engineering Decision of the Year.

One month of research. One architectural crisis. One protocol that changed how I think about building agentic systems. This is what I learned — and what nobody tells you about A2A.

I want to start with the mistake we almost made.

Three months ago, our team had just finished something we were genuinely proud of a fully agentic workflow for a logistics client. It wasn’t just a chatbot. It was a multi-step reasoning system: it ingested shipment data, detected anomalies, cross-referenced supplier contracts, drafted escalation emails, and handed off to a human only when it had to. Weeks of iteration. Real business value. The client loved it.

Then we got a second customer in the same industry.

And our first instinct — I’ll be honest — was to copy the whole thing.

Duplicate the repo. Swap the credentials. Adjust a few prompts. Ship it. Done.

My lead engineer stopped us. He said something I keep thinking about:

“If we copy it now, we’ll be maintaining two versions of the same brain forever. What happens when we get a third customer? A fifth?”

He was right. And that question — how do you build one agentic system that works for many customers without duplicating everything — is what sent me down a month-long research spiral into the Agent2Agent (A2A) Protocol.

This is Part 1 of what I found. Not a tutorial. A real account of the problem, the protocol, the tradeoffs, and the gaps that no Medium article talked about.

The Architecture We Had vs. The Architecture We Needed

Here’s what our original setup looked like:

Customer A

└── Dedicated Agent Stack

├── Ingestion Agent (their data format)

├── Anomaly Detection Agent

├── Contract Lookup Agent

└── Escalation Drafting Agent

Everything hardwired. Every agent knew about every other agent. The “integration” was a pile of shared Python functions and a Google Pub/Sub topic with no documentation.

When the second customer arrived, what we actually needed was this:

Customer A ──┐

├──→ Orchestrator Agent

Customer B ──┘ │

├──→ Ingestion Agent (handles both formats)

├──→ Anomaly Detection Agent (shared)

├──→ Contract Lookup Agent (shared)

└──→ Escalation Drafting Agent (shared)

Sounds simple. But here’s the brutal reality nobody talks about: there was no standard way for these agents to discover each other, hand off tasks, or return typed results — especially across different frameworks and teams.

Our ingestion agent was on LangGraph. The anomaly detection agent? Built by a different team on Semantic Kernel. The contract lookup was a vendor-supplied CrewAI agent. None of them spoke the same language.

Every integration was a custom handshake written at 11pm by someone who no longer worked there.

That’s the problem A2A was designed to solve.

What is A2A — Actually?

Agent2Agent (A2A) is an open protocol, announced by Google in April 2025 and now governed by the Linux Foundation (so no single vendor owns it). It was co-developed with 50+ technology partners, which means it’s not a research paper — it reflects real production needs.

It standardizes three things between agents:

- Discovery — how one agent finds another and learns what it can do

- Delegation — how one agent hands off a task to another

- Result exchange — how the receiving agent returns typed, structured output

Here’s the critical part: A2A does not touch what’s inside your agent. It doesn’t care about your prompts, your memory, your tools, or your reasoning chain. It only governs the boundary — the interface between agents.

Think of it as REST for the agentic layer. REST doesn’t care if you’re running FastAPI or Express. A2A doesn’t care if you’re running LangGraph or CrewAI. It only cares about what comes in and what goes out.

For us, this was the insight that changed everything.

How A2A Actually Works (Without the Marketing Fluff)

The protocol is built entirely on infrastructure you already have: HTTP(S), JSON-RPC 2.0, and Server-Sent Events (SSE). No proprietary SDKs. No new message brokers. No vendor lock-in.

Let me walk through the four concepts that matter.

1. The Agent Card — Your Agent’s Public Identity

Every A2A-compliant agent exposes a JSON document at:

GET /.well-known/agent.json

This is the Agent Card. It’s like a business card, except it’s machine-readable and your orchestrator consumes it at runtime. It declares:

- The agent’s name, description, and version

- The skills it offers — what it can do

- The authentication schemes it requires (OAuth2, OIDC, API Key, mTLS)

- The endpoint URL where tasks are accepted

No Agent Card = not A2A compliant. It’s the entry point for everything.

In our case, this meant our shared Anomaly Detection Agent could now advertise itself. The orchestrator didn’t need hardcoded knowledge of it. It just read the card.

2. Skills — The Capabilities an Agent Offers

A skill is a named, discrete capability the agent exposes. Each skill has:

- A unique id

- Supported inputModes and outputModes (text, file, structured data)

- Optional examples to guide callers

Skills are how an orchestrator agent decides which downstream agent to call — without reading documentation, without hardcoded routing logic, without a developer in the loop.

Our anomaly detection agent declared one skill: detect_shipment_anomalies. Input: structured JSON. Output: structured JSON. Every orchestrator, regardless of which customer it served, could discover and invoke it the same way.

3. Tasks — The Unit of Work

Once a client agent reads the Agent Card and decides to engage, it sends a task:

POST /message/send

A task moves through a clean lifecycle:

submitted → working → completed

↘ failed

↘ cancelled

For long-running work (and anomaly detection over thousands of shipment records is long-running), the server streams real-time updates back via SSE:

- TaskStatusUpdateEvent — "still working, here's where we are"

- TaskArtifactUpdateEvent — "here's a chunk of the result"

No blocking. No polling loops. No timeout hell.

4. Artifacts — Typed Outputs

The result of a completed task is an Artifact — a structured, typed payload, not a free-form string. Artifacts can be:

- TextPart — plain or markdown text

- DataPart — structured JSON

- FilePart — binary content with MIME type

This typing is what makes downstream consumption programmatic. Our escalation drafting agent could consume the anomaly detection artifact directly — no string parsing, no regex, no prompt engineering to extract structured data from unstructured text.

Putting It All Together

Orchestrator Agent

│

├─→ GET /.well-known/agent.json # "Who are you? What can you do?"

│ ← Agent Card # "I'm AnomalyDetector. Here's my skill."

│

├─→ POST /message/send # "Analyze these 4,000 shipment records."

│ ← taskId

│

├─→ SSE stream # Real-time updates while it works

│ ← TaskStatusUpdateEvent # "Still working..."

│ ← TaskArtifactUpdateEvent # "Done. Here's the structured result."

│

└─→ Task Complete ✓

A2A vs. MCP — Let’s Clear This Up

These two protocols are constantly confused. They do entirely different things.

DimensionA2AMCPDirectionAgent ↔ Agent (horizontal)Agent ↔ Tools/Resources (vertical)PrimitiveTaskTool / Resource / PromptPurposeDelegate work to another autonomous agentGive one agent access to tools and dataAnalogyHiring a contractor for a jobGiving an employee new software

They’re complementary, not competing. In our architecture, each shared agent uses MCP to call its internal tools (database queries, API calls, file reads) — and exposes itself via A2A to receive delegated tasks from the orchestrator.

The most production-ready agentic systems will use both. One is the internal nervous system. The other is the external handshake.

What This Let Us Actually Build

Back to our original problem: one agent workflow, two customers, no duplication.

Here’s what the A2A architecture looked like after we refactored:

Customer A Orchestrator ──┐

│ /.well-known/agent.json

Customer B Orchestrator ──┼──→ AnomalyDetectionAgent (shared)

│ /.well-known/agent.json

├──→ ContractLookupAgent (shared)

│ /.well-known/agent.json

└──→ EscalationDraftingAgent (shared)

Each shared agent is deployed once. Each customer has its own lightweight orchestrator that reads Agent Cards and routes tasks. The orchestrators are the only things that differ per customer — and they’re thin. They don’t contain business logic. They contain routing logic.

When Customer C arrives? We spin up a new orchestrator. The shared agents don’t change. Don’t redeploy. Don’t retest.

One month of research paid off in the first customer onboarding we did after the refactor. What would have been a two-week “duplicate and customize” became a two-day orchestrator configuration.

Adding A2A to Your Existing Agent (It’s Thinner Than You Think)

The most important thing I learned: you don’t rewrite your agent for A2A. You wrap it.

Your Existing Agent (LangGraph / Semantic Kernel / CrewAI / whatever)

│

└── AgentExecutor (your logic, completely unchanged)

│

└── A2AStarletteApplication (the wrapper)

│

├── /.well-known/agent.json ← Agent Card endpoint

└── /message/send ← Task endpoint

The four steps:

- Define your Agent Card — describe your agent’s name, skills, input/output modes, and auth requirements in JSON

- Implement AgentExecutor — one method: execute(context, task). Wrap your existing agent logic inside it

- Mount the A2A app — wrap it with A2AStarletteApplication and serve it alongside your existing app

- Validate with the A2A Inspector — the official a2aproject/a2a-inspector tool tests your Agent Card and task endpoint for spec compliance

Your agent’s internal reasoning doesn’t change. A2A gives it a standardized front door.

The Honest Part — Where A2A Falls Short

I’d be doing you a disservice if I stopped here. A2A is a strong foundation, but it has real, documented gaps. These aren’t theoretical risks. We hit several of them.

1. There Is No Global Agent Registry

The spec does not define a trusted registry of agents. Community registries like a2aregistry.org validate protocol compliance — they do not validate real-world identity.

A well-crafted attacker can host a valid /.well-known/agent.json, get a real TLS certificate, claim to be "PaymentProcessingAgent," and pass every schema validation check.

There’s an open GitHub proposal (#1672) for native agent identity verification. As of the time I’m writing this: still unresolved.

What we did: Agent Card URL pinning in our orchestrators. Every allowed agent URL is explicitly whitelisted. No dynamic discovery in production.

2. Authorization Is Entirely Your Problem

A2A declares what authentication mechanism is needed. It does not enforce authorization logic.

“Authorization creep” — where a client agent accumulates more access than it should across multiple task invocations — is a real risk the spec explicitly leaves to implementers.

What we did: Per-skill OAuth2 scopes, enforced at the API gateway layer (Azure APIM), before the request ever reaches the agent.

3. No Prompt Injection Defense

When Agent A’s output becomes Agent B’s input, a malicious payload in that output can inject instructions into Agent B’s reasoning. A2A has zero built-in mitigation for this.

Every agent boundary is an attack surface. We learned this the hard way in a red team exercise where we crafted a contract document that, when processed by the Contract Lookup Agent, caused the Escalation Drafting Agent to send emails to unintended recipients.

What we did: Input sanitization middleware at every agent boundary. It’s not elegant. It’s necessary.

4. No Task Replay Protection

The spec doesn’t define nonce validation or timestamp verification. A captured message/send request can be replayed. For agents that trigger real-world actions (sending emails, updating records, processing payments), this is not a theoretical risk.

What we did: HMAC-based MAC + nonce + 30-second timestamp window, implemented as custom middleware.

5. Skills Don’t Have Strict I/O Schemas

Skills declare input/output modes (text, data, file) but not typed schemas. An orchestrator agent can’t programmatically validate whether its output matches a skill’s expected input format.

This caused us real bugs — the orchestrator happily sent malformed data to a skill that expected a specific JSON structure, got an opaque error back, and had no way to self-correct.

What we did: JSON Schema validation at the task layer, maintained as a convention on top of the spec. It’s not in A2A. You add it yourself.

The Honest Readiness Assessment

A2A is protocol-ready but not plug-and-play enterprise-ready.

It’s at roughly the same maturity point REST APIs were in 2010. The communication primitive was solid. OAuth2, API gateways, rate limiting, and observability had to be built on top before enterprises trusted it.

A2A needs the same treatment:

LayerWhat You NeedWhat We UsedTransport securityTLS 1.3+ with cert validationAzure APIMAgent identitymTLS or OIDCIstio service meshAuthorizationLeast-privilege OAuth2 scopesAPIM Policies + OPAReplay protectionNonce + timestamp + MACCustom middlewarePrompt injectionInput sanitization per boundaryCustom guardrail layerAudit loggingFull task + artifact chainOpenTelemetry → Azure Monitor

If you already have this infrastructure — and most mature teams do — A2A slots in cleanly and becomes genuinely powerful.

If you don’t have it, the protocol alone is not enough for production.

Should You Use A2A?

Use A2A when:

- You have multiple agents across different frameworks that need to collaborate

- You’re building a multi-agent orchestration system where a coordinator routes work to specialized agents

- You need async, long-running tasks with real-time progress streaming

- You’re in a multi-team or multi-vendor environment where each team owns their agent independently

- You’re solving exactly what we solved: one workflow, many customers

Don’t use A2A yet when:

- You have a single agent calling tools — use MCP, it’s simpler and purpose-built

- You’re in a tightly coupled single-framework system — direct function calls have lower overhead

- Your team cannot own the security layers A2A leaves to you — the protocol alone is not safe in production

- You need strictly typed, schema-validated inter-agent contracts right now — A2A’s skill definitions are too loosely typed for that out of the box

What’s Coming in Part 2

Part 1 was the foundation. Part 2 is the real thing.

In the next article, I’m sharing the actual implementation — the code, the architecture decisions, the things that broke in staging that no tutorial warned us about:

- Case 1: Wrapping our existing LangGraph agent as an A2A server and validating the full task lifecycle end-to-end with the a2a-inspector tool

- Case 2: Building the orchestrator that discovers, authenticates, and delegates to multiple shared agents from a single agentic workflow

- The deployment architecture on AKS with Azure APIM as the gateway layer

- The three bugs we didn’t expect and how we fixed them

- The one thing I’d do differently if we started over today

This research cost me a month of evenings and a few genuinely frustrating dead ends. I hope this saves you some of that.

If you’ve hit similar problems — multiple agents, multiple customers, integration hell — I’d love to hear how you’re solving it. Drop it in the comments. I read every one.

Part 2 drops next week. Follow to not miss it.

If this was useful, the clap button doesn’t cost anything — but it helps more people find it. Thanks for reading.

Your AI Agents Can't Talk to Each Other. A2A Is Why That Changes. was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.