Introduction: The Quiet Revolution in AI

A few years ago, interacting with AI felt fairly straightforward and a bit limited. Whether it was a customer support chatbot or a virtual assistant, these systems were fundamentally reactive. They responded to prompts, matched patterns, and returned answers, but rarely understood intent beyond surface-level signals. Their usefulness was confined to well-defined scenarios, and even slight deviations often led to frustrating experiences. For organizations, this meant AI could assist but not truly augment decision-making or automate complex workflows.

Today, that paradigm is changing rapidly. Advances in large language models, combined with improvements in system design, have transformed AI from a passive responder into an active participant in problem-solving. Modern AI systems are no longer limited to answering questions; they can break down tasks, retrieve and synthesize information, interact with external tools, and even collaborate across multiple specialized agents. In many ways, they are beginning to resemble digital teams rather than standalone tools, capable of handling multi-step processes that once required human coordination.

This shift represents more than just technological progress; it marks a fundamental evolution in how we think about intelligence in software systems. We are moving from a world where AI simply reacts to inputs, to one where it can plan, act, and adapt over time. What started as simple chat interfaces is now evolving into autonomous systems that can operate with increasing independence. This quiet revolution is redefining the boundaries of automation and setting the stage for a future where AI becomes an integral part of how work gets done.

This shift is not incremental; it is transformational.

To understand where we are going, we need to understand where we started.

Phase#1: Rule-Based Chatbots — The Beginning

The journey of conversational AI begins not with intelligence, but with structure. Early chatbots were built in an era when computational resources were limited and the idea of machines “understanding” humans was still emerging. These systems relied entirely on handcrafted logic, where developers anticipated user inputs and mapped them to predefined outputs. There was no learning, no adaptation — only execution.

A landmark example from this phase is ELIZA, developed by Joseph Weizenbaum at MIT in the mid-1960s. ELIZA simulated conversation by rephrasing user inputs using pattern-matching rules, creating the illusion of understanding. While simple by today’s standards, it revealed something important: people were willing to engage with machines conversationally, even when intelligence was minimal.

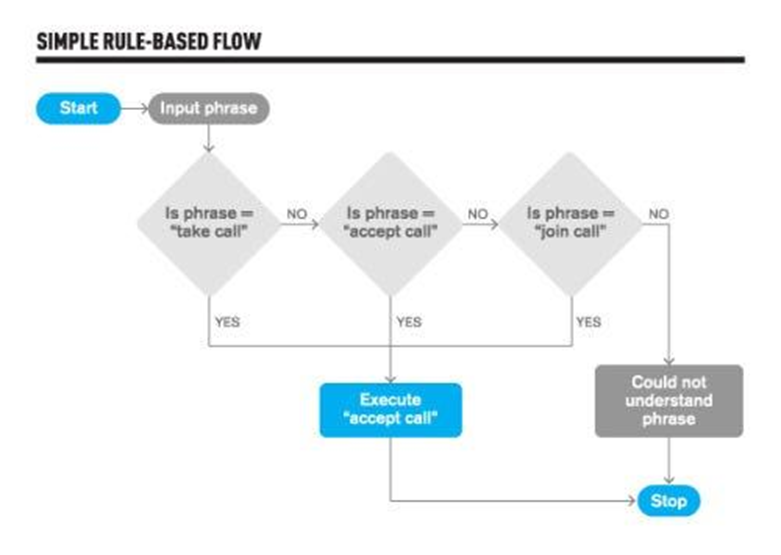

Key Characteristics:

Rule-based chatbots operate entirely on predefined logic, relying on pattern matching and keyword detection to interpret user input. At their core, these systems are structured as decision trees, where each branch represents a possible user intent mapped to a fixed response. When a user enters a query, the system scans for specific keywords or phrases and triggers the corresponding rule. This creates a deterministic interaction model where every outcome is explicitly scripted, leaving no room for interpretation or adaptation.
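
To make the keyword-matching model concrete, here is a minimal sketch of a rule-based responder. The rules, replies, and the `respond` function are hypothetical and chosen only for illustration; they are not taken from any particular product.

```python
import re

# Hypothetical rules: each pairs a keyword pattern with one fixed, scripted reply.
RULES = [
    (re.compile(r"\b(hi|hello|hey)\b", re.IGNORECASE),
     "Hello! How can I help you today?"),
    (re.compile(r"\b(refund|money back)\b", re.IGNORECASE),
     "You can request a refund from the Orders page."),
    (re.compile(r"\b(hours|open|closing)\b", re.IGNORECASE),
     "We are open 9am to 5pm, Monday to Friday."),
]
FALLBACK = "Sorry, I did not understand that."


def respond(message: str) -> str:
    """Return the reply of the first rule whose pattern matches the message."""
    for pattern, reply in RULES:
        if pattern.search(message):
            return reply
    # No rule fired: the bot has no way to generalize beyond its script.
    return FALLBACK


if __name__ == "__main__":
    print(respond("Hello there"))
    print(respond("How do I get a refund?"))
    print(respond("I want my cash returned"))  # same intent, but no keyword matches
```

The last call shows the deterministic trade-off: a request for a refund phrased without the scripted keywords falls straight through to the fallback reply.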

Strengths:

The primary advantage of rule-based systems lies in their predictability and control. Because every response is explicitly defined, developers can ensure consistent behavior across all interactions. This makes them particularly effective in structured environments such as customer support FAQs or guided workflows. Additionally, these systems are lightweight and efficient, requiring no training data or complex infrastructure, which made them practical and widely adopted in early applications.

Limitations:

Despite these strengths, rule-based chatbots are inherently rigid. They lack contextual awareness and treat each user input as an isolated event, often resulting in unnatural or disjointed conversations. Even minor variations in phrasing can cause failures, as the system cannot generalize beyond predefined rules. As the scope of interactions grows, maintaining and scaling the rule set becomes increasingly complex, limiting their usefulness in dynamic, real-world scenarios.

Phase#2: Machine Learning Chatbots — Learning Intent

As the limitations of rule-based systems became apparent, the field shifted toward data-driven approaches. Machine learning introduced the ability for chatbots to learn patterns from historical interactions rather than relying solely on predefined logic. This marked the beginning of systems that could generalize across different inputs and adapt to linguistic variability.

Instead of matching exact phrases, machine learning chatbots classify user intent and extract key entities, enabling them to understand variations in how users express the same request. This transition significantly improved the flexibility and scalability of conversational systems.

Key Characteristics:

Machine learning chatbots are built on statistical models trained on labeled datasets to recognize user intent and extract relevant entities. These systems leverage algorithms that learn patterns from examples, allowing them to interpret diverse phrasings of similar requests. Rather than following fixed rules, they use probabilistic reasoning to determine the most likely intent behind a user’s input, making interactions more flexible and adaptive.
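
As a rough illustration of probabilistic intent classification, the sketch below trains a small multinomial Naive Bayes model in plain Python. Naive Bayes is only one possible choice of statistical model, and the training sentences, intent names, and class name are assumptions made for this example; a production system would use a much larger labeled dataset and an established library.

```python
import math
from collections import Counter, defaultdict

# Hypothetical labeled examples; real systems need far more data.
TRAINING_DATA = [
    ("i want to book a flight", "book_flight"),
    ("book me a ticket to paris", "book_flight"),
    ("reserve a seat on the next flight", "book_flight"),
    ("what is the weather today", "get_weather"),
    ("will it rain tomorrow", "get_weather"),
    ("is it sunny in london", "get_weather"),
]


def tokenize(text):
    """Lowercase and split on whitespace (deliberately simplistic)."""
    return text.lower().split()


class NaiveBayesIntentClassifier:
    """Multinomial Naive Bayes with add-one (Laplace) smoothing."""

    def __init__(self, examples):
        self.word_counts = defaultdict(Counter)  # intent -> word -> count
        self.intent_counts = Counter()           # intent -> number of examples
        self.vocab = set()
        for text, intent in examples:
            self.intent_counts[intent] += 1
            for word in tokenize(text):
                self.word_counts[intent][word] += 1
                self.vocab.add(word)

    def predict_proba(self, text):
        """Return a dict mapping each intent to its posterior probability."""
        total_examples = sum(self.intent_counts.values())
        log_scores = {}
        for intent, count in self.intent_counts.items():
            score = math.log(count / total_examples)  # log prior
            denom = sum(self.word_counts[intent].values()) + len(self.vocab)
            for word in tokenize(text):
                if word in self.vocab:  # words never seen in training are skipped
                    score += math.log((self.word_counts[intent][word] + 1) / denom)
            log_scores[intent] = score
        # Convert log scores to normalized probabilities in a stable way.
        top = max(log_scores.values())
        exp_scores = {i: math.exp(s - top) for i, s in log_scores.items()}
        norm = sum(exp_scores.values())
        return {i: v / norm for i, v in exp_scores.items()}

    def predict(self, text):
        """Return the most likely intent for the given text."""
        probs = self.predict_proba(text)
        return max(probs, key=probs.get)


if __name__ == "__main__":
    clf = NaiveBayesIntentClassifier(TRAINING_DATA)
    print(clf.predict("can you book a flight to london"))
    print(clf.predict_proba("is it going to rain"))
```

With this toy data, `"can you book a flight to london"` is classified as `book_flight` even though that exact sentence never appears in training, which is the generalization that keyword rules cannot provide.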

Strengths:

One of the most important advantages of machine learning chatbots is their ability to handle linguistic diversity. Users are no longer constrained to specific phrases, as the system can infer intent from a wide range of expressions. This makes interactions more natural and user-friendly. Additionally, these systems scale more efficiently than rule-based approaches, as improvements can be achieved by retraining models with additional data instead of manually expanding rule sets.

Limitations:

However, machine learning chatbots depend heavily on the quality and quantity of training data. Building effective models requires large, well-annotated datasets, which can be resource-intensive to create. Moreover, while these systems can classify intent, they still lack deep semantic understanding and often struggle with ambiguity, sarcasm, or complex queries. Their ability to maintain context across multiple interactions remains limited, which can result in fragmented conversations.

Phase#3: NLP-Powered Assistants — Understanding Language

The next evolution introduced deeper linguistic processing through Natural Language Processing (NLP). This phase marked a shift from simply identifying intent to analyzing the structure and meaning of language. Systems began to incorporate techniques such as parsing, entity recognition, and semantic analysis, enabling more sophisticated interactions.

This era also saw the rise of voice assistants like Siri and Google Assistant, which brought conversational AI into everyday consumer experiences. Users could now interact with technology using natural speech, significantly lowering the barrier to adoption.

Key Characteristics:

NLP-powered assistants utilize a combination of linguistic techniques to process and understand user input. These include tokenization to break text into components, syntactic parsing to analyze grammatical structure, and entity recognition to extract meaningful information. Additionally, speech-to-text and text-to-speech technologies enable seamless voice interactions, expanding the usability of conversational systems across different modalities.
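
Two of these steps, tokenization and entity recognition, can be sketched with regular expressions. This is a simplified stand-in: real assistants typically rely on trained statistical models for entity recognition, syntactic parsing is omitted here, and the `TIME` and `DAY` labels and patterns are assumptions made for the example.

```python
import re

# Words become one token each; every punctuation character is its own token.
TOKEN_PATTERN = re.compile(r"\w+|[^\w\s]")

# Hypothetical entity patterns; production systems usually learn these from data.
ENTITY_PATTERNS = {
    "TIME": re.compile(r"\b\d{1,2}(?::\d{2})?\s?(?:am|pm)\b", re.IGNORECASE),
    "DAY": re.compile(
        r"\b(?:monday|tuesday|wednesday|thursday|friday|saturday|sunday"
        r"|today|tomorrow)\b",
        re.IGNORECASE,
    ),
}


def tokenize(text):
    """Split text into word and punctuation tokens."""
    return TOKEN_PATTERN.findall(text)


def extract_entities(text):
    """Return (label, matched_text) pairs, grouped by label in pattern order."""
    entities = []
    for label, pattern in ENTITY_PATTERNS.items():
        for match in pattern.finditer(text):
            entities.append((label, match.group(0)))
    return entities


if __name__ == "__main__":
    print(tokenize("Set an alarm for 7:30 am, please."))
    print(extract_entities("Remind me tomorrow at 7:30 am"))
```

Note that the tokenizer splits `7:30` into three tokens, while the entity pattern treats `7:30 am` as a single time expression; pipelines often run such steps on different views of the same input.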

Strengths:

These systems significantly improve the naturalness and accessibility of human-computer interaction. By understanding the structure and components of language, they can respond more accurately and contextually to user queries. The integration of voice capabilities further enhances usability, allowing users to interact with systems in a more intuitive and conversational manner. This phase played a critical role in mainstream adoption of AI assistants.

Limitations:

Despite these advancements, NLP-powered systems are still largely constrained by predefined intents and workflows. While they can process language more effectively, they often struggle with maintaining deep contextual awareness over extended conversations. Complex or ambiguous queries can lead to incorrect interpretations, and the reliance on structured pipelines can limit flexibility in dynamic or unpredictable scenarios.

Phase#4: Conversational AI with Context — Stateful Systems

As user expectations grew, the need for more natural and continuous conversations became evident. This led to the development of stateful conversational systems that could retain context across multiple interactions. Instead of treating each query independently, these systems track conversation history and use it to inform future responses. This shift enabled chatbots to support multi-step workflows and handle follow-up questions more effectively, making interactions feel more coherent and human-like.

Key Characteristics:

Stateful conversational systems incorporate dialogue management frameworks that track the state of a conversation and maintain contextual memory. These systems store relevant information from previous interactions and use it to interpret subsequent inputs. This allows them to handle multi-turn dialogues where each response builds upon prior context, enabling more fluid and meaningful conversations.
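
A minimal sketch of dialogue state tracking, assuming a hypothetical flight-booking flow with two required slots. The slot names, the small vocabularies, and the action labels are illustrative only and do not come from a specific framework.

```python
from dataclasses import dataclass, field

# Hypothetical slots the booking flow needs before it can proceed.
REQUIRED_SLOTS = ("destination", "date")

# Tiny illustrative vocabularies; a real system would use an NLU component.
KNOWN_CITIES = {"paris", "london", "tokyo"}
KNOWN_DATES = {"today", "tomorrow", "friday"}


@dataclass
class DialogueState:
    """Contextual memory carried across turns."""
    slots: dict = field(default_factory=dict)
    history: list = field(default_factory=list)


def update_state(state, user_message):
    """Record the message and fill any slots it mentions."""
    state.history.append(user_message)
    cleaned = user_message.lower().replace(",", " ").replace(".", " ")
    for word in cleaned.split():
        if word in KNOWN_CITIES:
            state.slots["destination"] = word
        elif word in KNOWN_DATES:
            state.slots["date"] = word


def next_action(state):
    """Ask for the first missing slot, or confirm once all are filled."""
    for slot in REQUIRED_SLOTS:
        if slot not in state.slots:
            return f"ask_{slot}"
    return "confirm_booking"


def handle_turn(state, user_message):
    """Process one user turn against the shared state."""
    update_state(state, user_message)
    return next_action(state)


if __name__ == "__main__":
    state = DialogueState()
    print(handle_turn(state, "I need a flight to Paris"))  # ask_date
    print(handle_turn(state, "Tomorrow, please."))         # confirm_booking
    print(state.slots)
```

The second message only makes sense because the destination from the first turn is still in `state.slots`; a stateless bot would have no idea what "Tomorrow" refers to.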

Strengths:

The introduction of context-awareness represents a significant improvement in conversational quality. Users can interact more naturally without needing to repeat information, as the system remembers previous inputs and adapts accordingly. This enhances efficiency and user satisfaction, particularly in complex workflows such as booking, troubleshooting or customer support scenarios.

Limitations:

However, managing conversational context introduces additional complexity. Designing systems that accurately track and utilize context across diverse scenarios can be challenging. These systems often rely on predefined dialogue structures, which can limit flexibility when conversations deviate from expected patterns. Additionally, context retention is typically limited in scope and may not scale effectively for long or highly dynamic interactions.

Phase#5: LLM-Based Chatbots — Generative Intelligence

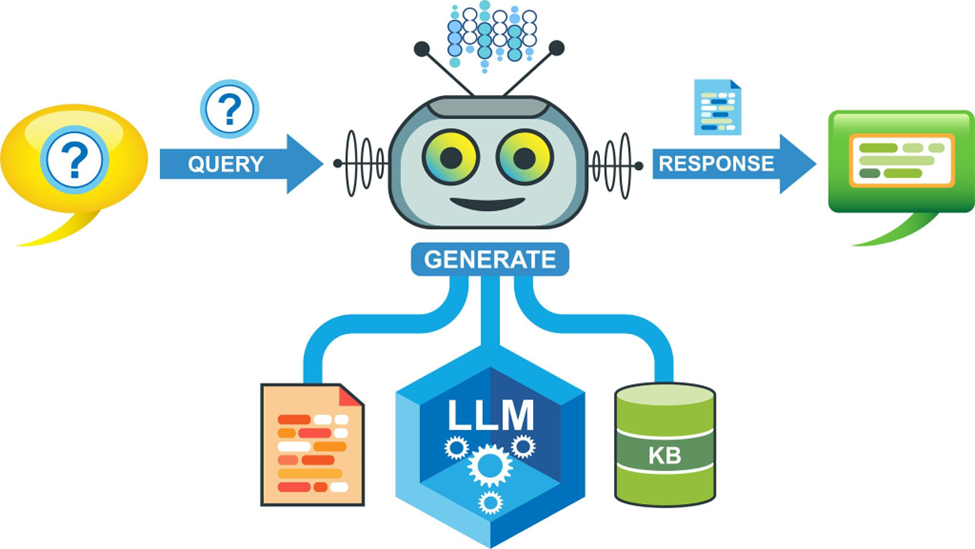

The introduction of Large Language Models (LLMs) marked a transformative moment in the evolution of conversational AI. Unlike previous systems that relied on predefined rules or structured pipelines, LLMs generate responses dynamically based on patterns learned from vast amounts of text data. This enables a level of flexibility and expressiveness that was previously unattainable. Applications like ChatGPT demonstrate how these models can engage in open-ended conversations, generate content, and adapt to a wide range of topics with minimal configuration.

Key Characteristics:

LLM-based chatbots are built on transformer architectures that enable them to process and generate language at scale. They are trained on massive datasets and can perform a wide variety of tasks through few-shot or zero-shot learning. These systems generate responses dynamically, taking into account the context of the conversation and leveraging learned patterns to produce coherent and contextually relevant outputs.
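
The difference between zero-shot and few-shot use often comes down to how the prompt is assembled. The helper below is a hypothetical sketch of that idea: it only builds the prompt text and does not call any model, and the `Input:` / `Output:` layout is one common convention rather than a requirement of any specific LLM.

```python
def build_prompt(task, query, examples=()):
    """Assemble a prompt: zero-shot with no examples, few-shot otherwise."""
    lines = [task, ""]
    for text, label in examples:
        # Each worked example shows the model the expected input/output shape.
        lines.append(f"Input: {text}")
        lines.append(f"Output: {label}")
        lines.append("")
    lines.append(f"Input: {query}")
    lines.append("Output:")  # left open for the model to complete
    return "\n".join(lines)


if __name__ == "__main__":
    task = "Classify the sentiment as positive or negative."
    print(build_prompt(task, "I love it"))
    print("---")
    print(build_prompt(
        task,
        "I love it",
        examples=[("Great service", "positive"), ("Too slow", "negative")],
    ))
```

The same model can be steered toward a new task simply by changing the instruction and examples, which is why no intent-specific training is needed.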

Strengths:

The flexibility and power of LLMs have significantly enhanced the quality of conversational AI. These systems can handle complex, open-ended queries and generate responses that are fluent, nuanced, and context-aware. They eliminate the need for predefined intents, allowing them to operate across multiple domains with minimal setup. This makes them highly versatile and capable of supporting a wide range of applications.

Limitations:

Despite their capabilities, LLMs present several challenges. They can produce inaccurate or fabricated information, requiring additional mechanisms to ensure reliability and factual correctness. Their computational demands are substantial, making them resource-intensive to train and deploy. Furthermore, aligning their outputs with user expectations and ethical considerations remains an ongoing challenge in the field.

Phase#6: Autonomous AI Agents — The Future

The latest phase in this evolution moves beyond conversation into action. Autonomous AI agents are designed not just to respond, but to achieve goals. These systems combine reasoning, planning, and execution capabilities, allowing them to perform complex tasks with minimal human intervention. Recent agent frameworks illustrate how AI can orchestrate multi-step workflows, interact with external tools, and iteratively refine its approach to problem-solving.

Key Characteristics:

Autonomous AI agents operate through iterative loops that involve perception, reasoning, and action. They are designed to break down high-level goals into smaller tasks, use tools and APIs to execute those tasks, and continuously refine their approach based on feedback. These systems often integrate multiple components, including memory, planning modules, and execution engines, to enable complex, goal-driven behavior.
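
The loop described above can be sketched in a few lines. Everything here is a simplified assumption: the two arithmetic tools, the fixed goal, and the hard-coded `plan_next_step` function stand in for real tool APIs and for the model-driven reasoning step an actual agent would use.

```python
from typing import Callable, Dict, List, Optional, Tuple


# Hypothetical tools the agent may call.
def add(a: float, b: float) -> float:
    return a + b


def multiply(a: float, b: float) -> float:
    return a * b


TOOLS: Dict[str, Callable[..., float]] = {"add": add, "multiply": multiply}

# One memory entry per executed step: (tool name, arguments, observation).
Step = Tuple[str, tuple, float]


def plan_next_step(memory: List[Step]) -> Optional[Tuple[str, tuple]]:
    """Stand-in planner for the fixed goal 'compute (2 + 3) * 4'.

    A real agent would ask a model to choose the next action here.
    """
    if not memory:
        return ("add", (2, 3))
    if len(memory) == 1:
        previous_result = memory[-1][2]  # feed the last observation forward
        return ("multiply", (previous_result, 4))
    return None  # goal reached, nothing left to do


def run_agent(max_steps: int = 5) -> List[Step]:
    """Run the reason -> act -> observe loop until done or out of steps."""
    memory: List[Step] = []
    for _ in range(max_steps):
        step = plan_next_step(memory)           # reason about what to do next
        if step is None:
            break
        tool_name, args = step
        observation = TOOLS[tool_name](*args)   # act by calling a tool
        memory.append((tool_name, args, observation))  # record the result
    return memory


if __name__ == "__main__":
    for tool_name, args, observation in run_agent():
        print(tool_name, args, "->", observation)
```

The `max_steps` cap is a deliberate design choice: because each step depends on the previous observation, a bounded loop limits how far an early mistake can propagate.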

Strengths:

The ability to automate complex workflows represents a major advancement in AI capability. Autonomous agents can perform multi-step tasks, interact with external systems, and adapt their strategies dynamically. This transforms AI from a passive conversational tool into an active problem-solving entity, opening up new possibilities for productivity and innovation across various domains.

Limitations:

However, the increased autonomy of these systems introduces significant challenges. Ensuring reliability, safety, and alignment becomes more difficult as agents gain the ability to act independently. Errors in reasoning or execution can propagate across multiple steps, leading to unintended consequences. Additionally, building and managing such systems requires sophisticated infrastructure and careful oversight, as the technology is still evolving and not yet fully mature.

While these advancements are impressive, they also introduce new complexities that cannot be ignored, making it essential to understand the challenges involved in building reliable and trustworthy AI systems. At the same time, it is equally important to look ahead, as the next phase of AI will be defined not just by capability, but by how these systems evolve and integrate into real-world environments.

Challenges in Modern AI Systems:

Modern AI systems face a range of challenges that extend far beyond their impressive capabilities. One of the most pressing issues is reliability, as these systems can produce outputs that appear accurate but are factually incorrect or misleading. They are also heavily dependent on large volumes of high-quality data, which can introduce bias and fairness concerns if not carefully managed. In addition, the computational cost of training and deploying advanced models is substantial, raising questions about scalability and environmental impact. Another significant challenge is explainability, as many modern models operate as “black boxes,” making it difficult to understand how decisions are made. As these systems are increasingly integrated into real-world applications, ensuring safety, accountability, and alignment with human values becomes critical, especially in high-stakes domains like healthcare, finance, and governance.

Designing Responsible AI:

Designing responsible AI requires embedding ethical principles into every stage of the system lifecycle, from data collection and model development to deployment and monitoring. At its core, responsible AI emphasizes fairness, transparency, accountability, privacy, and safety. This begins with curating diverse and representative datasets to minimize bias, followed by rigorous evaluation using fairness metrics and bias detection techniques. Equally important is explainability: model decisions should be interpretable and auditable, especially in high-stakes domains such as healthcare and finance. Governance frameworks, human oversight, and continuous post-deployment monitoring are also needed to detect unintended behaviors. Additionally, privacy-preserving techniques like differential privacy and secure data handling practices help protect user information. Ultimately, responsible AI is not a one-time effort but an ongoing commitment.
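
As one concrete example of a fairness metric, the sketch below computes the demographic parity difference: the gap between the highest and lowest rate of positive predictions across groups. The predictions and group labels are made-up illustrative data, and this single number is only a starting point for a fairness review, not a complete evaluation.

```python
def selection_rate(predictions):
    """Fraction of predictions that are positive (1)."""
    return sum(predictions) / len(predictions)


def demographic_parity_difference(predictions, groups):
    """Return (gap, per-group rates) for binary predictions.

    gap is the largest selection rate minus the smallest; 0.0 means every
    group receives positive predictions at the same rate.
    """
    by_group = {}
    for prediction, group in zip(predictions, groups):
        by_group.setdefault(group, []).append(prediction)
    rates = {group: selection_rate(preds) for group, preds in by_group.items()}
    return max(rates.values()) - min(rates.values()), rates


if __name__ == "__main__":
    # Hypothetical model outputs (1 = approved) for two groups of four people.
    predictions = [1, 0, 1, 1, 0, 0, 1, 0]
    groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
    gap, rates = demographic_parity_difference(predictions, groups)
    print(rates)  # {'a': 0.75, 'b': 0.25}
    print(gap)    # 0.5
```

A gap of 0.5 here means group `a` is approved three times as often as group `b`, which would warrant a closer look at the data and the model before deployment.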

The Future of AI Systems:

The next generation of AI systems will evolve into deeply integrated ecosystems rather than isolated tools, with multiple AI components coordinating across platforms and services to solve complex problems. These systems will increasingly learn from new data and interactions, refining their performance over time while adapting to dynamic environments. At the same time, they will operate with greater autonomy, planning, reasoning, and executing multi-step tasks with minimal human intervention, supported by orchestration frameworks such as LangChain. As a result, AI will become embedded in everyday workflows across industries, shifting from a supportive assistant to a foundational layer of decision-making and automation that drives efficiency and innovation at scale.

Conclusion:

The evolution from chatbots to autonomous agents reflects a fundamental transformation in AI systems: from reactive tools that simply respond to inputs into proactive systems that anticipate needs and take initiative; from single, isolated models into distributed intelligence where multiple agents collaborate and specialize; and from static architectures into adaptive systems that continuously learn and evolve. This shift signals a deeper change in how AI delivers value, moving beyond surface-level interaction toward meaningful impact. Ultimately, the future of AI is not about generating better responses; it is about enabling better decisions and executing intelligent actions that solve real-world problems.


The Evolution of AI Systems — From Reactive Chatbots to Autonomous Agents was originally published in Towards AI on Medium.