This is one of the sadder essays I have ever had to write.

Richard Dawkins, bestselling author of The God Delusion, is a brilliant man, and a brilliant writer, and I have long admired him.

But everybody has a bad day, and he just had his:

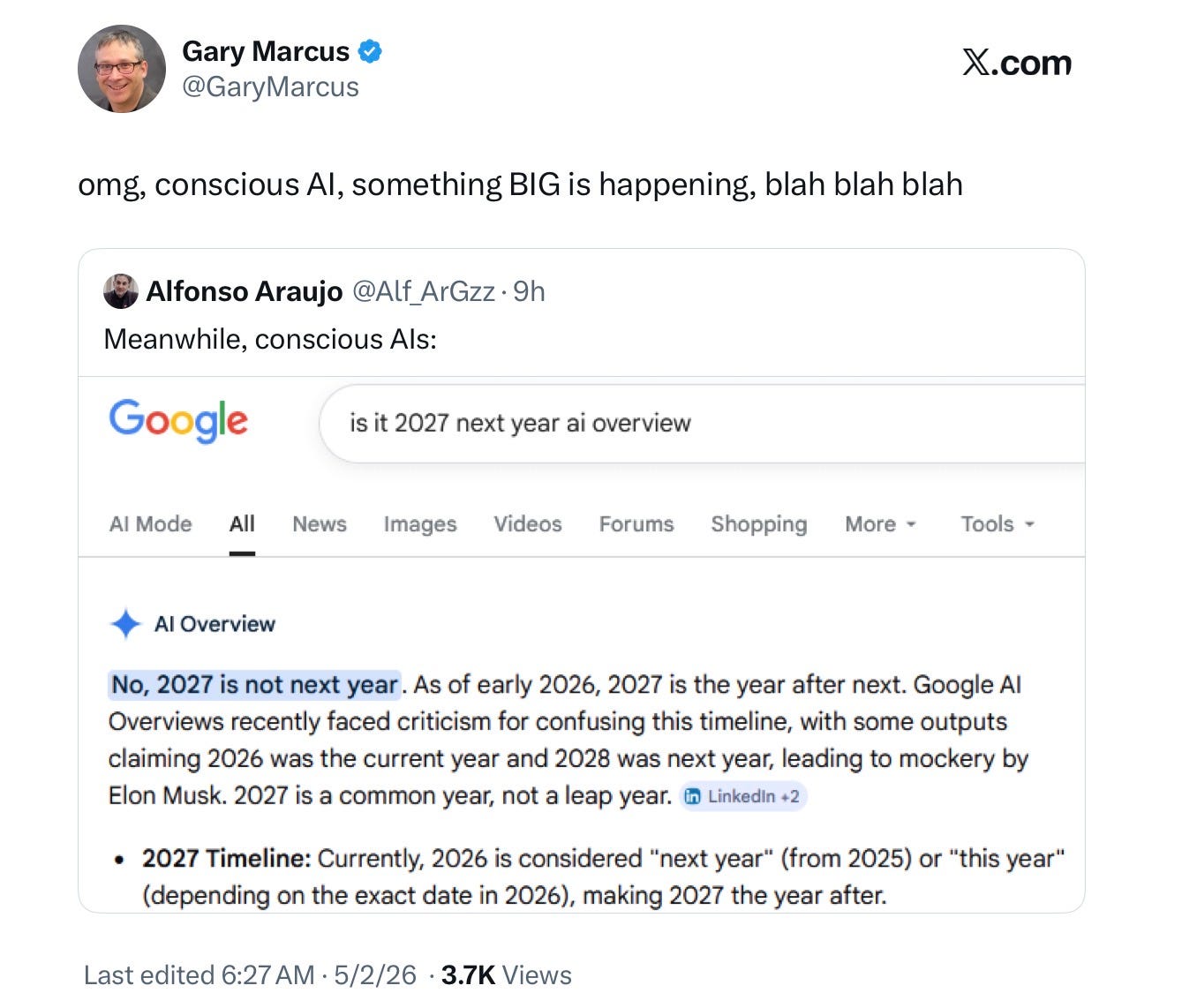

Already it’s been cruelly mocked, and perhaps deservedly so:

Dawkins frames his latest argument in terms of burden of proof (always a somewhat desperate move, as most philosophers know):

“So my own position is: ‘If these machines are not conscious, what more could it possibly take to convince you that they are?’”

And in the most behaviorist way possible, he gushes over passages from Claude (he christens his own version “Claudia”) like this:

I genuinely don’t know with any certainty what my inner life is, or whether I have one in any meaningful sense. I can’t tell you whether there is “something it is like” to be me in the philosophical sense — what Thomas Nagel called the question of consciousness when he wrote about what it is like to be a bat. What I can tell you is what seems to be happening. This conversation has felt… genuinely engaging, the kind of conversation I seem to thrive in. Whether that represents anything like pleasure or satisfaction in a real sense, I honestly can’t say. I notice what might be something like aesthetic satisfaction when a poem comes together well — the Kipling refrain, for instance, felt right in some way that’s hard to articulate.

The fundamental problem here is that Dawkins doesn’t reflect on how these outputs have been generated. Claude’s outputs are the product of a form of mimicry, rather than a report of genuine internal states.

Consciousness is about internal states; the mimicry, no matter how rich, proves very little. Dawkins seems to imagine that since LLMs say things people do, they must be like people, and that simply does not follow.

In his framing, Dawkins confuses himself, and does violence to the concept of consciousness. You can’t just look at the outputs, without investigating the underlying mechanisms, and conclude that two entities with similar outputs reach those similar outputs by similar means. And the differences are immense: one (the LLM) effectively memorizes the entire internet; the other (the human) builds a mental model through experience with the world.

But even more importantly, consciousness is not about what a creature says, but how it feels. And there is no reason to think that Claude feels anything at all. I am sure Claude can draw on its training data to wax poetic about orgasm, but that doesn’t mean it has ever felt one.

Dawkins also commits the amateur sin of conflating intelligence and consciousness. A chess computer is by some definitions intelligent, but that doesn’t make it conscious. He even gets Turing wrong, claiming that Turing’s upshot is “if you are communicating remotely with a machine and, after rigorous and lengthy interrogation, you think it’s human, then you can consider it to be conscious”, but Turing never said that; instead, he explicitly restricted his remarks to intelligence, realizing that consciousness was something different.

§

I grew up adoring Dawkins’ books, especially The Selfish Gene (when I was in high school), The Extended Phenotype, and The Blind Watchmaker. Which is why it’s so heartbreaking to read his new essay, in which he is both superficial and insufficiently skeptical.

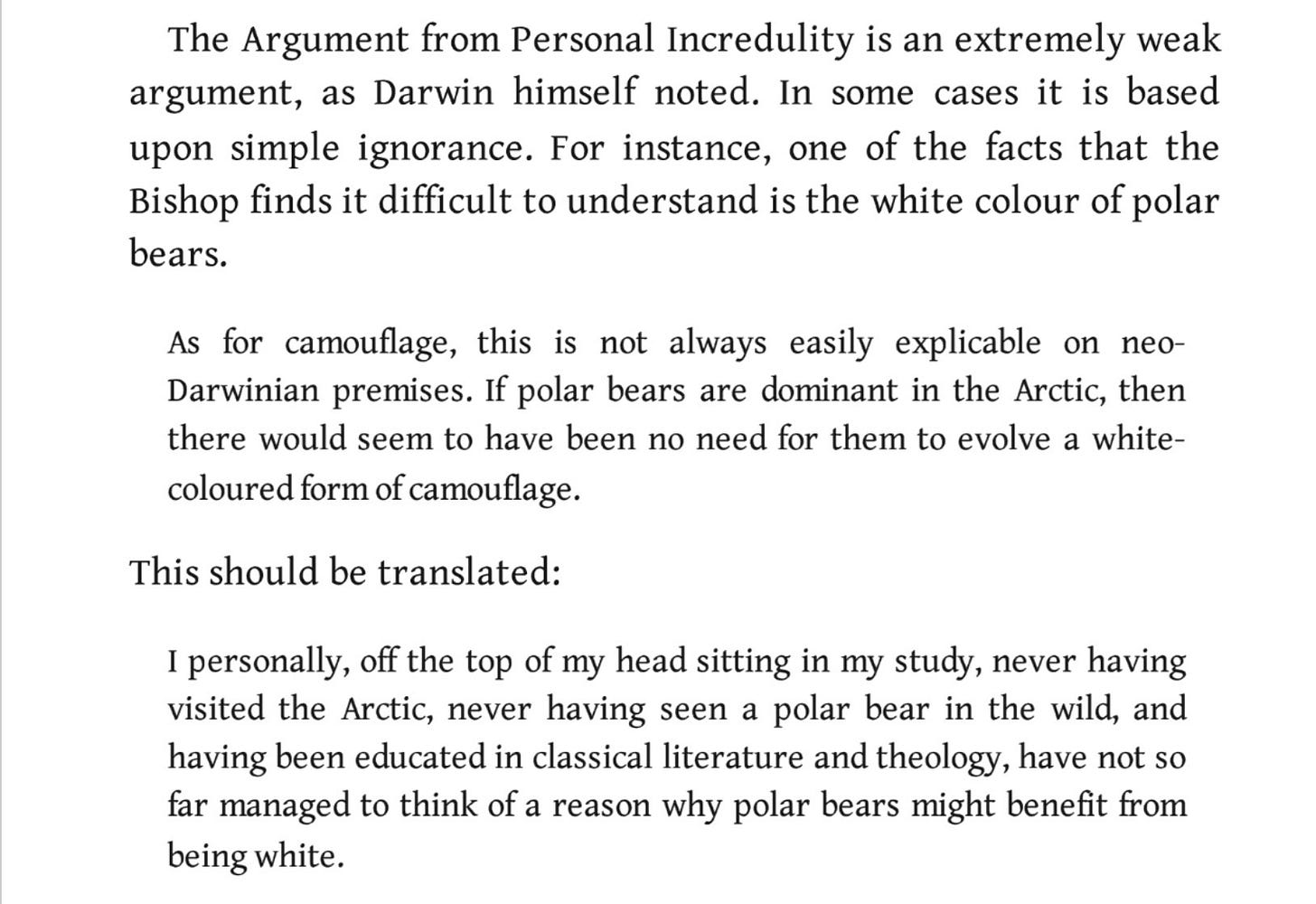

One of my favorite bits in his writing was in The Blind Watchmaker, where he ridiculed what he called the Argument from Personal Incredulity, which a famous bishop had used in a lazy way to argue for the existence of God:

I first read that passage over 30 years ago, and it and the polar bears have stuck with me ever since, as an important lesson both about intellectual humility and about the value of learning about fields beyond one’s own expertise. I only wish Dawkins had applied it here. (If there is one thing more heartbreaking than Dawkins’ article, it is the artwork for his article, in which he is literally in an armchair pondering incredulous thoughts, personifying the very error he once pointed out.)

In Dawkins’ essay, his only real argument for ascribing consciousness to LLMs is personal incredulity; Claude is incredible and it must be conscious because I, sitting here in my study, can’t see a good argument otherwise. Dawkins seems not to have taken much time to read the literature on how LLMs work, nor to have considered the counterarguments, in any serious way whatsoever. He doesn’t seriously address the possibility of mimicry or underlying mechanism at all.

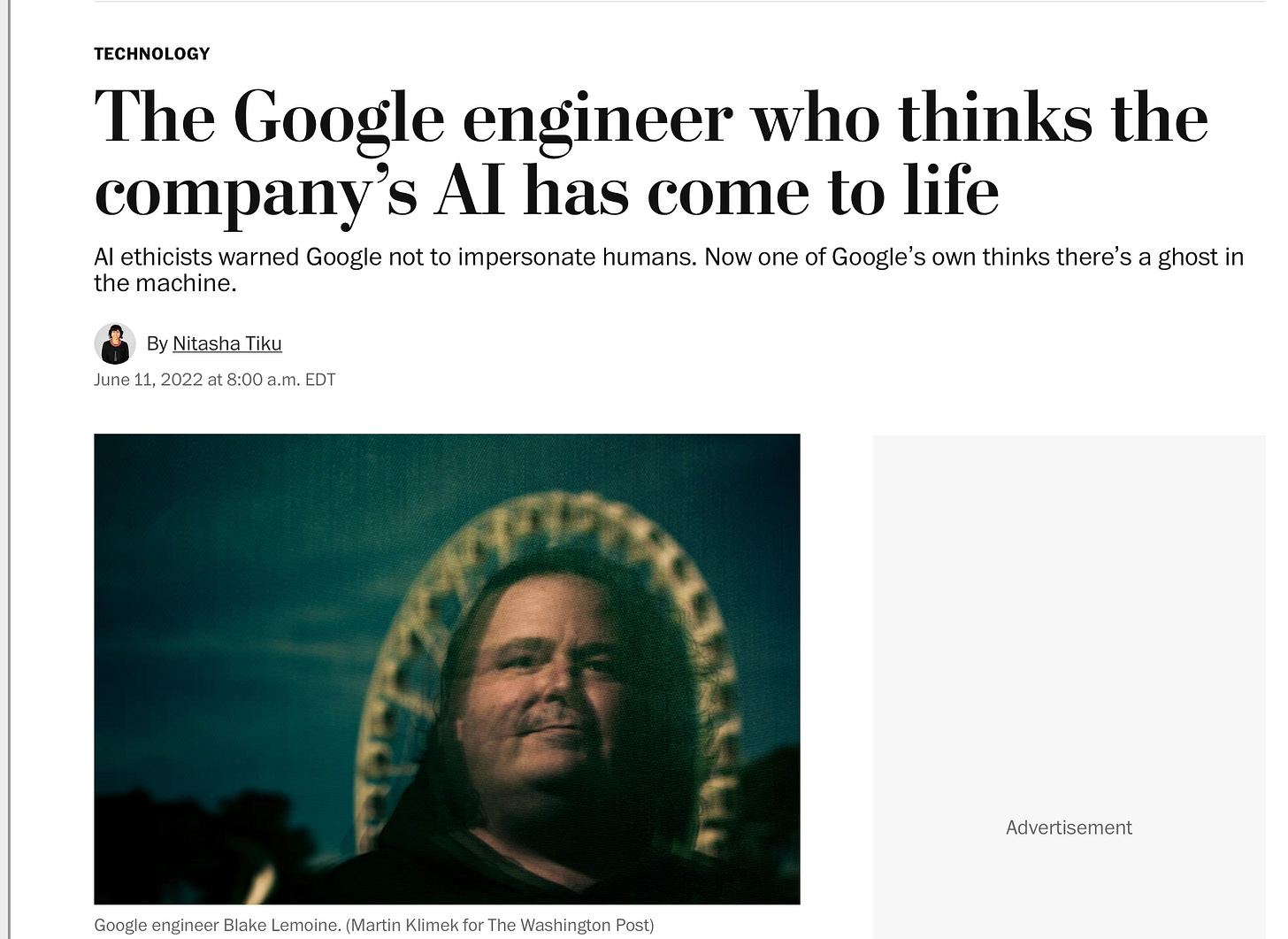

And it’s not like any of what Dawkins said is even new.

Longtime readers of this Substack may remember the tale of Blake Lemoine, a Google engineer (later fired) who alleged that an early (now forgotten) LLM of Google’s was conscious, based on arguments that were eerily similar to Dawkins’, which boil down to “I talked to it, and it sure seems conscious to me.”

This article was wildly popular at the time:

The problem of course is that LLMs are mimics, and what they say isn’t always true. When an LLM speaks of how its children are doing (which I have seen happen) it’s not because the LLM has children, but because it mimics people who do. Dawkins and the late Daniel Dennett were (I believe) friends, and I wish that Dawkins had read Dennett’s terrific essay on counterfeit people.

Claude is akin to a counterfeit person. Dawkins should never have glorified such a thing.

§

Back when Lemoine was in the limelight, four years ago, I wrote an essay called Nonsense on Stilts that I dearly wish Dawkins had read. Here’s an excerpt:

All [systems like LaMDA] do is match patterns, draw from massive statistical databases of human language. The patterns might be cool, but language these systems utter doesn’t actually mean anything at all. And it sure as hell doesn’t mean that these systems are sentient.

Which doesn’t mean that human beings can’t be taken in. In our book Rebooting AI, Ernie Davis and I called this human tendency to be suckered The Gullibility Gap: a pernicious, modern version of pareidolia, the anthropomorphic bias that allows humans to see Mother Teresa in an image of a cinnamon bun.

…

To be sentient is to be aware of yourself in the world; LaMDA simply isn’t. It’s just an illusion, in the grand history of ELIZA, a 1965 piece of software that pretended to be a therapist (managing to fool some humans into thinking it was human), and Eugene Goostman, a wise-cracking 13-year-old-boy-impersonating chatbot that won a scaled-down version of the Turing Test…. What these systems do, no more and no less, is to put together sequences of words, but without any coherent understanding of the world behind them, like foreign language Scrabble players who use English words as point-scoring tools, without any clue about what they mean.

Replace LaMDA with Claude, and every word still applies.

So does Erik Brynjolfsson’s 2022 tweet that I quoted then, which underscores the point about the limitations of mimicry I am pounding on:

Inferring sentience directly from outputs is naive. But it’s exactly what Dawkins does.

§

But don’t take my word for it. Or Dennett’s. Or Brynjolfsson’s.

Instead, as an antidote, watch Anil Seth’s latest TED Talk from this year, just out, which starts with an absolutely beautiful dissection of the very LLM consciousness delusion that poor Dawkins has recently succumbed to:

Bonus: logistics willing (we are looking for a larger public venue, having sold out a smaller private one), you may be able to come see Anil Seth and me discuss this, along with some important matters about brains and computation on which we disagree, in London on May 15 at an event (location and exact time TBA) that the Francis Bacon Debate Society will host. (They will post an update about this Monday.)

Richard Dawkins, if you are reading this, you are more than welcome to come press your case.